07a00828af

1347 Commits

| Author | SHA1 | Message | Date | |

|---|---|---|---|---|

|

|

a479c2c2b2 |

Fix stress test failure "Corruption: checksum mismatch" or "Iterator Diverged" with async_io enabled (#10032)

Summary: In case of non sequential reads with `async_io`, `FilePRefetchBuffer::TryReadFromCacheAsync` can be called for previous blocks with `offset < bufs_[curr_].offset_` which wasn't handled correctly resulting wrong data being returned from buffer. Since `FilePRefetchBuffer::PrefetchAsync` can be called for any data block, it sets `prev_len_` to 0 indicating `FilePRefetchBuffer::TryReadFromCacheAsync` to go for the prefetching even though offset < bufs_[curr_].offset_ This is because async prefetching is always done in second buffer (to avoid mutex) even though curr_ is empty leading to offset < bufs_[curr_].offset_ in some cases. If prev_len_ is non zero then `TryReadFromCacheAsync` returns false if `offset < bufs_[curr_].offset_ && prev_len != 0` indicating reads are not sequential and previous call wasn't PrefetchAsync. - This PR also simplifies `FilePRefetchBuffer::TryReadFromCacheAsync` as it was getting complicated covering different scenarios based on `async_io` enabled/disabled. If `for_compaction` is set true, it now calls `FilePRefetchBufferTryReadFromCache` following synchronous flow as before. Its decided in BlockFetcher.cc Pull Request resolved: https://github.com/facebook/rocksdb/pull/10032 Test Plan: 1. export CRASH_TEST_EXT_ARGS=" --async_io=1" make crash_test -j completed successfully locally 2. make crash_test -j completed successfully locally 3. Reran CircleCi mini crashtest job 4 - 5 times. 4. Updated prefetch_test for more coverage. Reviewed By: anand1976 Differential Revision: D36579858 Pulled By: akankshamahajan15 fbshipit-source-id: 0c428d62b45e12e082a83acf533a5e37a584bedf |

||

|

|

2db6a4a1d6 |

Seek parallelization (#9994)

Summary: The RocksDB iterator is a hierarchy of iterators. MergingIterator maintains a heap of LevelIterators, one for each L0 file and for each non-zero level. The Seek() operation naturally lends itself to parallelization, as it involves positioning every LevelIterator on the correct data block in the correct SST file. It lookups a level for a target key, to find the first key that's >= the target key. This typically involves reading one data block that is likely to contain the target key, and scan forward to find the first valid key. The forward scan may read more data blocks. In order to find the right data block, the iterator may read some metadata blocks (required for opening a file and searching the index). This flow can be parallelized. Design: Seek will be called two times under async_io option. First seek will send asynchronous request to prefetch the data blocks at each level and second seek will follow the normal flow and in FilePrefetchBuffer::TryReadFromCacheAsync it will wait for the Poll() to get the results and add the iterator to min_heap. - Status::TryAgain is passed down from FilePrefetchBuffer::PrefetchAsync to block_iter_.Status indicating asynchronous request has been submitted. - If for some reason asynchronous request returns error in submitting the request, it will fallback to sequential reading of blocks in one pass. - If the data already exists in prefetch_buffer, it will return the data without prefetching further and it will be treated as single pass of seek. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9994 Test Plan: - **Run Regressions.** ``` ./db_bench -db=/tmp/prefix_scan_prefetch_main -benchmarks="fillseq" -key_size=32 -value_size=512 -num=5000000 -use_direct_io_for_flush_and_compaction=true -target_file_size_base=16777216 ``` i) Previous release 7.0 run for normal prefetching with async_io disabled: ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.0 Date: Thu Mar 17 13:11:34 2022 CPU: 24 * Intel Core Processor (Broadwell) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_main] seekrandom : 483618.390 micros/op 2 ops/sec; 338.9 MB/s (249 of 249 found) ``` ii) normal prefetching after changes with async_io disable: ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 Set seed to 1652922591315307 because --seed was 0 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.3 Date: Wed May 18 18:09:51 2022 CPU: 32 * Intel Xeon Processor (Skylake) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_main] seekrandom : 483080.466 micros/op 2 ops/sec 120.287 seconds 249 operations; 340.8 MB/s (249 of 249 found) ``` iii) db_bench with async_io enabled completed succesfully ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 -async_io=1 -adaptive_readahead=1 Set seed to 1652924062021732 because --seed was 0 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.3 Date: Wed May 18 18:34:22 2022 CPU: 32 * Intel Xeon Processor (Skylake) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_main] seekrandom : 553913.576 micros/op 1 ops/sec 120.199 seconds 217 operations; 293.6 MB/s (217 of 217 found) ``` - db_stress with async_io disabled completed succesfully ``` export CRASH_TEST_EXT_ARGS=" --async_io=0" make crash_test -j ``` I**n Progress**: db_stress with async_io is failing and working on debugging/fixing it. Reviewed By: anand1976 Differential Revision: D36459323 Pulled By: akankshamahajan15 fbshipit-source-id: abb1cd944abe712bae3986ae5b16704b3338917c |

||

|

|

e015206dd6 |

Fix crash due to MultiGet async IO and direct IO (#10024)

Summary: MultiGet with async IO is not officially supported with Posix yet. Avoid a crash by using synchronous MultiRead when direct IO is enabled. Pull Request resolved: https://github.com/facebook/rocksdb/pull/10024 Test Plan: Run db_crashtest.py manually Reviewed By: akankshamahajan15 Differential Revision: D36551053 Pulled By: anand1976 fbshipit-source-id: 72190418fa92dd0397e87825df618b12c9bdecda |

||

|

|

cc23b46da1 |

Support using ZDICT_finalizeDictionary to generate zstd dictionary (#9857)

Summary:

An untrained dictionary is currently simply the concatenation of several samples. The ZSTD API, ZDICT_finalizeDictionary(), can improve such a dictionary's effectiveness at low cost. This PR changes how dictionary is created by calling the ZSTD ZDICT_finalizeDictionary() API instead of creating raw content dictionary (when max_dict_buffer_bytes > 0), and pass in all buffered uncompressed data blocks as samples.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/9857

Test Plan:

#### db_bench test for cpu/memory of compression+decompression and space saving on synthetic data:

Set up: change the parameter [here](

|

||

|

|

57997ddaaf |

Multi file concurrency in MultiGet using coroutines and async IO (#9968)

Summary: This PR implements a coroutine version of batched MultiGet in order to concurrently read from multiple SST files in a level using async IO, thus reducing the latency of the MultiGet. The API from the user perspective is still synchronous and single threaded, with the RocksDB part of the processing happening in the context of the caller's thread. In Version::MultiGet, the decision is made whether to call synchronous or coroutine code. A good way to review this PR is to review the first 4 commits in order - de773b3, 70c2f70, 10b50e1, and 377a597 - before reviewing the rest. TODO: 1. Figure out how to build it in CircleCI (requires some dependencies to be installed) 2. Do some stress testing with coroutines enabled No regression in synchronous MultiGet between this branch and main - ``` ./db_bench -use_existing_db=true --db=/data/mysql/rocksdb/prefix_scan -benchmarks="readseq,multireadrandom" -key_size=32 -value_size=512 -num=5000000 -batch_size=64 -multiread_batched=true -use_direct_reads=false -duration=60 -ops_between_duration_checks=1 -readonly=true -adaptive_readahead=true -threads=16 -cache_size=10485760000 -async_io=false -multiread_stride=40000 -statistics ``` Branch - ```multireadrandom : 4.025 micros/op 3975111 ops/sec 60.001 seconds 238509056 operations; 2062.3 MB/s (14767808 of 14767808 found)``` Main - ```multireadrandom : 3.987 micros/op 4013216 ops/sec 60.001 seconds 240795392 operations; 2082.1 MB/s (15231040 of 15231040 found)``` More benchmarks in various scenarios are given below. The measurements were taken with ```async_io=false``` (no coroutines) and ```async_io=true``` (use coroutines). For an IO bound workload (with every key requiring an IO), the coroutines version shows a clear benefit, being ~2.6X faster. For CPU bound workloads, the coroutines version has ~6-15% higher CPU utilization, depending on how many keys overlap an SST file. 1. Single thread IO bound workload on remote storage with sparse MultiGet batch keys (~1 key overlap/file) - No coroutines - ```multireadrandom : 831.774 micros/op 1202 ops/sec 60.001 seconds 72136 operations; 0.6 MB/s (72136 of 72136 found)``` Using coroutines - ```multireadrandom : 318.742 micros/op 3137 ops/sec 60.003 seconds 188248 operations; 1.6 MB/s (188248 of 188248 found)``` 2. Single thread CPU bound workload (all data cached) with ~1 key overlap/file - No coroutines - ```multireadrandom : 4.127 micros/op 242322 ops/sec 60.000 seconds 14539384 operations; 125.7 MB/s (14539384 of 14539384 found)``` Using coroutines - ```multireadrandom : 4.741 micros/op 210935 ops/sec 60.000 seconds 12656176 operations; 109.4 MB/s (12656176 of 12656176 found)``` 3. Single thread CPU bound workload with ~2 key overlap/file - No coroutines - ```multireadrandom : 3.717 micros/op 269000 ops/sec 60.000 seconds 16140024 operations; 139.6 MB/s (16140024 of 16140024 found)``` Using coroutines - ```multireadrandom : 4.146 micros/op 241204 ops/sec 60.000 seconds 14472296 operations; 125.1 MB/s (14472296 of 14472296 found)``` 4. CPU bound multi-threaded (16 threads) with ~4 key overlap/file - No coroutines - ```multireadrandom : 4.534 micros/op 3528792 ops/sec 60.000 seconds 211728728 operations; 1830.7 MB/s (12737024 of 12737024 found) ``` Using coroutines - ```multireadrandom : 4.872 micros/op 3283812 ops/sec 60.000 seconds 197030096 operations; 1703.6 MB/s (12548032 of 12548032 found) ``` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9968 Reviewed By: akankshamahajan15 Differential Revision: D36348563 Pulled By: anand1976 fbshipit-source-id: c0ce85a505fd26ebfbb09786cbd7f25202038696 |

||

|

|

280b9f371a |

Fix auto_prefix_mode performance with partitioned filters (#10012)

Summary: Essentially refactored the RangeMayExist implementation in FullFilterBlockReader to FilterBlockReaderCommon so that it applies to partitioned filters as well. (The function is not called for the block-based filter case.) RangeMayExist is essentially a series of checks around a possible PrefixMayExist, and I'm confident those checks should be the same for partitioned as for full filters. (I think it's likely that bugs remain in those checks, but this change is overall a simplifying one.) Added auto_prefix_mode support to db_bench Other small fixes as well Fixes https://github.com/facebook/rocksdb/issues/10003 Pull Request resolved: https://github.com/facebook/rocksdb/pull/10012 Test Plan: Expanded unit test that uses statistics to check for filter optimization, fails without the production code changes here Performance: populate two DBs with ``` TEST_TMPDIR=/dev/shm/rocksdb_nonpartitioned ./db_bench -benchmarks=fillrandom -num=10000000 -disable_wal=1 -write_buffer_size=30000000 -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -prefix_size=8 TEST_TMPDIR=/dev/shm/rocksdb_partitioned ./db_bench -benchmarks=fillrandom -num=10000000 -disable_wal=1 -write_buffer_size=30000000 -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -prefix_size=8 -partition_index_and_filters ``` Observe no measurable change in non-partitioned performance ``` TEST_TMPDIR=/dev/shm/rocksdb_nonpartitioned ./db_bench -benchmarks=seekrandom[-X1000] -num=10000000 -readonly -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -prefix_size=8 -auto_prefix_mode -cache_index_and_filter_blocks=1 -cache_size=1000000000 -duration 20 ``` Before: seekrandom [AVG 15 runs] : 11798 (± 331) ops/sec After: seekrandom [AVG 15 runs] : 11724 (± 315) ops/sec Observe big improvement with partitioned (also supported by bloom use statistics) ``` TEST_TMPDIR=/dev/shm/rocksdb_partitioned ./db_bench -benchmarks=seekrandom[-X1000] -num=10000000 -readonly -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -prefix_size=8 -partition_index_and_filters -auto_prefix_mode -cache_index_and_filter_blocks=1 -cache_size=1000000000 -duration 20 ``` Before: seekrandom [AVG 12 runs] : 2942 (± 57) ops/sec After: seekrandom [AVG 12 runs] : 7489 (± 184) ops/sec Reviewed By: siying Differential Revision: D36469796 Pulled By: pdillinger fbshipit-source-id: bcf1e2a68d347b32adb2b27384f945434e7a266d |

||

|

|

c6d326d3d7 |

Track SST unique id in MANIFEST and verify (#9990)

Summary: Start tracking SST unique id in MANIFEST, which is used to verify with SST properties to make sure the SST file is not overwritten or misplaced. A DB option `try_verify_sst_unique_id` is introduced to enable/disable the verification, if enabled, it opens all SST files during DB-open to read the unique_id from table properties (default is false), so it's recommended to use it with `max_open_files = -1` to pre-open the files. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9990 Test Plan: unittests, format-compatible test, mini-crash Reviewed By: anand1976 Differential Revision: D36381863 Pulled By: jay-zhuang fbshipit-source-id: 89ea2eb6b35ed3e80ead9c724eb096083eaba63f |

||

|

|

dde774db64 |

Mark old reserve* option deprecated (#10016)

Summary: **Context/Summary:** https://github.com/facebook/rocksdb/pull/9926 removed inefficient `reserve*` option API but forgot to mark them deprecated in `block_based_table_type_info` for compatible table format. Pull Request resolved: https://github.com/facebook/rocksdb/pull/10016 Test Plan: build-format-compatible Reviewed By: pdillinger Differential Revision: D36484247 Pulled By: hx235 fbshipit-source-id: c41b90cc99fb7ab7098934052f0af7290b221f98 |

||

|

|

4da34b97ee |

Set Read rate limiter priority dynamically and pass it to FS (#9996)

Summary: ### Context: Background compactions and flush generate large reads and writes, and can be long running, especially for universal compaction. In some cases, this can impact foreground reads and writes by users. ### Solution User, Flush, and Compaction reads share some code path. For this task, we update the rate_limiter_priority in ReadOptions for code paths (e.g. FindTable (mainly in BlockBasedTable::Open()) and various iterators), and eventually update the rate_limiter_priority in IOOptions for FSRandomAccessFile. **This PR is for the Read path.** The **Read:** dynamic priority for different state are listed as follows: | State | Normal | Delayed | Stalled | | ----- | ------ | ------- | ------- | | Flush (verification read in BuildTable()) | IO_USER | IO_USER | IO_USER | | Compaction | IO_LOW | IO_USER | IO_USER | | User | User provided | User provided | User provided | We will respect the read_options that the user provided and will not set it. The only sst read for Flush is the verification read in BuildTable(). It claims to be "regard as user read". **Details** 1. Set read_options.rate_limiter_priority dynamically: - User: Do not update the read_options. Use the read_options that the user provided. - Compaction: Update read_options in CompactionJob::ProcessKeyValueCompaction(). - Flush: Update read_options in BuildTable(). 2. Pass the rate limiter priority to FSRandomAccessFile functions: - After calling the FindTable(), read_options is passed through GetTableReader(table_cache.cc), BlockBasedTableFactory::NewTableReader(block_based_table_factory.cc), and BlockBasedTable::Open(). The Open() needs some updates for the ReadOptions variable and the updates are also needed for the called functions, including PrefetchTail(), PrepareIOOptions(), ReadFooterFromFile(), ReadMetaIndexblock(), ReadPropertiesBlock(), PrefetchIndexAndFilterBlocks(), and ReadRangeDelBlock(). - In RandomAccessFileReader, the functions to be updated include Read(), MultiRead(), ReadAsync(), and Prefetch(). - Update the downstream functions of NewIndexIterator(), NewDataBlockIterator(), and BlockBasedTableIterator(). ### Test Plans Add unit tests. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9996 Reviewed By: anand1976 Differential Revision: D36452483 Pulled By: gitbw95 fbshipit-source-id: 60978204a4f849bb9261cb78d9bc1cb56d6008cf |

||

|

|

0070680cfd |

Adjust public APIs to prefer 128-bit SST unique ID (#10009)

Summary: 128 bits should suffice almost always and for tracking in manifest. Note that this changes the output of sst_dump --show_properties to only show 128 bits. Also introduces InternalUniqueIdToHumanString for presenting internal IDs for debugging purposes. Pull Request resolved: https://github.com/facebook/rocksdb/pull/10009 Test Plan: unit tests updated Reviewed By: jay-zhuang Differential Revision: D36458189 Pulled By: pdillinger fbshipit-source-id: 93ebc4a3b6f9c73ee154383a1f8b291a5d6bbef5 |

||

|

|

3573558ec5 |

Rewrite memory-charging feature's option API (#9926)

Summary: **Context:** Previous PR https://github.com/facebook/rocksdb/pull/9748, https://github.com/facebook/rocksdb/pull/9073, https://github.com/facebook/rocksdb/pull/8428 added separate flag for each charged memory area. Such API design is not scalable as we charge more and more memory areas. Also, we foresee an opportunity to consolidate this feature with other cache usage related features such as `cache_index_and_filter_blocks` using `CacheEntryRole`. Therefore we decided to consolidate all these flags with `CacheUsageOptions cache_usage_options` and this PR serves as the first step by consolidating memory-charging related flags. **Summary:** - Replaced old API reference with new ones, including making `kCompressionDictionaryBuildingBuffer` opt-out and added a unit test for that - Added missing db bench/stress test for some memory charging features - Renamed related test suite to indicate they are under the same theme of memory charging - Refactored a commonly used mocked cache component in memory charging related tests to reduce code duplication - Replaced the phrases "memory tracking" / "cache reservation" (other than CacheReservationManager-related ones) with "memory charging" for standard description of this feature. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9926 Test Plan: - New unit test for opt-out `kCompressionDictionaryBuildingBuffer` `TEST_F(ChargeCompressionDictionaryBuildingBufferTest, Basic)` - New unit test for option validation/sanitization `TEST_F(CacheUsageOptionsOverridesTest, SanitizeAndValidateOptions)` - CI - db bench (in case querying new options introduces regression) **+0.5% micros/op**: `TEST_TMPDIR=/dev/shm/testdb ./db_bench -benchmarks=fillseq -db=$TEST_TMPDIR -charge_compression_dictionary_building_buffer=1(remove this for comparison) -compression_max_dict_bytes=10000 -disable_auto_compactions=1 -write_buffer_size=100000 -num=4000000 | egrep 'fillseq'` #-run | (pre-PR) avg micros/op | std micros/op | (post-PR) micros/op | std micros/op | change (%) -- | -- | -- | -- | -- | -- 10 | 3.9711 | 0.264408 | 3.9914 | 0.254563 | 0.5111933721 20 | 3.83905 | 0.0664488 | 3.8251 | 0.0695456 | **-0.3633711465** 40 | 3.86625 | 0.136669 | 3.8867 | 0.143765 | **0.5289363078** - db_stress: `python3 tools/db_crashtest.py blackbox -charge_compression_dictionary_building_buffer=1 -charge_filter_construction=1 -charge_table_reader=1 -cache_size=1` killed as normal Reviewed By: ajkr Differential Revision: D36054712 Pulled By: hx235 fbshipit-source-id: d406e90f5e0c5ea4dbcb585a484ad9302d4302af |

||

|

|

b11ff347b4 |

Use STATIC_AVOID_DESTRUCTION for static objects with non-trivial destructors (#9958)

Summary: Changed the static objects that had non-trivial destructors to use the STATIC_AVOID_DESTRUCTION construct. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9958 Reviewed By: pdillinger Differential Revision: D36442982 Pulled By: mrambacher fbshipit-source-id: 029d47b1374d30d198bfede369a4c0ae7a4eb519 |

||

|

|

204a42ca97 |

Added GetFactoryCount/Names/Types to ObjectRegistry (#9358)

Summary: These methods allow for more thorough testing of the ObjectRegistry and Customizable infrastructure in a simpler manner. With this change, the Customizable tests can now check what factories are registered and attempt to create each of them in a systematic fashion. With this change, I think all of the factories registered with the ObjectRegistry/CreateFromString are now tested via the customizable_test classes. Note that there were a few other minor changes. There was a "posix://*" register with the ObjectRegistry which was missed during the PatternEntry conversion -- these changes found that. The nickname and default names for the FileSystem classes was also inverted. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9358 Reviewed By: pdillinger Differential Revision: D33433542 Pulled By: mrambacher fbshipit-source-id: 9a32da74e6620745b4eeffb2712be70eeeadfa7e |

||

|

|

c4cd8e1acc |

Fix a bug handling multiget index I/O error. (#9993)

Summary: In one path of BlockBasedTable::MultiGet(), Next() is directly called after calling Seek() against the index iterator. This might cause crash if an I/O error happens in Seek(). The bug is discovered in crash test. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9993 Test Plan: See existing CI tests pass. Reviewed By: anand1976 Differential Revision: D36381758 fbshipit-source-id: a11e0aa48dcee168c2554c33b532646ffdb68877 |

||

|

|

736a7b5433 |

Remove own ToString() (#9955)

Summary: ToString() is created as some platform doesn't support std::to_string(). However, we've already used std::to_string() by mistake for 16 months (in db/db_info_dumper.cc). This commit just remove ToString(). Pull Request resolved: https://github.com/facebook/rocksdb/pull/9955 Test Plan: Watch CI tests Reviewed By: riversand963 Differential Revision: D36176799 fbshipit-source-id: bdb6dcd0e3a3ab96a1ac810f5d0188f684064471 |

||

|

|

49628c9a83 |

Use std::numeric_limits<> (#9954)

Summary: Right now we still don't fully use std::numeric_limits but use a macro, mainly for supporting VS 2013. Right now we only support VS 2017 and up so it is not a problem. The code comment claims that MinGW still needs it. We don't have a CI running MinGW so it's hard to validate. since we now require C++17, it's hard to imagine MinGW would still build RocksDB but doesn't support std::numeric_limits<>. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9954 Test Plan: See CI Runs. Reviewed By: riversand963 Differential Revision: D36173954 fbshipit-source-id: a35a73af17cdcae20e258cdef57fcf29a50b49e0 |

||

|

|

8b74cea7fe |

Reduce comparator objects init cost in BlockIter (#9611)

Summary: This PR solves the problem discussed in https://github.com/facebook/rocksdb/issues/7149. By storing the pointer of InternalKeyComparator as icmp_ in BlockIter, the object size remains the same. And for each call to CompareCurrentKey, there is no need to create Comparator objects. One can use icmp_ directly or use the "user_comparator" from the icmp_. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9611 Test Plan: with https://github.com/facebook/rocksdb/issues/9903, ``` $ TEST_TMPDIR=/dev/shm python3.6 ../benchmark/tools/compare.py benchmarks ./db_basic_bench ../rocksdb-pr9611/db_basic_bench --benchmark_filter=DBGet/comp_style:0/max_data:134217728/per_key_size:256/enable_statistics:0/negative_query:0/enable_filter:0/mmap:1/iterations:262144/threads:1 --benchmark_repetitions=50 ... Comparing ./db_basic_bench to ../rocksdb-pr9611/db_basic_bench Benchmark Time CPU Time Old Time New CPU Old CPU New ---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- ... DBGet/comp_style:0/max_data:134217728/per_key_size:256/enable_statistics:0/negative_query:0/enable_filter:0/mmap:1/iterations:262144/threads:1_pvalue 0.0001 0.0001 U Test, Repetitions: 50 vs 50 DBGet/comp_style:0/max_data:134217728/per_key_size:256/enable_statistics:0/negative_query:0/enable_filter:0/mmap:1/iterations:262144/threads:1_mean -0.0483 -0.0483 3924 3734 3924 3734 DBGet/comp_style:0/max_data:134217728/per_key_size:256/enable_statistics:0/negative_query:0/enable_filter:0/mmap:1/iterations:262144/threads:1_median -0.0713 -0.0713 3971 3687 3970 3687 DBGet/comp_style:0/max_data:134217728/per_key_size:256/enable_statistics:0/negative_query:0/enable_filter:0/mmap:1/iterations:262144/threads:1_stddev -0.0342 -0.0344 225 217 225 217 DBGet/comp_style:0/max_data:134217728/per_key_size:256/enable_statistics:0/negative_query:0/enable_filter:0/mmap:1/iterations:262144/threads:1_cv +0.0148 +0.0146 0 0 0 0 OVERALL_GEOMEAN -0.0483 -0.0483 0 0 0 0 ``` Reviewed By: akankshamahajan15 Differential Revision: D35882037 Pulled By: ajkr fbshipit-source-id: 9e5337bbad8f1239dff7aa9f6549020d599bfcdf |

||

|

|

9d0cae7104 |

Eliminate unnecessary (slow) block cache Ref()ing in MultiGet (#9899)

Summary: When MultiGet() determines that multiple query keys can be served by examining the same data block in block cache (one Lookup()), each PinnableSlice referring to data in that data block needs to hold on to the block in cache so that they can be released at arbitrary times by the API user. Historically this is accomplished with extra calls to Ref() on the Handle from Lookup(), with each PinnableSlice cleanup calling Release() on the Handle, but this creates extra contention on the block cache for the extra Ref()s and Release()es, especially because they hit the same cache shard repeatedly. In the case of merge operands (possibly more cases?), the problem was compounded by doing an extra Ref()+eventual Release() for each merge operand for a key reusing a block (which could be the same key!), rather than one Ref() per key. (Note: the non-shared case with `biter` was already one per key.) This change optimizes MultiGet not to rely on these extra, contentious Ref()+Release() calls by instead, in the shared block case, wrapping the cache Release() cleanup in a refcounted object referenced by the PinnableSlices, such that after the last wrapped reference is released, the cache entry is Release()ed. Relaxed atomic refcounts should be much faster than mutex-guarded Ref() and Release(), and much less prone to a performance cliff when MultiGet() does a lot of block sharing. Note that I did not use std::shared_ptr, because that would require an extra indirection object (shared_ptr itself new/delete) in order to associate a ref increment/decrement with a Cleanable cleanup entry. (If I assumed it was the size of two pointers, I could do some hackery to make it work without the extra indirection, but that's too fragile.) Some details: * Fixed (removed) extra block cache tracing entries in cases of cache entry reuse in MultiGet, but it's likely that in some other cases traces are missing (XXX comment inserted) * Moved existing implementations for cleanable.h from iterator.cc to new cleanable.cc * Improved API comments on Cleanable * Added a public SharedCleanablePtr class to cleanable.h in case others could benefit from the same pattern (potentially many Cleanables and/or smart pointers referencing a shared Cleanable) * Add a typedef for MultiGetContext::Mask * Some variable renaming for clarity Pull Request resolved: https://github.com/facebook/rocksdb/pull/9899 Test Plan: Added unit tests for SharedCleanablePtr. Greatly enhanced ability of existing tests to detect cache use-after-free. * Release PinnableSlices from MultiGet as they are read rather than in bulk (in db_test_util wrapper). * In ASAN build, default to using a trivially small LRUCache for block_cache so that entries are immediately erased when unreferenced. (Updated two tests that depend on caching.) New ASAN testsuite running time seems OK to me. If I introduce a bug into my implementation where we skip the shared cleanups on block reuse, ASAN detects the bug in `db_basic_test *MultiGet*`. If I remove either of the above testing enhancements, the bug is not detected. Consider for follow-up work: manipulate or randomize ordering of PinnableSlice use and release from MultiGet db_test_util wrapper. But in typical cases, natural ordering gives pretty good functional coverage. Performance test: In the extreme (but possible) case of MultiGetting the same or adjacent keys in a batch, throughput can improve by an order of magnitude. `./db_bench -benchmarks=multireadrandom -db=/dev/shm/testdb -readonly -num=5 -duration=10 -threads=20 -multiread_batched -batch_size=200` Before ops/sec, num=5: 1,384,394 Before ops/sec, num=500: 6,423,720 After ops/sec, num=500: 10,658,794 After ops/sec, num=5: 16,027,257 Also note that previously, with high parallelism, having query keys concentrated in a single block was worse than spreading them out a bit. Now concentrated in a single block is faster than spread out, which is hopefully consistent with natural expectation. Random query performance: with num=1000000, over 999 x 10s runs running before & after simultaneously (each -threads=12): Before: multireadrandom [AVG 999 runs] : 1088699 (± 7344) ops/sec; 120.4 (± 0.8 ) MB/sec After: multireadrandom [AVG 999 runs] : 1090402 (± 7230) ops/sec; 120.6 (± 0.8 ) MB/sec Possibly better, possibly in the noise. Reviewed By: anand1976 Differential Revision: D35907003 Pulled By: pdillinger fbshipit-source-id: bbd244d703649a8ca12d476f2d03853ed9d1a17e |

||

|

|

3653029dda |

Add stats related to async prefetching (#9845)

Summary: Add stats PREFETCHED_BYTES_DISCARDED and POLL_WAIT_MICROS. PREFETCHED_BYTES_DISCARDED records number of prefetched bytes discarded by FilePrefetchBuffer. POLL_WAIT_MICROS records the time taken by underling file_system Poll API. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9845 Test Plan: Update existing tests Reviewed By: anand1976 Differential Revision: D35909694 Pulled By: akankshamahajan15 fbshipit-source-id: e009ef940bb9ed72c9446f5529095caabb8a1e36 |

||

|

|

a5063c8931 |

Fix issue of opening too many files in BlockBasedTableReaderCapMemoryTest.CapMemoryUsageUnderCacheCapacity (#9869)

Summary: **Context:** Unit test for https://github.com/facebook/rocksdb/pull/9748 keeps opening new files to see whether the new feature is able to correctly constrain the opening based on block cache capacity. However, the unit test has two places written inefficiently that can lead to opening too many new files relative to underlying operating system/file system constraint, even before hitting the block cache capacity: (1) [opened_table_reader_num < 2 * max_table_reader_num](https://github.com/facebook/rocksdb/pull/9748/files?show-viewed-files=true&file-filters%5B%5D=#diff-ec9f5353e317df71093094734ba29193b94a998f0f9c9af924e4c99692195eeaR438), which can leads to 1200 + open files because of (2) below (2) NewLRUCache(6 * CacheReservationManagerImpl<CacheEntryRole::kBlockBasedTableReader>::GetDummyEntrySize()) in [here](https://github.com/facebook/rocksdb/pull/9748/files?show-viewed-files=true&file-filters%5B%5D=#diff-ec9f5353e317df71093094734ba29193b94a998f0f9c9af924e4c99692195eeaR364) Therefore we see CI failures like this on machine with a strict open file limit ~1000 (see the "table_1021" naming in following error msg) https://app.circleci.com/pipelines/github/facebook/rocksdb/12886/workflows/75524682-3fa4-41ee-9a61-81827b51d99b/jobs/345270 ``` fs_->NewWritableFile(path, foptions, &file, nullptr) IO error: While open a file for appending: /dev/shm/rocksdb.Jedwt/run-block_based_table_reader_test-CapMemoryUsageUnderCacheCapacity-BlockBasedTableReaderCapMemoryTest.CapMemoryUsageUnderCacheCapacity-0/block_based_table_reader_test_1668910_829492452552920927/**table_1021**: Too many open files ``` **Summary:** - Revised the test more efficiently on the above 2 places, including using 1.1 instead 2 in the threshold and lowering down the block cache capacity a bit - Renamed some variables for clarity Pull Request resolved: https://github.com/facebook/rocksdb/pull/9869 Test Plan: - Manual inspection of max opened table reader in all test case, which is around ~389 - Circle CI to see if error is gone Reviewed By: ajkr Differential Revision: D35752655 Pulled By: hx235 fbshipit-source-id: 8a0953d39d561babfa4257b8ed8550bb21b04839 |

||

|

|

0c7f455f85 |

Make initial auto readahead_size configurable (#9836)

Summary: Make initial auto readahead_size configurable Pull Request resolved: https://github.com/facebook/rocksdb/pull/9836 Test Plan: Added new unit test Ran regression: Without change: ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.0 Date: Thu Mar 17 13:11:34 2022 CPU: 24 * Intel Core Processor (Broadwell) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_main] seekrandom : 483618.390 micros/op 2 ops/sec; 338.9 MB/s (249 of 249 found) ``` With this change: ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 Set seed to 1649895440554504 because --seed was 0 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.2 Date: Wed Apr 13 17:17:20 2022 CPU: 24 * Intel Core Processor (Broadwell) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_main] ... finished 100 ops seekrandom : 476892.488 micros/op 2 ops/sec; 344.6 MB/s (252 of 252 found) ``` Reviewed By: anand1976 Differential Revision: D35632815 Pulled By: akankshamahajan15 fbshipit-source-id: c8057a88f9294c9d03b1d434b03affe02f74d796 |

||

|

|

efd035164b |

Meta-internal folly integration with F14FastMap (#9546)

Summary: Especially after updating to C++17, I don't see a compelling case for *requiring* any folly components in RocksDB. I was able to purge the existing hard dependencies, and it can be quite difficult to strip out non-trivial components from folly for use in RocksDB. (The prospect of doing that on F14 has changed my mind on the best approach here.) But this change creates an optional integration where we can plug in components from folly at compile time, starting here with F14FastMap to replace std::unordered_map when possible (probably no public APIs for example). I have replaced the biggest CPU users of std::unordered_map with compile-time pluggable UnorderedMap which will use F14FastMap when USE_FOLLY is set. USE_FOLLY is always set in the Meta-internal buck build, and a simulation of that is in the Makefile for public CI testing. A full folly build is not needed, but checking out the full folly repo is much simpler for getting the dependency, and anything else we might want to optionally integrate in the future. Some picky details: * I don't think the distributed mutex stuff is actually used, so it was easy to remove. * I implemented an alternative to `folly::constexpr_log2` (which is much easier in C++17 than C++11) so that I could pull out the hard dependencies on `ConstexprMath.h` * I had to add noexcept move constructors/operators to some types to make F14's complainUnlessNothrowMoveAndDestroy check happy, and I added a macro to make that easier in some common cases. * Updated Meta-internal buck build to use folly F14Map (always) No updates to HISTORY.md nor INSTALL.md as this is not (yet?) considered a production integration for open source users. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9546 Test Plan: CircleCI tests updated so that a couple of them use folly. Most internal unit & stress/crash tests updated to use Meta-internal latest folly. (Note: they should probably use buck but they currently use Makefile.) Example performance improvement: when filter partitions are pinned in cache, they are tracked by PartitionedFilterBlockReader::filter_map_ and we can build a test that exercises that heavily. Build DB with ``` TEST_TMPDIR=/dev/shm/rocksdb ./db_bench -benchmarks=fillrandom -num=10000000 -disable_wal=1 -write_buffer_size=30000000 -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -partition_index_and_filters ``` and test with (simultaneous runs with & without folly, ~20 times each to see convergence) ``` TEST_TMPDIR=/dev/shm/rocksdb ./db_bench_folly -readonly -use_existing_db -benchmarks=readrandom -num=10000000 -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -partition_index_and_filters -duration=40 -pin_l0_filter_and_index_blocks_in_cache ``` Average ops/s no folly: 26229.2 Average ops/s with folly: 26853.3 (+2.4%) Reviewed By: ajkr Differential Revision: D34181736 Pulled By: pdillinger fbshipit-source-id: ffa6ad5104c2880321d8a1aa7187e00ab0d02e94 |

||

|

|

63e68a4e77 |

Enable async prefetching for ReadOptions.readahead_size (#9827)

Summary: Currently async prefetching is enabled for implicit internal auto readahead in FilePrefetchBuffer if `ReadOptions.async_io` is set. This PR enables async prefetching for `ReadOptions.readahead_size` when `ReadOptions.async_io` is set true. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9827 Test Plan: Update unit test Reviewed By: anand1976 Differential Revision: D35552129 Pulled By: akankshamahajan15 fbshipit-source-id: d9f9a96672852a591375a21eef15355cf3289f5c |

||

|

|

f337542948 |

Fix a bug of TEST_SetRandomTableProperties due to non-zero padding between fields in TableProperties struct (#9812)

Summary: Context: https://github.com/facebook/rocksdb/pull/9748#discussion_r843134214 reveals an issue with TEST_SetRandomTableProperties when non-zero padding is used between the last string field and first non-string field in TableProperties. Fixed by https://github.com/facebook/rocksdb/pull/9748#discussion_r843244375 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9812 Test Plan: No production code changes and rely on existing CI Reviewed By: ajkr Differential Revision: D35423680 Pulled By: hx235 fbshipit-source-id: fd855eef3d32771bb79c65bd7012ab8bb3c400ab |

||

|

|

8ce7cea93f |

Tests for filter compatibility (#9773)

Summary: This change adds two unit tests that would each catch the regression fixed in https://github.com/facebook/rocksdb/issues/9736 * TableMetaIndexKeys - detects any churn in metaindex block keys generated by SST files using standard db_test_util configurations. * BloomFilterCompatibility - this detects if any common built-in FilterPolicy configurations fail to read filters generated by another. (The regression bug caused NewRibbonFilterPolicy not to read filters from NewBloomFilterPolicy and vice-versa.) This replaces some previous tests that didn't really appear to be testing much of anything except basic data correctness, which doesn't tell you a filter is being used. Light refactoring in meta_blocks.cc/h to support inspecting metaindex keys. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9773 Test Plan: this is the test. Verified that 7.0.2 fails both tests and 7.0.3 passes. With backporting for intentional API changes in 7.0, 6.29 also passes. Reviewed By: ajkr Differential Revision: D35236248 Pulled By: pdillinger fbshipit-source-id: 493dfe9ad7e27524bf7c6c1af8a4b8c31bc6ef5a |

||

|

|

49623f9c8e |

Account memory of big memory users in BlockBasedTable in global memory limit (#9748)

Summary: **Context:** Through heap profiling, we discovered that `BlockBasedTableReader` objects can accumulate and lead to high memory usage (e.g, `max_open_file = -1`). These memories are currently not saved, not tracked, not constrained and not cache evict-able. As a first step to improve this, similar to https://github.com/facebook/rocksdb/pull/8428, this PR is to track an estimate of `BlockBasedTableReader` object's memory in block cache and fail future creation if the memory usage exceeds the available space of cache at the time of creation. **Summary:** - Approximate big memory users (`BlockBasedTable::Rep` and `TableProperties` )' memory usage in addition to the existing estimated ones (filter block/index block/un-compression dictionary) - Charge all of these memory usages to block cache on `BlockBasedTable::Open()` and release them on `~BlockBasedTable()` as there is no memory usage fluctuation of concern in between - Refactor on CacheReservationManager (and its call-sites) to add concurrent support for BlockBasedTable used in this PR. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9748 Test Plan: - New unit tests - db bench: `OpenDb` : **-0.52% in ms** - Setup `./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -disable_auto_compactions=1 -write_buffer_size=1048576` - Repeated run with pre-change w/o feature and post-change with feature, benchmark `OpenDb`: `./db_bench -benchmarks=readrandom -use_existing_db=1 -db=/dev/shm/testdb -reserve_table_reader_memory=true (remove this when running w/o feature) -file_opening_threads=3 -open_files=-1 -report_open_timing=true| egrep 'OpenDb:'` #-run | (feature-off) avg milliseconds | std milliseconds | (feature-on) avg milliseconds | std milliseconds | change (%) -- | -- | -- | -- | -- | -- 10 | 11.4018 | 5.95173 | 9.47788 | 1.57538 | -16.87382694 20 | 9.23746 | 0.841053 | 9.32377 | 1.14074 | 0.9343477536 40 | 9.0876 | 0.671129 | 9.35053 | 1.11713 | 2.893283155 80 | 9.72514 | 2.28459 | 9.52013 | 1.0894 | -2.108041632 160 | 9.74677 | 0.991234 | 9.84743 | 1.73396 | 1.032752389 320 | 10.7297 | 5.11555 | 10.547 | 1.97692 | **-1.70275031** 640 | 11.7092 | 2.36565 | 11.7869 | 2.69377 | **0.6635807741** - db bench on write with cost to cache in WriteBufferManager (just in case this PR's CRM refactoring accidentally slows down anything in WBM) : `fillseq` : **+0.54% in micros/op** `./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -disable_auto_compactions=1 -cost_write_buffer_to_cache=true -write_buffer_size=10000000000 | egrep 'fillseq'` #-run | (pre-PR) avg micros/op | std micros/op | (post-PR) avg micros/op | std micros/op | change (%) -- | -- | -- | -- | -- | -- 10 | 6.15 | 0.260187 | 6.289 | 0.371192 | 2.260162602 20 | 7.28025 | 0.465402 | 7.37255 | 0.451256 | 1.267813605 40 | 7.06312 | 0.490654 | 7.13803 | 0.478676 | **1.060579461** 80 | 7.14035 | 0.972831 | 7.14196 | 0.92971 | **0.02254791432** - filter bench: `bloom filter`: **-0.78% in ms/key** - ` ./filter_bench -impl=2 -quick -reserve_table_builder_memory=true | grep 'Build avg'` #-run | (pre-PR) avg ns/key | std ns/key | (post-PR) ns/key | std ns/key | change (%) -- | -- | -- | -- | -- | -- 10 | 26.4369 | 0.442182 | 26.3273 | 0.422919 | **-0.4145720565** 20 | 26.4451 | 0.592787 | 26.1419 | 0.62451 | **-1.1465262** - Crash test `python3 tools/db_crashtest.py blackbox --reserve_table_reader_memory=1 --cache_size=1` killed as normal Reviewed By: ajkr Differential Revision: D35136549 Pulled By: hx235 fbshipit-source-id: 146978858d0f900f43f4eb09bfd3e83195e3be28 |

||

|

|

36bc3da97f |

Fix segfault in FilePrefetchBuffer with async_io enabled (#9777)

Summary: If FilePrefetchBuffer object is destroyed and then later Poll() calls callback on object which has been destroyed, it gives segfault on accessing destroyed object. It was caught after adding unit tests that tests Posix implementation of ReadAsync and Poll APIs. This PR also updates and fixes existing IOURing tests which were not running locally because RocksDbIOUringEnable function wasn't defined and IOUring was disabled for those tests Pull Request resolved: https://github.com/facebook/rocksdb/pull/9777 Test Plan: Added new unit test Reviewed By: anand1976 Differential Revision: D35254002 Pulled By: akankshamahajan15 fbshipit-source-id: 68e80054ffb14ae25c255920ebc6548ca5f130a1 |

||

|

|

b6ad0d958f |

Fb 9718 verify checksums is ignored (#9767)

Summary: Fixes https://github.com/facebook/rocksdb/issues/9718 The verify_checksums flag of read_options should be passed to the read options used by the BlockFetcher in a couple of cases where it is not at present. It will now happen (but did not, previously) on iteration and on [multi]get, where a fetcher is created as part of the iterate/get call. This may result in much better performance in a few workloads where the client chooses to remove verification. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9767 Reviewed By: mrambacher Differential Revision: D35218986 Pulled By: jay-zhuang fbshipit-source-id: 329d29764bb70fbc7f2673440bc46c107a813bc8 |

||

|

|

98130c5a26 |

Enable READ_BLOCK_COMPACTION_MICROS to track stats (#9722)

Summary: After commit [ |

||

|

|

91687d70ea |

Fix a major performance bug in 7.0 re: filter compatibility (#9736)

Summary: Bloom filters generated by pre-7.0 releases are not read by 7.0.x releases (and vice-versa) due to changes to FilterPolicy::Name() in https://github.com/facebook/rocksdb/issues/9590. This can severely impact read performance and read I/O on upgrade or downgrade with existing DB, but not data correctness. To fix, we go back using the old, unified name in SST metadata but (for a while anyway) recognize the aliases that could be generated by early 7.0.x releases. This unfortunately requires a public API change to avoid interfering with all the good changes from https://github.com/facebook/rocksdb/issues/9590, but the API change only affects users with custom FilterPolicy, which should be very few. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9736 Test Plan: manual Generate DBs with ``` ./db_bench.7.0 -db=/dev/shm/rocksdb.7.0 -bloom_bits=10 -cache_index_and_filter_blocks=1 -benchmarks=fillrandom -num=10000000 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 ``` and similar. Compare with ``` for IMPL in 6.29 7.0 fixed; do for DB in 6.29 7.0 fixed; do echo "Testing $IMPL on $DB:"; ./db_bench.$IMPL -db=/dev/shm/rocksdb.$DB -use_existing_db -readonly -bloom_bits=10 -benchmarks=readrandom -num=10000000 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -duration=10 2>&1 | grep micros/op; done; done ``` Results: ``` Testing 6.29 on 6.29: readrandom : 34.381 micros/op 29085 ops/sec; 3.2 MB/s (291999 of 291999 found) Testing 6.29 on 7.0: readrandom : 190.443 micros/op 5249 ops/sec; 0.6 MB/s (52999 of 52999 found) Testing 6.29 on fixed: readrandom : 40.148 micros/op 24907 ops/sec; 2.8 MB/s (249999 of 249999 found) Testing 7.0 on 6.29: readrandom : 229.430 micros/op 4357 ops/sec; 0.5 MB/s (43999 of 43999 found) Testing 7.0 on 7.0: readrandom : 33.348 micros/op 29986 ops/sec; 3.3 MB/s (299999 of 299999 found) Testing 7.0 on fixed: readrandom : 152.734 micros/op 6546 ops/sec; 0.7 MB/s (65999 of 65999 found) Testing fixed on 6.29: readrandom : 32.024 micros/op 31224 ops/sec; 3.5 MB/s (312999 of 312999 found) Testing fixed on 7.0: readrandom : 33.990 micros/op 29390 ops/sec; 3.3 MB/s (294999 of 294999 found) Testing fixed on fixed: readrandom : 28.714 micros/op 34825 ops/sec; 3.9 MB/s (348999 of 348999 found) ``` Just paying attention to order of magnitude of ops/sec (short test durations, lots of noise), it's clear that with the fix we can read <= 6.29 & >= 7.0 at full speed, where neither 6.29 nor 7.0 can on both. And 6.29 release can properly read fixed DB at full speed. Reviewed By: siying, ajkr Differential Revision: D35057844 Pulled By: pdillinger fbshipit-source-id: a46893a6af4bf084375ebe4728066d00eb08f050 |

||

|

|

8102690a52 |

Update Cache::Release param from force_erase to erase_if_last_ref (#9728)

Summary: The param name force_erase may be misleading, since the handle is erased only if it has last reference even if the param is set true. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9728 Reviewed By: pdillinger Differential Revision: D35038673 Pulled By: gitbw95 fbshipit-source-id: 0d16d1e8fed17b97eba7fb53207119332f659a5f |

||

|

|

49a10feb21 |

Provide implementation to prefetch data asynchronously in FilePrefetchBuffer (#9674)

Summary: In FilePrefetchBuffer if reads are sequential, after prefetching call ReadAsync API to prefetch data asynchronously so that in next prefetching data will be available. Data prefetched asynchronously will be readahead_size/2. It uses two buffers, one for synchronous prefetching and one for asynchronous. In case, the data is overlapping, the data is copied from both buffers to third buffer to make it continuous. This feature is under ReadOptions::async_io and is under experimental. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9674 Test Plan: 1. Add new unit tests 2. Run **db_stress** to make sure nothing crashes. - Normal prefetch without `async_io` ran successfully: ``` export CRASH_TEST_EXT_ARGS=" --async_io=0" make crash_test -j ``` 3. **Run Regressions**. i) Main branch without any change for normal prefetching with async_io disabled: ``` ./db_bench -db=/tmp/prefix_scan_prefetch_main -benchmarks="fillseq" -key_size=32 -value_size=512 -num=5000000 - use_direct_io_for_flush_and_compaction=true -target_file_size_base=16777216 ``` ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.0 Date: Thu Mar 17 13:11:34 2022 CPU: 24 * Intel Core Processor (Broadwell) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_main] seekrandom : 483618.390 micros/op 2 ops/sec; 338.9 MB/s (249 of 249 found) ``` ii) normal prefetching after changes with async_io disable: ``` ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_withchange -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags RocksDB: version 7.0 Date: Thu Mar 17 14:11:31 2022 CPU: 24 * Intel Core Processor (Broadwell) CPUCache: 16384 KB Keys: 32 bytes each (+ 0 bytes user-defined timestamp) Values: 512 bytes each (256 bytes after compression) Entries: 5000000 Prefix: 0 bytes Keys per prefix: 0 RawSize: 2594.0 MB (estimated) FileSize: 1373.3 MB (estimated) Write rate: 0 bytes/second Read rate: 0 ops/second Compression: Snappy Compression sampling rate: 0 Memtablerep: SkipListFactory Perf Level: 1 ------------------------------------------------ DB path: [/tmp/prefix_scan_prefetch_withchange] seekrandom : 471347.227 micros/op 2 ops/sec; 348.1 MB/s (255 of 255 found) ``` Reviewed By: anand1976 Differential Revision: D34731543 Pulled By: akankshamahajan15 fbshipit-source-id: 8e23aa93453d5fe3c672b9231ad582f60207937f |

||

|

|

cff0d1e8e6 |

New backup meta schema, with file temperatures (#9660)

Summary: The primary goal of this change is to add support for backing up and restoring (applying on restore) file temperature metadata, without committing to either the DB manifest or the FS reported "current" temperatures being exclusive "source of truth". To achieve this goal, we need to add temperature information to backup metadata, which requires updated backup meta schema. Fortunately I prepared for this in https://github.com/facebook/rocksdb/issues/8069, which began forward compatibility in version 6.19.0 for this kind of schema update. (Previously, backup meta schema was not extensible! Making this schema update public will allow some other "nice to have" features like taking backups with hard links, and avoiding crc32c checksum computation when another checksum is already available.) While schema version 2 is newly public, the default schema version is still 1. Until we change the default, users will need to set to 2 to enable features like temperature data backup+restore. New metadata like temperature information will be ignored with a warning in versions before this change and since 6.19.0. The metadata is considered ignorable because a functioning DB can be restored without it. Some detail: * Some renaming because "future schema" is now just public schema 2. * Initialize some atomics in TestFs (linter reported) * Add temperature hint support to SstFileDumper (used by BackupEngine) Pull Request resolved: https://github.com/facebook/rocksdb/pull/9660 Test Plan: related unit test majorly updated for the new functionality, including some shared testing support for tracking temperatures in a FS. Some other tests and testing hooks into production code also updated for making the backup meta schema change public. Reviewed By: ajkr Differential Revision: D34686968 Pulled By: pdillinger fbshipit-source-id: 3ac1fa3e67ee97ca8a5103d79cc87d872c1d862a |

||

|

|

60422f1676 |

Replace GetUserKey with ExtractUserKey (#9664)

Summary: Replace `GetUserKey` with `ExtractUserKey` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9664 Reviewed By: jay-zhuang Differential Revision: D34673571 Pulled By: ajkr fbshipit-source-id: 5acf7d0d1a45efce0ccbe505991acf76c9cdc461 |

||

|

|

36aec94d85 |

compression_per_level should be used for flush and changeable (#9658)

Summary: - Make `compression_per_level` dynamical changeable with `SetOptions`; - Fix a bug that `compression_per_level` is not used for flush; Pull Request resolved: https://github.com/facebook/rocksdb/pull/9658 Test Plan: CI Reviewed By: ajkr Differential Revision: D34700749 Pulled By: jay-zhuang fbshipit-source-id: a23b9dfa7ad03d393c1d71781d19e91de796f49c |

||

|

|

4a776d81cc |

Dynamic toggling of BlockBasedTableOptions::detect_filter_construct_corruption (#9654)

Summary: **Context/Summary:** As requested, `BlockBasedTableOptions::detect_filter_construct_corruption` can now be dynamically configured using `DB::SetOptions` after this PR Pull Request resolved: https://github.com/facebook/rocksdb/pull/9654 Test Plan: - New unit test Reviewed By: pdillinger Differential Revision: D34622609 Pulled By: hx235 fbshipit-source-id: c06773ef3d029e6bf1724d3a72dffd37a8ec66d9 |

||

|

|

33742c2a9f |

Remove BlockBasedTableOptions.hash_index_allow_collision (#9454)

Summary: BlockBasedTableOptions.hash_index_allow_collision is already deprecated and has no effect. Delete it for preparing 7.0 release. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9454 Test Plan: Run all existing tests. Reviewed By: ajkr Differential Revision: D33805827 fbshipit-source-id: ed8a436d1d083173ec6aef2a762ba02e1eefdc9d |

||

|

|

0a89cea5f5 |

Handle failures in block-based table size/offset approximation (#9615)

Summary: In crash test with fault injection, we were seeing stack traces like the following: ``` https://github.com/facebook/rocksdb/issues/3 0x00007f75f763c533 in __GI___assert_fail (assertion=assertion@entry=0x1c5b2a0 "end_offset >= start_offset", file=file@entry=0x1c580a0 "table/block_based/block_based_table_reader.cc", line=line@entry=3245, function=function@entry=0x1c60e60 "virtual uint64_t rocksdb::BlockBasedTable::ApproximateSize(const rocksdb::Slice&, const rocksdb::Slice&, rocksdb::TableReaderCaller)") at assert.c:101 https://github.com/facebook/rocksdb/issues/4 0x00000000010ea9b4 in rocksdb::BlockBasedTable::ApproximateSize (this=<optimized out>, start=..., end=..., caller=<optimized out>) at table/block_based/block_based_table_reader.cc:3224 https://github.com/facebook/rocksdb/issues/5 0x0000000000be61fb in rocksdb::TableCache::ApproximateSize (this=0x60f0000161b0, start=..., end=..., fd=..., caller=caller@entry=rocksdb::kCompaction, internal_comparator=..., prefix_extractor=...) at db/table_cache.cc:719 https://github.com/facebook/rocksdb/issues/6 0x0000000000c3eaec in rocksdb::VersionSet::ApproximateSize (this=<optimized out>, v=<optimized out>, f=..., start=..., end=..., caller=<optimized out>) at ./db/version_set.h:850 https://github.com/facebook/rocksdb/issues/7 0x0000000000c6ebc3 in rocksdb::VersionSet::ApproximateSize (this=<optimized out>, options=..., v=v@entry=0x621000047500, start=..., end=..., start_level=start_level@entry=0, end_level=<optimized out>, caller=<optimized out>) at db/version_set.cc:5657 https://github.com/facebook/rocksdb/issues/8 0x000000000166e894 in rocksdb::CompactionJob::GenSubcompactionBoundaries (this=<optimized out>) at ./include/rocksdb/options.h:1869 https://github.com/facebook/rocksdb/issues/9 0x000000000168c526 in rocksdb::CompactionJob::Prepare (this=this@entry=0x7f75f3ffcf00) at db/compaction/compaction_job.cc:546 ``` The problem occurred in `ApproximateSize()` when the index `Seek()` for the first `ApproximateDataOffsetOf()` encountered an I/O error, while the second `Seek()` did not. In the old code that scenario caused `start_offset == data_size` , thus it was easy to trip the assertion that `end_offset >= start_offset`. The fix is to set `start_offset == 0` when the first index `Seek()` fails, and `end_offset == data_size` when the second index `Seek()` fails. I doubt these give an "on average correct" answer for how this function is used, but I/O errors in index seeks are hopefully rare, it looked consistent with what was already there, and it was easier to calculate. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9615 Test Plan: run the repro command for a while and stopped seeing coredumps - ``` $ while ! ./db_stress --block_size=128 --cache_size=32768 --clear_column_family_one_in=0 --column_families=1 --continuous_verification_interval=0 --db=/dev/shm/rocksdb_crashtest --delpercent=4 --delrangepercent=1 --destroy_db_initially=0 --expected_values_dir=/dev/shm/rocksdb_crashtest_expected --index_type=2 --iterpercent=10 --kill_random_test=18887 --max_key=1000000 --max_bytes_for_level_base=2048576 --nooverwritepercent=1 --open_files=-1 --open_read_fault_one_in=32 --ops_per_thread=1000000 --prefixpercent=5 --read_fault_one_in=0 --readpercent=45 --reopen=0 --skip_verifydb=1 --subcompactions=2 --target_file_size_base=524288 --test_batches_snapshots=0 --value_size_mult=32 --write_buffer_size=524288 --writepercent=35 ; do : ; done ``` Reviewed By: pdillinger Differential Revision: D34383069 Pulled By: ajkr fbshipit-source-id: fac26c3b20ea962e75387515ba5f2724dc48719f |

||

|

|

30b08878d8 |

Make FilterPolicy Customizable (#9590)

Summary: Make FilterPolicy into a Customizable class. Allow new FilterPolicy to be discovered through the ObjectRegistry Pull Request resolved: https://github.com/facebook/rocksdb/pull/9590 Reviewed By: pdillinger Differential Revision: D34327367 Pulled By: mrambacher fbshipit-source-id: 37e7edac90ec9457422b72f359ab8ef48829c190 |

||

|

|

54fb2a8975 |

Change type of cache buffer passed to Cache::CreateCallback() to const void* (#9595)

Summary: Pull Request resolved: https://github.com/facebook/rocksdb/pull/9595 Reviewed By: riversand963 Differential Revision: D34329906 Pulled By: ajkr fbshipit-source-id: 508601856fa9bee4d40f4a68d14d333ef2143d40 |

||

|

|

725833a424 |

Hide FilterBits{Builder,Reader} from public API (#9592)

Summary: We don't have any evidence of people using these to build custom filters. The recommended way of customizing filter handling is to defer to various built-in policies based on FilterBuildingContext (e.g. to build Monkey filtering policy). With old API, we have evidence of people modifying keys going into filter, but most cases of that can be handled with prefix_extractor. Having FilterBitsBuilder+Reader in the public API is an ogoing hinderance to code evolution (e.g. recent new Finish and MaybePostVerify), and so this change removes them from the public API for 7.0. Maybe they will come back in some form later, but lacking evidence of them providing value in the public API, we want to take back more freedom to evolve these. With this moved to internal-only, there is no rush to clean up the complex Finish signatures, or add memory allocator support, but doing so is much easier with them out of public API, for example to use CacheAllocationPtr without exposing it in the public API. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9592 Test Plan: cosmetic changes only Reviewed By: hx235 Differential Revision: D34315470 Pulled By: pdillinger fbshipit-source-id: 03e03bb66a72c73df2c464d2dbbbae906dd8f99b |

||

|

|

babe56ddba |

Add rate limiter priority to ReadOptions (#9424)

Summary: Users can set the priority for file reads associated with their operation by setting `ReadOptions::rate_limiter_priority` to something other than `Env::IO_TOTAL`. Rate limiting `VerifyChecksum()` and `VerifyFileChecksums()` is the motivation for this PR, so it also includes benchmarks and minor bug fixes to get that working. `RandomAccessFileReader::Read()` already had support for rate limiting compaction reads. I changed that rate limiting to be non-specific to compaction, but rather performed according to the passed in `Env::IOPriority`. Now the compaction read rate limiting is supported by setting `rate_limiter_priority = Env::IO_LOW` on its `ReadOptions`. There is no default value for the new `Env::IOPriority` parameter to `RandomAccessFileReader::Read()`. That means this PR goes through all callers (in some cases multiple layers up the call stack) to find a `ReadOptions` to provide the priority. There are TODOs for cases I believe it would be good to let user control the priority some day (e.g., file footer reads), and no TODO in cases I believe it doesn't matter (e.g., trace file reads). The API doc only lists the missing cases where a file read associated with a provided `ReadOptions` cannot be rate limited. For cases like file ingestion checksum calculation, there is no API to provide `ReadOptions` or `Env::IOPriority`, so I didn't count that as missing. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9424 Test Plan: - new unit tests - new benchmarks on ~50MB database with 1MB/s read rate limit and 100ms refill interval; verified with strace reads are chunked (at 0.1MB per chunk) and spaced roughly 100ms apart. - setup command: `./db_bench -benchmarks=fillrandom,compact -db=/tmp/testdb -target_file_size_base=1048576 -disable_auto_compactions=true -file_checksum=true` - benchmarks command: `strace -ttfe pread64 ./db_bench -benchmarks=verifychecksum,verifyfilechecksums -use_existing_db=true -db=/tmp/testdb -rate_limiter_bytes_per_sec=1048576 -rate_limit_bg_reads=1 -rate_limit_user_ops=true -file_checksum=true` - crash test using IO_USER priority on non-validation reads with https://github.com/facebook/rocksdb/issues/9567 reverted: `python3 tools/db_crashtest.py blackbox --max_key=1000000 --write_buffer_size=524288 --target_file_size_base=524288 --level_compaction_dynamic_level_bytes=true --duration=3600 --rate_limit_bg_reads=true --rate_limit_user_ops=true --rate_limiter_bytes_per_sec=10485760 --interval=10` Reviewed By: hx235 Differential Revision: D33747386 Pulled By: ajkr fbshipit-source-id: a2d985e97912fba8c54763798e04f006ccc56e0c |

||

|

|

1e403a0c6c |

Fix assertion failure in FastLocalBloomBitsBuilder (#9585)

Summary:

As in

```

db_stress: table/block_based/filter_policy.cc:316: rocksdb::{anonymous}::FastLocalBloomBitsBuilder::FastLocalBloomBitsBuilder(int, std::atomic<long int>*, std::shared_ptr<rocksdb::CacheReservationManager>, bool): Assertion `millibits_per_key >= 1000' failed.

```

This assertion failure was actually happening with our RibbonFilterPolicy

which falls back to Bloom for some cases, often for flush, but was

missing new special logic to skip generating filter for 0 bits per key

case. Fixed by adding the logic in other builtin FilterPolicy

implementations.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/9585

Test Plan:

Updated db_bloom_filter_test to do more integration testing

of the RibbonFilterPolicy ("auto Ribbon") class, incl regression test

this with SkipFilterOnEssentiallyZeroBpk

Reviewed By: ajkr

Differential Revision: D34295101

Pulled By: pdillinger

fbshipit-source-id: 3488eb207fc1d67bbbd1301313714aa1b6406e6e

|

||

|

|

8c681087c7 |

Refactor FilterPolicies toward Customizable (#9567)

Summary: Some changes to make it easier to make FilterPolicy customizable. Especially, create distinct classes for the different testing-only and user-facing built-in FilterPolicy modes. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9567 Test Plan: tests updated, with no intended difference in functionality tested. No difference in test performance seen as a result of moving to string-based filter type configuration. Reviewed By: mrambacher Differential Revision: D34234694 Pulled By: pdillinger fbshipit-source-id: 8a94931a9e04c3bcca863a4f524cfd064aaf0122 |

||

|

|

479eb1aad6 |

Hide deprecated, inefficient block-based filter from public API (#9535)

Summary: This change removes the ability to configure the deprecated, inefficient block-based filter in the public API. Options that would have enabled it now use "full" (and optionally partitioned) filters. Existing block-based filters can still be read and used, and a "back door" way to build them still exists, for testing and in case of trouble. About the only way this removal would cause an issue for users is if temporary memory for filter construction greatly increases. In HISTORY.md we suggest a few possible mitigations: partitioned filters, smaller SST files, or setting reserve_table_builder_memory=true. Or users who have customized a FilterPolicy using the CreateFilter/KeyMayMatch mechanism removed in https://github.com/facebook/rocksdb/issues/9501 will have to upgrade their code. (It's long past time for people to move to the new builder/reader customization interface.) This change also introduces some internal-use-only configuration strings for testing specific filter implementations while bypassing some compatibility / intelligence logic. This is intended to hint at a path toward making FilterPolicy Customizable, but it also gives us a "back door" way to configure block-based filter. Aside: updated db_bench so that -readonly implies -use_existing_db Pull Request resolved: https://github.com/facebook/rocksdb/pull/9535 Test Plan: Unit tests updated. Specifically, * BlockBasedTableTest.BlockReadCountTest is tweaked to validate the back door configuration interface and ignoring of `use_block_based_builder`. * BlockBasedTableTest.TracingGetTest is migrated from testing block-based filter access pattern to full filter access patter, by re-ordering some things. * Options test (pretty self-explanatory) Performance test - create with `./db_bench -db=/dev/shm/rocksdb1 -bloom_bits=10 -cache_index_and_filter_blocks=1 -benchmarks=fillrandom -num=10000000 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0` with and without `-use_block_based_filter`, which creates a DB with 21 SST files in L0. Read with `./db_bench -db=/dev/shm/rocksdb1 -readonly -bloom_bits=10 -cache_index_and_filter_blocks=1 -benchmarks=readrandom -num=10000000 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -duration=30` Without -use_block_based_filter: readrandom 464 ops/sec, 689280 KB DB With -use_block_based_filter: readrandom 169 ops/sec, 690996 KB DB No consistent difference with fillrandom Reviewed By: jay-zhuang Differential Revision: D34153871 Pulled By: pdillinger fbshipit-source-id: 31f4a933c542f8f09aca47fa64aec67832a69738 |

||

|

|

68a9c186d0 |

FilterPolicy API changes for 7.0 (#9501)

Summary: * Inefficient block-based filter is no longer customizable in the public API, though (for now) can still be enabled. * Removed deprecated FilterPolicy::CreateFilter() and FilterPolicy::KeyMayMatch() * Removed `rocksdb_filterpolicy_create()` from C API * Change meaning of nullptr return from GetBuilderWithContext() from "use block-based filter" to "generate no filter in this case." This is a cleaner solution to the proposal in https://github.com/facebook/rocksdb/issues/8250. * Also, when user specifies bits_per_key < 0.5, we now round this down to "no filter" because we expect a filter with >= 80% FP rate is unlikely to be worth the CPU cost of accessing it (esp with cache_index_and_filter_blocks=1 or partition_filters=1). * bits_per_key >= 0.5 and < 1.0 is still rounded up to 1.0 (for 62% FP rate) * This also gives us some support for configuring filters from OPTIONS file as currently saved: `filter_policy=rocksdb.BuiltinBloomFilter`. Opening from such an options file will enable reading filters (an improvement) but not writing new ones. (See Customizable follow-up below.) * Also removed deprecated functions * FilterBitsBuilder::CalculateNumEntry() * FilterPolicy::GetFilterBitsBuilder() * NewExperimentalRibbonFilterPolicy() * Remove default implementations of * FilterBitsBuilder::EstimateEntriesAdded() * FilterBitsBuilder::ApproximateNumEntries() * FilterPolicy::GetBuilderWithContext() * Remove support for "filter_policy=experimental_ribbon" configuration string. * Allow "filter_policy=bloomfilter:n" without bool to discourage use of block-based filter. Some pieces for https://github.com/facebook/rocksdb/issues/9389 Likely follow-up (later PRs): * Refactoring toward FilterPolicy Customizable, so that we can generate filters with same configuration as before when configuring from options file. * Remove support for user enabling block-based filter (ignore `bool use_block_based_builder`) * Some months after this change, we could even remove read support for block-based filter, because it is not critical to DB data preservation. * Make FilterBitsBuilder::FinishV2 to avoid `using FilterBitsBuilder::Finish` mess and add support for specifying a MemoryAllocator (for cache warming) Pull Request resolved: https://github.com/facebook/rocksdb/pull/9501 Test Plan: A number of obsolete tests deleted and new tests or test cases added or updated. Reviewed By: hx235 Differential Revision: D34008011 Pulled By: pdillinger fbshipit-source-id: a39a720457c354e00d5b59166b686f7f59e392aa |

||

|

|

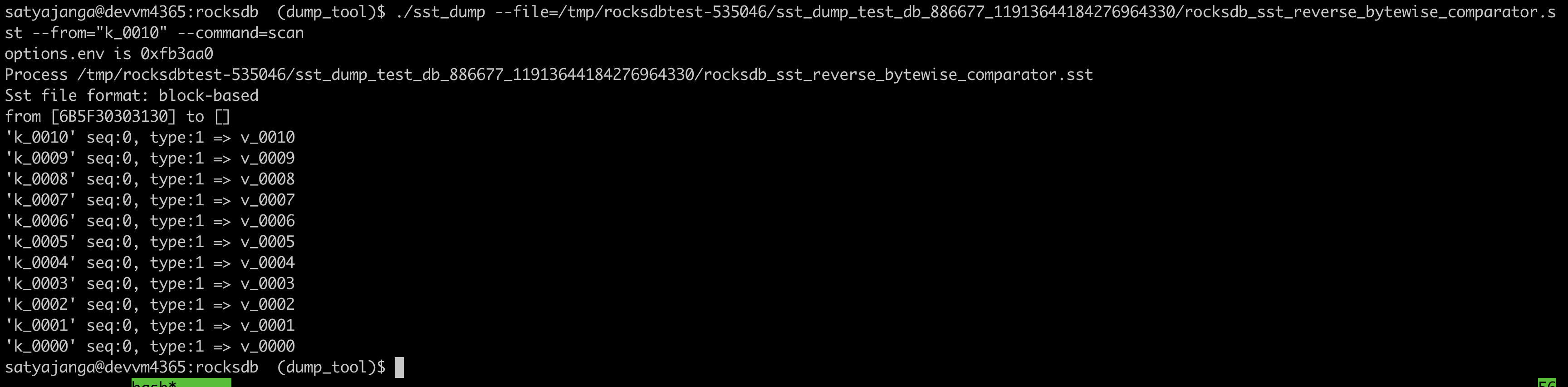

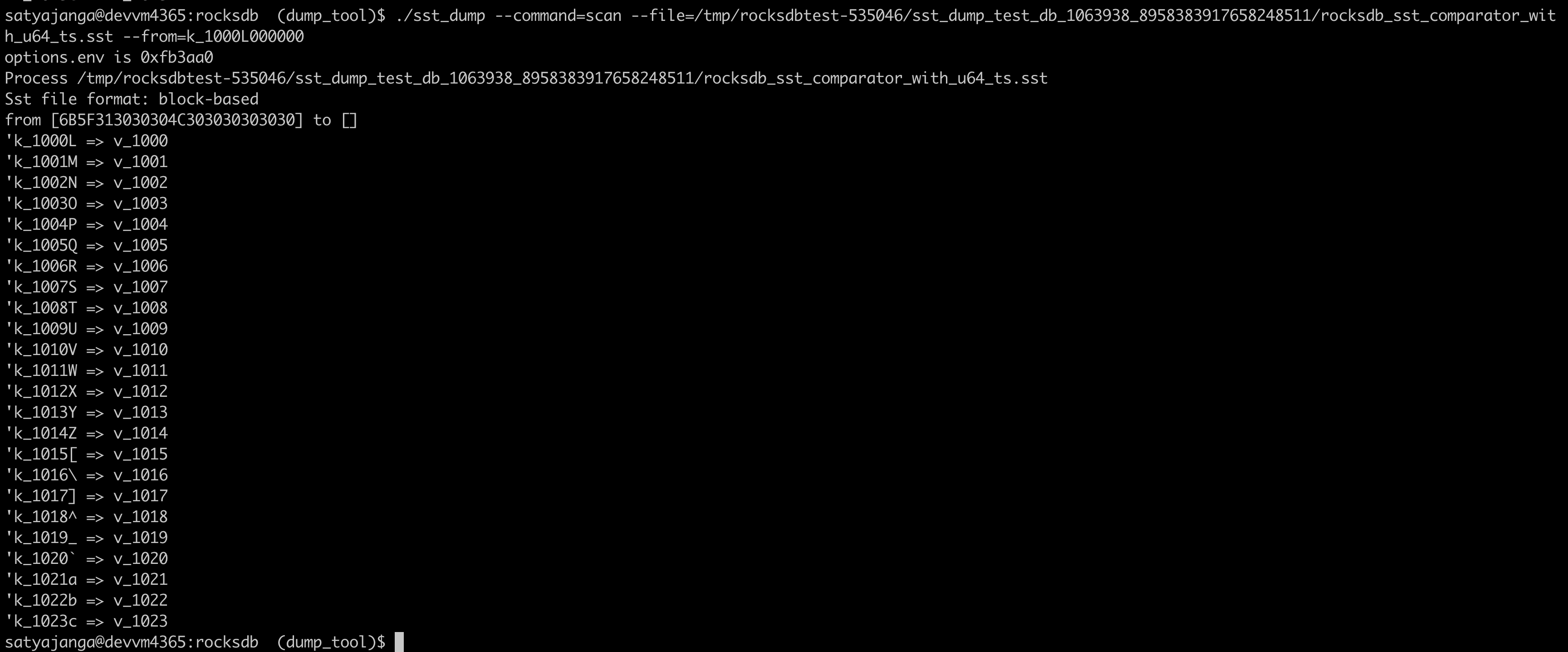

036bbab6f7 |

Use the comparator from the sst file table properties in sst_dump_tool (#9491)

Summary: We introduced a new Comparator for timestamp in user keys. In the sst_dump_tool by default we use BytewiseComparator to read sst files. This change allows us to read comparator_name from table properties in meta data block and use it to read. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9491 Test Plan: added unittests for new functionality. make check   Reviewed By: riversand963 Differential Revision: D33993614 Pulled By: satyajanga fbshipit-source-id: 4b5cf938e6d2cb3931d763bef5baccc900b8c536 |

||

|

|

920386f2b7 |

Detect (new) Bloom/Ribbon Filter construction corruption (#9342)