Summary:

This PR schedules a background thread (shared across all DB instances)

to flush info log every ten seconds. This improves debuggability in case

of RocksDB hanging since it ensures the log messages leading up to the hang

will eventually become visible in the log.

The bulk of this PR is moving monitoring/stats_dump_scheduler* to db/periodic_work_scheduler*

and making the corresponding name changes since now the scheduler handles info

log flushing, not just stats dumping.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7488

Reviewed By: riversand963

Differential Revision: D24065165

Pulled By: ajkr

fbshipit-source-id: 339c47a0ff43b79fdbd055fbd9fefbb6f9d8d3b5

Summary:

Introduce an new option options.check_flush_compaction_key_order, by default set to true, which checks key order of flush and compaction, and fail the operation if the order is violated.

Also did minor refactor hash checking code, which consolidates the hashing logic to a vlidation class, where the key ordering logic is added.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7467

Test Plan: Add unit tests to validate the check can catch reordering in flush and compaction, and can be properly disabled.

Reviewed By: riversand963

Differential Revision: D24010683

fbshipit-source-id: 8dd6292d2cda8006054e9ded7cfa4bf405f0527c

Summary:

Implement a parsing tool io_tracer_parser that takes IO trace file (binary file) with command line argument --io_trace_file and output file with --output_file and dumps the IO trace records in outputfile in human readable form.

Also added unit test cases that generates IO trace records and calls io_tracer_parse to parse those records.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7333

Test Plan:

make check -j64,

Add unit test cases.

Reviewed By: anand1976

Differential Revision: D23772360

Pulled By: akankshamahajan15

fbshipit-source-id: 9c20519c189362e6663352d08863326f3e496271

Summary:

While rocksdb can compile on both macOS and Linux with Buck, it couldn't be

compiled on Windows. The only way to compile it on Windows was with the CMake

build.

To keep the multi-platform complexity low, I've simply included all the Windows

bits in the TARGETS file, and added large #if blocks when not on Windows, the

same was done on the posix specific files.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7406

Test Plan:

On my devserver:

buck test //rocksdb/...

On Windows:

buck build mode/win //rocksdb/src:rocksdb_lib

Reviewed By: pdillinger

Differential Revision: D23874358

Pulled By: xavierd

fbshipit-source-id: 8768b5d16d7e8f44b5ca1e2483881ca4b24bffbe

Summary:

This PR merges the functionality of making the ColumnFamilyOptions, TableFactory, and DBOptions into Configurable into a single PR, resolving any merge conflicts

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5753

Reviewed By: ajkr

Differential Revision: D23385030

Pulled By: zhichao-cao

fbshipit-source-id: 8b977a7731556230b9b8c5a081b98e49ee4f160a

Summary:

as title

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7347

Test Plan: unit tests included

Reviewed By: jay-zhuang

Differential Revision: D23592552

Pulled By: pdillinger

fbshipit-source-id: 1c3571b6f42bfd0cfd723ff49d01fbc02a1be45b

Summary:

A new file interface `SupportPrefetch()` is added. When the user overrides it to `false`, an internal prefetch buffer will be used for readahead. Useful for non-directIO but FS doesn't have readahead support.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7312

Reviewed By: anand1976

Differential Revision: D23329847

Pulled By: jay-zhuang

fbshipit-source-id: 71cd4ce6f4a820840294e4e6aec111ab76175527

Summary:

The patch adds a class called `BlobFileBuilder` that can be used to build

and cut blob files in background jobs (flushes/compactions). The class

enforces a value size threshold (`min_blob_size`; smaller blobs will be inlined

in the LSM tree itself), and supports specifying a blob file size limit (`blob_file_size`),

as well as compression (`blob_compression_type`) and checksums for blob files.

It also keeps track of the generated blob files and their associated `BlobFileAddition`

metadata, which can be applied as part of the background job's `VersionEdit`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7306

Test Plan: `make check`

Reviewed By: riversand963

Differential Revision: D23298817

Pulled By: ltamasi

fbshipit-source-id: 38f35d81dab1ba81f15236240612ec173d7f21b5

Summary:

Have a global StatsDumpScheduler for all DB instance stats dumping, including `DumpStats()` and `PersistStats()`. Before this, there're 2 dedicate threads for every DB instance, one for DumpStats() one for PersistStats(), which could create lots of threads if there're hundreds DB instances.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7223

Reviewed By: riversand963

Differential Revision: D23056737

Pulled By: jay-zhuang

fbshipit-source-id: 0faa2311142a73433ebb3317361db7cbf43faeba

Summary:

We're going to support more locking protocols such as range lock in transaction.

However, in current design, `TransactionBase` has a member `tracked_keys` which assumes that point lock (lock a single key) is used, and is used in snapshot checking (isolation protocol). When using range lock, we may use read committed instead of snapshot checking as the isolation protocol.

The most significant usage scenarios of `tracked_keys` are:

1. pessimistic transaction uses it to track the locked keys, and unlock these keys when commit or rollback.

2. optimistic transaction does not lock keys upfront, it only tracks the lock intentions in tracked_keys, and do write conflict checking when commit.

3. each `SavePoint` tracks the keys that are locked since the `SavePoint`, `RollbackToSavePoint` or `PopSavePoint` relies on both the tracked keys in `SavePoint`s and `tracked_keys`.

Based on these scenarios, if we can abstract out a `LockTracker` interface to hold a set of tracked locks (can be keys or key ranges), and have methods that can be composed together to implement the scenarios, then `tracked_keys` can be an internal data structure of one implementation of `LockTracker`. See `utilities/transactions/lock/lock_tracker.h` for the detailed interface design, and `utilities/transactions/lock/point_lock_tracker.cc` for the implementation.

In the future, a `RangeLockTracker` can be implemented to track range locks without affecting other components.

After this PR, a clean interface for lock manager should be possible, and then ideally, we can have pluggable locking protocols.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7013

Test Plan: Run `transaction_test` and `optimistic_transaction_test`.

Reviewed By: ajkr

Differential Revision: D22163706

Pulled By: cheng-chang

fbshipit-source-id: f2860577b5334e31dd2994f5bc6d7c40d502b1b4

Summary:

`WalAddition`, `WalDeletion` are defined in `wal_version.h` and used in `VersionEdit`.

`WalAddition` is used to represent events of creating a new WAL (no size, just log number), or closing a WAL (with size).

`WalDeletion` is used to represent events of deleting or archiving a WAL, it means the WAL is no longer alive (won't be replayed during recovery).

`WalSet` is the set of alive WALs kept in `VersionSet`.

1. Why use `WalDeletion` instead of relying on `MinLogNumber` to identify outdated WALs

On recovery, we can compute `MinLogNumber()` based on the log numbers kept in MANIFEST, any log with number < MinLogNumber can be ignored. So it seems that we don't need to persist `WalDeletion` to MANIFEST, since we can ignore the WALs based on MinLogNumber.

But the `MinLogNumber()` is actually a lower bound, it does not exactly mean that logs starting from MinLogNumber must exist. This is because in a corner case, when a column family is empty and never flushed, its log number is set to the largest log number, but not persisted in MANIFEST. So let's say there are 2 column families, when creating the DB, the first WAL has log number 1, so it's persisted to MANIFEST for both column families. Then CF 0 is empty and never flushed, CF 1 is updated and flushed, so a new WAL with log number 2 is created and persisted to MANIFEST for CF 1. But CF 0's log number in MANIFEST is still 1. So on recovery, MinLogNumber is 1, but since log 1 only contains data for CF 1, and CF 1 is flushed, log 1 might have already been deleted from disk.

We can make `MinLogNumber()` be the exactly minimum log number that must exist, by persisting the most recent log number for empty column families that are not flushed. But if there are N such column families, then every time a new WAL is created, we need to add N records to MANIFEST.

In current design, a record is persisted to MANIFEST only when WAL is created, closed, or deleted/archived, so the number of WAL related records are bounded to 3x number of WALs.

2. Why keep `WalSet` in `VersionSet` instead of applying the `VersionEdit`s to `VersionStorageInfo`

`VersionEdit`s are originally designed to track the addition and deletion of SST files. The SST files are related to column families, each column family has a list of `Version`s, and each `Version` keeps the set of active SST files in `VersionStorageInfo`.

But WALs are a concept of DB, they are not bounded to specific column families. So logically it does not make sense to store WALs in a column family's `Version`s.

Also, `Version`'s purpose is to keep reference to SST / blob files, so that they are not deleted until there is no version referencing them. But a WAL is deleted regardless of version references.

So we keep the WALs in `VersionSet` for the purpose of writing out the DB state's snapshot when creating new MANIFESTs.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7164

Test Plan:

make version_edit_test && ./version_edit_test

make wal_edit_test && ./wal_edit_test

Reviewed By: ltamasi

Differential Revision: D22677936

Pulled By: cheng-chang

fbshipit-source-id: 5a3b6890140e572ffd79eb37e6e4c3c32361a859

Summary:

SST Partitioner interface that allows to split SST files during compactions.

It basically instruct compaction to create a new file when needed. When one is using well defined prefixes and prefixed way of defining tables it is good to define also partitioning so that promotion of some SST file does not cover huge key space on next level (worst case complete space).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6957

Reviewed By: ajkr

Differential Revision: D22461239

fbshipit-source-id: 9ce07bba08b3ba89c2d45630520368f704d1316e

Summary:

1. Add the wrapper classes FileSystemTracingWrapper, FSSequentialFileTracingWrapper, FSRandomAccessFileTracingWrapper, FSWritableFileTracingWrapper, FSRandomRWFileTracingWrapper that forward the calls to underlying storage system and then pass the file operation information to IOTracer. IOTracer dumps the record in binary format for tracing.

2. Add the wrapper classes FileSystemPtr, FSSequentialFilePtr, FSRandomAccessFilePtr, FSWritableFilePtr and FSRandomRWFilePtr that overload operator-> and return ptr to underlying storage system or Tracing wrapper class based on enabling/disabling of IO tracing. These classes are added to bypass Tracing Wrapper classes when we disable tracing.

3. Add enums in trace.h that distinguish which options need to be added for different file operations(Read, close, write etc) as part of tracing record.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7002

Test Plan: make check -j64

Reviewed By: anand1976

Differential Revision: D22127897

Pulled By: akankshamahajan15

fbshipit-source-id: 74cff58ce5661c9a3832dfaa52483f3b2d8565e0

Summary:

Cleans up some of the dependencies on test code in the Makefile while building tools:

- Moves the test::RandomString, DBBaseTest::RandomString into Random

- Moves the test::RandomHumanReadableString into Random

- Moves the DestroyDir method into file_utils

- Moves the SetupSyncPointsToMockDirectIO into sync_point.

- Moves the FaultInjection Env and FS classes under env

These changes allow all of the tools to build without dependencies on test_util, thereby simplifying the build dependencies. By moving the FaultInjection code, the dependency in db_stress on different libraries for debug vs release was eliminated.

Tested both release and debug builds via Make and CMake for both static and shared libraries.

More work remains to clean up how the tools are built and remove some unnecessary dependencies. There is also more work that should be done to get the Makefile and CMake to align in their builds -- what is in the libraries and the sizes of the executables are different.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7097

Reviewed By: riversand963

Differential Revision: D22463160

Pulled By: pdillinger

fbshipit-source-id: e19462b53324ab3f0b7c72459dbc73165cc382b2

Summary:

Change the linking of tests/tools to be against a library rather than a list of objects. This change substantially reduces the size of the objects produced.

peterd clean repo size: 264M

Before this change, with make all: 40G

After this change, with make all: 28G

With make LIB_MODE=shared all: 7.0G

The list of TESTS was changed from being hard-coded to generated from the test sources variable. Note that there are some test sources that are not built as tests (though the set of tests is identical to the previous version).

Added OBJ_DIR option to Makefile to allow objects to be placed in an alternative location. By default, OBJ_DIR is the same as before ("./").

This change is a precursor to being able to build/run the tests/tools linked against static libraries. Additionally, it should be possible to clean up and merge some of the rules for building tests and the like if so desired.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6660

Reviewed By: riversand963

Differential Revision: D22244463

Pulled By: pdillinger

fbshipit-source-id: db9c6341d81ed62c2270374f4ede02fb9604c754

Summary:

`BackupableDBOptions::new_naming_for_backup_files` is added. This option is false by default. When it is true, backup table filenames under directory shared_checksum are of the form `<file_number>_<crc32c>_<db_session_id>.sst`.

Note that when this option is true, it comes into effect only when both `share_files_with_checksum` and `share_table_files` are true.

Three new test cases are added.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6997

Test Plan: Passed make check.

Reviewed By: ajkr

Differential Revision: D22098895

Pulled By: gg814

fbshipit-source-id: a1d9145e7fe562d71cde7ac995e17cb24fd42e76

Summary:

1. As part of IOTracing project, Add a class IOTracer,

IOTraceReader and IOTracerWriter that writes the file operations

information in a binary file. IOTrace Record contains record information

and right now it contains access_timestamp, file_operation, file_name,

io_status, len, offset and later other options will be added when file

system APIs will be call IOTracer.

2. Add few unit test cases that verify that reading and writing to a IO

Trace file is working properly and before start trace and after ending

trace nothing is added to the binary file.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6958

Test Plan:

1. make check -j64

2. New testcases for IOTracer.

Reviewed By: anand1976

Differential Revision: D21943375

Pulled By: akankshamahajan15

fbshipit-source-id: 3532204e2a3eab0104bf411ab142e3fdd4fbce54

Summary:

This reverts commit 8d87e9cea1.

Based on offline discussions, it's too early to upgrade to gtest 1.10, as it prevents some developers from using an older version of gtest to integrate to some other systems. Revert it for now.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6923

Reviewed By: pdillinger

Differential Revision: D21864799

fbshipit-source-id: d0726b1ff649fc911b9378f1763316200bd363fc

Summary:

... so that we have freedom to upgrade it (see https://github.com/facebook/rocksdb/issues/6808).

As a side benefit, gtest will no longer be linked into main library in

buck build.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6858

Test Plan: fb internal build & link

Reviewed By: riversand963

Differential Revision: D21652061

Pulled By: pdillinger

fbshipit-source-id: 6018104af944debde576b5beda6c134e737acedb

Summary:

Currently, in direct IO mode, `MultiGet` retrieves the data blocks one by one instead of in parallel, see `BlockBasedTable::RetrieveMultipleBlocks`.

Since direct IO is supported in `RandomAccessFileReader::MultiRead` in https://github.com/facebook/rocksdb/pull/6446, this PR applies `MultiRead` to `MultiGet` so that the data blocks can be retrieved in parallel.

Also, in direct IO mode and when data blocks are compressed and need to uncompressed, this PR only allocates one continuous aligned buffer to hold the data blocks, and then directly uncompress the blocks to insert into block cache, there is no longer intermediate copies to scratch buffers.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6815

Test Plan:

1. added a new unit test `BlockBasedTableReaderTest::MultiGet`.

2. existing unit tests and stress tests contain tests against `MultiGet` in direct IO mode.

Reviewed By: anand1976

Differential Revision: D21426347

Pulled By: cheng-chang

fbshipit-source-id: b8446ae0e74152444ef9111e97f8e402ac31b24f

Summary:

Added functions for parsing, serializing, and comparing elements to OptionTypeInfo. These functions allow all of the special cases that could not be handled directly in the map of OptionTypeInfo to be moved into the map. Using these functions, every type can be handled via the map rather than special cased.

By adding these functions, the code for handling options can become more standardized (fewer special cases) and (eventually) handled completely by common classes.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6422

Test Plan: pass make check

Reviewed By: siying

Differential Revision: D21269005

Pulled By: zhichao-cao

fbshipit-source-id: 9ba71c721a38ebf9ee88259d60bd81b3282b9077

Summary:

In https://github.com/facebook/rocksdb/pull/6455, we modified the interface of `RandomAccessFileReader::Read` to be able to get rid of memcpy in direct IO mode.

This PR applies the new interface to `BlockFetcher` when reading blocks from SST files in direct IO mode.

Without this PR, in direct IO mode, when fetching and uncompressing compressed blocks, `BlockFetcher` will first copy the raw compressed block into `BlockFetcher::compressed_buf_` or `BlockFetcher::stack_buf_` inside `RandomAccessFileReader::Read` depending on the block size. then during uncompressing, it will copy the uncompressed block into `BlockFetcher::heap_buf_`.

In this PR, we get rid of the first memcpy and directly uncompress the block from `direct_io_buf_` to `heap_buf_`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6689

Test Plan: A new unit test `block_fetcher_test` is added.

Reviewed By: anand1976

Differential Revision: D21006729

Pulled By: cheng-chang

fbshipit-source-id: 2370b92c24075692423b81277415feb2aed5d980

Summary:

The methods in convenience.h are used to compare/convert objects to/from strings. There is a mishmash of parameters in use here with more needed in the future. This PR replaces those parameters with a single structure.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6389

Reviewed By: siying

Differential Revision: D21163707

Pulled By: zhichao-cao

fbshipit-source-id: f807b4cc7e2b0af3871536b69546b2604dfa81bd

Summary:

Towards making compaction logic compatible with user timestamp.

When computing boundaries and overlapping ranges for inputs of compaction, We need to compare SSTs by user key without timestamp.

Test plan (devserver):

```

make check

```

Several individual tests:

```

./version_set_test --gtest_filter=VersionStorageInfoTimestampTest.GetOverlappingInputs

./db_with_timestamp_compaction_test

./db_with_timestamp_basic_test

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6645

Reviewed By: ltamasi

Differential Revision: D20960012

Pulled By: riversand963

fbshipit-source-id: ad377fa9eb481bf7a8a3e1824aaade48cdc653a4

Summary:

New memory technologies are being developed by various hardware vendors (Intel DCPMM is one such technology currently available). These new memory types require different libraries for allocation and management (such as PMDK and memkind). The high capacities available make it possible to provision large caches (up to several TBs in size), beyond what is achievable with DRAM.

The new allocator provided in this PR uses the memkind library to allocate memory on different media.

**Performance**

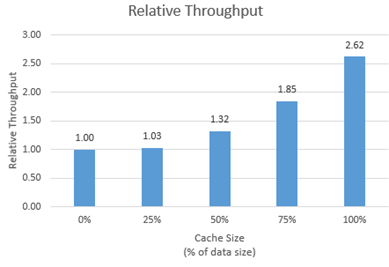

We tested the new allocator using db_bench.

- For each test, we vary the size of the block cache (relative to the size of the uncompressed data in the database).

- The database is filled sequentially. Throughput is then measured with a readrandom benchmark.

- We use a uniform distribution as a worst-case scenario.

The plot shows throughput (ops/s) relative to a configuration with no block cache and default allocator.

For all tests, p99 latency is below 500 us.

**Changes**

- Add MemkindKmemAllocator

- Add --use_cache_memkind_kmem_allocator db_bench option (to create an LRU block cache with the new allocator)

- Add detection of memkind library with KMEM DAX support

- Add test for MemkindKmemAllocator

**Minimum Requirements**

- kernel 5.3.12

- ndctl v67 - https://github.com/pmem/ndctl

- memkind v1.10.0 - https://github.com/memkind/memkind

**Memory Configuration**

The allocator uses the MEMKIND_DAX_KMEM memory kind. Follow the instructions on[ memkind’s GitHub page](https://github.com/memkind/memkind) to set up NVDIMM memory accordingly.

Note on memory allocation with NVDIMM memory exposed as system memory.

- The MemkindKmemAllocator will only allocate from NVDIMM memory (using memkind_malloc with MEMKIND_DAX_KMEM kind).

- The default allocator is not restricted to RAM by default. Based on NUMA node latency, the kernel should allocate from local RAM preferentially, but it’s a kernel decision. numactl --preferred/--membind can be used to allocate preferentially/exclusively from the local RAM node.

**Usage**

When creating an LRU cache, pass a MemkindKmemAllocator object as argument.

For example (replace capacity with the desired value in bytes):

```

#include "rocksdb/cache.h"

#include "memory/memkind_kmem_allocator.h"

NewLRUCache(

capacity /*size_t*/,

6 /*cache_numshardbits*/,

false /*strict_capacity_limit*/,

false /*cache_high_pri_pool_ratio*/,

std::make_shared<MemkindKmemAllocator>());

```

Refer to [RocksDB’s block cache documentation](https://github.com/facebook/rocksdb/wiki/Block-Cache) to assign the LRU cache as block cache for a database.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6214

Reviewed By: cheng-chang

Differential Revision: D19292435

fbshipit-source-id: 7202f47b769e7722b539c86c2ffd669f64d7b4e1

Summary:

Although there are tests related to locking in transaction_test, this new test directly tests against TransactionLockMgr.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6599

Test Plan: make transaction_lock_mgr_test && ./transaction_lock_mgr_test

Reviewed By: lth

Differential Revision: D20673749

Pulled By: cheng-chang

fbshipit-source-id: 1fa4a13218e68d785f5a99924556751a8c5c0f31

Summary:

Adding a simple timer support to schedule work at a fixed time.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6543

Test Plan: TODO: clean up the unit tests, and make them better.

Reviewed By: siying

Differential Revision: D20465390

Pulled By: sagar0

fbshipit-source-id: cba143f70b6339863e1d0f8b8bf92e51c2b3d678

Summary:

This PR adds support for pipelined & parallel compression optimization for `BlockBasedTableBuilder`. This optimization makes block building, block compression and block appending a pipeline, and uses multiple threads to accelerate block compression. Users can set `CompressionOptions::parallel_threads` greater than 1 to enable compression parallelism.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6262

Reviewed By: ajkr

Differential Revision: D20651306

fbshipit-source-id: 62125590a9c15b6d9071def9dc72589c1696a4cb

Summary:

The patch adds a couple of classes to represent metadata about

blob files: `SharedBlobFileMetaData` contains the information elements

that are immutable (once the blob file is closed), e.g. blob file number,

total number and size of blob files, checksum method/value, while

`BlobFileMetaData` contains attributes that can vary across versions like

the amount of garbage in the file. There is a single `SharedBlobFileMetaData`

for each blob file, which is jointly owned by the `BlobFileMetaData` objects

that point to it; `BlobFileMetaData` objects, in turn, are owned by `Version`s

and can also be shared if the (immutable _and_ mutable) state of the blob file

is the same in two versions.

In addition, the patch adds the blob file metadata to `VersionStorageInfo`, and extends

`VersionBuilder` so that it can apply blob file related `VersionEdit`s (i.e. those

containing `BlobFileAddition`s and/or `BlobFileGarbage`), and save blob file metadata

to a new `VersionStorageInfo`. Consistency checks are also extended to ensure

that table files point to blob files that are part of the `Version`, and that all blob files

that are part of any given `Version` have at least some _non_-garbage data in them.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6597

Test Plan: `make check`

Reviewed By: riversand963

Differential Revision: D20656803

Pulled By: ltamasi

fbshipit-source-id: f1f74d135045b3b42d0146f03ee576ef0a4bfd80

Summary:

There are situations when RocksDB tries to recover, but the db is in an inconsistent state due to SST files referenced in the MANIFEST being missing. In this case, previous RocksDB will just fail the recovery and return a non-ok status.

This PR enables another possibility. During recovery, RocksDB checks possible MANIFEST files, and try to recover to the most recent state without missing table file. `VersionSet::Recover()` applies version edits incrementally and "materializes" a version only when this version does not reference any missing table file. After processing the entire MANIFEST, the version created last will be the latest version.

`DBImpl::Recover()` calls `VersionSet::Recover()`. Afterwards, WAL replay will *not* be performed.

To use this capability, set `options.best_efforts_recovery = true` when opening the db. Best-efforts recovery is currently incompatible with atomic flush.

Test plan (on devserver):

```

$make check

$COMPILE_WITH_ASAN=1 make all && make check

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6334

Reviewed By: anand1976

Differential Revision: D19778960

Pulled By: riversand963

fbshipit-source-id: c27ea80f29bc952e7d3311ecf5ee9c54393b40a8

Summary:

By supporting direct IO in RandomAccessFileReader::MultiRead, the benefits of parallel IO (IO uring) and direct IO can be combined.

In direct IO mode, read requests are aligned and merged together before being issued to RandomAccessFile::MultiRead, so blocks in the original requests might share the same underlying buffer, the shared buffers are returned in `aligned_bufs`, which is a new parameter of the `MultiRead` API.

For example, suppose alignment requirement for direct IO is 4KB, one request is (offset: 1KB, len: 1KB), another request is (offset: 3KB, len: 1KB), then since they all belong to page (offset: 0, len: 4KB), `MultiRead` only reads the page with direct IO into a buffer on heap, and returns 2 Slices referencing regions in that same buffer. See `random_access_file_reader_test.cc` for more examples.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6446

Test Plan: Added a new test `random_access_file_reader_test.cc`.

Reviewed By: anand1976

Differential Revision: D20097518

Pulled By: cheng-chang

fbshipit-source-id: ca48a8faf9c3af146465c102ef6b266a363e78d1

Summary:

Right now block based table iterator is used as both of iterating data for block based table, and for the index iterator for partitioend index. This was initially convenient for introducing a new iterator and block type for new index format, while reducing code change. However, these two usage doesn't go with each other very well. For example, Prev() is never called for partitioned index iterator, and some other complexity is maintained in block based iterators, which is not needed for index iterator but maintainers will always need to reason about it. Furthermore, the template usage is not following Google C++ Style which we are following, and makes a large chunk of code tangled together. This commit separate the two iterators. Right now, here is what it is done:

1. Copy the block based iterator code into partitioned index iterator, and de-template them.

2. Remove some code not needed for partitioned index. The upper bound check and tricks are removed. We never tested performance for those tricks when partitioned index is enabled in the first place. It's unlikelyl to generate performance regression, as creating new partitioned index block is much rarer than data blocks.

3. Separate out the prefetch logic to a helper class and both classes call them.

This commit will enable future follow-ups. One direction is that we might separate index iterator interface for data blocks and index blocks, as they are quite different.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6531

Test Plan: build using make and cmake. And build release

Differential Revision: D20473108

fbshipit-source-id: e48011783b339a4257c204cc07507b171b834b0f

Summary:

In some of the test, db_basic_test may cause time out due to its long running time. Separate the timestamp related test from db_basic_test to avoid the potential issue.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6516

Test Plan: pass make asan_check

Differential Revision: D20423922

Pulled By: zhichao-cao

fbshipit-source-id: d6306f89a8de55b07bf57233e4554c09ef1fe23a

Summary:

block_based_table_reader.cc is a giant file, which makes it hard for users to navigate the code. Divide the files to multiple files.

Some class templates cannot be moved to .cc file. They are moved to .h files. It is still better than including them all in block_based_table_reader.cc.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6527

Test Plan: "make all check" and "make release". Also build using cmake.

Differential Revision: D20428455

fbshipit-source-id: ca713c698469f07f35bc0c271358c0874ed4eb28

Summary:

In Linux, when reopening DB with many SST files, profiling shows that 100% system cpu time spent for a couple of seconds for `GetLogicalBufferSize`. This slows down MyRocks' recovery time when site is down.

This PR introduces two new APIs:

1. `Env::RegisterDbPaths` and `Env::UnregisterDbPaths` lets `DB` tell the env when it starts or stops using its database directories . The `PosixFileSystem` takes this opportunity to set up a cache from database directories to the corresponding logical block sizes.

2. `LogicalBlockSizeCache` is defined only for OS_LINUX to cache the logical block sizes.

Other modifications:

1. rename `logical buffer size` to `logical block size` to be consistent with Linux terms.

2. declare `GetLogicalBlockSize` in `PosixHelper` to expose it to `PosixFileSystem`.

3. change the functions `IOError` and `IOStatus` in `env/io_posix.h` to have external linkage since they are used in other translation units too.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6457

Test Plan:

1. A new unit test is added for `LogicalBlockSizeCache` in `env/io_posix_test.cc`.

2. A new integration test is added for `DB` operations related to the cache in `db/db_logical_block_size_cache_test.cc`.

`make check`

Differential Revision: D20131243

Pulled By: cheng-chang

fbshipit-source-id: 3077c50f8065c0bffb544d8f49fb10bba9408d04

Summary:

It's never too soon to refactor something. The patch splits the recently

introduced (`VersionEdit` related) `BlobFileState` into two classes

`BlobFileAddition` and `BlobFileGarbage`. The idea is that once blob files

are closed, they are immutable, and the only thing that changes is the

amount of garbage in them. In the new design, `BlobFileAddition` contains

the immutable attributes (currently, the count and total size of all blobs, checksum

method, and checksum value), while `BlobFileGarbage` contains the mutable

GC-related information elements (count and total size of garbage blobs). This is a

better fit for the GC logic and is more consistent with how SST files are handled.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6502

Test Plan: `make check`

Differential Revision: D20348352

Pulled By: ltamasi

fbshipit-source-id: ff93f0121e80ab15e0e0a6525ba0d6af16a0e008

Summary:

In the current code base, we can use FaultInjectionTestEnv to simulate the env issue such as file write/read errors, which are used in most of the test. The PR https://github.com/facebook/rocksdb/issues/5761 introduce the File System as a new Env API. This PR implement the FaultInjectionTestFS, which can be used to simulate when File System has issues such as IO error. user can specify any IOStatus error as input, such that FS corresponding actions will return certain error to the caller.

A set of ErrorHandlerFSTests are introduced for testing

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6414

Test Plan: pass make asan_check, pass error_handler_fs_test.

Differential Revision: D20252421

Pulled By: zhichao-cao

fbshipit-source-id: e922038f8ce7e6d1da329fd0bba7283c4b779a21

Summary:

BlobDB currently does not keep track of blob files: no records are written to

the manifest when a blob file is added or removed, and upon opening a database,

the list of blob files is populated simply based on the contents of the blob directory.

This means that lost blob files cannot be detected at the moment. We plan to solve

this issue by making blob files a part of `Version`; as a first step, this patch makes

it possible to store information about blob files in `VersionEdit`. Currently, this information

includes blob file number, total number and size of all blobs, and total number and size

of garbage blobs. However, the format is extensible: new fields can be added in

both a forward compatible and a forward incompatible manner if needed (similarly

to `kNewFile4`).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6416

Test Plan: `make check`

Differential Revision: D19894234

Pulled By: ltamasi

fbshipit-source-id: f9753e1f2aedf6dadb70c09b345207cb9c58c329

Summary:

Add a utility class `Defer` to defer the execution of a function until the Defer object goes out of scope.

Used in VersionSet:: ProcessManifestWrites as an example.

The inline comments for class `Defer` have more details.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6382

Test Plan: `make defer_test version_set_test && ./defer_test && ./version_set_test`

Differential Revision: D19797538

Pulled By: cheng-chang

fbshipit-source-id: b1a9b7306e4fd4f48ec2ab55783caa561a315f0f

Summary:

In the current code base, RocksDB generate the checksum for each block and verify the checksum at usage. Current PR enable SST file checksum. After a SST file is generated by Flush or Compaction, RocksDB generate the SST file checksum and store the checksum value and checksum method name in the vs_info and MANIFEST as part for the FileMetadata.

Added the enable_sst_file_checksum to Options to enable or disable file checksum. Added sst_file_checksum to Options such that user can plugin their own SST file checksum calculate method via overriding the SstFileChecksum class. The checksum information inlcuding uint32_t checksum value and a checksum name (string). A new tool is added to LDB such that user can dump out a list of file checksum information from MANIFEST. If user enables the file checksum but does not provide the sst_file_checksum instance, RocksDB will use the default crc32checksum implemented in table/sst_file_checksum_crc32c.h

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6216

Test Plan: Added the testing case in table_test and ldb_cmd_test to verify checksum is correct in different level. Pass make asan_check.

Differential Revision: D19171461

Pulled By: zhichao-cao

fbshipit-source-id: b2e53479eefc5bb0437189eaa1941670e5ba8b87

Summary:

It's logically correct for PinnableSlice to support move semantics to transfer ownership of the pinned memory region. This PR adds both move constructor and move assignment to PinnableSlice.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6374

Test Plan:

A set of unit tests for the move semantics are added in slice_test.

So `make slice_test && ./slice_test`.

Differential Revision: D19739254

Pulled By: cheng-chang

fbshipit-source-id: f898bd811bb05b2d87384ec58b645e9915e8e0b1

Summary:

The current Env API encompasses both storage/file operations, as well as OS related operations. Most of the APIs return a Status, which does not have enough metadata about an error, such as whether its retry-able or not, scope (i.e fault domain) of the error etc., that may be required in order to properly handle a storage error. The file APIs also do not provide enough control over the IO SLA, such as timeout, prioritization, hinting about placement and redundancy etc.

This PR separates out the file/storage APIs from Env into a new FileSystem class. The APIs are updated to return an IOStatus with metadata about the error, as well as to take an IOOptions structure as input in order to allow more control over the IO.

The user can set both ```options.env``` and ```options.file_system``` to specify that RocksDB should use the former for OS related operations and the latter for storage operations. Internally, a ```CompositeEnvWrapper``` has been introduced that inherits from ```Env``` and redirects individual methods to either an ```Env``` implementation or the ```FileSystem``` as appropriate. When options are sanitized during ```DB::Open```, ```options.env``` is replaced with a newly allocated ```CompositeEnvWrapper``` instance if both env and file_system have been specified. This way, the rest of the RocksDB code can continue to function as before.

This PR also ports PosixEnv to the new API by splitting it into two - PosixEnv and PosixFileSystem. PosixEnv is defined as a sub-class of CompositeEnvWrapper, and threading/time functions are overridden with Posix specific implementations in order to avoid an extra level of indirection.

The ```CompositeEnvWrapper``` translates ```IOStatus``` return code to ```Status```, and sets the severity to ```kSoftError``` if the io_status is retryable. The error handling code in RocksDB can then recover the DB automatically.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5761

Differential Revision: D18868376

Pulled By: anand1976

fbshipit-source-id: 39efe18a162ea746fabac6360ff529baba48486f

Summary:

And clean up related code, especially in stress test.

(More clean up of db_stress_test_base.cc coming after this.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6154

Test Plan: make check, make blackbox_crash_test for a bit

Differential Revision: D18938180

Pulled By: pdillinger

fbshipit-source-id: 524d27621b8dbb25f6dff40f1081e7c00630357e

Summary:

db_stress_tool.cc now is a giant file. In order to main it easier to improve and maintain, break it down to multiple source files.

Most classes are turned into their own files. Separate .h and .cc files are created for gflag definiations. Another .h and .cc files are created for some common functions. Some test execution logic that is only loosely related to class StressTest is moved to db_stress_driver.h and db_stress_driver.cc. All the files are located under db_stress_tool/. The directory name is created as such because if we end it with either stress or test, .gitignore will ignore any file under it and makes it prone to issues in developements.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6134

Test Plan: Build under GCC7 with and without LITE on using GNU Make. Build with GCC 4.8. Build with cmake with -DWITH_TOOL=1

Differential Revision: D18876064

fbshipit-source-id: b25d0a7451840f31ac0f5ebb0068785f783fdf7d

Summary:

The parts that are used to implement FilterPolicy /

NewBloomFilterPolicy and not used other than for the block-based table

should be consolidated under table/block_based/filter_policy*.

This change is step 2 of 2:

mv util/bloom.cc table/block_based/filter_policy.cc

This gets its own PR so that git has the best chance of following the

rename for blame purposes. Note that low-level shared implementation

details of Bloom filters remain in util/bloom_impl.h, and

util/bloom_test.cc remains where it is for now.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5966

Test Plan: make check

Differential Revision: D18124930

Pulled By: pdillinger

fbshipit-source-id: 823bc09025b3395f092ef46a46aa5ba92a914d84