Summary:

RocksDB Makefile was assuming existence of 'python' command,

which is not present in CentOS 8. We avoid using 'python' if 'python3' is available.

Also added fancy logic to format-diff.sh to make clang-format-diff.py for Python2 work even with Python3 only (as some CentOS 8 FB machines come equipped).

Also, now use just 'python3' for PYTHON if not found so that an informative

"command not found" error will result rather than something weird.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6883

Test Plan: manually tried some variants, 'make check' on a fresh CentOS 8 machine without 'python' executable or Python2 but with clang-format-diff.py for Python2.

Reviewed By: gg814

Differential Revision: D21767029

Pulled By: pdillinger

fbshipit-source-id: 54761b376b140a3922407bdc462f3572f461d0e9

Summary:

* Print stack trace on status checked failure

* Make folly_synchronization_distributed_mutex_test a parallel test

* Disable ldb_test.py and rocksdb_dump_test.sh with

ASSERT_STATUS_CHECKED (broken)

* Fix shadow warning in random_access_file_reader.h reported by gcc

4.8.5 (ROCKSDB_NO_FBCODE), also https://github.com/facebook/rocksdb/issues/6866

* Work around compiler bug on max_align_t for gcc < 4.9

* Remove an apparently wrong comment in status.h

* Use check_some in Travis config (for proper diagnostic output)

* Fix ignored Status in loop in options_helper.cc

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6871

Test Plan: manual, CI

Reviewed By: ajkr

Differential Revision: D21706619

Pulled By: pdillinger

fbshipit-source-id: daf6364173d6689904eb394461a69a11f5bee2cb

Summary:

Fixed some option handling code that recently broke the

ASSERT_STATUS_CHECKED build for options_test.

Added all other existing tests that pass under ASSERT_STATUS_CHECKED to

the whitelist.

Added a Travis configuration to run all whitelisted tests with

ASSERT_STATUS_CHECKED. (Someday we might enable this check by default in

debug builds.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6870

Test Plan: ASSERT_STATUS_CHECKED=1 make check, Travis

Reviewed By: ajkr

Differential Revision: D21704374

Pulled By: pdillinger

fbshipit-source-id: 15daef98136a19d7a6843fa0c9ec08738c2ac693

Summary:

... so that we have freedom to upgrade it (see https://github.com/facebook/rocksdb/issues/6808).

As a side benefit, gtest will no longer be linked into main library in

buck build.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6858

Test Plan: fb internal build & link

Reviewed By: riversand963

Differential Revision: D21652061

Pulled By: pdillinger

fbshipit-source-id: 6018104af944debde576b5beda6c134e737acedb

Summary:

Currently, in direct IO mode, `MultiGet` retrieves the data blocks one by one instead of in parallel, see `BlockBasedTable::RetrieveMultipleBlocks`.

Since direct IO is supported in `RandomAccessFileReader::MultiRead` in https://github.com/facebook/rocksdb/pull/6446, this PR applies `MultiRead` to `MultiGet` so that the data blocks can be retrieved in parallel.

Also, in direct IO mode, when data blocks are compressed and need to be uncompressed, this PR allocates only one contiguous aligned buffer to hold the data blocks and then uncompresses the blocks directly for insertion into the block cache, so there are no longer intermediate copies to scratch buffers.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6815

Test Plan:

1. added a new unit test `BlockBasedTableReaderTest::MultiGet`.

2. existing unit tests and stress tests contain tests against `MultiGet` in direct IO mode.

Reviewed By: anand1976

Differential Revision: D21426347

Pulled By: cheng-chang

fbshipit-source-id: b8446ae0e74152444ef9111e97f8e402ac31b24f

Summary:

Tried making Status object enforce that it is checked in some way. In cases it is not checked, `PermitUncheckedError()` must be called explicitly.

Added a way to run tests (`ASSERT_STATUS_CHECKED=1 make -j48 check`) on a

whitelist. The effort appears significant to get each test to pass with

this assertion, so I only fixed up enough to get one test (`options_test`)

working and added it to the whitelist.
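A minimal sketch of the "must be checked" idea, not the actual RocksDB `Status` class: `CheckedStatus` and `UncheckedCount()` are hypothetical names, and the sketch counts unchecked statuses where a debug build could instead `assert(checked_)` in the destructor.

```cpp
#include <cassert>

// Hypothetical stand-in for a status type that tracks whether it was checked.
class CheckedStatus {
 public:
  explicit CheckedStatus(bool ok) : ok_(ok) {}
  CheckedStatus(CheckedStatus&& other)
      : ok_(other.ok_), checked_(other.checked_) {
    other.checked_ = true;  // the moved-from object no longer needs checking
  }
  CheckedStatus(const CheckedStatus&) = delete;
  CheckedStatus& operator=(const CheckedStatus&) = delete;

  ~CheckedStatus() {
    // Record statuses destroyed without ever being looked at.
    if (!checked_) ++UncheckedCount();
  }

  // Inspecting the result counts as checking it.
  bool ok() const {
    checked_ = true;
    return ok_;
  }

  // Explicit opt-out for callers that intentionally ignore the result.
  void PermitUncheckedError() const { checked_ = true; }

  // Hypothetical global counter, used here instead of aborting.
  static int& UncheckedCount() {
    static int count = 0;
    return count;
  }

 private:
  bool ok_;
  mutable bool checked_ = false;
};
```

A status that goes out of scope without `ok()` or `PermitUncheckedError()` being called increments the counter; either call suppresses it.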

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6798

Reviewed By: pdillinger

Differential Revision: D21377404

Pulled By: ajkr

fbshipit-source-id: 73236f9c8df38f01cf24ecac4a6d1661b72d077e

Summary:

Add Github Action to perform some basic sanity checks for PRs, including the

following.

1) Buck TARGETS file.

On the one hand, the TARGETS file is used for internal buck, and we do not

manually update it. On the other hand, we need to run the buckifier scripts to

update TARGETS whenever new files are added, etc. With this Github Action, we

make sure that every PR does not forget this step. The GH Action uses

a Makefile target called check-buck-targets. Users can manually run `make

check-buck-targets` on local machine.

2) Code format

We use clang-format-diff.py to format our code. The GH Action in this PR makes

sure this step is not skipped. The checking script build_tools/format-diff.sh assumes that `clang-format-diff.py` is executable.

On host running GH Action, it is difficult to download `clang-format-diff.py` and make it

executable. Therefore, we modified build_tools/format-diff.sh to handle the case in which there is a non-executable clang-format-diff.py file in the top-level rocksdb repo directory.

Test Plan (Github and devserver):

Watch for Github Action result in the `Checks` tab.

On dev server

```

make check-format

make check-buck-targets

make check

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6761

Test Plan: Watch for Github Action result in the `Checks` tab.

Reviewed By: pdillinger

Differential Revision: D21260209

Pulled By: riversand963

fbshipit-source-id: c646e2f37c6faf9f0614b68aa0efc818cff96787

Summary:

Some common build variables like USE_CLANG and

COMPILE_WITH_UBSAN did not work if specified as make variables, as in

`make USE_CLANG=1 check` etc. rather than (in theory less hygienic)

`USE_CLANG=1 make check`. This patches Makefile to export some commonly

used ones to build_detect_platform so that they work. (I'm skeptical of

a broad `export` in Makefile because it's hard to predict how random

make variables might affect various invoked tools.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6740

Test Plan: manual / CI

Reviewed By: siying

Differential Revision: D21229011

Pulled By: pdillinger

fbshipit-source-id: b00c69b23eb2a13105bc8d860ce2d1e61ac5a355

Summary:

In https://github.com/facebook/rocksdb/pull/6455, we modified the interface of `RandomAccessFileReader::Read` to be able to get rid of memcpy in direct IO mode.

This PR applies the new interface to `BlockFetcher` when reading blocks from SST files in direct IO mode.

Without this PR, in direct IO mode, when fetching and uncompressing compressed blocks, `BlockFetcher` will first copy the raw compressed block into `BlockFetcher::compressed_buf_` or `BlockFetcher::stack_buf_` inside `RandomAccessFileReader::Read`, depending on the block size. Then, during uncompressing, it will copy the uncompressed block into `BlockFetcher::heap_buf_`.

In this PR, we get rid of the first memcpy and directly uncompress the block from `direct_io_buf_` to `heap_buf_`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6689

Test Plan: A new unit test `block_fetcher_test` is added.

Reviewed By: anand1976

Differential Revision: D21006729

Pulled By: cheng-chang

fbshipit-source-id: 2370b92c24075692423b81277415feb2aed5d980

Summary:

Updates the version of bzip2 used for RocksJava static builds.

Please, can we also get this cherry-picked to:

1. 6.7.fb

2. 6.8.fb

3. 6.9.fb

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6714

Reviewed By: cheng-chang

Differential Revision: D21067233

Pulled By: pdillinger

fbshipit-source-id: 8164b7eb99c5ca7b2021ab8c371ba9ded4cb4f7e

Summary:

This PR implements a fault injection mechanism for injecting errors in reads in db_stress. The FaultInjectionTestFS is used for this purpose. A thread local structure is used to track the errors, so that each db_stress thread can independently enable/disable error injection and verify observed errors against expected errors. This is initially enabled only for Get and MultiGet, but can be extended to iterator as well once it's proven stable.
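A rough sketch of per-thread error tracking, assuming a deterministic "fail every N-th read" policy for illustration. `ErrorContext`, `ThreadErrorContext` and `SimulatedRead` are hypothetical names and are not part of FaultInjectionTestFS.

```cpp
#include <cassert>

// Hypothetical per-thread injection state.
struct ErrorContext {
  bool enabled = false;  // whether this thread currently injects errors
  int one_in = 0;        // fail every one_in-th read; 0 means never
  int reads = 0;         // reads issued by this thread
  int injected = 0;      // errors injected so far, for later verification
};

// Each thread gets its own context, so threads can enable or disable
// injection independently of each other.
inline ErrorContext& ThreadErrorContext() {
  thread_local ErrorContext ctx;
  return ctx;
}

// Returns false to signal a simulated read error.
inline bool SimulatedRead() {
  ErrorContext& ctx = ThreadErrorContext();
  assert(ctx.one_in >= 0);
  ++ctx.reads;
  if (ctx.enabled && ctx.one_in > 0 && ctx.reads % ctx.one_in == 0) {
    ++ctx.injected;
    return false;
  }
  return true;
}
```

After an operation, a thread can compare the failures it observed against `injected` in its own context to tell expected errors from unexpected ones.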

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6538

Test Plan:

crash_test

make check

Reviewed By: riversand963

Differential Revision: D20714347

Pulled By: anand1976

fbshipit-source-id: d7598321d4a2d72bda0ced57411a337a91d87dc7

Summary:

Towards making compaction logic compatible with user timestamp.

When computing boundaries and overlapping ranges for inputs of compaction, we need to compare SSTs by user key without timestamp.
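A minimal sketch of comparing by user key without timestamp, assuming the timestamp is a fixed-size suffix of `ts_sz` bytes. The helper names are hypothetical and do not come from the RocksDB code base.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical helper: drops the trailing ts_sz timestamp bytes from a key.
inline std::string StripTimestamp(const std::string& key, std::size_t ts_sz) {
  assert(key.size() >= ts_sz);
  return key.substr(0, key.size() - ts_sz);
}

// Three-way comparison of two user keys, ignoring their timestamp suffixes.
inline int CompareWithoutTimestamp(const std::string& a, const std::string& b,
                                   std::size_t ts_sz) {
  return StripTimestamp(a, ts_sz).compare(StripTimestamp(b, ts_sz));
}

// Two files with key ranges [smallest1, largest1] and [smallest2, largest2]
// overlap if neither range ends before the other begins.
inline bool RangesOverlap(const std::string& smallest1,
                          const std::string& largest1,
                          const std::string& smallest2,
                          const std::string& largest2, std::size_t ts_sz) {
  return CompareWithoutTimestamp(largest1, smallest2, ts_sz) >= 0 &&
         CompareWithoutTimestamp(largest2, smallest1, ts_sz) >= 0;
}
```

With this comparison, two files whose boundary keys differ only in timestamp are treated as overlapping, so they end up in the same set of compaction inputs.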

Test plan (devserver):

```

make check

```

Several individual tests:

```

./version_set_test --gtest_filter=VersionStorageInfoTimestampTest.GetOverlappingInputs

./db_with_timestamp_compaction_test

./db_with_timestamp_basic_test

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6645

Reviewed By: ltamasi

Differential Revision: D20960012

Pulled By: riversand963

fbshipit-source-id: ad377fa9eb481bf7a8a3e1824aaade48cdc653a4

Summary:

New memory technologies are being developed by various hardware vendors (Intel DCPMM is one such technology currently available). These new memory types require different libraries for allocation and management (such as PMDK and memkind). The high capacities available make it possible to provision large caches (up to several TBs in size), beyond what is achievable with DRAM.

The new allocator provided in this PR uses the memkind library to allocate memory on different media.

**Performance**

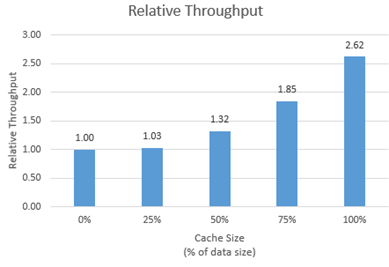

We tested the new allocator using db_bench.

- For each test, we vary the size of the block cache (relative to the size of the uncompressed data in the database).

- The database is filled sequentially. Throughput is then measured with a readrandom benchmark.

- We use a uniform distribution as a worst-case scenario.

The plot shows throughput (ops/s) relative to a configuration with no block cache and default allocator.

For all tests, p99 latency is below 500 us.

**Changes**

- Add MemkindKmemAllocator

- Add --use_cache_memkind_kmem_allocator db_bench option (to create an LRU block cache with the new allocator)

- Add detection of memkind library with KMEM DAX support

- Add test for MemkindKmemAllocator

**Minimum Requirements**

- kernel 5.3.12

- ndctl v67 - https://github.com/pmem/ndctl

- memkind v1.10.0 - https://github.com/memkind/memkind

**Memory Configuration**

The allocator uses the MEMKIND_DAX_KMEM memory kind. Follow the instructions on [memkind’s GitHub page](https://github.com/memkind/memkind) to set up NVDIMM memory accordingly.

Note on memory allocation with NVDIMM memory exposed as system memory.

- The MemkindKmemAllocator will only allocate from NVDIMM memory (using memkind_malloc with MEMKIND_DAX_KMEM kind).

- The default allocator is not restricted to RAM by default. Based on NUMA node latency, the kernel should allocate from local RAM preferentially, but it’s a kernel decision. numactl --preferred/--membind can be used to allocate preferentially/exclusively from the local RAM node.

**Usage**

When creating an LRU cache, pass a MemkindKmemAllocator object as argument.

For example (replace capacity with the desired value in bytes):

```

#include "rocksdb/cache.h"

#include "memory/memkind_kmem_allocator.h"

NewLRUCache(

capacity /*size_t*/,

6 /*cache_numshardbits*/,

false /*strict_capacity_limit*/,

0.0 /*cache_high_pri_pool_ratio*/,

std::make_shared<MemkindKmemAllocator>());

```

Refer to [RocksDB’s block cache documentation](https://github.com/facebook/rocksdb/wiki/Block-Cache) to assign the LRU cache as block cache for a database.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6214

Reviewed By: cheng-chang

Differential Revision: D19292435

fbshipit-source-id: 7202f47b769e7722b539c86c2ffd669f64d7b4e1

Summary:

Although there are tests related to locking in transaction_test, this new test directly tests against TransactionLockMgr.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6599

Test Plan: make transaction_lock_mgr_test && ./transaction_lock_mgr_test

Reviewed By: lth

Differential Revision: D20673749

Pulled By: cheng-chang

fbshipit-source-id: 1fa4a13218e68d785f5a99924556751a8c5c0f31

Summary:

Adding simple timer support to schedule work at a fixed time.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6543

Test Plan: TODO: clean up the unit tests, and make them better.

Reviewed By: siying

Differential Revision: D20465390

Pulled By: sagar0

fbshipit-source-id: cba143f70b6339863e1d0f8b8bf92e51c2b3d678

Summary:

When Travis times out, it's hard to determine whether

the last executing thing took an excessively long time or the

sum of all the work just exceeded the time limit. This

change inserts some timestamps in the output that should

make this easier to determine.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6643

Test Plan: CI (Travis mostly)

Reviewed By: anand1976

Differential Revision: D20843901

Pulled By: pdillinger

fbshipit-source-id: e7aae5434b0c609931feddf238ce4355964488b7

Summary:

This PR adds support for pipelined & parallel compression optimization for `BlockBasedTableBuilder`. This optimization makes block building, block compression and block appending a pipeline, and uses multiple threads to accelerate block compression. Users can set `CompressionOptions::parallel_threads` greater than 1 to enable compression parallelism.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6262

Reviewed By: ajkr

Differential Revision: D20651306

fbshipit-source-id: 62125590a9c15b6d9071def9dc72589c1696a4cb

Summary:

By supporting direct IO in RandomAccessFileReader::MultiRead, the benefits of parallel IO (IO uring) and direct IO can be combined.

In direct IO mode, read requests are aligned and merged together before being issued to RandomAccessFile::MultiRead, so blocks in the original requests might share the same underlying buffer. The shared buffers are returned in `aligned_bufs`, which is a new parameter of the `MultiRead` API.

For example, suppose alignment requirement for direct IO is 4KB, one request is (offset: 1KB, len: 1KB), another request is (offset: 3KB, len: 1KB), then since they all belong to page (offset: 0, len: 4KB), `MultiRead` only reads the page with direct IO into a buffer on heap, and returns 2 Slices referencing regions in that same buffer. See `random_access_file_reader_test.cc` for more examples.
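The align-and-merge step from the example can be sketched as follows. This is an illustration only: `ReadRange` and `AlignAndMerge` are hypothetical names, and merging ranges that merely touch (rather than overlap) is a choice made for this sketch, not something the PR description states.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical request type: a byte range within a file.
struct ReadRange {
  std::uint64_t offset;
  std::uint64_t len;
};

// Rounds every request out to alignment boundaries, then merges aligned
// ranges that overlap or touch, so each page is read at most once.
inline std::vector<ReadRange> AlignAndMerge(std::vector<ReadRange> reqs,
                                            std::uint64_t alignment) {
  // The alignment must be a power of two for the bit masks below.
  assert(alignment > 0 && (alignment & (alignment - 1)) == 0);
  std::sort(reqs.begin(), reqs.end(),
            [](const ReadRange& a, const ReadRange& b) {
              return a.offset < b.offset;
            });
  std::vector<ReadRange> merged;
  for (const ReadRange& r : reqs) {
    const std::uint64_t start = r.offset & ~(alignment - 1);
    const std::uint64_t end =
        (r.offset + r.len + alignment - 1) & ~(alignment - 1);
    if (!merged.empty() &&
        start <= merged.back().offset + merged.back().len) {
      // Extend the previous aligned range instead of issuing a new read.
      const std::uint64_t merged_end =
          std::max(merged.back().offset + merged.back().len, end);
      merged.back().len = merged_end - merged.back().offset;
    } else {
      merged.push_back({start, end - start});
    }
  }
  return merged;
}
```

For the two requests above with 4KB alignment, this yields a single aligned read of (offset: 0, len: 4KB); the caller would then hand out two slices pointing into that one buffer.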

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6446

Test Plan: Added a new test `random_access_file_reader_test.cc`.

Reviewed By: anand1976

Differential Revision: D20097518

Pulled By: cheng-chang

fbshipit-source-id: ca48a8faf9c3af146465c102ef6b266a363e78d1

Summary:

In some test runs, db_basic_test may time out because of its long running time. Separate the timestamp-related tests from db_basic_test to avoid this potential issue.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6516

Test Plan: pass make asan_check

Differential Revision: D20423922

Pulled By: zhichao-cao

fbshipit-source-id: d6306f89a8de55b07bf57233e4554c09ef1fe23a

Summary:

In the `.travis.yml` file the `jdk: openjdk7` element is ignored when `language: cpp`. So whatever version of the JDK that was installed in the Travis container was used - typically JDK 11.

To ensure our RocksJava builds are working, we now instead install and use OpenJDK 8. Ideally we would use OpenJDK 7, as RocksJava supports Java 7, but many of the newer Travis containers don't support Java 7, so Java 8 is the next best thing.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6512

Differential Revision: D20388296

Pulled By: pdillinger

fbshipit-source-id: 8bbe6b59b70cfab7fe81ff63867d907fefdd2df1

Summary:

On Linux, when reopening a DB with many SST files, profiling shows 100% system CPU time spent in `GetLogicalBufferSize` for a couple of seconds. This slows down MyRocks' recovery time when a site is down.

This PR introduces two new APIs:

1. `Env::RegisterDbPaths` and `Env::UnregisterDbPaths` let `DB` tell the env when it starts or stops using its database directories. The `PosixFileSystem` takes this opportunity to set up a cache from database directories to the corresponding logical block sizes.

2. `LogicalBlockSizeCache` is defined only for OS_LINUX to cache the logical block sizes.
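A simplified sketch of a directory-to-block-size cache with reference counting, assuming the expensive lookup is passed in as a callback. `BlockSizeCache`, `Ref`, `Unref` and `Get` are hypothetical names for illustration; the actual `LogicalBlockSizeCache` interface may differ.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <map>
#include <mutex>
#include <string>
#include <utility>

// Hypothetical cache from directory to logical block size.
class BlockSizeCache {
 public:
  explicit BlockSizeCache(
      std::function<std::size_t(const std::string&)> lookup)
      : lookup_(std::move(lookup)) {}

  // A DB starts using dir: do the expensive lookup once, then count users.
  void Ref(const std::string& dir) {
    std::lock_guard<std::mutex> guard(mu_);
    auto it = cache_.find(dir);
    if (it == cache_.end()) {
      cache_.emplace(dir, Entry{lookup_(dir), 1});
    } else {
      ++it->second.refs;
    }
  }

  // A DB stops using dir: drop the entry when the last user is gone.
  void Unref(const std::string& dir) {
    std::lock_guard<std::mutex> guard(mu_);
    auto it = cache_.find(dir);
    assert(it != cache_.end());
    if (--it->second.refs == 0) cache_.erase(it);
  }

  // Cached size if dir is registered, otherwise a direct lookup.
  std::size_t Get(const std::string& dir) {
    std::lock_guard<std::mutex> guard(mu_);
    auto it = cache_.find(dir);
    return it != cache_.end() ? it->second.size : lookup_(dir);
  }

 private:
  struct Entry {
    std::size_t size;
    int refs;
  };
  std::function<std::size_t(const std::string&)> lookup_;
  std::mutex mu_;
  std::map<std::string, Entry> cache_;
};
```

Opening many SST files in a registered directory then costs one lookup per directory rather than one per file.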

Other modifications:

1. rename `logical buffer size` to `logical block size` to be consistent with Linux terms.

2. declare `GetLogicalBlockSize` in `PosixHelper` to expose it to `PosixFileSystem`.

3. change the functions `IOError` and `IOStatus` in `env/io_posix.h` to have external linkage since they are used in other translation units too.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6457

Test Plan:

1. A new unit test is added for `LogicalBlockSizeCache` in `env/io_posix_test.cc`.

2. A new integration test is added for `DB` operations related to the cache in `db/db_logical_block_size_cache_test.cc`.

`make check`

Differential Revision: D20131243

Pulled By: cheng-chang

fbshipit-source-id: 3077c50f8065c0bffb544d8f49fb10bba9408d04

Summary:

* **macOS version:** 10.15.2 (Catalina)

* **XCode/Clang version:** Apple clang version 11.0.0 (clang-1100.0.33.16)

Before this bugfix the error generated is:

```

In file included from ./util/compression.h:23:

./snappy-1.1.7/snappy.h:76:59: error: unknown type name 'string'; did you mean 'std::string'?

size_t Compress(const char* input, size_t input_length, string* output);

^~~~~~

std::string

/Library/Developer/CommandLineTools/usr/bin/../include/c++/v1/iosfwd:211:65: note: 'std::string' declared here

typedef basic_string<char, char_traits<char>, allocator<char> > string;

^

In file included from db/builder.cc:10:

In file included from ./db/builder.h:12:

In file included from ./db/range_tombstone_fragmenter.h:15:

In file included from ./db/pinned_iterators_manager.h:12:

In file included from ./table/internal_iterator.h:13:

In file included from ./table/format.h:25:

In file included from ./options/cf_options.h:14:

In file included from ./util/compression.h:23:

./snappy-1.1.7/snappy.h:85:19: error: unknown type name 'string'; did you mean 'std::string'?

string* uncompressed);

^~~~~~

std::string

/Library/Developer/CommandLineTools/usr/bin/../include/c++/v1/iosfwd:211:65: note: 'std::string' declared here

typedef basic_string<char, char_traits<char>, allocator<char> > string;

^

2 errors generated.

make: *** [jls/db/builder.o] Error 1

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6496

Differential Revision: D20389254

Pulled By: pdillinger

fbshipit-source-id: 2864245c8d0dba7b2ab81294241a62f2adf02e20

Summary:

It's never too soon to refactor something. The patch splits the recently

introduced (`VersionEdit` related) `BlobFileState` into two classes

`BlobFileAddition` and `BlobFileGarbage`. The idea is that once blob files

are closed, they are immutable, and the only thing that changes is the

amount of garbage in them. In the new design, `BlobFileAddition` contains

the immutable attributes (currently, the count and total size of all blobs, checksum

method, and checksum value), while `BlobFileGarbage` contains the mutable

GC-related information elements (count and total size of garbage blobs). This is a

better fit for the GC logic and is more consistent with how SST files are handled.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6502

Test Plan: `make check`

Differential Revision: D20348352

Pulled By: ltamasi

fbshipit-source-id: ff93f0121e80ab15e0e0a6525ba0d6af16a0e008

Summary:

In the current code base, we can use FaultInjectionTestEnv to simulate Env issues such as file write/read errors, and it is used in most of the tests. The PR https://github.com/facebook/rocksdb/issues/5761 introduced the File System as a new Env API. This PR implements FaultInjectionTestFS, which can be used to simulate File System issues such as IO errors. Users can specify any IOStatus error as input, so that the corresponding FS actions return that error to the caller.

A set of ErrorHandlerFSTests is introduced for testing.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6414

Test Plan: pass make asan_check, pass error_handler_fs_test.

Differential Revision: D20252421

Pulled By: zhichao-cao

fbshipit-source-id: e922038f8ce7e6d1da329fd0bba7283c4b779a21

Summary:

BlobDB currently does not keep track of blob files: no records are written to

the manifest when a blob file is added or removed, and upon opening a database,

the list of blob files is populated simply based on the contents of the blob directory.

This means that lost blob files cannot be detected at the moment. We plan to solve

this issue by making blob files a part of `Version`; as a first step, this patch makes

it possible to store information about blob files in `VersionEdit`. Currently, this information

includes blob file number, total number and size of all blobs, and total number and size

of garbage blobs. However, the format is extensible: new fields can be added in

both a forward compatible and a forward incompatible manner if needed (similarly

to `kNewFile4`).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6416

Test Plan: `make check`

Differential Revision: D19894234

Pulled By: ltamasi

fbshipit-source-id: f9753e1f2aedf6dadb70c09b345207cb9c58c329

Summary:

Add a utility class `Defer` to defer the execution of a function until the Defer object goes out of scope.

Used in VersionSet::ProcessManifestWrites as an example.

The inline comments for class `Defer` have more details.
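For readers unfamiliar with the pattern, a minimal scope-guard sketch follows. It assumes a `std::function<void()>` callback and may differ from the actual `Defer` class in the patch.

```cpp
#include <cassert>
#include <functional>
#include <utility>

// Sketch of a scope guard: runs the stored function when the object is
// destroyed, i.e. when it goes out of scope.
class Defer final {
 public:
  explicit Defer(std::function<void()> fn) : fn_(std::move(fn)) {}
  ~Defer() {
    if (fn_) fn_();
  }

  // Non-copyable, so the deferred function runs exactly once.
  Defer(const Defer&) = delete;
  Defer& operator=(const Defer&) = delete;

 private:
  std::function<void()> fn_;
};
```

Declaring `Defer cleanup([&] { /* release resources */ });` near the top of a function makes the cleanup run on every return path, including early returns.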

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6382

Test Plan: `make defer_test version_set_test && ./defer_test && ./version_set_test`

Differential Revision: D19797538

Pulled By: cheng-chang

fbshipit-source-id: b1a9b7306e4fd4f48ec2ab55783caa561a315f0f

Summary:

It's logically correct for PinnableSlice to support move semantics to transfer ownership of the pinned memory region. This PR adds both move constructor and move assignment to PinnableSlice.
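The ownership transfer can be illustrated with a simplified stand-in. `PinnedRegion` is a hypothetical class with a release callback and is not the PinnableSlice implementation; it only shows that a move hands over the pinned region and leaves the source empty, so the region is released exactly once.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <utility>

// Hypothetical move-only handle to a pinned memory region.
class PinnedRegion {
 public:
  PinnedRegion() = default;
  PinnedRegion(const char* data, std::size_t size,
               std::function<void()> release)
      : data_(data), size_(size), release_(std::move(release)) {
    assert(data != nullptr || size == 0);
  }

  PinnedRegion(const PinnedRegion&) = delete;
  PinnedRegion& operator=(const PinnedRegion&) = delete;

  PinnedRegion(PinnedRegion&& other) { *this = std::move(other); }

  PinnedRegion& operator=(PinnedRegion&& other) {
    if (this != &other) {
      Reset();  // release whatever this object pinned before
      data_ = other.data_;
      size_ = other.size_;
      release_ = std::move(other.release_);
      // Leave the source empty so it does not release the region again.
      other.data_ = nullptr;
      other.size_ = 0;
      other.release_ = nullptr;
    }
    return *this;
  }

  ~PinnedRegion() { Reset(); }

  // Unpins the region (if any) and returns to the empty state.
  void Reset() {
    if (release_) release_();
    release_ = nullptr;
    data_ = nullptr;
    size_ = 0;
  }

  const char* data() const { return data_; }
  std::size_t size() const { return size_; }

 private:
  const char* data_ = nullptr;
  std::size_t size_ = 0;
  std::function<void()> release_;
};
```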

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6374

Test Plan:

A set of unit tests for the move semantics are added in slice_test.

So `make slice_test && ./slice_test`.

Differential Revision: D19739254

Pulled By: cheng-chang

fbshipit-source-id: f898bd811bb05b2d87384ec58b645e9915e8e0b1

Summary:

I set up a mirror of our Java deps on github so we can download

them through github URLs rather than maven.org, which is proving

terribly unreliable from Travis builds.

Also sanitized calls to curl, so they are easier to read and

appropriately fail on download failure.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6348

Test Plan: CI

Differential Revision: D19633621

Pulled By: pdillinger

fbshipit-source-id: 7eb3f730953db2ead758dc94039c040f406790f3

Summary:

Both changes are related to RocksJava:

1. Allow dependencies that are already present on the host system due to Maven to be reused in Docker builds.

2. Extend the `make clean-not-downloaded` target to RocksJava, so that libraries needed as dependencies for the test suite are not deleted and re-downloaded unnecessarily.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6318

Differential Revision: D19608742

Pulled By: pdillinger

fbshipit-source-id: 25e25649e3e3212b537ac4512b40e2e53dc02ae7

Summary:

Clang analyzer was falsely reporting on use of txn=nullptr.

Added a new const variable so that it can properly prune impossible

control flows.

Also, 'make analyze' previously required setting USE_CLANG=1 as an

environment variable, not a make variable, or else it would fail with

compilation errors like

g++: error: unrecognized command line option ‘-Wshorten-64-to-32’

Now USE_CLANG is not required for 'make analyze' (it's implied) and you

can do an incremental analysis (recompile what has changed) with

'USE_CLANG=1 make analyze_incremental'

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6244

Test Plan: 'make -j24 analyze', 'make crash_test'

Differential Revision: D19225950

Pulled By: pdillinger

fbshipit-source-id: 14f4039aa552228826a2de62b2671450e0fed3cb

Summary:

Currently, db_stress performs verification by calling `VerifyDb()` at the end of test and optionally before tests start. In case of corruption or incorrect result, it will be too late. This PR adds more verification in two ways.

1. For cf consistency test, each test thread takes a snapshot and verifies every N ops. N is configurable via `-verify_db_one_in`. This option is not supported in other stress tests.

2. For cf consistency test, we use another background thread in which a secondary instance periodically tails the primary (interval is configurable). We verify the secondary. Once an error is detected, we terminate the test and report. This does not affect other stress tests.

Test plan (devserver)

```

$./db_stress -test_cf_consistency -verify_db_one_in=0 -ops_per_thread=100000 -continuous_verification_interval=100

$./db_stress -test_cf_consistency -verify_db_one_in=1000 -ops_per_thread=10000 -continuous_verification_interval=0

$make crash_test

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6173

Differential Revision: D19047367

Pulled By: riversand963

fbshipit-source-id: aeed584ad71f9310c111445f34975e5ab47a0615

Summary:

Adds a python script to syntax check all python files in the

repository and report any errors.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6209

Test Plan:

'make check' with and without seeded syntax errors. Also look

for "No syntax errors in 34 .py files" on success, and in java_test CI output

Differential Revision: D19166756

Pulled By: pdillinger

fbshipit-source-id: 537df464b767260d66810b4cf4c9808a026c58a4

Summary:

And clean up related code, especially in stress test.

(More clean up of db_stress_test_base.cc coming after this.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6154

Test Plan: make check, make blackbox_crash_test for a bit

Differential Revision: D18938180

Pulled By: pdillinger

fbshipit-source-id: 524d27621b8dbb25f6dff40f1081e7c00630357e

Summary:

Error message when running `make` on Mac OS with master branch (v6.6.0):

```

$ make

$DEBUG_LEVEL is 1

Makefile:168: Warning: Compiling in debug mode. Don't use the resulting binary in production

third-party/folly/folly/synchronization/WaitOptions.cpp:6:10: fatal error: 'folly/synchronization/WaitOptions.h' file not found

#include <folly/synchronization/WaitOptions.h>

^

1 error generated.

third-party/folly/folly/synchronization/ParkingLot.cpp:6:10: fatal error: 'folly/synchronization/ParkingLot.h' file not found

#include <folly/synchronization/ParkingLot.h>

^

1 error generated.

third-party/folly/folly/synchronization/DistributedMutex.cpp:6:10: fatal error: 'folly/synchronization/DistributedMutex.h' file not found

#include <folly/synchronization/DistributedMutex.h>

^

1 error generated.

third-party/folly/folly/synchronization/AtomicNotification.cpp:6:10: fatal error: 'folly/synchronization/AtomicNotification.h' file not found

#include <folly/synchronization/AtomicNotification.h>

^

1 error generated.

third-party/folly/folly/detail/Futex.cpp:6:10: fatal error: 'folly/detail/Futex.h' file not found

#include <folly/detail/Futex.h>

^

1 error generated.

GEN util/build_version.cc

$DEBUG_LEVEL is 1

Makefile:168: Warning: Compiling in debug mode. Don't use the resulting binary in production

third-party/folly/folly/synchronization/WaitOptions.cpp:6:10: fatal error: 'folly/synchronization/WaitOptions.h' file not found

#include <folly/synchronization/WaitOptions.h>

^

1 error generated.

third-party/folly/folly/synchronization/ParkingLot.cpp:6:10: fatal error: 'folly/synchronization/ParkingLot.h' file not found

#include <folly/synchronization/ParkingLot.h>

^

1 error generated.

third-party/folly/folly/synchronization/DistributedMutex.cpp:6:10: fatal error: 'folly/synchronization/DistributedMutex.h' file not found

#include <folly/synchronization/DistributedMutex.h>

^

1 error generated.

third-party/folly/folly/synchronization/AtomicNotification.cpp:6:10: fatal error: 'folly/synchronization/AtomicNotification.h' file not found

#include <folly/synchronization/AtomicNotification.h>

^

1 error generated.

third-party/folly/folly/detail/Futex.cpp:6:10: fatal error: 'folly/detail/Futex.h' file not found

#include <folly/detail/Futex.h>

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6145

Differential Revision: D18910812

fbshipit-source-id: 5a4475466c2d0601657831a0b48d34316b2f0816

Summary:

db_stress_tool.cc is now a giant file. To make it easier to improve and maintain, break it down into multiple source files.

Most classes are turned into their own files. Separate .h and .cc files are created for gflag definitions. Another pair of .h and .cc files is created for some common functions. Some test execution logic that is only loosely related to class StressTest is moved to db_stress_driver.h and db_stress_driver.cc. All the files are located under db_stress_tool/. The directory is named this way because if its name ended with either stress or test, .gitignore would ignore any file under it, which is prone to causing issues during development.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6134

Test Plan: Build under GCC7 with and without LITE on using GNU Make. Build with GCC 4.8. Build with cmake with -DWITH_TOOL=1

Differential Revision: D18876064

fbshipit-source-id: b25d0a7451840f31ac0f5ebb0068785f783fdf7d

Summary:

Before this fix, `make all` emitted the full compilation command when building

object files in the third-party/folly directory even if default verbosity is

0 (AM_DEFAULT_VERBOSITY).

Test Plan (devserver):

```

$make all | tee build.log

$make check

```

Check build.log to verify.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6120

Differential Revision: D18795621

Pulled By: riversand963

fbshipit-source-id: 04641a8359cd4fd55034e6e797ed85de29ee2fe2

Summary:

Add the jni library for musl-libc, specifically for incorporating into Alpine based docker images. The classifier is `musl64`.

I have signed the CLA electronically.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/3143

Differential Revision: D18719372

fbshipit-source-id: 6189d149310b6436d6def7d808566b0234b23313

Summary:

**NOTE**: This also needs to be back-ported to 6.4.6

Fix a regression introduced in f2bf0b2 by https://github.com/facebook/rocksdb/pull/5674 whereby the compiled library would get the wrong name on PPC64LE platforms.

On PPC64LE, the regression caused the library to be named `librocksdbjni-linux64.so` instead of `librocksdbjni-linux-ppc64le.so`.

This PR corrects the name back to `librocksdbjni-linux-ppc64le.so` and also corrects the ordering of conditional arguments in the Makefile to match the expected order as defined in the documentation for Make.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6080

Differential Revision: D18710351

fbshipit-source-id: d4db87ef378263b57de7f9edce1b7d15644cf9de

Summary:

* We can reuse downloaded 3rd-party libraries

* We can isolate the build to a Docker volume. This is useful for investigating failed builds, as we can examine the volume by assigning it a name during the build.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6079

Differential Revision: D18710263

fbshipit-source-id: 93f456ba44b49e48941c43b0c4d53995ecc1f404

Summary:

Had complications with LITE build and valgrind test.

Reverts/fixes small parts of PR https://github.com/facebook/rocksdb/issues/6007

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6036

Test Plan:

make LITE=1 all check

and

ROCKSDB_VALGRIND_RUN=1 DISABLE_JEMALLOC=1 make -j24 db_bloom_filter_test && ROCKSDB_VALGRIND_RUN=1 DISABLE_JEMALLOC=1 ./db_bloom_filter_test

Differential Revision: D18512238

Pulled By: pdillinger

fbshipit-source-id: 37213cf0d309edf11c483fb4b2fb6c02c2cf2b28

Summary:

Adds an improved, replacement Bloom filter implementation (FastLocalBloom) for full and partitioned filters in the block-based table. This replacement is faster and more accurate, especially for high bits per key or millions of keys in a single filter.

Speed

The improved speed, at least on recent x86_64, comes from

* Using fastrange instead of modulo (%)

* Using our new hash function (XXH3 preview, added in a previous commit), which is much faster for large keys and only *slightly* slower on keys around 12 bytes if hashing the same size many thousands of times in a row.

* Optimizing the Bloom filter queries with AVX2 SIMD operations. (Added AVX2 to the USE_SSE=1 build.) Careful design was required to support (a) SIMD-optimized queries, (b) compatible non-SIMD code that's simple and efficient, (c) flexible choice of number of probes, and (d) essentially maximized accuracy for a cache-local Bloom filter. Probes are made eight at a time, so any number of probes up to 8 is the same speed, then up to 16, etc.

* Prefetching cache lines when building the filter. Although this optimization could be applied to the old structure as well, it seems to balance out the small added cost of accumulating 64 bit hashes for adding to the filter rather than 32 bit hashes.
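
As a rough illustration of the first bullet, the fastrange idea replaces `hash % range` with a multiply and a shift. This is a minimal sketch only; the function name `FastRange32` is made up for this example and is not claimed to match the code in this PR.

```cpp
#include <cassert>
#include <cstdint>

// Map a 32-bit hash onto [0, range) without a division.
// The result is the high 32 bits of the 64-bit product hash * range.
// Illustrative helper; the name is an assumption, not taken from the PR.
inline uint32_t FastRange32(uint32_t hash, uint32_t range) {
  assert(range > 0);
  return static_cast<uint32_t>((static_cast<uint64_t>(hash) * range) >> 32);
}
```

Unlike modulo, this mapping depends mostly on the high bits of the hash, so it assumes the hash is well mixed across all 32 bits.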

Here's nominal speed data from filter_bench (200MB in filters, about 10k keys each, 10 bits filter data / key, 6 probes, avg key size 24 bytes, includes hashing time) on Skylake DE (relatively low clock speed):

$ ./filter_bench -quick -impl=2 -net_includes_hashing # New Bloom filter

Build avg ns/key: 47.7135

Mixed inside/outside queries...

Single filter net ns/op: 26.2825

Random filter net ns/op: 150.459

Average FP rate %: 0.954651

$ ./filter_bench -quick -impl=0 -net_includes_hashing # Old Bloom filter

Build avg ns/key: 47.2245

Mixed inside/outside queries...

Single filter net ns/op: 63.2978

Random filter net ns/op: 188.038

Average FP rate %: 1.13823

Similar build time but dramatically faster query times on hot data (63 ns to 26 ns), and somewhat faster on stale data (188 ns to 150 ns). Performance differences on batched and skewed query loads are between these extremes as expected.

The only other interesting thing about speed is "inside" (query key was added to filter) vs. "outside" (query key was not added to filter) query times. The non-SIMD implementations are substantially slower when most queries are "outside" vs. "inside". This goes against what one might expect or would have observed years ago, as "outside" queries only need about two probes on average, due to short-circuiting, while "inside" always have num_probes (say 6). The problem is probably the nastily unpredictable branch. The SIMD implementation has few branches (very predictable) and has pretty consistent running time regardless of query outcome.

Accuracy

The generally improved accuracy (re: Issue https://github.com/facebook/rocksdb/issues/5857) comes from a better design for probing indices

within a cache line (re: Issue https://github.com/facebook/rocksdb/issues/4120) and improved accuracy for millions of keys in a single filter from using a 64-bit hash function (XXH3p). Design details in code comments.

Accuracy data (generalizes, except old impl gets worse with millions of keys):

Memory bits per key: FP rate percent old impl -> FP rate percent new impl

6: 5.70953 -> 5.69888

8: 2.45766 -> 2.29709

10: 1.13977 -> 0.959254

12: 0.662498 -> 0.411593

16: 0.353023 -> 0.0873754

24: 0.261552 -> 0.0060971

50: 0.225453 -> ~0.00003 (less than 1 in a million queries are FP)

Fixes https://github.com/facebook/rocksdb/issues/5857

Fixes https://github.com/facebook/rocksdb/issues/4120

Unlike the old implementation, this implementation has a fixed cache line size (64 bytes). At 10 bits per key, the accuracy of this new implementation is very close to the old implementation with 128-byte cache line size. If there's sufficient demand, this implementation could be generalized.

Compatibility

Although old releases would see the new structure as corrupt filter data and read the table as if there's no filter, we've decided only to enable the new Bloom filter with new format_version=5. This provides a smooth path for automatic adoption over time, with an option for early opt-in.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6007

Test Plan: filter_bench has been used thoroughly to validate speed, accuracy, and correctness. Unit tests have been carefully updated to exercise new and old implementations, as well as the logic to select an implementation based on context (format_version).

Differential Revision: D18294749

Pulled By: pdillinger

fbshipit-source-id: d44c9db3696e4d0a17caaec47075b7755c262c5f

Summary:

According to bzip2's official [download page](http://www.bzip.org/downloads.html), it can be downloaded from SourceForge. This source is more credible than the previous web archive.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5995

Differential Revision: D18377662

fbshipit-source-id: e8353f83d5d6ea6067f78208b7bfb7f0d5b49c05

Summary:

include db_stress_tool in rocksdb tools lib

Test Plan (on devserver):

```

$make db_stress

$./db_stress

$make all && make check

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5950

Differential Revision: D18044399

Pulled By: riversand963

fbshipit-source-id: 895585abbbdfd8b954965921dba4b1400b7af1b1

Summary:

expose db stress test by providing db_stress_tool.h in public header.

This PR does the following:

- adds a new header, db_stress_tool.h, in include/rocksdb/

- renames db_stress.cc to db_stress_tool.cc

- adds a db_stress.cc which simply invokes a test function.

- update Makefile accordingly.

Test Plan (dev server):

```

make db_stress

./db_stress

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5937

Differential Revision: D17997647

Pulled By: riversand963

fbshipit-source-id: 1a8d9994f89ce198935566756947c518f0052410

Summary:

Example: using the tool before and after PR https://github.com/facebook/rocksdb/issues/5784 shows that

the refactoring, presumed performance-neutral, actually sped up SST

filters by about 3% to 8% (repeatable result):

Before:

- Dry run ns/op: 22.4725

- Single filter ns/op: 51.1078

- Random filter ns/op: 120.133

After:

+ Dry run ns/op: 22.2301

+ Single filter run ns/op: 47.4313

+ Random filter ns/op: 115.9

Only tests filters for the block-based table (full filters and

partitioned filters - same implementation; not block-based filters),

which seems to be the recommended format/implementation.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5825

Differential Revision: D17804987

Pulled By: pdillinger

fbshipit-source-id: 0f18a9c254c57f7866030d03e7fa4ba503bac3c5

Summary:

Make class ObsoleteFilesTest inherit from DBTestBase.

Test plan (on devserver):

```

$COMPILE_WITH_ASAN=1 make obsolete_files_test

$./obsolete_files_test

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5820

Differential Revision: D17452348

Pulled By: riversand963

fbshipit-source-id: b09f4581a18022ca2bfd79f2836c0bf7083f5f25

Summary:

Refactoring to consolidate implementation details of legacy

Bloom filters. This helps to organize and document some related,

obscure code.

Also added make/cpp var TEST_CACHE_LINE_SIZE so that it's easy to

compile and run unit tests for non-native cache line size. (Fixed a

related test failure in db_properties_test.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5784

Test Plan:

make check, including recently added Bloom schema unit tests

(in ./plain_table_db_test && ./bloom_test), and including with

TEST_CACHE_LINE_SIZE=128U and TEST_CACHE_LINE_SIZE=256U. Tested the

schema tests with temporary fault injection into new implementations.

Some performance testing with modified unit tests suggests a small to moderate

improvement in speed.

Differential Revision: D17381384

Pulled By: pdillinger

fbshipit-source-id: ee42586da996798910fc45ac0b6289147f16d8df

Summary:

This will allow us to fix history by having the code changes for PR#5784 properly attributed to it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5810

Differential Revision: D17400231

Pulled By: pdillinger

fbshipit-source-id: 2da8b1cdf2533cfedb35b5526eadefb38c291f09

Summary:

AtomicFlushStressTest is a powerful test, but right now we only run it for atomic_flush=true + disable_wal=true. We further extend it to the case where atomic_flush=false + disable_wal = false. All the workload generation and validation can stay the same.

Atomic flush crash test is also changed to switch between the two test scenarios. This makes the name "atomic flush crash test" out of sync with what it really does. We leave it as is to avoid trouble with the continuous test set-up.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5729

Test Plan: Run "CRASH_TEST_KILL_ODD=188 TEST_TMPDIR=/dev/shm/ USE_CLANG=1 make whitebox_crash_test_with_atomic_flush", observe the settings used and see it passed.

Differential Revision: D16969791

fbshipit-source-id: 56e37487000ae631e31b0100acd7bdc441c04163

Summary:

Right now VerifyChecksum() doesn't do read-ahead. In some use cases, users won't be able to achieve good performance. With this change, RocksDB will do a default readahead, and users will be able to override the readahead size by passing in a ReadOptions.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5713

Test Plan: Add a new unit test.

Differential Revision: D16860874

fbshipit-source-id: 0cff0fe79ac855d3d068e6ccd770770854a68413

Summary:

This ports `folly::DistributedMutex` into RocksDB. The PR includes everything else needed to compile and use DistributedMutex as a component within folly. Most files are unchanged except for some portability stuff and includes.

For now, I've put this under `rocksdb/third-party`, but if there is a better folder to put this under, let me know. I also am not sure how or where to put unit tests for third-party stuff like this. It seems like gtest is included already, but I need to link with it from another third-party folder.

This also includes some other common components from folly

- folly/Optional

- folly/ScopeGuard (In particular `SCOPE_EXIT`)

- folly/synchronization/ParkingLot (A portable futex-like interface)

- folly/synchronization/AtomicNotification (The standard C++ interface for futexes)

- folly/Indestructible (For singletons that don't get destroyed without allocations)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5642

Differential Revision: D16544439

fbshipit-source-id: 179b98b5dcddc3075926d31a30f92fd064245731

Summary:

This is a new API added to db.h to allow for fetching all merge operands associated with a Key. The main motivation for this API is to support use cases where a full online merge is not necessary and would hurt performance. Example use-cases:

1. Update subset of columns and read subset of columns -

Imagine a SQL Table, a row is encoded as a K/V pair (as it is done in MyRocks). If there are many columns and users only updated one of them, we can use merge operator to reduce write amplification. While users only read one or two columns in the read query, this feature can avoid a full merging of the whole row, and save some CPU.

2. Updating very few attributes in a value which is a JSON-like document -

Updating one attribute can be done efficiently using merge operator, while reading back one attribute can be done more efficiently if we don't need to do a full merge.

----------------------------------------------------------------------------------------------------

API :

Status GetMergeOperands(

const ReadOptions& options, ColumnFamilyHandle* column_family,

const Slice& key, PinnableSlice* merge_operands,

GetMergeOperandsOptions* get_merge_operands_options,

int* number_of_operands)

Example usage :

int size = 100;

int number_of_operands = 0;

std::vector<PinnableSlice> values(size);

GetMergeOperandsOptions merge_operands_info;

db_->GetMergeOperands(ReadOptions(), db_->DefaultColumnFamily(), "k1", values.data(), &merge_operands_info, &number_of_operands);

Description :

Returns all the merge operands corresponding to the key. If the number of merge operands in DB is greater than get_merge_operands_options->expected_max_number_of_operands, no merge operands are returned and the status is Incomplete. Merge operands returned are in the order of insertion.

merge_operands: Points to an array of at least get_merge_operands_options->expected_max_number_of_operands elements; the caller is responsible for allocating it. If the status returned is Incomplete then number_of_operands will contain the total number of merge operands found in DB for key.
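
The Incomplete contract described above suggests a "try, then resize and retry" calling pattern. The sketch below models that contract with hypothetical stand-in types (`SketchStatus`, `SketchOptions`, `GetOperandsSketch`); none of them are RocksDB types, and the behavior on success (writing the count into `number_of_operands`) is an assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-ins for illustration only; not RocksDB types.
enum class SketchStatus { kOk, kIncomplete };

struct SketchOptions {
  int expected_max_number_of_operands = 0;
};

// Copies all stored operands into `out` in insertion order, or returns
// kIncomplete without copying when there are more operands than the caller
// said it expected. In both cases *number_of_operands receives the count
// found (the success case is an assumption of this sketch).
inline SketchStatus GetOperandsSketch(const std::vector<std::string>& stored,
                                      std::string* out,
                                      const SketchOptions& opts,
                                      int* number_of_operands) {
  assert(out != nullptr && number_of_operands != nullptr);
  *number_of_operands = static_cast<int>(stored.size());
  if (*number_of_operands > opts.expected_max_number_of_operands) {
    return SketchStatus::kIncomplete;
  }
  for (size_t i = 0; i < stored.size(); ++i) {
    out[i] = stored[i];
  }
  return SketchStatus::kOk;
}
```

A caller that receives Incomplete can resize its buffer to `number_of_operands`, raise `expected_max_number_of_operands` to match, and call again.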

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5604

Test Plan:

Added unit test and perf test in db_bench that can be run using the command:

./db_bench -benchmarks=getmergeoperands --merge_operator=sortlist

Differential Revision: D16657366

Pulled By: vjnadimpalli

fbshipit-source-id: 0faadd752351745224ee12d4ae9ef3cb529951bf

Summary:

If a test is one of parallel tests, then it should also be one of the 'tests'.

Otherwise, `make all` won't build the binaries. For example,

```

$COMPILE_WITH_ASAN=1 make -j32 all

```

Then if you do

```

$make check

```

The second command will invoke the compilation and building for db_bloom_test

and file_reader_writer_test **without** the `COMPILE_WITH_ASAN=1`, causing the

command to fail.

Test plan (on devserver):

```

$make -j32 all

```

Verify all binaries are built so that `make check` won't have to compile

anything.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5672

Differential Revision: D16655834

Pulled By: riversand963

fbshipit-source-id: 050131412b5313496f85ae3deeeeb8d28af75746

Summary:

This PR implements cache eviction using reinforcement learning. It includes two implementations:

1. An implementation of Thompson Sampling for the Bernoulli Bandit [1].

2. An implementation of LinUCB with disjoint linear models [2].

The idea is that a cache uses multiple eviction policies, e.g., MRU, LRU, and LFU. The cache learns which eviction policy is the best and uses it upon a cache miss.

Thompson Sampling is contextless and does not include any features.

LinUCB includes features such as level, block type, caller, column family id to decide which eviction policy to use.
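
To make the contextless variant concrete, here is a minimal sketch of Thompson Sampling for a Bernoulli bandit where each arm is one eviction policy. The class and member names are invented for this example, and what counts as a "reward" (for instance, whether the chosen policy's eviction avoided a later miss) is an assumption; the PR text does not say.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// One arm per candidate eviction policy (for example LRU, LFU, MRU).
// Counts start at 1 so each arm begins with a uniform Beta(1, 1) prior.
struct PolicyArm {
  double successes = 1.0;
  double failures = 1.0;
};

// Illustrative selector; names are hypothetical, not from the PR.
class ThompsonPolicySelector {
 public:
  ThompsonPolicySelector(size_t num_policies, unsigned seed)
      : arms_(num_policies), rng_(seed) {
    assert(num_policies > 0);
  }

  // Draw one sample from each arm's Beta posterior and pick the largest.
  size_t Select() {
    size_t best = 0;
    double best_sample = -1.0;
    for (size_t i = 0; i < arms_.size(); ++i) {
      double sample = SampleBeta(arms_[i].successes, arms_[i].failures);
      if (sample > best_sample) {
        best_sample = sample;
        best = i;
      }
    }
    return best;
  }

  // Record a Bernoulli reward (true = success) for the chosen policy.
  void Update(size_t policy, bool reward) {
    assert(policy < arms_.size());
    if (reward) {
      arms_[policy].successes += 1.0;
    } else {
      arms_[policy].failures += 1.0;
    }
  }

 private:
  // Beta(a, b) sample via X / (X + Y), X ~ Gamma(a, 1), Y ~ Gamma(b, 1).
  double SampleBeta(double a, double b) {
    std::gamma_distribution<double> gamma_a(a, 1.0);
    std::gamma_distribution<double> gamma_b(b, 1.0);
    double x = gamma_a(rng_);
    double y = gamma_b(rng_);
    return x / (x + y);
  }

  std::vector<PolicyArm> arms_;
  std::mt19937 rng_;
};
```

Because the choice is a random draw from each posterior, policies with little evidence still get explored occasionally, while a policy with a strong record is picked most of the time.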

[1] Daniel J. Russo, Benjamin Van Roy, Abbas Kazerouni, Ian Osband, and Zheng Wen. 2018. A Tutorial on Thompson Sampling. Found. Trends Mach. Learn. 11, 1 (July 2018), 1-96. DOI: https://doi.org/10.1561/2200000070

[2] Lihong Li, Wei Chu, John Langford, and Robert E. Schapire. 2010. A contextual-bandit approach to personalized news article recommendation. In Proceedings of the 19th international conference on World wide web (WWW '10). ACM, New York, NY, USA, 661-670. DOI=http://dx.doi.org/10.1145/1772690.1772758

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5610

Differential Revision: D16435067

Pulled By: HaoyuHuang

fbshipit-source-id: 6549239ae14115c01cb1e70548af9e46d8dc21bb

Summary:

This test frequently times out under TSAN; parallelizing it should fix

this issue.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5632

Test Plan:

make check

buck test mode/dev-tsan internal_repo_rocksdb/repo:db_bloom_filter_test

Differential Revision: D16519399

Pulled By: ltamasi

fbshipit-source-id: 66e05a644d6f79c6d544255ffcf6de195d2d62fe

Summary:

The current `clean` target in the Makefile does not remove parallel test

binaries. Fix this.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5629

Test Plan:

(on devserver)

Take file_reader_writer_test for instance.

```

$make -j32 file_reader_writer_test

$make clean

```

Verify that the binary file 'file_reader_writer_test' is deleted by `make clean`.

Differential Revision: D16513176

Pulled By: riversand963

fbshipit-source-id: 70acb9f56c928a494964121b86aacc0090f31ff6

Summary:

Refresh of the earlier change here - https://github.com/facebook/rocksdb/issues/5135

This is a review request for code change needed for - https://github.com/facebook/rocksdb/issues/3469

"Add support for taking snapshot of a column family and creating column family from a given CF snapshot"

We have an implementation for this that we have been testing internally. We have two new APIs that together provide this functionality.

(1) ExportColumnFamily() - This API is modelled after CreateCheckpoint() as below.

// Exports all live SST files of a specified Column Family onto export_dir,

// returning SST files information in metadata.

// - SST files will be created as hard links when the directory specified

// is in the same partition as the db directory, copied otherwise.

// - export_dir should not already exist and will be created by this API.

// - Always triggers a flush.

virtual Status ExportColumnFamily(ColumnFamilyHandle* handle,

const std::string& export_dir,

ExportImportFilesMetaData** metadata);

Internally, the API will DisableFileDeletions(), GetColumnFamilyMetaData(), Parse through

metadata, creating links/copies of all the sst files, EnableFileDeletions() and complete the call by

returning the list of file metadata.

(2) CreateColumnFamilyWithImport() - This API is modeled after IngestExternalFile(), but invoked only during a CF creation as below.

// CreateColumnFamilyWithImport() will create a new column family with

// column_family_name and import external SST files specified in metadata into

// this column family.

// (1) External SST files can be created using SstFileWriter.

// (2) External SST files can be exported from a particular column family in

// an existing DB.

// Option in import_options specifies whether the external files are copied or

// moved (default is copy). When option specifies copy, managing files at

// external_file_path is caller's responsibility. When option specifies a

// move, the call ensures that the specified files at external_file_path are

// deleted on successful return and files are not modified on any error

// return.

// On error return, column family handle returned will be nullptr.

// ColumnFamily will be present on successful return and will not be present

// on error return. ColumnFamily may be present on any crash during this call.

virtual Status CreateColumnFamilyWithImport(

const ColumnFamilyOptions& options, const std::string& column_family_name,

const ImportColumnFamilyOptions& import_options,

const ExportImportFilesMetaData& metadata,

ColumnFamilyHandle** handle);

Internally, this API creates a new CF, parses all the sst files and adds it to the specified column family, at the same level and with same sequence number as in the metadata. Also performs safety checks with respect to overlaps between the sst files being imported.

If incoming sequence number is higher than current local sequence number, local sequence

number is updated to reflect this.

Note, as the sst files are being moved across Column Families, Column Family name in sst file

will no longer match the actual column family on destination DB. The API does not modify Column

Family name or id in the sst files being imported.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5495

Differential Revision: D16018881

fbshipit-source-id: 9ae2251025d5916d35a9fc4ea4d6707f6be16ff9

Summary:

Crc32c Parallel computation optimization:

Algorithm comes from Intel whitepaper: [crc-iscsi-polynomial-crc32-instruction-paper](https://www.intel.com/content/dam/www/public/us/en/documents/white-papers/crc-iscsi-polynomial-crc32-instruction-paper.pdf)

Input data is divided into three equal-sized blocks

Three parallel blocks (crc0, crc1, crc2) for 1024 Bytes

One Block: 42(BLK_LENGTH) * 8(step length: crc32c_u64) bytes
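
For checking an optimized variant against known values, a plain bit-at-a-time CRC32C is handy. The sketch below is that reference only; it is not the three-way parallel algorithm from the whitepaper, and the constant and function names are invented here. It also spells out the block arithmetic quoted above, which works out to 1008 bytes per round; how the rest of a 1024-byte chunk is handled is not stated in this message.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Block geometry quoted in the commit message (names are illustrative).
constexpr size_t kBlockLength = 42;    // BLK_LENGTH: 8-byte steps per block
constexpr size_t kStepBytes = 8;       // one crc32c_u64 step
constexpr size_t kParallelBlocks = 3;  // crc0, crc1, crc2
constexpr size_t kBytesPerRound = kParallelBlocks * kBlockLength * kStepBytes;

// Bit-at-a-time CRC32C (Castagnoli), reflected polynomial 0x82F63B78.
// Slow, but useful as a reference when validating faster implementations.
inline uint32_t Crc32cReference(const unsigned char* data, size_t n) {
  assert(data != nullptr || n == 0);
  uint32_t crc = 0xFFFFFFFFu;
  for (size_t i = 0; i < n; ++i) {
    crc ^= data[i];
    for (int bit = 0; bit < 8; ++bit) {
      // Conditionally XOR the polynomial when the low bit is set.
      crc = (crc >> 1) ^ (0x82F63B78u & (0u - (crc & 1u)));
    }
  }
  return crc ^ 0xFFFFFFFFu;
}
```

The standard check value for CRC32C over the ASCII string "123456789" is 0xE3069283, which makes a convenient sanity test for any implementation.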

1. crc32c_test:

```

[==========] Running 4 tests from 1 test case.

[----------] Global test environment set-up.

[----------] 4 tests from CRC

[ RUN ] CRC.StandardResults

[ OK ] CRC.StandardResults (1 ms)

[ RUN ] CRC.Values

[ OK ] CRC.Values (0 ms)

[ RUN ] CRC.Extend

[ OK ] CRC.Extend (0 ms)

[ RUN ] CRC.Mask

[ OK ] CRC.Mask (0 ms)

[----------] 4 tests from CRC (1 ms total)

[----------] Global test environment tear-down

[==========] 4 tests from 1 test case ran. (1 ms total)

[ PASSED ] 4 tests.

```

2. RocksDB benchmark: db_bench --benchmarks="crc32c"

```

Linear Arm crc32c:

crc32c: 1.005 micros/op 995133 ops/sec; 3887.2 MB/s (4096 per op)

```

```

Parallel optimization with Armv8 crypto extension:

crc32c: 0.419 micros/op 2385078 ops/sec; 9316.7 MB/s (4096 per op)

```

It gets ~2.4x speedup compared to linear Arm crc32c instructions.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5494

Differential Revision: D16340806

fbshipit-source-id: 95dae9a5b646fd20a8303671d82f17b2e162e945

Summary:

This PR adds a ghost cache for admission control. Specifically, it admits an entry on its second access.

It also adds a hybrid row-block cache that caches the referenced key-value pairs of a Get/MultiGet request instead of its blocks.
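
The "admit on second access" rule can be sketched as a set of keys that have been seen but not yet admitted. This is an illustration under assumptions: the class name is hypothetical, and the crude "clear when full" bound stands in for whatever eviction the real ghost cache uses.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <unordered_set>

// Illustrative admission filter; not the class added by this PR.
class SecondAccessAdmission {
 public:
  explicit SecondAccessAdmission(size_t max_tracked_keys)
      : max_tracked_keys_(max_tracked_keys) {
    assert(max_tracked_keys > 0);
  }

  // Returns true if the caller should insert `key` into the real cache.
  bool ShouldAdmit(const std::string& key) {
    if (seen_.count(key) > 0) {
      return true;  // second or later access
    }
    if (seen_.size() >= max_tracked_keys_) {
      seen_.clear();  // crude bound; a simplification for this sketch
    }
    seen_.insert(key);
    return false;  // first access: remember the key only
  }

 private:
  size_t max_tracked_keys_;
  std::unordered_set<std::string> seen_;
};
```

Only keys are tracked, not values, so the filter costs far less memory than the cache it guards while keeping one-off accesses from displacing useful entries.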

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5534

Test Plan: make clean && COMPILE_WITH_ASAN=1 make check -j32

Differential Revision: D16101124

Pulled By: HaoyuHuang

fbshipit-source-id: b99edda6418a888e94eb40f71ece45d375e234b1

Summary:

Current PosixLogger performs IO operations using posix calls. Thus the

current implementation will not work for non-posix env. Created a new

logger class EnvLogger that uses env specific WritableFileWriter for IO operations.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5491

Test Plan: make check

Differential Revision: D15909002

Pulled By: ggaurav28

fbshipit-source-id: 13a8105176e8e42db0c59798d48cb6a0dbccc965

Summary:

This PR adds a BlockCacheTraceSimulator that reports the miss ratios given different cache configurations. A cache configuration contains "cache_name,num_shard_bits,cache_capacities". For example, "lru, 1, 1K, 2K, 4M, 4G".

When we replay the trace, we also perform lookups and inserts on the simulated caches.

In the end, it reports the miss ratio for each tuple <cache_name, num_shard_bits, cache_capacity> in an output file.

This PR also adds a main source block_cache_trace_analyzer so that we can run the analyzer in command line.
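
A cache configuration line such as "lru, 1, 1K, 2K, 4M, 4G" could be parsed along these lines. This is a sketch, not the parser in this PR: the struct and function names are hypothetical, and treating K/M/G as binary multiples (1024-based) is an assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical holder for one "cache_name,num_shard_bits,cache_capacities" line.
struct CacheConfigSketch {
  std::string cache_name;
  int num_shard_bits = 0;
  std::vector<uint64_t> capacities;  // in bytes
};

// Remove leading and trailing spaces from one comma-separated field.
inline std::string TrimSpaces(const std::string& s) {
  size_t begin = s.find_first_not_of(' ');
  if (begin == std::string::npos) return "";
  size_t end = s.find_last_not_of(' ');
  return s.substr(begin, end - begin + 1);
}

// Parse "1K", "4M", "4G" or a plain byte count.
// Assumes binary multiples; std::stoull throws on malformed digits.
inline uint64_t ParseCapacity(const std::string& field) {
  assert(!field.empty());
  uint64_t unit = 1;
  std::string digits = field;
  switch (field.back()) {
    case 'K': unit = 1ull << 10; digits.pop_back(); break;
    case 'M': unit = 1ull << 20; digits.pop_back(); break;
    case 'G': unit = 1ull << 30; digits.pop_back(); break;
    default: break;
  }
  return std::stoull(digits) * unit;
}

inline CacheConfigSketch ParseCacheConfig(const std::string& line) {
  CacheConfigSketch config;
  std::stringstream stream(line);
  std::string field;
  int index = 0;
  while (std::getline(stream, field, ',')) {
    field = TrimSpaces(field);
    if (index == 0) {
      config.cache_name = field;
    } else if (index == 1) {
      config.num_shard_bits = std::stoi(field);
    } else {
      config.capacities.push_back(ParseCapacity(field));
    }
    ++index;
  }
  return config;
}
```

Each parsed capacity then corresponds to one simulated cache, which matches the per-tuple miss ratio report described above.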

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5449

Test Plan:

Added tests for block_cache_trace_analyzer.

COMPILE_WITH_ASAN=1 make check -j32.

Differential Revision: D15797073

Pulled By: HaoyuHuang

fbshipit-source-id: aef0c5c2e7938f3e8b6a10d4a6a50e6928ecf408

Summary:

This PR continues the work in https://github.com/facebook/rocksdb/pull/4748 and https://github.com/facebook/rocksdb/pull/4535 by adding a new DBOption `persist_stats_to_disk` which instructs RocksDB to persist stats history to RocksDB itself. When statistics is enabled, and both options `stats_persist_period_sec` and `persist_stats_to_disk` are set, RocksDB will periodically write stats to a built-in column family in the following form: key -> (timestamp in microseconds)#(stats name), value -> stats value. The existing API `GetStatsHistory` will detect the current value of `persist_stats_to_disk` and either read from in-memory data structure or from the hidden column family on disk.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5046

Differential Revision: D15863138

Pulled By: miasantreble

fbshipit-source-id: bb82abdb3f2ca581aa42531734ac799f113e931b

Summary:

This PR contains the first commit for block cache trace analyzer. It reads a block cache trace file and prints statistics of the traces.

We will extend this class to provide more functionalities.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5425

Differential Revision: D15709580

Pulled By: HaoyuHuang

fbshipit-source-id: 2f43bd2311f460ab569880819d95eeae217c20bb

Summary:

This PR adds a helper class, block cache tracer, to read/write block cache accesses. It uses the trace reader/writer to perform this task.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5410

Differential Revision: D15612843

Pulled By: HaoyuHuang

fbshipit-source-id: f30fd1e1524355ca87db5d533a5c086728b141ea

Summary:

Many logging related source files are under util/. It will be more structured if they are together.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5387

Differential Revision: D15579036

Pulled By: siying

fbshipit-source-id: 3850134ed50b8c0bb40a0c8ae1f184fa4081303f

Summary:

There are too many types of files under util/. Some test-related files don't belong there or are only loosely related. Move them to a new directory, test_util/, so that util/ is cleaner.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5377

Differential Revision: D15551366

Pulled By: siying

fbshipit-source-id: 0f5c8653832354ef8caa31749c0143815d719e2c

Summary:

util/ is meant for lower-level libraries, so it's a good idea to move out the files that require knowledge of the DB. Create a file/ directory and move some files there.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5375

Differential Revision: D15550935

Pulled By: siying

fbshipit-source-id: 61a9715dcde5386eebfb43e93f847bba1ae0d3f2

Summary:

This allows debug releases of RocksJava to be built with the Docker release targets.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5274

Differential Revision: D15185067

Pulled By: sagar0

fbshipit-source-id: f3988e472f281f5844d9a07098344a827b1e7eb1

Summary:

This PR allows RocksDB to run in single-primary, multi-secondary process mode.

The writer is a regular RocksDB (e.g. a `DBImpl`) instance playing the role of a primary.

Multiple `DBImplSecondary` processes (secondaries) share the same set of SST files, MANIFEST, WAL files with the primary. Secondaries tail the MANIFEST of the primary and apply updates to their own in-memory state of the file system, e.g. `VersionStorageInfo`.

This PR has several components:

1. (Originally in #4745). Add a `PathNotFound` subcode to `IOError` to denote the failure when a secondary tries to open a file which has been deleted by the primary.

2. (Similar to #4602). Add `FragmentBufferedReader` to handle partially-read, trailing record at the end of a log from where future read can continue.

3. (Originally in #4710 and #4820). Add implementation of the secondary, i.e. `DBImplSecondary`.

3.1 Tail the primary's MANIFEST during recovery.

3.2 Tail the primary's MANIFEST during normal processing by calling `ReadAndApply`.

3.3 Tailing WAL will be in a future PR.

4. Add an example in 'examples/multi_processes_example.cc' to demonstrate the usage of secondary RocksDB instance in a multi-process setting. Instructions to run the example can be found at the beginning of the source code.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4899

Differential Revision: D14510945

Pulled By: riversand963

fbshipit-source-id: 4ac1c5693e6012ad23f7b4b42d3c374fecbe8886

Summary:

This is my latest round of changes to add missing items to RocksJava. More to come in future PRs.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4833

Differential Revision: D14152266

Pulled By: sagar0

fbshipit-source-id: d6cff67e26da06c131491b5cf6911a8cd0db0775

Summary:

Currently crash test covers cases with and without atomic flush, but takes too

long to finish. Therefore it may be a better idea to put crash test with atomic

flush in a separate set of tests.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4945

Differential Revision: D13947548

Pulled By: riversand963

fbshipit-source-id: 177c6de865290fd650b0103408339eaa3f801d8c

Summary:

as title. For people who continue to need Lua compaction filter, you

can copy the include/rocksdb/utilities/rocks_lua/lua_compaction_filter.h and

utilities/lua/rocks_lua_compaction_filter.cc to your own codebase.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4971

Differential Revision: D14047468

Pulled By: riversand963

fbshipit-source-id: 9ad1a6484a7c94e478f1e108127a3184e4069f70

Summary:

We used to call `printf $(t_run)` and later feed the result to GNU parallel in the recipe of target `check_0`. However, this approach is problematic when the length of $(t_run) exceeds the

maximum length of a command and the `printf` command cannot be executed. Instead we use 'find -print' to avoid generating an overly long command.

**This PR is actually the last commit of #4916. Prefer to merge this PR separately.**

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4922

Differential Revision: D13845883

Pulled By: riversand963

fbshipit-source-id: b56de7f7af43337c6ec89b931de843c9667cb679

Summary:

False-negative about path not existing. The regex is ignoring the "." in front of a path.

Example: "./path/to/file"

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4682

Differential Revision: D13777110

Pulled By: sagar0

fbshipit-source-id: 9f8173b7581407555fdc055580732aeab37d4ade

Summary:

Remove some components that we have never heard of anyone using.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4101

Differential Revision: D8825431

Pulled By: siying

fbshipit-source-id: 97a12ad3cad4ab12c82741a5ba49669aaa854180

Summary:

This PR contains the following fixes:

1. Fixing Makefile to support non-default locations of developer tools

2. Fixing compile error using a patch from https://github.com/facebook/rocksdb/pull/4007

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4687

Differential Revision: D13287263

Pulled By: riversand963

fbshipit-source-id: 4525eb42ba7b6f82af5f9bfb8e52fa4024e27ccc

Summary:

Note that Snappy now requires CMake to build it, so I added a note about RocksJava to the README.md file.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4761

Differential Revision: D13403811

Pulled By: ajkr

fbshipit-source-id: 8fcd7e3dc7f7152080364a374d3065472f417eff

Summary:

Now that v2 is fully functional, the v1 aggregator is removed.

The v2 aggregator has been renamed.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4778

Differential Revision: D13495930

Pulled By: abhimadan

fbshipit-source-id: 9d69500a60a283e79b6c4fa938fc68a8aa4d40d6

Summary:

Avoids re-downloading the .tar.gz files for the static build of RocksJava if they are already present.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4769

Differential Revision: D13491919

Pulled By: ajkr

fbshipit-source-id: 9265f577e049838dc40335d54f1ff2b4f972c38c

Summary:

A user friendly sst file reader is useful when we want to access sst

files outside of RocksDB. For example, we can generate an sst file

with SstFileWriter and send it to other places, then use SstFileReader

to read the file and process the entries in other ways.

Also rename the original SstFileReader to SstFileDumper because of

name conflict, and seems SstFileDumper is more appropriate for tools.

TODO: there is only a very simple test now, because I want to get some feedback first.

If the changes look good, I will add more tests soon.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4717

Differential Revision: D13212686

Pulled By: ajkr

fbshipit-source-id: 737593383264c954b79e63edaf44aaae0d947e56

Summary:

The old RangeDelAggregator did expensive pre-processing work

to create a collapsed, binary-searchable representation of range

tombstones. With FragmentedRangeTombstoneIterator, much of this work is

now unnecessary. RangeDelAggregatorV2 takes advantage of this by seeking

in each iterator to find a covering tombstone in ShouldDelete, while

doing minimal work in AddTombstones. The old RangeDelAggregator is still

used during flush/compaction for now, though RangeDelAggregatorV2 will

support those uses in a future PR.
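
A minimal sketch of the seek-based lookup, written in Python for illustration. It assumes each iterator exposes non-overlapping half-open fragments `[start, end)` sorted by start key, each carrying its highest tombstone sequence number. The class and function names are hypothetical and this is not the RangeDelAggregatorV2 code.

```python
from bisect import bisect_right


class FragmentedTombstones:
    """Hypothetical stand-in for one fragmented tombstone iterator."""

    def __init__(self, fragments):
        # fragments: list of (start, end, seq), sorted and non-overlapping
        self._fragments = fragments
        self._starts = [start for start, _end, _seq in fragments]

    def max_covering_seq(self, key):
        """Seek to the last fragment with start <= key; 0 if none covers key."""
        i = bisect_right(self._starts, key) - 1
        if i < 0:
            return 0
        _start, end, seq = self._fragments[i]
        return seq if key < end else 0


def should_delete(iterators, key, key_seq):
    """A key is deleted if any covering tombstone is newer than the key."""
    return any(it.max_covering_seq(key) > key_seq for it in iterators)
```

Adding tombstones here is just appending another `FragmentedTombstones` object to the list, while the per-key cost is one binary search per iterator.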

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4649

Differential Revision: D13146964

Pulled By: abhimadan

fbshipit-source-id: be29a4c020fc440500c137216fcc1cf529571eb3

Summary:

In #4652 we set -Os for lite builds only when LITE=1 is specified. But currently almost all users invoke the lite build via OPT="-DROCKSDB_LITE=1". So this diff sets LITE=1 when users already pass -DROCKSDB_LITE=1 on the command line.
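
The detection can be sketched like this, in Python rather than Makefile syntax. `effective_lite` is a hypothetical helper, and treating any `-DROCKSDB_LITE` or `-DROCKSDB_LITE=...` token as a lite build is a simplifying assumption.

```python
def effective_lite(opt: str, lite: str = "0") -> str:
    """Return the LITE value implied by OPT, falling back to the given LITE."""
    for flag in opt.split():
        # Assumption: any definition of ROCKSDB_LITE in OPT means a lite build.
        if flag == "-DROCKSDB_LITE" or flag.startswith("-DROCKSDB_LITE="):
            return "1"
    return lite
```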

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4671

Differential Revision: D13033801

Pulled By: sagar0

fbshipit-source-id: e7b506cee574f9e3f42221ee6647915011c78d78

Summary:

When running `make shared_lib` under fbcode, there is a liblua link error: https://gist.github.com/yiwu-arbug/b796bff6b3d46d90c1ed878d983de50d

This is because we link liblua.a when building the shared lib. If we want to link with liblua, we need to link with liblua_pic.a instead. Fix by simply not linking with lua.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4651

Differential Revision: D12964798

Pulled By: yiwu-arbug

fbshipit-source-id: 18d6cee94afe20748068822b76e29ef255cdb04d

Summary:

Previously, range tombstones were accumulated from every level, which

was necessary if a range tombstone in a higher level covered a key in a lower

level. However, RangeDelAggregator::AddTombstones's complexity is based on

the number of tombstones that are currently stored in it, which is wasteful in

the Get case, where we only need to know the highest sequence number of range

tombstones that cover the key from higher levels, and compute the highest covering

sequence number at the current level. This change introduces this optimization, and

removes the use of RangeDelAggregator from the Get path.
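
The idea can be sketched as follows, in Python for illustration only. The level layout (a dict with `tombstones` and `points`) and the `get` helper are assumptions made for the sketch, not RocksDB data structures.

```python
def get(levels, key):
    """Look up key across levels ordered from newest to oldest.

    Each level is a dict (hypothetical layout):
      "tombstones": list of (start, end, seq) covering [start, end)
      "points":     dict mapping key -> (seq, value)
    """
    max_covering_seq = 0  # highest tombstone seqno seen so far that covers key
    for level in levels:
        # Fold in this level's covering tombstones before checking its points.
        for start, end, seq in level["tombstones"]:
            if start <= key < end:
                max_covering_seq = max(max_covering_seq, seq)
        entry = level["points"].get(key)
        if entry is not None:
            seq, value = entry
            # The key is deleted if a covering tombstone is newer than it.
            return None if seq < max_covering_seq else value
    return None
```

Only one integer is carried from level to level, instead of an aggregator holding every tombstone seen so far.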

In the benchmark results, the following command was used to initialize the database:

```

./db_bench -db=/dev/shm/5k-rts -use_existing_db=false -benchmarks=filluniquerandom -write_buffer_size=1048576 -compression_type=lz4 -target_file_size_base=1048576 -max_bytes_for_level_base=4194304 -value_size=112 -key_size=16 -block_size=4096 -level_compaction_dynamic_level_bytes=true -num=5000000 -max_background_jobs=12 -benchmark_write_rate_limit=20971520 -range_tombstone_width=100 -writes_per_range_tombstone=100 -max_num_range_tombstones=50000 -bloom_bits=8

```

...and the following command was used to measure read throughput:

```

./db_bench -db=/dev/shm/5k-rts/ -use_existing_db=true -benchmarks=readrandom -disable_auto_compactions=true -num=5000000 -reads=100000 -threads=32

```

The filluniquerandom command was only run once, and the resulting database was used

to measure read performance before and after the PR. Both binaries were compiled with

`DEBUG_LEVEL=0`.

Readrandom results before PR:

```

readrandom : 4.544 micros/op 220090 ops/sec; 16.9 MB/s (63103 of 100000 found)

```

Readrandom results after PR:

```

readrandom : 11.147 micros/op 89707 ops/sec; 6.9 MB/s (63103 of 100000 found)

```

So it's actually slower right now, but this PR paves the way for future optimizations (see #4493).

----

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4449

Differential Revision: D10370575

Pulled By: abhimadan

fbshipit-source-id: 9a2e152be1ef36969055c0e9eb4beb0d96c11f4d

Summary:

In fbcode, when we build with clang7++, -faligned-new is available in the compile phase, but we link with an older version of libstdc++.a that doesn't come with aligned-new support (e.g. `nm libstdc++.a | grep align_val_t` returns nothing). In this case the previous -faligned-new detection can pass but the build will end with a link error. Fix it by only running the detection for non-fbcode builds.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4576

Differential Revision: D10500008

Pulled By: yiwu-arbug

fbshipit-source-id: b375de4fbb61d2a08e54ab709441aa8e7b4b08cf

Summary:

Introduce the `RepeatableThread` utility to run a task periodically in a separate thread. It is basically the same as the class of the same name in fbcode, and in addition provides a helper method that lets tests mock time and trigger execution one run at a time.

We can use this class to replace `TimerQueue` in #4382 and `BlobDB`.
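
A rough Python sketch of the pattern, assuming a condition variable with a timed wait plus a test-only trigger. `RepeatableTask` and `trigger_once_for_test` are hypothetical names. Unlike the helper described above, this sketch does not mock time; it wakes the worker directly.

```python
import threading


class RepeatableTask:
    """Runs fn every interval_sec seconds on a background thread."""

    def __init__(self, fn, interval_sec):
        self._fn = fn
        self._interval = interval_sec
        self._cv = threading.Condition()
        self._stopped = False
        self._triggered = False
        self._run_count = 0
        self._thread = threading.Thread(target=self._loop, daemon=True)
        self._thread.start()

    def _loop(self):
        while True:
            with self._cv:
                # Sleep until the interval elapses, a test trigger arrives,
                # or stop() is called.
                self._cv.wait_for(
                    lambda: self._stopped or self._triggered,
                    timeout=self._interval,
                )
                if self._stopped:
                    return
                self._triggered = False
            self._fn()  # run the task outside the lock
            with self._cv:
                self._run_count += 1
                self._cv.notify_all()

    def trigger_once_for_test(self):
        """Test hook: force exactly one run and wait until it finishes."""
        with self._cv:
            target = self._run_count + 1
            self._triggered = True
            self._cv.notify_all()
            self._cv.wait_for(lambda: self._run_count >= target)

    def stop(self):
        with self._cv:
            self._stopped = True
            self._cv.notify_all()
        self._thread.join()
```

With a long interval, a test can call `trigger_once_for_test()` repeatedly and observe one execution per call without sleeping.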

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4423

Differential Revision: D10020932

Pulled By: yiwu-arbug

fbshipit-source-id: 3616bef108c39a33c92eedb1256de424b7c04087

Summary:

To measure the results of upcoming DeleteRange v2 work, this commit adds

simple benchmarks for RangeDelAggregator. It measures the average time

for AddTombstones and ShouldDelete calls.

Using this to compare the results before #4014 and on the latest master (using the default arguments) produces the following results:

Before #4014:

```

=======================

Results:

=======================

AddTombstones: 1356.28 us

ShouldDelete: 0.401732 us

```

Latest master:

```

=======================

Results:

=======================

AddTombstones: 740.82 us

ShouldDelete: 0.383271 us

```
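
For reference, the relative change between the two runs above can be computed directly from the reported averages. This is a small Python calculation using only the numbers printed above.

```python
# Average times in microseconds, copied from the results above.
before = {"AddTombstones": 1356.28, "ShouldDelete": 0.401732}
after = {"AddTombstones": 740.82, "ShouldDelete": 0.383271}

# Ratio > 1 means the latest master is faster than before #4014.
speedup = {op: before[op] / after[op] for op in before}

for op, ratio in speedup.items():
    print(f"{op}: {ratio:.2f}x")
```

AddTombstones improves by roughly 1.8x, while ShouldDelete is nearly unchanged.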

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4363

Differential Revision: D9881676

Pulled By: abhimadan

fbshipit-source-id: 793e7d61aa4b9d47eb917bbcc03f08695b5e5442

Summary:

Before the fix:

On a PowerPC machine, run the following

```

$ make jtest

```

The command will fail due to "undefined symbol: crc32c_ppc". It was caused by

'rocksdbjava' Makefile target not including crc32c_ppc object files when

generating the shared lib. The fix is simple.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4357

Differential Revision: D9779474

Pulled By: riversand963

fbshipit-source-id: 3c5ec9068c2b9c796e6500f71cd900267064fd51

Summary:

Currently unity-test is failing because both trace_replay.cc and trace_analyzer_tool.cc define `DecodeCFAndKey` in an anonymous namespace. That is normally fine, but the unity test concatenates all source files into one translation unit, so the two definitions now conflict.

Another issue with trace_analyzer_tool.cc is that it uses some utility functions from ldb_cmd, which is not included in the Makefile for unity_test. I chose to update TESTHARNESS to include LIBOBJECTS. Feel free to comment if there is a less intrusive way to solve this.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4323

Differential Revision: D9599170

Pulled By: miasantreble

fbshipit-source-id: 38765b11f8e7de92b43c63bdcf43ea914abdc029

Summary:

Since bzip.org is no longer maintained, download the bzip2 packages from a snapshot taken by the Internet Archive until we figure out a more credible source.

Fixes issue: #4305

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4306

Differential Revision: D9514868

Pulled By: sagar0

fbshipit-source-id: 57c6a141a62e652f94377efc7ca9916b458e68d5

Summary:

Previously, trace_analyzer_tool was compiled with the other libobjects, which made the GFLAGS of trace_analyzer appear in other tools (e.g., db_bench, rocksdb_dump, etc.). With '--help', the help text of trace_analyzer showed up in the help output of those tools, which was confusing.

Now, trace_analyzer_tool is built and used only by trace_analyzer and trace_analyzer_test to avoid these issues.

Tested with make asan_check.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4290

Differential Revision: D9413163

Pulled By: zhichao-cao

fbshipit-source-id: ed5d20c4575a53ca15ff62a2ffe601d5cf278cc4

Summary:

A trace analysis framework for RocksDB.

After collecting a trace with the tool from [PR #3837](https://github.com/facebook/rocksdb/pull/3837), users can use the Trace Analyzer to interpret, analyze, and characterize the collected workload.

**Input:**

1. trace file

2. Whole keys space file

**Statistics:**

1. Access count of each operation (Get, Put, Delete, SingleDelete, DeleteRange, Merge) in each column family.

2. Key hotness (access count) of each key

3. Key space separation based on given prefix

4. Key size distribution

5. Value size distribution if applicable

6. Top K accessed keys

7. QPS statistics including the average QPS and peak QPS

8. Top K accessed prefixes

9. Query correlation analysis: outputs the number of X-after-Y occurrences and the corresponding average time intervals
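
A few of these statistics can be sketched as follows. This Python illustration assumes the trace is already decoded into `(column_family, operation, key)` tuples; `summarize` is a hypothetical helper and not part of the trace analyzer.

```python
from collections import Counter


def summarize(trace, k):
    """Compute per-(column family, operation) counts and the top-k hottest keys.

    trace: iterable of (column_family, operation, key) tuples (assumed format).
    """
    records = list(trace)
    op_counts = Counter((cf, op) for cf, op, _key in records)
    key_counts = Counter(key for _cf, _op, key in records)
    return op_counts, key_counts.most_common(k)
```

The same counters could be extended to prefixes by counting `key[:prefix_len]` instead of the whole key.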

**Output:**

1. key access heat map (either in the accessed key space or whole key space)

2. trace sequence file (interpret the raw trace file to line base text file for future use)

3. Time series (the key space ID and its access time)

4. Key access count distribution

5. Key size distribution

6. Value size distribution (in each interval)

7. whole key space separation by the prefix

8. Accessed key space separation by the prefix

9. QPS of each operation and each column family

10. Top K QPS and their accessed prefix range

**Test:**

1. Added the unit test of analyzing Get, Put, Delete, SingleDelete, DeleteRange, Merge

2. Generated a trace and analyzed it

**Implemented but not tested (due to the limitation of trace_replay):**

1. Analyzing Iterator, supporting Seek() and SeekForPrev() analyzing