Summary:

Various tests had disabled valgrind because it slowed down the CI runs and caused them to

time out (as is the case right now). Where a test was disabled with no comment,

I assumed slowness was the cause. For these tests that were slow under

valgrind, as well as the ones identified in https://github.com/facebook/rocksdb/issues/8352, this PR moves them

behind the compiler flag `-DROCKSDB_FULL_VALGRIND_RUN`.
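
The gating described above can be sketched with a plain preprocessor check. This is a minimal illustration, not the code from the PR: `RegisteredTests` and the test names are hypothetical, and `ROCKSDB_VALGRIND_RUN` is assumed here to be the macro that marks an ordinary valgrind build (only `ROCKSDB_FULL_VALGRIND_RUN` is named in the summary).

```cpp
#include <string>
#include <vector>

// Hypothetical registry standing in for a gtest binary's list of tests.
std::vector<std::string> RegisteredTests() {
  std::vector<std::string> tests = {"FastTest"};
// Assumption: ROCKSDB_VALGRIND_RUN is defined for regular valgrind builds.
// Slow tests are compiled in for non-valgrind builds and for full valgrind
// runs, and compiled out for regular valgrind runs.
#if !defined(ROCKSDB_VALGRIND_RUN) || defined(ROCKSDB_FULL_VALGRIND_RUN)
  tests.push_back("SlowTest");
#endif
  return tests;
}
```

Compiled with neither macro, or with `-DROCKSDB_FULL_VALGRIND_RUN`, the slow test is included; compiled with only the assumed `-DROCKSDB_VALGRIND_RUN`, it is left out.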

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8475

Test Plan: run `make full_valgrind_test`, `make valgrind_test`, and `make check`, and verify that they work correctly

Reviewed By: jay-zhuang

Differential Revision: D29504843

Pulled By: ajkr

fbshipit-source-id: 2aac90749cfbd30d5ce11cb29a07a1b9314eeea7

Summary:

Previously, the following command:

```USE_CLANG=1 TEST_TMPDIR=/dev/shm/rocksdb OPT=-g make -j$(nproc) analyze```

was raising an error/warning that `new_mem` could potentially be a `nullptr`. This error appeared due to code changes from https://github.com/facebook/rocksdb/issues/8454, including an if-statement containing "`... && new_mem != nullptr && ...`", which made the analyzer believe that past this `if`-statement, a `new_mem==nullptr` was a possible scenario.

This code patch simply introduces `assert`s and removes this condition in the `if`-statement.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8492

Reviewed By: jay-zhuang

Differential Revision: D29571275

Pulled By: bjlemaire

fbshipit-source-id: 75d72246b70ebbbae7dea11ccb5778686d8bcbea

Summary:

We ended up using a different approach for tracking the amount of

garbage in blob files (see e.g. https://github.com/facebook/rocksdb/pull/8450),

so the ability to apply only a range of table property collectors is

now unnecessary. The patch reverts this part of

https://github.com/facebook/rocksdb/pull/8298 while keeping the cleanup done

in that PR.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8465

Test Plan: `make check`

Reviewed By: jay-zhuang

Differential Revision: D29399921

Pulled By: ltamasi

fbshipit-source-id: af64816c357d0829b9d7ba8ca1477038138f6f0a

Summary:

Implement an experimental feature called "MemPurge", which consists of purging "garbage" bytes out of a memtable and reusing the memtable struct instead of making it immutable and eventually flushing its content to storage.

The prototype is deactivated by default and is not intended for use. It is intended for correctness and validation testing. At the moment, the "MemPurge" feature can be switched on by using the `options.experimental_allow_mempurge` flag. For this early stage, when the allow_mempurge flag is set to `true`, all the flush operations will be rerouted to perform a MemPurge. This is a temporary design decision that will give us the time to explore meaningful heuristics to use MemPurge at the right time for relevant workloads. Moreover, the current MemPurge operation only supports `Puts`, `Deletes`, and `DeleteRange` operations, and handles `Iterators` as well as `CompactionFilter`s that are invoked at flush time.

Three unit tests are added to `db_flush_test.cc` to test that MemPurge works correctly (and to check that the previously mentioned operations are fully supported).

One noticeable design decision is the timing of the MemPurge operation in the memtable workflow: for this prototype, the mempurge happens when the memtable is switched (and usually made immutable). This is an inefficient process because it implies that the entirety of the MemPurge operation happens while holding the db_mutex. Future commits will make the MemPurge operation a background task (akin to the regular flush operation) and aim at drastically enhancing the performance of this operation. The MemPurge is also not fully "WAL-compatible" yet, but when the WAL is full, or when the regular MemPurge operation fails (or when the purged memtable still needs to be flushed), a regular flush operation takes place. Later commits will also correct these behaviors.
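
As a rough illustration of what "purging garbage" means here, the toy model below keeps only the most recent entry for each key. It is a sketch under strong assumptions, not the MemPurge implementation: the `Entry` type and `PurgeGarbage` are hypothetical, snapshots and range deletions are ignored, and the newest tombstone is kept because it may still shadow older data in SST files.

```cpp
#include <cstdint>
#include <map>
#include <string>
#include <vector>

enum class EntryType { kPut, kDelete };

// Hypothetical, simplified memtable entry.
struct Entry {
  std::string key;
  uint64_t seq;  // larger sequence number means a more recent write
  EntryType type;
  std::string value;
};

// Keeps only the newest entry per key; older, overwritten versions are the
// "garbage" that gets dropped. Assumes no snapshot needs the older versions.
std::vector<Entry> PurgeGarbage(const std::vector<Entry>& memtable) {
  std::map<std::string, Entry> latest;
  for (const Entry& e : memtable) {
    auto it = latest.find(e.key);
    if (it == latest.end()) {
      latest.emplace(e.key, e);
    } else if (e.seq > it->second.seq) {
      it->second = e;
    }
  }
  std::vector<Entry> purged;
  for (const auto& kv : latest) {
    purged.push_back(kv.second);  // output is ordered by key
  }
  return purged;
}
```

With two versions of key `a` and a put followed by a delete of key `b`, only the newer `a` and the tombstone for `b` survive.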

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8454

Reviewed By: anand1976

Differential Revision: D29433971

Pulled By: bjlemaire

fbshipit-source-id: 6af48213554e35048a7e03816955100a80a26dc5

Summary:

Change the job_id in the remote compaction interface so that it includes

both the internal compaction job_id and a sub_compaction_job_id. This is not

a backward-compatible change: users need to update their interface implementation during

the upgrade. (We will avoid backward-incompatible changes once the feature is

no longer experimental.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8364

Reviewed By: ajkr

Differential Revision: D28917301

Pulled By: jay-zhuang

fbshipit-source-id: 6d72a21f652bb517ad6954d0387b496797fc4e11

Summary:

Added BlobMetaData to ColumnFamilyMetaData and LiveBlobMetaData and DB API GetLiveBlobMetaData to retrieve it.

First pass at struct. More tests and maybe fields to come...

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8273

Reviewed By: ltamasi

Differential Revision: D29102400

Pulled By: mrambacher

fbshipit-source-id: 8a2383a4446328be6b91dced9841fdd3dfc80b73

Summary:

In PR https://github.com/facebook/rocksdb/issues/7523 , checksum handoff is introduced in RocksDB for WAL, Manifest, and SST files. When a user enables checksum handoff for a certain type of file, before the data is written to the lower layer storage system, we calculate the checksum (crc32c) of each piece of data and pass the checksum down with the data, such that data verification can be done by the lower layer storage system if it has the capability. However, it cannot cover the whole lifetime of the data in the memory and also it potentially introduces extra checksum calculation overhead.

In this PR, we introduce a new interface in WritableFileWriter::Append, which allows the caller to pass the data and the checksum (crc32c) together. In this way, WritableFileWriter can directly use the passed-in checksum (crc32c) to generate the checksum of data being passed down to the storage system. It saves the calculation overhead and achieves higher protection coverage. When a new checksum is added with the data, we use Crc32cCombine (https://github.com/facebook/rocksdb/issues/8305) to combine the existing checksum and the new checksum. To avoid the segmenting of data by rate-limiter before it is stored, rate-limiter is called enough times to accumulate enough credits for a certain write. This design only supports Manifest and WAL, which use log_writer, at the current stage.
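
To show why two checksums can be combined without rereading the data, here is a naive, self-contained sketch. It is not RocksDB's Crc32cCombine: it is a bitwise CRC32C plus a linear-time combine that feeds `len_b` zero bytes through the raw register, and all function names are hypothetical.

```cpp
#include <cstddef>
#include <cstdint>
#include <string>

constexpr uint32_t kCrc32cPoly = 0x82F63B78u;  // reflected Castagnoli polynomial

// Advances the raw CRC register by one input byte (no initial/final XOR).
inline uint32_t RawStep(uint32_t state, uint8_t byte) {
  state ^= byte;
  for (int i = 0; i < 8; ++i) {
    state = (state >> 1) ^ (kCrc32cPoly & (0u - (state & 1u)));
  }
  return state;
}

// Bitwise CRC32C with the usual 0xFFFFFFFF initial value and final XOR.
uint32_t Crc32c(const std::string& data) {
  uint32_t state = 0xFFFFFFFFu;
  for (unsigned char c : data) {
    state = RawStep(state, c);
  }
  return state ^ 0xFFFFFFFFu;
}

// Naive combine: returns CRC(A || B) given CRC(A), CRC(B) and len(B).
// Shifting crc_a through len_b zero bytes and XORing crc_b works because
// the CRC register update is linear over GF(2).
uint32_t NaiveCrc32cCombine(uint32_t crc_a, uint32_t crc_b, size_t len_b) {
  uint32_t shifted = crc_a;
  for (size_t i = 0; i < len_b; ++i) {
    shifted = RawStep(shifted, 0);
  }
  return shifted ^ crc_b;
}
```

For example, combining the checksums of `"1234"` and `"56789"` with a length of 5 gives the same value as checksumming `"123456789"` directly. A production implementation would replace the zero-byte loop with a logarithmic-time shift.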

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8412

Test Plan: make check, add new testing cases.

Reviewed By: anand1976

Differential Revision: D29151545

Pulled By: zhichao-cao

fbshipit-source-id: 75e2278c5126cfd58393c67b1efd18dcc7a30772

Summary:

Provide support for Merge operation with base values during

Compaction in IntegratedBlobDB.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8445

Test Plan: Add new unit test

Reviewed By: ltamasi

Differential Revision: D29343949

Pulled By: akankshamahajan15

fbshipit-source-id: 844f6f02f93388a11e6e08bda7bb3a2a28e47c70

Summary:

The patch builds on `BlobGarbageMeter` and `BlobCountingIterator`

(introduced in https://github.com/facebook/rocksdb/issues/8426 and

https://github.com/facebook/rocksdb/issues/8443 respectively)

and ties it all together. It measures the amount of garbage

generated by a compaction and logs the corresponding `BlobFileGarbage`

records as part of the compaction job's `VersionEdit`. Note: in order

to have accurate results, `kRemoveAndSkipUntil` for compaction filters

is implemented using iteration.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8450

Test Plan: Ran `make check` and the crash test script.

Reviewed By: jay-zhuang

Differential Revision: D29338207

Pulled By: ltamasi

fbshipit-source-id: 4381c432ac215139439f6d6fb801a6c0e4d8c128

Summary:

`VersionSet::VerifyCompactionFileConsistency` was superseded by the LSM tree

consistency checks introduced in https://github.com/facebook/rocksdb/pull/6901,

which are more comprehensive, more efficient, and are performed unconditionally

even in release builds.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8449

Test Plan: `make check`

Reviewed By: ajkr

Differential Revision: D29337441

Pulled By: ltamasi

fbshipit-source-id: a05324f88e3400e27e6a00406c878a6276e0c9cc

Summary:

Follow-up to https://github.com/facebook/rocksdb/issues/8426 .

The patch adds a new kind of `InternalIterator` that wraps another one and

passes each key-value encountered to `BlobGarbageMeter` as inflow.

This iterator will be used as an input iterator for compactions when the input

SSTs reference blob files.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8443

Test Plan: `make check`

Reviewed By: jay-zhuang

Differential Revision: D29311987

Pulled By: ltamasi

fbshipit-source-id: b4493b4c0c0c2e3c2ecc33c8969a5ef02de5d9d8

Summary:

This reverts commit 25be1ed66a.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8438

Test Plan: Run the impacted mysql test 40 times

Reviewed By: ajkr

Differential Revision: D29286247

Pulled By: jay-zhuang

fbshipit-source-id: d3bd056971a19a8b012d5d0295fa045c012b3c04

Summary:

Currently, blob file checksums are incorrectly dumped as raw bytes

in the `ldb manifest_dump` output (i.e. they are not printed as hex).

The patch fixes this and also updates some test cases to reflect that

the checksum value field in `BlobFileAddition` and `SharedBlobFileMetaData`

contains the raw checksum and not a hex string.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8437

Test Plan:

`make check`

Tested using `ldb manifest_dump`

Reviewed By: akankshamahajan15

Differential Revision: D29284170

Pulled By: ltamasi

fbshipit-source-id: d11cfb3435b14cd73c8a3d3eb14fa0f9fa1d2228

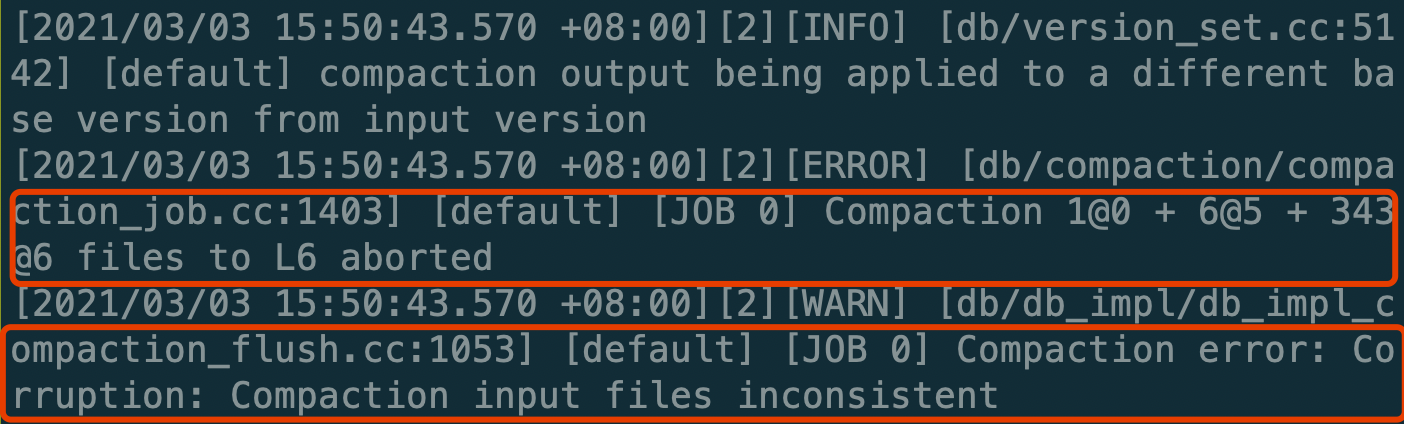

Summary:

`DeleteFilesInRange()` marks the files to be deleted as `being_compacted`

before deleting them, which may cause ongoing compactions to report a corruption

exception, or to hit an ASSERT in debug builds.

This change adds the missing `ComputeCompactionScore()` call when `being_compacted` is set.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8434

Test Plan: Unittest

Reviewed By: ajkr

Differential Revision: D29276127

Pulled By: jay-zhuang

fbshipit-source-id: f5b223e3c1fc6d821e100e3f3442bc70c1d50cf7

Summary:

This is part of an alternative approach to https://github.com/facebook/rocksdb/issues/8316.

Unlike that approach, this one relies on key-values getting processed one by one

during compaction, and does not involve persistence.

Specifically, the patch adds a class `BlobGarbageMeter` that can track the number

and total size of blobs in a (sub)compaction's input and output on a per-blob file

basis. This information can then be used to compute the amount of additional

garbage generated by the compaction for any given blob file by subtracting the

"outflow" from the "inflow."

Note: this patch only adds `BlobGarbageMeter` and associated unit tests. I plan to

hook up this class to the input and output of `CompactionIterator` in a subsequent PR.
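
The inflow/outflow bookkeeping described above can be sketched as follows. This is a toy stand-in, not the `BlobGarbageMeter` class itself: `ToyGarbageMeter`, `Flow`, and the method names are hypothetical, and the sketch assumes that a blob file's outflow never exceeds its inflow.

```cpp
#include <cstdint>
#include <map>

// Number and total size of blobs seen for one blob file.
struct Flow {
  uint64_t count = 0;
  uint64_t bytes = 0;
};

// Hypothetical meter keyed by blob file number.
class ToyGarbageMeter {
 public:
  // Called for each blob reference in the compaction input.
  void AddInflow(uint64_t blob_file, uint64_t blob_bytes) {
    Flow& f = in_[blob_file];
    ++f.count;
    f.bytes += blob_bytes;
  }

  // Called for each blob reference that survives into the output.
  void AddOutflow(uint64_t blob_file, uint64_t blob_bytes) {
    Flow& f = out_[blob_file];
    ++f.count;
    f.bytes += blob_bytes;
  }

  // Additional garbage for a blob file: inflow minus outflow.
  Flow Garbage(uint64_t blob_file) const {
    Flow garbage;
    auto in = in_.find(blob_file);
    if (in == in_.end()) {
      return garbage;  // file was not referenced by the input
    }
    garbage = in->second;
    auto out = out_.find(blob_file);
    if (out != out_.end()) {
      // Assumption: outflow <= inflow for files present in the input.
      garbage.count -= out->second.count;
      garbage.bytes -= out->second.bytes;
    }
    return garbage;
  }

 private:
  std::map<uint64_t, Flow> in_;
  std::map<uint64_t, Flow> out_;
};
```

If two blobs of 100 and 50 bytes flow in for a file and only the 100-byte blob flows out, the meter reports one blob and 50 bytes of new garbage for that file.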

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8426

Test Plan: `make check`

Reviewed By: jay-zhuang

Differential Revision: D29242250

Pulled By: ltamasi

fbshipit-source-id: 597e50ad556540e413a50e804ba15bc044d809bb

Summary:

Trace the MultiGet information, including timestamp, keys, and CF_IDs, to the trace file for analysis and replay.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8421

Test Plan: make check, add test to trace_analyzer_test

Reviewed By: anand1976

Differential Revision: D29221195

Pulled By: zhichao-cao

fbshipit-source-id: 30c677d6c39ab31ef4bbdf7e0d1fa1fd79f295ff

Summary:

2 new statistics counters are added to RocksDB: `MEMTABLE_PAYLOAD_BYTES_AT_FLUSH` and `MEMTABLE_GARBAGE_BYTES_AT_FLUSH`. The former tracks how many raw bytes of useful data are present on the memtable at flush time, whereas the latter tracks how many of these raw bytes are considered garbage, meaning that they ended up not being written to the SSTables resulting from the flush operations.

**Unit test**: run `make db_flush_test -j$(nproc); ./db_flush_test` to run the unit test.

This executable includes 3 tests that check support and correct stat calculations for workloads with inserts, deletes, and DeleteRanges. The parameters are set such that the workloads are performed on a single memtable, and a single SSTable is created as a result of the flush operation. The flush operation is manually called in the test file. The tests verify that the values of these 2 statistics counters introduced in this PR can be exactly predicted, showing that we have a full understanding of the underlying operations.

**Performance testing**:

`./db_bench -statistics -benchmarks=fillrandom -num=10000000` repeated 10 times.

Timing done using "date" function in a bash script.

_Results_:

Original Rocksdb fork: mean 66.6 sec, std 1.18 sec.

This feature branch: mean 67.4 sec, std 1.35 sec.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8411

Reviewed By: akankshamahajan15

Differential Revision: D29150629

Pulled By: bjlemaire

fbshipit-source-id: 7b3c2e86d50c6aa34fa50fd134282eacb543a5b1

Summary:

This PR prepopulates warm/hot data blocks which are already in memory

into block cache at the time of flush. On a flush, the data blocks that are

in memory (in memtables) get flushed to the device. If using Direct IO,

additional IO is incurred to read this data back into memory again, which

is avoided by enabling the newly added option.

Right now, this is enabled only for flush for data blocks. We plan to

expand this option to cover compactions in the future and for other types

of blocks.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8242

Test Plan: Add new unit test

Reviewed By: anand1976

Differential Revision: D28521703

Pulled By: akankshamahajan15

fbshipit-source-id: 7219d6958821cedce689a219c3963a6f1a9d5f05

Summary:

RocksDB logs a warning if WAL truncation on DB open fails. It's possible that on some file systems, truncation is not required and they would return ```Status::NotSupported()``` for ```ReopenWritableFile```. Don't log a warning in such cases.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8414

Reviewed By: akankshamahajan15

Differential Revision: D29181738

Pulled By: anand1976

fbshipit-source-id: 6e01e9117e1e4c1d67daa4dcee7fa59d06e057a7

Summary:

This is the next part of the ImmutableOptions cleanup. After changing the use of ImmutableCFOptions to ImmutableOptions, there were places in the code that did something like "ImmutableOptions* immutable_cf_options", where "cf" referred to the "old" type.

This change simply renames the variables to match the current type. No new functionality is introduced.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8409

Reviewed By: pdillinger

Differential Revision: D29166248

Pulled By: mrambacher

fbshipit-source-id: 96de97f8e743f5c5160f02246e3ed8269556dc6f

Summary:

This reverts commit 9167ece586.

It was found to reliably trip a compaction picking conflict assertion in a MyRocks unit test. We don't understand why yet so reverting in the meantime.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8410

Test Plan: `make check -j48`

Reviewed By: jay-zhuang

Differential Revision: D29150300

Pulled By: ajkr

fbshipit-source-id: 2de8664f355d6da015e84e5fec2e3f90f49741c8

Summary:

- Added CreateFromString method to Env and FileSystem to replace LoadEnv/Load. This method/signature is a precursor to making these classes extend Customizable.

- Added CreateFromSystem to Env. This method standardizes creating an Env from the environment variables. Previously, some places would check TEST_ENV_URI and others would also check TEST_FS_URI. Now the code is more common/standardized.

- Added CreateFromFlags to Env. This method allows an Env to be created from string options (such as GFLAGS options) in a more standard way.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8174

Reviewed By: zhichao-cao

Differential Revision: D28999603

Pulled By: mrambacher

fbshipit-source-id: 88e6911e7e91f908458a7fe10a20e93ecbc275fb

Summary:

(1) Make CompactionService derive from Customizable by defining two extra functions that are needed, as described in the customizable.h comment section

(2) Revise the MyTestCompactionService class in compaction_service_test.cc to satisfy the class inheritance requirement

(3) Specify the namespace of ToString() in compaction_service_test.cc to avoid function collision with CompactionService's ancestor classes

Tests run:

make -j24 compaction_service_test

./compaction_service_test

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8395

Reviewed By: jay-zhuang

Differential Revision: D29076068

Pulled By: hx235

fbshipit-source-id: c130100fa466939b3137e917f5fdc4b2ae8e37d4

Summary:

If the block Cache is full with strict_capacity_limit=false,

then our CacheEntryStatsCollector could be immediately evicted on

release, so iterating through column families with shared block cache

could trigger re-scan for each CF. This change fixes that problem by

pinning the CacheEntryStatsCollector from InternalStats so that it's not

evicted.

I had originally thought that this object could participate in LRU like

everything else, but even though a re-load+re-scan only touches memory,

it can be orders of magnitude more expensive than other cache misses.

One service in Facebook has scans that take ~20s over 100GB block cache

that is mostly 4KB entries. (The up-side of this bug and https://github.com/facebook/rocksdb/issues/8369 is that

we had a natural experiment on the effect on some service metrics even

with block cache scans running continuously in the background--a kind

of worst case scenario. Metrics like latency were not affected enough

to trigger warnings.)

Other smaller fixes:

20s is already a sizable portion of 600s stats dump period, or 180s

default max age to force re-scan, so added logic to ensure that (for

each block cache) we don't spend more than 0.2% of our background thread

time scanning it. Nevertheless, "foreground" requests for cache entry

stats (calls to `db->GetMapProperty(DB::Properties::kBlockCacheEntryStats)`)

are permitted to consume more CPU.

Renamed field to cache_entry_stats_ to match code style.

This change is intended for patching in 6.21 release.
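
The 0.2% budget mentioned above works out to a simple ratio: 0.2% is 1/500, so a scan that took 20s should not be repeated in the background for about 10,000s. The helper below is a hypothetical sketch of that arithmetic, not the logic in the patch; the function name, its parameters, and the way the max age is folded in are assumptions.

```cpp
#include <algorithm>
#include <cstdint>

// 0.2% of elapsed time equals 1/500, so wait at least 500x the duration of
// the previous scan before scanning again.
constexpr uint64_t kMinIntervalFactor = 500;

// Hypothetical helper: decides whether a background rescan is allowed.
bool BackgroundRescanAllowed(uint64_t elapsed_since_last_scan_secs,
                             uint64_t last_scan_duration_secs,
                             uint64_t max_age_secs) {
  const uint64_t min_interval =
      std::max(max_age_secs, last_scan_duration_secs * kMinIntervalFactor);
  return elapsed_since_last_scan_secs >= min_interval;
}
```

With a 20s scan and a 180s max age, a rescan 600s later is refused, while one 10,000s later is allowed. A fast scan is limited only by the max age.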

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8385

Test Plan:

unit test expanded to cover new logic (detect regression),

some manual testing with db_bench

Reviewed By: ajkr

Differential Revision: D29042759

Pulled By: pdillinger

fbshipit-source-id: 236faa902397f50038c618f50fbc8cf3f277308c

Summary:

Recalculate the total size after generating new SST files.

Newly generated files might have a different size than the previous time, which

could cause the test to fail.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8396

Test Plan:

```
gtest-parallel ./db_compaction_test \
  --gtest_filter=DBCompactionTest.ManualCompactionMax -r 1000 -w 100
```

Reviewed By: akankshamahajan15

Differential Revision: D29083299

Pulled By: jay-zhuang

fbshipit-source-id: 49d4bd619cefc0f9a1f452f8759ff4c2ba1b6fdb

Summary:

The subcompaction boundary picking logic does not currently guarantee

that all user keys that differ only by timestamp get processed by the same

subcompaction. This can cause issues with the `CompactionIterator` state

machine: for instance, one subcompaction that processes a subset of such KVs

might drop a tombstone based on the KVs it sees, while in reality the

tombstone might not have been eligible to be optimized out.

(See also https://github.com/facebook/rocksdb/issues/6645, which adjusted the way compaction inputs are picked for the

same reason.)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8393

Test Plan: Ran `make check` and the crash test script with timestamps enabled.

Reviewed By: jay-zhuang

Differential Revision: D29071635

Pulled By: ltamasi

fbshipit-source-id: f6c72442122b4e581871e096fabe3876a9e8a5a6

Summary:

DBImpl::DumpStats is supposed to do this:

* Dump DB stats to LOG

* For each CF, dump CFStatsNoFileHistogram to LOG

* For each CF, dump CFFileHistogram to LOG

Instead, due to a longstanding bug from 2017 (https://github.com/facebook/rocksdb/issues/2126), it would dump

CFStats, which includes both CFStatsNoFileHistogram and CFFileHistogram,

in both loops, resulting in near-duplicate output.

This fixes the bug.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8380

Test Plan: Manual inspection of LOG after db_bench

Reviewed By: jay-zhuang

Differential Revision: D29017535

Pulled By: pdillinger

fbshipit-source-id: 3010604c4a629a80347f129cd746ce9b0d0cbda6

Summary:

In the current logic, any IO error with the retryable flag set to true is handled by special logic, and in most cases StartRecoverFromRetryableBGIOError is called to do the auto resume. If a NoSpace error with the retryable flag set occurs during a WAL write, it is mapped to a hard error, which triggers the auto recovery. During the recovery process, if writes continue and append to the WAL, the write process sees that bg_error is set to HardError and calls WriteStatusCheck(), which calls SetBGError() with Status (not IOStatus). This redirects to the regular SetBGError interface, in which recovery_error_ is set to the corresponding error. With recovery_error_ set, the auto resume thread created in StartRecoverFromRetryableBGIOError keeps failing as long as the user keeps trying to write.

To fix this issue, all NoSpace errors (whether or not the retryable flag is set) are redirected to the regular SetBGError, and RecoverFromNoSpace() does the recovery job, which calls SstFileManager::StartErrorRecovery().

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8376

Test Plan: make check and added the new testing case

Reviewed By: anand1976

Differential Revision: D29071828

Pulled By: zhichao-cao

fbshipit-source-id: 7171d7e14cc4620fdab49b7eff7a2fe9a89942c2

Summary:

This PR adds support for the Merge operation in Integrated BlobDB with base values (i.e., DB::Put). Merged values can be retrieved through DB::Get, DB::MultiGet, DB::GetMergeOperands, and Iterator operations.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8292

Test Plan: Add new unit tests

Reviewed By: ltamasi

Differential Revision: D28415896

Pulled By: akankshamahajan15

fbshipit-source-id: e9b3478bef51d2f214fb88c31ed3c8d2f4a531ff

Summary:

Changed fprintf function to fputc in ApplyVersionEdit, and replaced null characters with whitespaces.

Added unit test in ldb_test.py - verifies that manifest_dump --verbose output is correct when keys and values containing null characters are inserted.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8378

Reviewed By: pdillinger

Differential Revision: D29034584

Pulled By: bjlemaire

fbshipit-source-id: 50833687a8a5f726e247c38457eadc3e6dbab862

Summary:

Currently, we either use the file system inode or a monotonically incrementing runtime ID as the block cache key prefix. However, if we use a monotonically incrementing runtime ID (in the case that the file system does not support inode id generation), in some cases, it cannot ensure uniqueness (e.g., we have secondary cache migrated from host to host). We use DbSessionID (20 bytes) + current file number (at most 10 bytes) as the new cache block key prefix when the secondary cache is enabled. This accommodates scenarios such as transfer of cache state across hosts.
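
The prefix layout described above can be sketched as a session ID followed by a variable-length file number. This is an illustration only: `MakeCacheKeyPrefix` and `AppendVarint64` are hypothetical names, and the base-128 varint encoding is an assumption chosen because it matches the "at most 10 bytes" bound for a 64-bit number.

```cpp
#include <cstdint>
#include <string>

// Appends `v` as a base-128 varint (1 to 10 bytes for a 64-bit value).
void AppendVarint64(std::string* dst, uint64_t v) {
  while (v >= 0x80) {
    dst->push_back(static_cast<char>((v & 0x7F) | 0x80));
    v >>= 7;
  }
  dst->push_back(static_cast<char>(v));
}

// Hypothetical helper: builds the prefix from a 20-byte session ID and the
// file number. The prefix does not depend on inodes or per-process counters,
// so it stays meaningful when cached data moves to another host.
std::string MakeCacheKeyPrefix(const std::string& db_session_id,
                               uint64_t file_number) {
  std::string prefix = db_session_id;
  AppendVarint64(&prefix, file_number);
  return prefix;
}
```

A 20-character session ID with file number 10 yields a 21-byte prefix; the largest 64-bit file number yields 30 bytes.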

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8360

Test Plan: add the test to lru_cache_test

Reviewed By: pdillinger

Differential Revision: D29006215

Pulled By: zhichao-cao

fbshipit-source-id: 6cff686b38d83904667a2bd39923cd030df16814

Summary:

Logically, subcompactions process a key range [start, end); however, the way

this is currently implemented is that the `CompactionIterator` for any given

subcompaction keeps processing key-values until it actually outputs a key that

is out of range, which is then discarded. Instead of doing this, the patch

introduces a new type of internal iterator called `ClippingIterator` which wraps

another internal iterator and "clips" its range of key-values so that any KVs

returned are strictly in the [start, end) interval. This does eliminate a (minor)

inefficiency by stopping processing in subcompactions exactly at the limit;

however, the main motivation is related to BlobDB: namely, we need this to be

able to measure the amount of garbage generated by a subcompaction

precisely and prevent off-by-one errors.
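
A minimal sketch of the clipping idea, using a sorted vector in place of a real internal iterator. `ToyClippingIterator` and `CollectKeys` are hypothetical and only mimic a small subset of an iterator interface; keys are compared as plain strings.

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

using KV = std::pair<std::string, std::string>;

// Exposes only the entries of `sorted` whose keys fall in [start, end).
class ToyClippingIterator {
 public:
  // `sorted` must be ordered by key and must outlive the iterator.
  ToyClippingIterator(const std::vector<KV>* sorted, std::string start,
                      std::string end)
      : kvs_(sorted), start_(std::move(start)), end_(std::move(end)) {}

  // Positions at the first key that is >= start.
  void SeekToFirst() {
    pos_ = 0;
    while (pos_ < kvs_->size() && (*kvs_)[pos_].first < start_) {
      ++pos_;
    }
  }

  // Becomes false as soon as the key reaches `end`, so callers never see an
  // out-of-range entry.
  bool Valid() const {
    if (pos_ >= kvs_->size()) {
      return false;
    }
    const std::string& k = (*kvs_)[pos_].first;
    return k >= start_ && k < end_;
  }

  void Next() { ++pos_; }
  const std::string& key() const { return (*kvs_)[pos_].first; }

 private:
  const std::vector<KV>* kvs_;
  std::string start_;
  std::string end_;
  size_t pos_ = 0;
};

// Collects every key the clipped iterator exposes.
std::vector<std::string> CollectKeys(ToyClippingIterator* it) {
  std::vector<std::string> keys;
  for (it->SeekToFirst(); it->Valid(); it->Next()) {
    keys.push_back(it->key());
  }
  return keys;
}
```

Clipping the keys `a`, `b`, `c`, `d` to the range `[b, d)` yields `b` and `c` only, so the consumer never processes `d`.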

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8327

Test Plan: `make check`

Reviewed By: siying

Differential Revision: D28761541

Pulled By: ltamasi

fbshipit-source-id: ee0e7229f04edabbc7bed5adb51771fbdc287f69

Summary:

In final polishing of https://github.com/facebook/rocksdb/issues/8297 (after most manual testing), I

broke my own caching layer by sanitizing an input parameter with

std::min(0, x) instead of std::max(0, x). I resisted unit testing the

timing part of the result caching because historically, these tests

are either flaky or difficult to write, and this was not a correctness

issue. This bug is essentially unnoticeable with a small number

of column families but can explode background work with a

large number of column families.

This change fixes the logical error, removes some unnecessary related

optimization, and adds mock time/sleeps to the unit test to ensure we

can cache hit within the age limit.
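
The logical error is easy to reproduce in isolation. The function names below are hypothetical; the sketch only contrasts the two standard-library calls named in the summary.

```cpp
#include <algorithm>

// Intended behavior: clamp negative inputs to zero, keep positive ones.
int SanitizeNonNegative(int x) { return std::max(0, x); }

// The buggy variant: every positive input collapses to zero, so a cache
// age limit sanitized this way would always read as "no caching allowed".
int BuggySanitize(int x) { return std::min(0, x); }
```

With an input of 300, the correct version returns 300 while the buggy one returns 0, which is consistent with cached results never being reused.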

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8369

Test Plan: added time testing logic to existing unit test

Reviewed By: ajkr

Differential Revision: D28950892

Pulled By: pdillinger

fbshipit-source-id: e79cd4ff3eec68fd0119d994f1ed468c38026c3b

Summary:

Added the ability to cancel an in-progress range compaction by storing to an atomic "canceled" variable pointed to within the CompactRangeOptions structure.

Tested via two tests added to db_test2.cc.
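
The cancellation mechanism can be sketched as a loop that polls an atomic flag between units of work. This is a toy model, not the RocksDB code path: `ToyCompactRangeOptions` and `RunToyCompaction` are hypothetical, and only the idea of a pointer to an atomic "canceled" variable inside the options struct comes from the summary.

```cpp
#include <atomic>
#include <cstddef>
#include <functional>

// Hypothetical options struct carrying a pointer to the caller's flag.
struct ToyCompactRangeOptions {
  std::atomic<bool>* canceled = nullptr;
};

// Runs up to `total_steps` units of work and returns how many completed.
// The flag is checked before each step, so a store from another thread (or
// from within a step) stops the loop at the next check.
size_t RunToyCompaction(const ToyCompactRangeOptions& opts, size_t total_steps,
                        const std::function<void(size_t)>& do_step) {
  size_t done = 0;
  while (done < total_steps) {
    if (opts.canceled != nullptr &&
        opts.canceled->load(std::memory_order_acquire)) {
      break;
    }
    do_step(done);
    ++done;
  }
  return done;
}
```

The caller owns the flag and can set it at any time; in this sketch the work stops at the next step boundary rather than immediately.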

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8351

Reviewed By: ajkr

Differential Revision: D28808894

Pulled By: ddevec

fbshipit-source-id: cb321361c9e23b084b188bb203f11c375a22c2dd

Summary:

This is a duplicate of https://github.com/facebook/rocksdb/issues/4948 by mzhaom to fix tests after rebase.

This change is a follow-up to https://github.com/facebook/rocksdb/issues/4927, which made this possible by allowing tombstone dropping/seqnum zeroing optimizations on the last key in the compaction. Now the `largest_seqno != 0` condition suffices to prevent snapshot release triggered compaction from entering an infinite loop.

The issues caused by the extraneous condition `level_and_file.second->num_deletions > 1` are:

- files could have `largest_seqno > 0` forever making it impossible to tell they cannot contain any covering keys

- it doesn't trigger compaction when there are many overwritten keys. Some MyRocks use cases actually don't use Delete but instead call Put with an empty value to "delete" keys, so we'd like to be able to trigger compaction in this case too.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8357

Test Plan: - make check

Reviewed By: jay-zhuang

Differential Revision: D28855340

Pulled By: ajkr

fbshipit-source-id: a261b51eecafec492499e6d01e8e43112f801798

Summary:

I noticed ```openat``` system call with ```O_WRONLY``` flag and ```sync_file_range``` and ```truncate``` on WAL file when using ```rocksdb::DB::OpenForReadOnly``` by way of ```db_bench --readonly=true --benchmarks=readseq --use_existing_db=1 --num=1 ...```

Noticed in ```strace``` after seeing the last modification time of the WAL file change after each run (with ```--readonly=true```).

I think this was introduced by 7d7f14480e from https://github.com/facebook/rocksdb/pull/8122

I added a test to catch the WAL file being truncated and the modification time on it changing.

I am not sure if a mock filesystem with mock clock could be used to avoid having to sleep 1.1s.

The test could also check the set of files is the same and that the sizes are also unchanged.

Before:

```

[ RUN ] DBBasicTest.ReadOnlyReopenMtimeUnchanged

db/db_basic_test.cc:182: Failure

Expected equality of these values:

file_mtime_after_readonly_reopen

Which is: 1621611136

file_mtime_before_readonly_reopen

Which is: 1621611135

file is: 000010.log

[ FAILED ] DBBasicTest.ReadOnlyReopenMtimeUnchanged (1108 ms)

```

After:

```

[ RUN ] DBBasicTest.ReadOnlyReopenMtimeUnchanged

[ OK ] DBBasicTest.ReadOnlyReopenMtimeUnchanged (1108 ms)

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8313

Reviewed By: pdillinger

Differential Revision: D28656925

Pulled By: jay-zhuang

fbshipit-source-id: ea9e215cb53e7c830e76bc5fc75c45e21f12a1d6

Summary:

https://github.com/facebook/rocksdb/pull/8288 introduced a bug: when seeking to a key, SequenceIterWrapper should advance with Next using the internal key comparator rather than the user comparator. This change fixes it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8328

Test Plan: Pass all existing tests

Reviewed By: ltamasi

Differential Revision: D28647263

fbshipit-source-id: 4081d684fd8a86d248c485ef8a1563c7af136447

Summary:

Error:

```

db/db_compaction_test.cc:5211:47: warning: The left operand of '*' is a garbage value

uint64_t total = (l1_avg_size + l2_avg_size * 10) * 10;

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8325

Test Plan: `$ make analyze`

Reviewed By: pdillinger

Differential Revision: D28620916

Pulled By: jay-zhuang

fbshipit-source-id: f6d58ab84eefbcc905cda45afb9522b0c6d230f8

Summary:

With Ribbon filter work and possible variance in actual bits

per key (or prefix; general term "entry") to achieve certain FP rates,

I've received a request to be able to track actual bits per key in

generated filters. This change adds a num_filter_entries table

property, which can be combined with filter_size to get bits per key

(entry).

This can vary from num_entries in at least these ways:

* Different versions of same key are only counted once in filters.

* With prefix filters, several user keys map to the same filter entry.

* A single filter can include both prefixes and user keys.

Note that FilterBlockBuilder::NumAdded() didn't do anything useful

except distinguish empty from non-empty.
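
The bits-per-entry calculation mentioned above is a single division. The helper below is a hypothetical sketch that assumes filter_size is reported in bytes.

```cpp
#include <cstdint>

// Hypothetical helper: actual bits per filter entry for one table.
// Assumes `filter_size_bytes` is the filter size in bytes.
double FilterBitsPerEntry(uint64_t filter_size_bytes,
                          uint64_t num_filter_entries) {
  if (num_filter_entries == 0) {
    return 0.0;  // empty filter: avoid dividing by zero
  }
  return static_cast<double>(filter_size_bytes) * 8.0 /
         static_cast<double>(num_filter_entries);
}
```

For example, a 1250-byte filter holding 1000 entries works out to 10 bits per entry.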

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8323

Test Plan: basic unit test included, others updated

Reviewed By: jay-zhuang

Differential Revision: D28596210

Pulled By: pdillinger

fbshipit-source-id: 529a111f3c84501e5a470bc84705e436ee68c376

Summary:

Fix a bug where, for manual compaction, `max_compaction_bytes` only

limited the SST files from the input level, but not the overlapping files on the output

level.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8269

Test Plan: `make check`

Reviewed By: ajkr

Differential Revision: D28231044

Pulled By: jay-zhuang

fbshipit-source-id: 9d7d03004f30cc4b1b9819830141436907554b7c

Summary:

When a memtable is flushed, the flush validates the number of entries it reads by comparing it with the number of entries inserted into the memtable. This serves as one sanity check against memory corruption. This change will also allow more counters to be added in the future for better validation.
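
The check amounts to comparing two counters at the end of a flush. The sketch below is hypothetical (`ToyStatus` and `ValidateFlushedEntryCount` are not RocksDB names) and only illustrates the comparison and the kind of error it would surface.

```cpp
#include <cstdint>
#include <string>

// Minimal stand-in for a status object.
struct ToyStatus {
  bool ok;
  std::string message;
};

// Compares the number of entries inserted into the memtable with the number
// of entries the flush actually read back from it.
ToyStatus ValidateFlushedEntryCount(uint64_t num_inserted, uint64_t num_read) {
  if (num_inserted == num_read) {
    return {true, ""};
  }
  return {false, "Corruption: expected " + std::to_string(num_inserted) +
                     " entries in memtable, but read " +
                     std::to_string(num_read)};
}
```

A mismatch in either direction is reported as a failure, since both a missing and an extra entry would indicate that the in-memory structure is not what was written.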

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8288

Test Plan: Pass all existing tests

Reviewed By: ajkr

Differential Revision: D28369194

fbshipit-source-id: 7ff870380c41eab7f99eee508550dcdce32838ad

Summary:

The test wants to make sure there's no compaction during `AddFile`

(between `DBImpl::AddFile:MutexLock` and `DBImpl::AddFile:MutexUnlock`)

but the mutex could be unlocked by `EnterUnbatched()`.

Move the lock start point after bumping the ingest file number.

Also fix the deadlock when ASSERT fails.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8307

Reviewed By: ajkr

Differential Revision: D28479849

Pulled By: jay-zhuang

fbshipit-source-id: b3c50f66aa5d5f59c5c27f815bfea189c4cd06cb

Summary:

This change gathers and publishes statistics about the

kinds of items in block cache. This is especially important for

profiling relative usage of cache by index vs. filter vs. data blocks.

It works by iterating over the cache during periodic stats dump

(InternalStats, stats_dump_period_sec) or on demand when

DB::Get(Map)Property(kBlockCacheEntryStats) is called, except that for

efficiency and sharing among column families, saved data from

the last scan is used when the data is not considered too old.

The new information can be seen in info LOG, for example:

Block cache LRUCache@0x7fca62229330 capacity: 95.37 MB collections: 8 last_copies: 0 last_secs: 0.00178 secs_since: 0

Block cache entry stats(count,size,portion): DataBlock(7092,28.24 MB,29.6136%) FilterBlock(215,867.90 KB,0.888728%) FilterMetaBlock(2,5.31 KB,0.00544%) IndexBlock(217,180.11 KB,0.184432%) WriteBuffer(1,256.00 KB,0.262144%) Misc(1,0.00 KB,0%)

And also through DB::GetProperty and GetMapProperty (here using

ldb just for demonstration):

$ ./ldb --db=/dev/shm/dbbench/ get_property rocksdb.block-cache-entry-stats

rocksdb.block-cache-entry-stats.bytes.data-block: 0

rocksdb.block-cache-entry-stats.bytes.deprecated-filter-block: 0

rocksdb.block-cache-entry-stats.bytes.filter-block: 0

rocksdb.block-cache-entry-stats.bytes.filter-meta-block: 0

rocksdb.block-cache-entry-stats.bytes.index-block: 178992

rocksdb.block-cache-entry-stats.bytes.misc: 0

rocksdb.block-cache-entry-stats.bytes.other-block: 0

rocksdb.block-cache-entry-stats.bytes.write-buffer: 0

rocksdb.block-cache-entry-stats.capacity: 8388608

rocksdb.block-cache-entry-stats.count.data-block: 0

rocksdb.block-cache-entry-stats.count.deprecated-filter-block: 0

rocksdb.block-cache-entry-stats.count.filter-block: 0

rocksdb.block-cache-entry-stats.count.filter-meta-block: 0

rocksdb.block-cache-entry-stats.count.index-block: 215

rocksdb.block-cache-entry-stats.count.misc: 1

rocksdb.block-cache-entry-stats.count.other-block: 0

rocksdb.block-cache-entry-stats.count.write-buffer: 0

rocksdb.block-cache-entry-stats.id: LRUCache@0x7f3636661290

rocksdb.block-cache-entry-stats.percent.data-block: 0.000000

rocksdb.block-cache-entry-stats.percent.deprecated-filter-block: 0.000000

rocksdb.block-cache-entry-stats.percent.filter-block: 0.000000

rocksdb.block-cache-entry-stats.percent.filter-meta-block: 0.000000

rocksdb.block-cache-entry-stats.percent.index-block: 2.133751

rocksdb.block-cache-entry-stats.percent.misc: 0.000000

rocksdb.block-cache-entry-stats.percent.other-block: 0.000000

rocksdb.block-cache-entry-stats.percent.write-buffer: 0.000000

rocksdb.block-cache-entry-stats.secs_for_last_collection: 0.000052

rocksdb.block-cache-entry-stats.secs_since_last_collection: 0

Solution detail - We need some way to flag what kind of blocks each

entry belongs to, preferably without changing the Cache API.

One of the complications is that Cache is a general interface that could

have other users that don't adhere to whichever convention we decide

on for keys and values. Or we would pay for an extra field in the Handle

that would only be used for this purpose.

This change uses a back-door approach, the deleter, to indicate the

"role" of a Cache entry (in addition to the value type, implicitly).

This has the added benefit of ensuring proper code origin whenever we

recognize a particular role for a cache entry; if the entry came from

some other part of the code, it will use an unrecognized deleter, which

we simply attribute to the "Misc" role.
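To make the deleter idea concrete, here is a small self-contained sketch. All names (`Role`, `Deleter`, `GetDeleterForRole`, `RoleOf`) are illustrative stand-ins rather than the internal API added by this change. It relies on each template instantiation being a distinct function with its own address; a linker setting that folds identical functions would break that assumption.

```cpp
#include <map>
#include <string>

// Illustrative stand-ins; not the actual internal API.
enum class Role { kDataBlock, kFilterBlock, kIndexBlock, kMisc };

using Deleter = void (*)(const std::string& key, void* value);

struct DataBlock { int payload; };
struct FilterBlock { int payload; };

// One distinct function per (value type, role) pair, so the function's
// address can serve as a tag for the role.
template <typename T, Role R>
void DeleteEntry(const std::string& /*key*/, void* value) {
  delete static_cast<T*>(value);
}

// Registry mapping known deleter addresses to roles.
std::map<Deleter, Role>& Registry() {
  static std::map<Deleter, Role> registry;
  return registry;
}

// Returns the deleter for (T, R) and registers it on the way.
template <typename T, Role R>
Deleter GetDeleterForRole() {
  Deleter d = &DeleteEntry<T, R>;
  Registry()[d] = R;
  return d;
}

// Entries inserted with an unrecognized deleter are attributed to kMisc.
Role RoleOf(Deleter d) {
  std::map<Deleter, Role>::const_iterator it = Registry().find(d);
  return it == Registry().end() ? Role::kMisc : it->second;
}
```

A stats scan only needs the deleter of each entry to classify it, so neither the Cache API nor the Handle layout has to change.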

An internal API makes for simple instantiation and automatic

registration of Cache deleters for a given value type and "role".

Another internal API, CacheEntryStatsCollector, solves the problem of

caching the results of a scan and sharing them, to ensure scans are

neither excessive nor redundant so as not to harm Cache performance.

Because code is added to BlocklikeTraits, it is pulled out of

block_based_table_reader.cc into its own file.

This is a reformulation of https://github.com/facebook/rocksdb/issues/8276, without the type checking option

(could still be added), and with actual stat gathering.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8297

Test Plan: manual testing with db_bench, and a couple of basic unit tests

Reviewed By: ltamasi

Differential Revision: D28488721

Pulled By: pdillinger

fbshipit-source-id: 472f524a9691b5afb107934be2d41d84f2b129fb

Summary:

Some file systems (especially distributed FS) do not support reopening a file for writing. The ExternalSstFileIngestionJob calls ReopenWritableFile in order to sync the ingested file, which typically makes sense only on a local file system with a page cache (i.e Posix). So this change tries to sync the ingested file only if ReopenWritableFile doesn't return Status::NotSupported().
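The control flow can be sketched as follows. `Code` and `SyncIngestedFile` are hypothetical; the two callbacks stand in for ReopenWritableFile and the subsequent sync. Propagating other reopen errors is an assumption of this sketch, not something the description above states.

```cpp
#include <functional>

// Hypothetical status codes standing in for Status::OK(),
// Status::NotSupported() and any other failure.
enum class Code { kOk, kNotSupported, kIOError };

// reopen models ReopenWritableFile; sync models syncing the reopened file.
// Both are injected so the sketch stays self-contained.
Code SyncIngestedFile(const std::function<Code()>& reopen,
                      const std::function<Code()>& sync) {
  const Code s = reopen();
  if (s == Code::kOk) {
    return sync();
  }
  if (s == Code::kNotSupported) {
    return Code::kOk;  // skip the sync instead of failing the ingestion
  }
  return s;  // assumption: other reopen errors are still reported
}
```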

Tests:

Add a new unit test in external_sst_file_basic_test

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8296

Reviewed By: jay-zhuang

Differential Revision: D28420865

Pulled By: anand1976

fbshipit-source-id: 380e7f5ff95324997f7a59864a9ac96ebbd0100c

Summary:

This patch does two things:

1) Introduces some aliases in order to eliminate/prevent long-winded type names

w/r/t the internal table property collectors (see e.g.

`std::vector<std::unique_ptr<IntTblPropCollectorFactory>>`).

2) Makes it possible to apply only a subrange of table property collectors during

table building by turning `TableBuilderOptions::int_tbl_prop_collector_factories`

from a pointer to a `vector` into a range (i.e. a pair of iterators).

Rationale: I plan to introduce a BlobDB related table property collector, which

should only be applied during table creation if blob storage is enabled at the moment

(which can be changed dynamically). This change will make it possible to include/

exclude the BlobDB related collector as needed without having to introduce

a second `vector` of collectors in `ColumnFamilyData` with pretty much the same

contents.
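A small sketch of the iterator-pair idea, using hypothetical stand-in types (`CollectorFactory`, `Factories`, `FactoryRange`). It assumes the optional collector is stored last in the vector, so excluding it only means shortening the range by one.

```cpp
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for an internal table property collector factory.
struct CollectorFactory {
  std::string name;
};

using Factories = std::vector<std::unique_ptr<CollectorFactory>>;
using FactoryRange =
    std::pair<Factories::const_iterator, Factories::const_iterator>;

// Builds a range over all factories, optionally leaving out the last one
// (assumed here to be the optional, e.g. BlobDB related, collector).
FactoryRange MakeRange(const Factories& all, bool include_last) {
  Factories::const_iterator end = all.end();
  if (!include_last && !all.empty()) {
    --end;
  }
  return FactoryRange(all.begin(), end);
}

// Example consumer: only visits the factories inside the range.
std::vector<std::string> NamesInRange(const FactoryRange& range) {
  std::vector<std::string> names;
  for (Factories::const_iterator it = range.first; it != range.second; ++it) {
    names.push_back((*it)->name);
  }
  return names;
}
```

The vector itself is never copied or split; callers just choose where the range ends.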

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8298

Test Plan: `make check`

Reviewed By: jay-zhuang

Differential Revision: D28430910

Pulled By: ltamasi

fbshipit-source-id: a81d28f2c59495865300f43deb2257d2e6977c8e

Summary:

As a part of tiered storage, writing temperature information to the manifest is needed so that after DB recovery, RocksDB still has the tiering information needed to implement further functionality.

Also fix some issues in simulated hybrid FS.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8284

Test Plan: Add a new unit test to validate that the information is indeed written and read back.

Reviewed By: zhichao-cao

Differential Revision: D28335801

fbshipit-source-id: 56aeb2e6ea090be0200181dd968c8a7278037def

Summary:

Defined the abstract interface for a secondary cache in include/rocksdb/secondary_cache.h, and updated LRUCacheOptions to take a std::shared_ptr<SecondaryCache>. An item is initially inserted into the LRU (primary) cache. When it ages out and is evicted from memory, it is inserted into the secondary cache. On an LRU cache miss and a successful lookup in the secondary cache, the item is promoted to the LRU cache. Only synchronous lookup is supported currently. The secondary cache would be used to implement a persistent (flash cache) or compressed cache.
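The demote-on-eviction and promote-on-hit flow can be modeled with a toy two-tier cache. `TieredCache` is a hypothetical class that does not use the SecondaryCache interface; the secondary tier is just an in-memory map, and removing an entry from it on promotion is an assumption of this sketch.

```cpp
#include <cstddef>
#include <list>
#include <string>
#include <unordered_map>
#include <utility>

// Toy model: a small LRU primary tier backed by an unbounded secondary map.
class TieredCache {
 public:
  explicit TieredCache(size_t primary_capacity)
      : capacity_(primary_capacity) {}

  void Insert(const std::string& key, const std::string& value) {
    secondary_.erase(key);  // avoid keeping a stale copy in the lower tier
    auto it = index_.find(key);
    if (it != index_.end()) {
      lru_.erase(it->second);
      index_.erase(it);
    }
    lru_.emplace_front(key, value);
    index_[key] = lru_.begin();
    while (lru_.size() > capacity_) {
      // Entries that age out of the primary tier are demoted.
      const Entry& victim = lru_.back();
      secondary_[victim.first] = victim.second;
      index_.erase(victim.first);
      lru_.pop_back();
    }
  }

  bool Lookup(const std::string& key, std::string* value) {
    auto it = index_.find(key);
    if (it != index_.end()) {
      lru_.splice(lru_.begin(), lru_, it->second);  // mark most recently used
      *value = it->second->second;
      return true;
    }
    auto sec = secondary_.find(key);
    if (sec == secondary_.end()) {
      return false;
    }
    *value = sec->second;
    Insert(key, *value);  // promote back into the primary tier
    return true;
  }

  bool InPrimary(const std::string& key) const {
    return index_.count(key) > 0;
  }
  bool InSecondary(const std::string& key) const {
    return secondary_.count(key) > 0;
  }

 private:
  using Entry = std::pair<std::string, std::string>;
  size_t capacity_;
  std::list<Entry> lru_;
  std::unordered_map<std::string, std::list<Entry>::iterator> index_;
  std::unordered_map<std::string, std::string> secondary_;
};
```

With a primary capacity of two, inserting a third key pushes the oldest key into the secondary tier, and a later lookup of that key brings it back while demoting another entry.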

Tests:

Results from cache_bench and db_bench don't show any regression due to these changes.

cache_bench results before and after this change -

Command

```./cache_bench -ops_per_thread=10000000 -threads=1```

Before

```Complete in 40.688 s; QPS = 245774```

```Complete in 40.486 s; QPS = 246996```

```Complete in 42.019 s; QPS = 237989```

After

```Complete in 40.672 s; QPS = 245869```

```Complete in 44.622 s; QPS = 224107```

```Complete in 42.445 s; QPS = 235599```

db_bench results before this change, and with this change + https://github.com/facebook/rocksdb/issues/8213 and https://github.com/facebook/rocksdb/issues/8191 -

Commands

```./db_bench --benchmarks="fillseq,compact" -num=30000000 -key_size=32 -value_size=256 -use_direct_io_for_flush_and_compaction=true -db=/home/anand76/nvm_cache/db -partition_index_and_filters=true```

```./db_bench -db=/home/anand76/nvm_cache/db -use_existing_db=true -benchmarks=readrandom -num=30000000 -key_size=32 -value_size=256 -use_direct_reads=true -cache_size=1073741824 -cache_numshardbits=6 -cache_index_and_filter_blocks=true -read_random_exp_range=17 -statistics -partition_index_and_filters=true -threads=16 -duration=300```

Before

```

DB path: [/home/anand76/nvm_cache/db]

readrandom : 80.702 micros/op 198104 ops/sec; 54.4 MB/s (3708999 of 3708999 found)

```

```

DB path: [/home/anand76/nvm_cache/db]

readrandom : 87.124 micros/op 183625 ops/sec; 50.4 MB/s (3439999 of 3439999 found)

```

After

```

DB path: [/home/anand76/nvm_cache/db]

readrandom : 77.653 micros/op 206025 ops/sec; 56.6 MB/s (3866999 of 3866999 found)

```

```

DB path: [/home/anand76/nvm_cache/db]

readrandom : 84.962 micros/op 188299 ops/sec; 51.7 MB/s (3535999 of 3535999 found)

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8271

Reviewed By: zhichao-cao

Differential Revision: D28357511

Pulled By: anand1976

fbshipit-source-id: d1cfa236f00e649a18c53328be10a8062a4b6da2

Summary:

The functions will be used for remote compaction parameter

input and result.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8247

Test Plan: `make check`

Reviewed By: ajkr

Differential Revision: D28104680

Pulled By: jay-zhuang

fbshipit-source-id: c0a5178e6277125118384278efea2acbf90aa6cb

Summary:

Adds a new Cache::ApplyToAllEntries API that we expect to use

(in follow-up PRs) for efficiently gathering block cache statistics.

Notable features vs. old ApplyToAllCacheEntries:

* Includes key and deleter (in addition to value and charge). We could

have passed in a Handle but then more virtual function calls would be

needed to get the "fields" of each entry. We expect to use the 'deleter'

to identify the origin of entries, perhaps even more.

* Heavily tuned to minimize latency impact on operating cache. It

does this by iterating over small sections of each cache shard while

cycling through the shards.

* Supports tuning roughly how many entries to operate on for each

lock acquire and release, to control the impact on the latency of other

operations without excessive lock acquire & release. The right balance

can depend on the cost of the callback. Good default seems to be

around 256.

* There should be no need to disable thread safety. (I would expect

uncontended locks to be sufficiently fast.)
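The chunked, shard-cycling iteration can be sketched as follows. This is a simplified model with hypothetical types: each shard is a mutex plus a flat vector rather than a hash table, and it does not handle entries being inserted, erased, or rehashed between chunks, which the real implementation has to deal with.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <functional>
#include <mutex>
#include <vector>

// Hypothetical shard: a lock and a flat list standing in for a hash table.
struct Shard {
  std::mutex mu;
  std::vector<int> entries;
};

// Visits every entry while holding each shard lock for at most
// entries_per_lock entries, cycling through the shards between chunks.
void ApplyToAllEntries(std::vector<Shard>& shards, size_t entries_per_lock,
                       const std::function<void(int)>& callback) {
  assert(entries_per_lock > 0);
  std::vector<size_t> progress(shards.size(), 0);
  bool remaining = true;
  while (remaining) {
    remaining = false;
    for (size_t s = 0; s < shards.size(); ++s) {
      std::lock_guard<std::mutex> lock(shards[s].mu);
      const std::vector<int>& e = shards[s].entries;
      const size_t end = std::min(e.size(), progress[s] + entries_per_lock);
      for (size_t i = progress[s]; i < end; ++i) {
        callback(e[i]);
      }
      progress[s] = end;
      if (end < e.size()) {
        remaining = true;  // this shard needs another pass
      }
    }
  }
}
```

A smaller `entries_per_lock` shortens each critical section at the cost of more lock acquisitions, which is the trade-off the tuning parameter described above controls.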

I have enhanced cache_bench to validate this approach:

* Reports a histogram of ns per operation, so we can look at the

distribution of times, not just throughput (average).

* Can add a thread for simulated "gather stats" which calls

ApplyToAllEntries at a specified interval. We also generate a histogram

of time to run ApplyToAllEntries.

To make the iteration over some entries of each shard work as cleanly as

possible, even with resize between next set of entries, I have

re-arranged which hash bits are used for sharding and which for indexing

within a shard.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8225

Test Plan:

A couple of unit tests are added, but primary validation is manual, as

the primary risk is to performance.

The primary validation is using cache_bench to ensure that neither

the minor hashing changes nor the simulated stats gathering

significantly impact QPS or latency distribution. Note that adding op

latency histogram seriously impacts the benchmark QPS, so for a

fair baseline, we need the cache_bench changes (except remove simulated

stat gathering to make it compile). In short, we don't see any

reproducible difference in ops/sec or op latency unless we are gathering

stats nearly continuously. Test uses 10GB block cache with

8KB values to be somewhat realistic in the number of items to iterate

over.

Baseline typical output:

```

Complete in 92.017 s; Rough parallel ops/sec = 869401

Thread ops/sec = 54662

Operation latency (ns):

Count: 80000000 Average: 11223.9494 StdDev: 29.61

Min: 0 Median: 7759.3973 Max: 9620500

Percentiles: P50: 7759.40 P75: 14190.73 P99: 46922.75 P99.9: 77509.84 P99.99: 217030.58

------------------------------------------------------

[ 0, 1 ] 68 0.000% 0.000%

( 2900, 4400 ] 89 0.000% 0.000%

( 4400, 6600 ] 33630240 42.038% 42.038% ########

( 6600, 9900 ] 18129842 22.662% 64.700% #####

( 9900, 14000 ] 7877533 9.847% 74.547% ##

( 14000, 22000 ] 15193238 18.992% 93.539% ####

( 22000, 33000 ] 3037061 3.796% 97.335% #

( 33000, 50000 ] 1626316 2.033% 99.368%

( 50000, 75000 ] 421532 0.527% 99.895%

( 75000, 110000 ] 56910 0.071% 99.966%

( 110000, 170000 ] 16134 0.020% 99.986%

( 170000, 250000 ] 5166 0.006% 99.993%

( 250000, 380000 ] 3017 0.004% 99.996%

( 380000, 570000 ] 1337 0.002% 99.998%

( 570000, 860000 ] 805 0.001% 99.999%

( 860000, 1200000 ] 319 0.000% 100.000%

( 1200000, 1900000 ] 231 0.000% 100.000%

( 1900000, 2900000 ] 100 0.000% 100.000%

( 2900000, 4300000 ] 39 0.000% 100.000%

( 4300000, 6500000 ] 16 0.000% 100.000%

( 6500000, 9800000 ] 7 0.000% 100.000%

```

New, gather_stats=false. Median thread ops/sec of 5 runs:

```

Complete in 92.030 s; Rough parallel ops/sec = 869285

Thread ops/sec = 54458

Operation latency (ns):

Count: 80000000 Average: 11298.1027 StdDev: 42.18

Min: 0 Median: 7722.0822 Max: 6398720

Percentiles: P50: 7722.08 P75: 14294.68 P99: 47522.95 P99.9: 85292.16 P99.99: 228077.78

------------------------------------------------------

[ 0, 1 ] 109 0.000% 0.000%

( 2900, 4400 ] 793 0.001% 0.001%

( 4400, 6600 ] 34054563 42.568% 42.569% #########

( 6600, 9900 ] 17482646 21.853% 64.423% ####

( 9900, 14000 ] 7908180 9.885% 74.308% ##

( 14000, 22000 ] 15032072 18.790% 93.098% ####

( 22000, 33000 ] 3237834 4.047% 97.145% #

( 33000, 50000 ] 1736882 2.171% 99.316%

( 50000, 75000 ] 446851 0.559% 99.875%

( 75000, 110000 ] 68251 0.085% 99.960%

( 110000, 170000 ] 18592 0.023% 99.983%

( 170000, 250000 ] 7200 0.009% 99.992%

( 250000, 380000 ] 3334 0.004% 99.997%

( 380000, 570000 ] 1393 0.002% 99.998%

( 570000, 860000 ] 700 0.001% 99.999%

( 860000, 1200000 ] 293 0.000% 100.000%

( 1200000, 1900000 ] 196 0.000% 100.000%

( 1900000, 2900000 ] 69 0.000% 100.000%

( 2900000, 4300000 ] 32 0.000% 100.000%

( 4300000, 6500000 ] 10 0.000% 100.000%

```

New, gather_stats=true, 1 second delay between scans. Scans take about

1 second here so it's spending about 50% time scanning. Still the effect on

ops/sec and latency seems to be in the noise. Median thread ops/sec of 5 runs:

```

Complete in 91.890 s; Rough parallel ops/sec = 870608

Thread ops/sec = 54551

Operation latency (ns):

Count: 80000000 Average: 11311.2629 StdDev: 45.28

Min: 0 Median: 7686.5458 Max: 10018340

Percentiles: P50: 7686.55 P75: 14481.95 P99: 47232.60 P99.9: 79230.18 P99.99: 232998.86

------------------------------------------------------

[ 0, 1 ] 71 0.000% 0.000%

( 2900, 4400 ] 291 0.000% 0.000%

( 4400, 6600 ] 34492060 43.115% 43.116% #########

( 6600, 9900 ] 16727328 20.909% 64.025% ####

( 9900, 14000 ] 7845828 9.807% 73.832% ##

( 14000, 22000 ] 15510654 19.388% 93.220% ####

( 22000, 33000 ] 3216533 4.021% 97.241% #

( 33000, 50000 ] 1680859 2.101% 99.342%

( 50000, 75000 ] 439059 0.549% 99.891%

( 75000, 110000 ] 60540 0.076% 99.967%

( 110000, 170000 ] 14649 0.018% 99.985%

( 170000, 250000 ] 5242 0.007% 99.991%

( 250000, 380000 ] 3260 0.004% 99.995%

( 380000, 570000 ] 1599 0.002% 99.997%

( 570000, 860000 ] 1043 0.001% 99.999%

( 860000, 1200000 ] 471 0.001% 99.999%

( 1200000, 1900000 ] 275 0.000% 100.000%

( 1900000, 2900000 ] 143 0.000% 100.000%

( 2900000, 4300000 ] 60 0.000% 100.000%

( 4300000, 6500000 ] 27 0.000% 100.000%

( 6500000, 9800000 ] 7 0.000% 100.000%

( 9800000, 14000000 ] 1 0.000% 100.000%

Gather stats latency (us):

Count: 46 Average: 980387.5870 StdDev: 60911.18

Min: 879155 Median: 1033777.7778 Max: 1261431

Percentiles: P50: 1033777.78 P75: 1120666.67 P99: 1261431.00 P99.9: 1261431.00 P99.99: 1261431.00

------------------------------------------------------

( 860000, 1200000 ] 45 97.826% 97.826% ####################

( 1200000, 1900000 ] 1 2.174% 100.000%

Most recent cache entry stats:

Number of entries: 1295133

Total charge: 9.88 GB

Average key size: 23.4982

Average charge: 8.00 KB

Unique deleters: 3

```

Reviewed By: mrambacher

Differential Revision: D28295742

Pulled By: pdillinger

fbshipit-source-id: bbc4a552f91ba0fe10e5cc025c42cef5a81f2b95

Summary:

This change enables a couple of things:

- Different ConfigOptions can have different registry/factory associated with it, thereby allowing things like a "Test" ConfigOptions versus a "Production"

- The ObjectRegistry is created fewer times and can be re-used

The ConfigOptions can also be initialized/constructed from a DBOptions, in which case it will grab some of its settings (Env, Logger) from the DBOptions.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8166

Reviewed By: zhichao-cao

Differential Revision: D27657952

Pulled By: mrambacher

fbshipit-source-id: ae1d6200bb7ab127405cdeefaba43c7fe694dfdd

Summary:

The WBWI has two differing modes of operation dependent on the value

of the constructor parameter `overwrite_key`.

Currently, regardless of the parameter, neither mode performs as

expected when using Merge. This PR remedies this by correctly invoking

the appropriate Merge Operator before returning results from the WBWI.

Examples of issues that exist which are solved by this PR:

## Example 1 with `overwrite_key=false`

Currently, from an empty database, the following sequence:

```

Put('k1', 'v1')

Merge('k1', 'v2')

Get('k1')

```

Incorrectly yields `v2`, that is to say that the Merge behaves like a Put.

## Example 2 with `overwrite_key=true`

Currently, from an empty database, the following sequence:

```

Put('k1', 'v1')

Merge('k1', 'v2')

Get('k1')

```

Incorrectly yields `ERROR: kMergeInProgress`.

## Example 3 with `overwrite_key=false`

Currently, with a database containing `('k1' -> 'v1')`, the following sequence:

```

Merge('k1', 'v2')

GetFromBatchAndDB('k1')

```

Incorrectly yields `v1,v2`

## Example 4 with `overwrite_key=true`

Currently, with a database containing `('k1' -> 'v1')`, the following sequence:

```

Merge('k1', 'v1')

GetFromBatchAndDB('k1')

```

Incorrectly yields `ERROR: kMergeInProgress`.

## Example 5 with `overwrite_key=false`

Currently, from an empty database, the following sequence:

```

Put('k1', 'v1')

Merge('k1', 'v2')

GetFromBatchAndDB('k1')

```

Incorrectly yields `v1,v2`

## Example 6 with `overwrite_key=true`

Currently, from an empty database, `('k1' -> 'v1')`, the following sequence:

```

Put('k1', 'v1')

Merge('k1', 'v2')

GetFromBatchAndDB('k1')

```

Incorrectly yields `ERROR: kMergeInProgress`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8135

Reviewed By: pdillinger

Differential Revision: D27657938

Pulled By: mrambacher

fbshipit-source-id: 0fbda6bbc66bedeba96a84786d90141d776297df

Summary:

From HISTORY.md release note:

- Allow `CompactionFilter`s to apply in more table file creation scenarios such as flush and recovery. For compatibility, `CompactionFilter`s by default apply during compaction. Users can customize this behavior by overriding `CompactionFilterFactory::ShouldFilterTableFileCreation()`.

- Removed unused structure `CompactionFilterContext`
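As a sketch of the customization point, the following uses stand-in declarations so that it compiles without RocksDB headers. The enum values and the exact signature of `ShouldFilterTableFileCreation()` are assumptions modeled on the release note; `FilterOnFlushToo` is a hypothetical subclass.

```cpp
// Stand-in for rocksdb::TableFileCreationReason (values assumed).
enum class TableFileCreationReason { kFlush, kCompaction, kRecovery, kMisc };

// Stand-in base class modeling only the hook named in the release note.
// The default keeps the compatible behavior: filter during compaction only.
class CompactionFilterFactory {
 public:
  virtual ~CompactionFilterFactory() = default;
  virtual bool ShouldFilterTableFileCreation(
      TableFileCreationReason reason) const {
    return reason == TableFileCreationReason::kCompaction;
  }
};

// Hypothetical factory that also applies its filter when flushing.
class FilterOnFlushToo : public CompactionFilterFactory {
 public:
  bool ShouldFilterTableFileCreation(
      TableFileCreationReason reason) const override {
    return reason == TableFileCreationReason::kCompaction ||
           reason == TableFileCreationReason::kFlush;
  }
};
```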

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8243

Test Plan: added unit tests

Reviewed By: pdillinger

Differential Revision: D28088089

Pulled By: ajkr

fbshipit-source-id: 0799be7908e3b39fea09fc3f1ab00e13ad817fae

Summary:

A larger arena block size does provide the benefit of reducing allocation overhead; however, it may cause other problems. For example, the allocator is more likely not to back the blocks with physical memory, which triggers page faults. Weighing the risk, we cap the arena block size at 1MB. Users can always use a larger value if they want.
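A minimal sketch of such a cap, under stated assumptions: the helper name `EffectiveArenaBlockSize` is hypothetical, zero is taken to mean "not set by the user", and deriving the default as one eighth of the write buffer size is an assumption of this sketch rather than something the description states.

```cpp
#include <algorithm>
#include <cstddef>

// Hypothetical helper: an explicit user value always wins; otherwise the
// derived default is capped at 1 MB.
size_t EffectiveArenaBlockSize(size_t user_arena_block_size,
                               size_t write_buffer_size) {
  if (user_arena_block_size != 0) {
    return user_arena_block_size;  // users may still choose a larger value
  }
  const size_t kMaxDefault = static_cast<size_t>(1) << 20;  // 1 MB cap
  return std::min(kMaxDefault, write_buffer_size / 8);      // ratio assumed
}
```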

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7907

Test Plan: Run all existing tests

Reviewed By: pdillinger

Differential Revision: D26135269

fbshipit-source-id: b7f55afd03e6ee1d8715f90fa11b6c33944e9ea8

Summary:

Refactor kill point into a single class, rather than several extern variables. The intention was to drop unflushed data before killing to simulate some job, and I tried to pass a pointer to the fault injection fs to the killing class, but it ended up harder than I thought. Perhaps we'll need to do this in another way. But I thought the refactoring itself was good, so I sent it out.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8241

Test Plan: make release and run crash test for a while.

Reviewed By: anand1976

Differential Revision: D28078486

fbshipit-source-id: f9182c1455f52e6851c13f88a21bade63bcec45f

Summary:

The ImmutableCFOptions contained a bunch of fields that belonged to the ImmutableDBOptions. This change cleans that up by introducing an ImmutableOptions struct. Following the pattern of Options struct, this class inherits from the DB and CFOption structs (of the Immutable form).

Only one structural change (the ImmutableCFOptions::fs was changed to a shared_ptr from a raw one) is in this PR. All of the other changes involve moving the member variables from the ImmutableCFOptions into the ImmutableOptions and changing member variables or function parameters as required for compilation purposes.

Follow-on PRs may do a further clean-up of the code, such as renaming variables (such as "ImmutableOptions cf_options") and potentially eliminating un-needed function parameters (there is no longer a need to pass both an ImmutableDBOptions and an ImmutableOptions to a function).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8262

Reviewed By: pdillinger

Differential Revision: D28226540

Pulled By: mrambacher

fbshipit-source-id: 18ae71eadc879dedbe38b1eb8e6f9ff5c7147dbf

Summary:

Previously the shutdown process did not properly wait for all

`compaction_thread_limiter` tokens to be released before proceeding to

delete the DB's C++ objects. When this happened, we saw tests like

"DBCompactionTest.CompactionLimiter" flake with the following error:

```

virtual

rocksdb::ConcurrentTaskLimiterImpl::~ConcurrentTaskLimiterImpl():

Assertion `outstanding_tasks_ == 0' failed.

```

There is a case where a token can still be alive even after the shutdown

process has waited for BG work to complete. In particular, this happens

because the shutdown process only waits for flush/compaction scheduled/unscheduled counters to all

reach zero. These counters are decremented in `BackgroundCallCompaction()`

functions. However, tokens are released in `BGWork*Compaction()` functions, which

actually wrap the `BackgroundCallCompaction()` function.

A simple sleep could repro the race condition:

```

$ diff --git a/db/db_impl/db_impl_compaction_flush.cc

b/db/db_impl/db_impl_compaction_flush.cc

index 806bc548a..ba59efa89 100644

--- a/db/db_impl/db_impl_compaction_flush.cc

+++ b/db/db_impl/db_impl_compaction_flush.cc

@@ -2442,6 +2442,7 @@ void DBImpl::BGWorkCompaction(void* arg) {

static_cast<PrepickedCompaction*>(ca.prepicked_compaction);

static_cast_with_check<DBImpl>(ca.db)->BackgroundCallCompaction(

prepicked_compaction, Env::Priority::LOW);

+ sleep(1);

delete prepicked_compaction;

}

$ ./db_compaction_test --gtest_filter=DBCompactionTest.CompactionLimiter

db_compaction_test: util/concurrent_task_limiter_impl.cc:24: virtual rocksdb::ConcurrentTaskLimiterImpl::~ConcurrentTaskLimiterImpl(): Assertion `outstanding_tasks_ == 0' failed.

Received signal 6 (Aborted)

#0 /usr/local/fbcode/platform007/lib/libc.so.6(gsignal+0xcf) [0x7f02673c30ff] ?? ??:0

#1 /usr/local/fbcode/platform007/lib/libc.so.6(abort+0x134) [0x7f02673ac934] ?? ??:0

...

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8253

Test Plan: sleeps to expose race conditions

Reviewed By: akankshamahajan15

Differential Revision: D28168064

Pulled By: ajkr

fbshipit-source-id: 9e5167c74398d323e7975980c5cc00f450631160

Summary:

Previously we saw flakes on platforms like arm on CircleCI, such as the following:

```

Note: Google Test filter = DBTest.L0L1L2AndUpHitCounter

[==========] Running 1 test from 1 test case.

[----------] Global test environment set-up.

[----------] 1 test from DBTest

[ RUN ] DBTest.L0L1L2AndUpHitCounter

db/db_test.cc:5345: Failure

Expected: (TestGetTickerCount(options, GET_HIT_L0)) > (100), actual: 30 vs 100

[ FAILED ] DBTest.L0L1L2AndUpHitCounter (150 ms)

[----------] 1 test from DBTest (150 ms total)

[----------] Global test environment tear-down

[==========] 1 test from 1 test case ran. (150 ms total)

[ PASSED ] 0 tests.

[ FAILED ] 1 test, listed below:

[ FAILED ] DBTest.L0L1L2AndUpHitCounter

```

The test was totally non-deterministic, e.g., flush/compaction timing would affect how many files on each level. Furthermore, it depended heavily on platform-specific details, e.g., by having a 32KB memtable, it could become full with a very different number of entries depending on the platform.

This PR rewrites the test to build a deterministic LSM with one file per level.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8259

Reviewed By: mrambacher

Differential Revision: D28178100

Pulled By: ajkr

fbshipit-source-id: 0a03b26e8d23c29d8297c1bccb1b115dce33bdcd

Summary:

As the first part of the effort of placing different files on different storage types, this change introduces several things:

(1) An experimental interface in FileSystem that specifies the temperature of a newly created file.

(2) A test FileSystemWrapper, SimulatedHybridFileSystem, that simulates HDD for a file of "warm" temperature.

(3) A simple experimental feature ColumnFamilyOptions.bottommost_temperature. RocksDB would pass this value to FileSystem when creating any bottommost file.

(4) A db_bench parameter that applies (2) and (3) to db_bench.

The motivation of the change is to introduce minimal changes that allow us to evolve tiered storage development.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8222

Test Plan:

./db_bench --benchmarks=fillrandom --write_buffer_size=2000000 -max_bytes_for_level_base=20000000 -level_compaction_dynamic_level_bytes --reads=100 -compaction_readahead_size=20000000 --reads=100000 -num=10000000

followed by

./db_bench --benchmarks=readrandom,stats --write_buffer_size=2000000 -max_bytes_for_level_base=20000000 -simulate_hybrid_fs_file=/tmp/warm_file_list -level_compaction_dynamic_level_bytes -compaction_readahead_size=20000000 --reads=500 --threads=16 -use_existing_db --num=10000000

and see results as expected.

Reviewed By: ajkr

Differential Revision: D28003028

fbshipit-source-id: 4724896d5205730227ba2f17c3fecb11261744ce

Summary:

Add `num_levels`, `is_bottommost`, and table file creation

`reason` to `FilterBuildingContext`, in anticipation of more powerful

Bloom-like filter support.

To support this, added `is_bottommost` and `reason` to

`TableBuilderOptions`, which allowed removing `reason` parameter from

`rocksdb::BuildTable`.

I attempted to remove `skip_filters` from `TableBuilderOptions`, because

filter construction decisions should arise from options, not one-off

parameters. I could not completely remove it because the public API for

SstFileWriter takes a `skip_filters` parameter, and translating this

into an option change would mean awkwardly replacing the table_factory

if it is BlockBasedTableFactory with new filter_policy=nullptr option.

I marked this public skip_filters option as deprecated because of this

oddity. (skip_filters on the read side probably makes sense.)

At least `skip_filters` is now largely hidden for users of

`TableBuilderOptions` and is no longer used for implementing the

optimize_filters_for_hits option. Bringing the logic for that option

closer to handling of FilterBuildingContext makes it more obvious that

these two are using the same notion of "bottommost." (Planned:

configuration options for Bloom-like filters that generalize

`optimize_filters_for_hits`)

Recommended follow-up: Try to get away from "bottommost level" naming of

things, which is inaccurate (see

VersionStorageInfo::RangeMightExistAfterSortedRun), and move to

"bottommost run" or just "bottommost."

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8246

Test Plan:

extended an existing unit test to exercise and check various

filter building contexts. Also, existing tests for

optimize_filters_for_hits validate some of the "bottommost" handling,

which is now closely connected to FilterBuildingContext::is_bottommost

through TableBuilderOptions::is_bottommost

Reviewed By: mrambacher

Differential Revision: D28099346

Pulled By: pdillinger

fbshipit-source-id: 2c1072e29c24d4ac404c761a7b7663292372600a

Summary:

Greatly reduced the not-quite-copy-paste giant parameter lists

of rocksdb::NewTableBuilder, rocksdb::BuildTable,

BlockBasedTableBuilder::Rep ctor, and BlockBasedTableBuilder ctor.

Moved weird separate parameter `uint32_t column_family_id` of

TableFactory::NewTableBuilder into TableBuilderOptions.

Re-ordered parameters to TableBuilderOptions ctor, so that `uint64_t

target_file_size` is not randomly placed between uint64_t timestamps

(was easy to mix up).

Replaced a couple of fields of BlockBasedTableBuilder::Rep with a

FilterBuildingContext. The motivation for this change is making it

easier to pass along more data into new fields in FilterBuildingContext

(follow-up PR).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8240

Test Plan: ASAN make check

Reviewed By: mrambacher

Differential Revision: D28075891

Pulled By: pdillinger

fbshipit-source-id: fddb3dbb8260a0e8bdcbb51b877ebabf9a690d4f

Summary:

Don't call ```rocksdb_cache_disown_data()``` as it causes the memory allocated for ```shards_``` to be leaked.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8237

Reviewed By: jay-zhuang

Differential Revision: D28039061

Pulled By: anand1976

fbshipit-source-id: c3464efe2c006b93b4be87030116a12a124598c4

Summary:

Renaming ImmutableCFOptions::info_log and statistics to logger and stats. This is stage 2 in creating an ImmutableOptions class. It is necessary because the names match those in ImmutableOptions and have different types.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8227

Reviewed By: jay-zhuang

Differential Revision: D28000967

Pulled By: mrambacher

fbshipit-source-id: 3bf2aa04e8f1e8724d825b7deacf41080c14420b

Summary:

Add new C APIs to create the JemallocNodumpAllocator and set it on a Cache object.

`make test` passes with and without `DISABLE_JEMALLOC=1`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8178

Reviewed By: jay-zhuang

Differential Revision: D27944631

Pulled By: ajkr

fbshipit-source-id: 2531729aa285a8985c58f22f093c4d53029c4a7b

Summary:

This PR is a first step at attempting to clean up some of the Mutable/Immutable Options code. With this change, a DBOption and a ColumnFamilyOption can be reconstructed from their Mutable and Immutable equivalents, respectively.

readrandom tests do not show any performance degradation versus master (though both are slightly slower than the current 6.19 release).

There are still fields in the ImmutableCFOptions that are not CF options but DB options. Eventually, I would like to move those into an ImmutableOptions (= ImmutableDBOptions+ImmutableCFOptions). But that will be part of a future PR to minimize changes and disruptions.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8176

Reviewed By: pdillinger

Differential Revision: D27954339

Pulled By: mrambacher

fbshipit-source-id: ec6b805ba9afe6e094bffdbd76246c2d99aa9fad

Summary:

Add a compaction API for secondary instances, which compacts the files to a secondary DB path without installing them into the LSM tree.

The API will be used for remote compaction.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8171

Test Plan: `make check`

Reviewed By: ajkr

Differential Revision: D27694545

Pulled By: jay-zhuang

fbshipit-source-id: 8ff3ec1bffdb2e1becee994918850c8902caf731

Summary:

Currently, when recovering from the WAL, RocksDB performs a consistency check for each column family if a WAL file is corrupted and PointInTimeRecovery is set. However, this check reports a false positive "SST file is ahead of WALs" error when a CF's current log number is greater than the corrupted WAL number (i.e., the CF appears to contain data beyond the corrupted WAL) because a new column family was created during a flush. In this case, a new, empty WAL is created during the flush, and the old WAL is corrupted for some reason (e.g., a storage issue, or a crash before SyncCloseLog is called). The new CF has no data, so it has no consistency issue.

Fix: when checking cfd->GetLogNumber() > corrupted_wal_number, also check cfd->GetLiveSstFilesSize() > 0, so that CFs with no SST file data skip the check.

Note on a potential inconsistency that this fix may ignore: an empty CF can also result from write+delete. In this case, no SST file is generated after the flush, but the CF still has entries in the WAL. When that WAL is corrupted, the DB might be inconsistent.
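
The combined condition described in the fix can be sketched as below. This is a minimal, self-contained model, not the RocksDB recovery code: `ColumnFamilyState` and `SstAheadOfWal` are hypothetical names, and the two fields only stand in for the values returned by cfd->GetLogNumber() and cfd->GetLiveSstFilesSize().

```cpp
#include <cstdint>

// Hypothetical stand-in for the per-column-family state consulted during
// recovery. The fields model cfd->GetLogNumber() and
// cfd->GetLiveSstFilesSize(); they are not RocksDB types.
struct ColumnFamilyState {
  uint64_t log_number;
  uint64_t live_sst_files_size;
};

// Returns true when the column family should be flagged as
// "SST file is ahead of WALs". A CF whose log number is past the corrupted
// WAL but which has no live SST data is skipped.
bool SstAheadOfWal(const ColumnFamilyState& cf, uint64_t corrupted_wal_number) {
  return cf.log_number > corrupted_wal_number && cf.live_sst_files_size > 0;
}
```

Under this model, a freshly created CF with log number 7 and no SST data is not flagged for corrupted WAL 5, while the same CF with live SST data is. The write+delete case from the note above also has a live SST size of zero, which is why it slips through.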

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8207

Test Plan: added unit test, make crash_test

Reviewed By: riversand963

Differential Revision: D27898839

Pulled By: zhichao-cao

fbshipit-source-id: 931fc2d8b92dd00b4169bf84b94e712fd688a83e

Summary:

RocksDB allows user-specified custom comparators which may not be known to `ldb`,

a built-in tool for checking/mutating the database. Therefore, a column family comparator

name mismatch encountered during manifest dump should not prevent the dump from

proceeding.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8216

Test Plan:

```

make check

```

Also manually do the following:

```

KEEP_DB=1 ./db_with_timestamp_basic_test

./ldb --db=<db> manifest_dump --verbose

```

The ldb should succeed and print something like:

```

...

--------------- Column family "default" (ID 0) --------------

log number: 6

comparator: <TestComparator>, but the comparator object is not available.

...

```

Reviewed By: ltamasi

Differential Revision: D27927581

Pulled By: riversand963

fbshipit-source-id: f610b2c842187d17f575362070209ee6b74ec6d4

Summary:

Add the missing comment to DisableManualCompaction().

Also explicitly return from DBImpl::CompactRange() to avoid a memtable flush when manual compaction is disabled.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8186

Test Plan: Run existing unit tests.

Reviewed By: jay-zhuang

Differential Revision: D27744517

fbshipit-source-id: 449548a48905903b888dc9612bd17480f6596a71

Summary:

When WriteBufferManager is shared across DBs and column families

to keep memory usage under a limit, OOMs have been observed when a flush cannot

finish but writes continue to insert into memtables.

To avoid OOMs, when memory usage goes beyond buffer_size_ and a DB tries to write,

this change stalls incoming writers until the flush completes and memory usage

drops.

Design: Stall condition: When total memory usage reaches WriteBufferManager::buffer_size_

(memory_usage() >= buffer_size_), WriteBufferManager::ShouldStall() returns true.

DBImpl first blocks incoming/future writers by calling write_thread_.BeginWriteStall()

(which adds a dummy stall object to the writer's queue).

Then the DB is blocked in state State::Blocked (the current write doesn't go

through). The WBMStallInterface object maintained by every DB instance is added to the queue of

WriteBufferManager.

If multiple DBs try to write during this stall, they will also be

blocked when WriteBufferManager::ShouldStall() returns true.

End Stall condition: When flush is finished and memory usage goes down, the stall will end only if memory

waiting to be flushed is less than buffer_size/2. This lower limit gives time for the flush

to complete and avoids continuous stalling if memory usage remains close to buffer_size.

WriteBufferManager::EndWriteStall() is called,

which removes all instances from its queue and signals them to continue.

Their state is changed to State::Running and they are unblocked. DBImpl

then signals all incoming writers of that DB to continue by calling

write_thread_.EndWriteStall() (which removes the dummy stall object from the

queue).

Each DB instance creates a WBMStallInterface, which is an interface to block and

signal DBs during a stall.

When a DB needs to be blocked or signalled by WriteBufferManager,

state_for_wbm_ is changed accordingly (RUNNING or BLOCKED).
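
The begin/end thresholds above form a hysteresis band, which the following toy model illustrates. It is a single-threaded sketch under simplifying assumptions: `StallModel`, `ReserveMem`, `FreeMem`, and `IsStalled` are hypothetical names, total memory usage stands in for "memory waiting to be flushed", and the queueing and signalling of blocked DBs is left out entirely.

```cpp
#include <cstddef>

// Toy, single-threaded model of the stall decision only. This is not
// RocksDB's WriteBufferManager; all names here are illustrative.
class StallModel {
 public:
  explicit StallModel(std::size_t buffer_size) : buffer_size_(buffer_size) {}

  // A write reserves memtable memory. Stalling begins once usage reaches
  // the configured limit (memory_usage >= buffer_size).
  void ReserveMem(std::size_t bytes) {
    memory_usage_ += bytes;
    if (memory_usage_ >= buffer_size_) stalled_ = true;
  }

  // A completed flush frees memory. The stall ends only once usage drops
  // below half the limit, so usage hovering near the limit does not cause
  // rapid stall/unstall cycles.
  void FreeMem(std::size_t bytes) {
    memory_usage_ -= (bytes > memory_usage_ ? memory_usage_ : bytes);
    if (stalled_ && memory_usage_ < buffer_size_ / 2) stalled_ = false;
  }

  bool IsStalled() const { return stalled_; }

 private:
  std::size_t buffer_size_;
  std::size_t memory_usage_ = 0;
  bool stalled_ = false;
};
```

With a limit of 100, reserving 60 and then 40 starts the stall; freeing 30 (usage 70) keeps it in place, and freeing another 30 (usage 40, below 50) ends it.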

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7898

Test Plan: Added a new test db/db_write_buffer_manager_test.cc

Reviewed By: anand1976

Differential Revision: D26093227

Pulled By: akankshamahajan15

fbshipit-source-id: 2bbd982a3fb7033f6de6153aa92a221249861aae

Summary:

Fixes https://github.com/facebook/rocksdb/issues/6245.

Adapted from https://github.com/facebook/rocksdb/issues/8201 and https://github.com/facebook/rocksdb/issues/8205.

Previously we were writing the ingested file's smallest/largest internal keys

with sequence number zero, or `kMaxSequenceNumber` in case of range

tombstone. The former (sequence number zero) is incorrect and can lead

to files being incorrectly ordered. The fix in this PR is to overwrite

boundary keys that have sequence number zero with the ingested file's assigned

sequence number.
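
The boundary-key rewrite can be sketched as follows. This is an illustrative model rather than the ingestion code itself: `BoundaryKey`, `WithAssignedSeqno`, and `kModelMaxSequenceNumber` are hypothetical names, and the constant is only assumed to play the role of `kMaxSequenceNumber` for range tombstone boundaries.

```cpp
#include <cstdint>
#include <string>

// Hypothetical simplified view of a file boundary key: a user key plus the
// sequence number stored in the internal key.
struct BoundaryKey {
  std::string user_key;
  uint64_t sequence;
};

// Assumed stand-in for kMaxSequenceNumber (used for range tombstone
// boundaries); the exact value does not matter for this sketch.
constexpr uint64_t kModelMaxSequenceNumber = (uint64_t{1} << 56) - 1;

// Boundary keys written with sequence number zero are overwritten with the
// sequence number assigned to the ingested file; other keys are unchanged.
BoundaryKey WithAssignedSeqno(BoundaryKey key, uint64_t assigned_seqno) {
  if (key.sequence == 0) {
    key.sequence = assigned_seqno;
  }
  return key;
}
```

For example, a smallest key recorded as ("a", 0) in a file assigned sequence number 42 becomes ("a", 42), while a range tombstone boundary carrying the maximum sequence number is left as is.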

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8209

Test Plan: repro unit test

Reviewed By: riversand963

Differential Revision: D27885678

Pulled By: ajkr

fbshipit-source-id: 4a9f2c6efdfff81c3a9923e915ea88b250ee7b6a

Summary:

Unit tests report "no space" errors from time to time, which can be reproduced on a small-memory machine with SHM. The cause is the large WAL files generated during the test, which are preallocated but not truncated during close(). This PR adds the missing APIs to set preallocation.

It also adds the ARM test as a nightly build, as the test runs for more than 1 hour.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8204

Test Plan: test on small memory arm machine

Reviewed By: mrambacher

Differential Revision: D27873145

Pulled By: jay-zhuang

fbshipit-source-id: f797c429d6bc13cbcc673bc03fcc72adda55f506

Summary:

In a distributed environment, a file `rename()` operation can succeed on the server (remote)

side, but the client can somehow return a non-ok status to RocksDB. Possible reasons include

network partition, connection issue, etc. This happens in `rocksdb::SetCurrentFile()`, which

can be called in `LogAndApply() -> ProcessManifestWrites()` if RocksDB tries to switch to a

new MANIFEST. We currently always delete the new MANIFEST if an error occurs.

This is problematic in a distributed world. If the server side successfully updates the CURRENT

file via renaming, then a subsequent `DB::Open()` will try to look for the new MANIFEST and fail.

As a fix, we can track the execution result of IO operations on the new MANIFEST.

- If IO operations on the new MANIFEST fail, then we know the CURRENT must point to the original

MANIFEST. Therefore, it is safe to remove the new MANIFEST.

- If IO operations on the new MANIFEST all succeed, but somehow we end up in the clean up

code block, then we do not know whether CURRENT points to the new or old MANIFEST. (For local

POSIX-compliant FS, it should still point to old MANIFEST, but it does not matter if we keep the

new MANIFEST.) Therefore, we keep the new MANIFEST.

- Any future `LogAndApply()` will switch to a new MANIFEST and update CURRENT.

- If the process reopens the DB immediately after the failure, then the CURRENT file can point

to either the new MANIFEST or the old one, both of which exist. Therefore, recovery can

succeed and ignore the other.
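
The cleanup rule in the list above reduces to a small decision function. The sketch below is a hypothetical model, not the code in `ProcessManifestWrites()`: `ManifestSwitchResult`, `CleanupAction`, and `DecideCleanup` are names introduced here for illustration.

```cpp
// Possible outcomes for the newly written MANIFEST once the error/cleanup
// path is reached. Names are illustrative only.
enum class CleanupAction { kDeleteNewManifest, kKeepNewManifest };

// Hypothetical record of what happened while switching to a new MANIFEST.
struct ManifestSwitchResult {
  bool manifest_io_ok;  // every IO operation on the new MANIFEST succeeded
  bool set_current_ok;  // status the client reported for updating CURRENT
};

CleanupAction DecideCleanup(const ManifestSwitchResult& r) {
  if (!r.manifest_io_ok) {
    // CURRENT cannot have been switched, so it still points to the
    // original MANIFEST and the new one is safe to remove.
    return CleanupAction::kDeleteNewManifest;
  }
  // All MANIFEST IO succeeded. Even if set_current_ok is false, the remote
  // rename may have taken effect, so CURRENT may point to either file.
  // Keeping the new MANIFEST lets recovery succeed in both cases.
  return CleanupAction::kKeepNewManifest;
}
```

Note that `set_current_ok` deliberately does not influence the decision: a non-ok status from the client does not prove that the server-side rename failed.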

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8192

Test Plan: make check

Reviewed By: zhichao-cao

Differential Revision: D27804648

Pulled By: riversand963

fbshipit-source-id: 9c16f2a5ce41bc6aadf085e48449b19ede8423e4

Summary:

Historically, the DB properties `rocksdb.cur-size-active-mem-table`,

`rocksdb.cur-size-all-mem-tables`, and `rocksdb.size-all-mem-tables` called

the method `MemTable::ApproximateMemoryUsage` for mutable memtables,

which is not safe without synchronization. This resulted in data races with

memtable inserts. The patch changes the code handling these properties

to use `MemTable::ApproximateMemoryUsageFast` instead, which returns a

cached value backed by an atomic variable. Two test cases had to be updated

for this change. `MemoryTest.MemTableAndTableReadersTotal` was fixed by