Summary:


Remove the extraneous newline printed by the ldb tool. For example, the list_column_families subcommand prints an empty line to stderr even when there are no errors.

**Test plan**

Passed make check; manually tested a few ldb subcommands.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6897

Reviewed By: pdillinger

Differential Revision: D21819352

Pulled By: gg814

fbshipit-source-id: 5a16a6431bb96684fe97647f4d3ac5bf0ec7fc90

Summary:

The RocksDB Makefile assumed the existence of the 'python' command, which is not present in CentOS 8. We now avoid using 'python' if 'python3' is available.

Also added fancy logic to format-diff.sh so that clang-format-diff.py for Python2 works even when only Python3 is installed (as on some CentOS 8 FB machines).

Also, if no suitable Python is found, PYTHON is now set to just 'python3', so that an informative "command not found" error results rather than something weird.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6883

Test Plan: manually tried some variants, 'make check' on a fresh CentOS 8 machine without 'python' executable or Python2 but with clang-format-diff.py for Python2.

Reviewed By: gg814

Differential Revision: D21767029

Pulled By: pdillinger

fbshipit-source-id: 54761b376b140a3922407bdc462f3572f461d0e9

Summary:

Under macOS, when running make -j 8 check, the generated temporary directory path was longer than 100 characters. This caused the tests to do nothing under macOS. Most of them still reported success despite doing nothing, but ReadaheadSize expected the test to actually run.

By making the option name longer, the tests now run successfully (and do something!)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6846

Reviewed By: ajkr

Differential Revision: D21576032

fbshipit-source-id: b089cde0d598137b572aa8527cc5459085252af7

Summary:

sst_dump can issue many file reads to the file system. This doesn't work well with file systems without an OS cache, especially remote file systems. To mitigate this problem, several improvements are made:

1. --readahead_size is added, so that users can specify readahead size when scanning the data.

2. Force a 512KB tail readahead, which avoids three separate I/Os for the footer, meta index and properties blocks, and hopefully for the index and filter blocks too.

3. Consolidate SSTDump's I/Os before opening the file for reading, using the same file prefetch buffer.
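Item 2 can be illustrated with a small, self-contained sketch. It is not sst_dump's code: `CountingFile` and `TailPrefetchBuffer` are hypothetical stand-ins for a file and a prefetch buffer, and the block offsets used with them are made up. The point is the I/O count: one 512KB read of the file tail up front lets later reads of the footer, meta index and properties blocks be served from memory.

```cpp
#include <cstddef>
#include <string>
#include <utility>

// Hypothetical file that counts how many reads reach "storage".
class CountingFile {
 public:
  explicit CountingFile(std::string contents) : data_(std::move(contents)) {}
  size_t Size() const { return data_.size(); }
  // Each call counts as one I/O.
  std::string Read(size_t offset, size_t n) {
    ++io_count_;
    if (offset >= data_.size()) return "";
    return data_.substr(offset, n);
  }
  int io_count() const { return io_count_; }

 private:
  std::string data_;
  int io_count_ = 0;
};

// Hypothetical prefetch buffer: one large read of the file tail, after
// which any read lying entirely inside that tail is served from memory.
class TailPrefetchBuffer {
 public:
  TailPrefetchBuffer(CountingFile* file, size_t tail_size) : file_(file) {
    size_t size = file->Size();
    start_ = size > tail_size ? size - tail_size : 0;
    buf_ = file->Read(start_, size - start_);
  }

  std::string Read(size_t offset, size_t n) {
    if (offset >= start_ && offset + n <= start_ + buf_.size()) {
      return buf_.substr(offset - start_, n);  // no extra I/O
    }
    return file_->Read(offset, n);  // outside the tail: real I/O
  }

 private:
  CountingFile* file_;
  size_t start_ = 0;
  std::string buf_;
};
```

Reads that fall outside the prefetched tail still go to the file, which is why index and filter blocks are only "hopefully" covered: it depends on whether they sit within the last 512KB.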

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6836

Test Plan: Add a test that covers this new feature.

Reviewed By: pdillinger

Differential Revision: D21516607

fbshipit-source-id: 3ae43526286f67b2f4a5bdedfbc92719d579b87e

Summary:

When using ldb, users cannot turn on force consistency checks in most commands, and they cannot use checkconsistency with --try_load_options. This change fixes both:

1. checkconsistency now calls OpenDB() so that it gets all the option-loading and option-sanitizing logic.

2. Set options.force_consistency_checks = true by default, and add a --disable_consistency_checks flag to turn it off.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6802

Test Plan: Add a new unit test. Some manual tests with corrupted DBs.

Reviewed By: pdillinger

Differential Revision: D21388051

fbshipit-source-id: 8d122732d391b426e3982a1c3232a8e3763ffad0

Summary:

The "compression_parallel_threads" option caused several test failures. To keep the crash test clean, disable it for now.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6816

Test Plan: "make crash_test" to make sure the python script doesn't break

Reviewed By: zhichao-cao

Differential Revision: D21462112

fbshipit-source-id: 9eecc764800da82cd19665dc8b167eacead3310b

Summary:

Fix issues in reproducing the synthetic ZippyDB workloads from the FAST '20 paper using db_bench. Detailed changes are as follows:

1. Add a separate random mode in MixGraph to produce the all_random workload.

2. Fix the power inverse function for generating the prefix_dist workload.

3. Make sure key_offset in prefix mode is always unsigned.

Note: key_dist_a/b must be chosen carefully to avoid aliasing. The range of the power inverse function should be close to the overall key space.
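The note can be made concrete with inverse-transform sampling. Assuming, for illustration, a power-law CDF of the form F(x) = a·x^b with a, b > 0 (the exact function and parameter handling in db_bench may differ), drawing u uniformly from [0, 1] and returning x = (u/a)^(1/b) yields samples with that CDF. The largest value it can produce is (1/a)^(1/b), so a and b should be chosen to make this close to the number of keys; otherwise samples either cover only part of the key space or overshoot it and collapse onto the same keys. The helper names are hypothetical.

```cpp
#include <cmath>
#include <cstdint>
#include <random>

// Inverse of the assumed CDF F(x) = a * x^b, i.e. x = (u / a)^(1 / b).
double PowerCdfInverse(double u, double a, double b) {
  return std::pow(u / a, 1.0 / b);
}

// Largest value PowerCdfInverse can return for u in [0, 1].
double PowerCdfInverseMax(double a, double b) {
  return PowerCdfInverse(1.0, a, b);
}

// Draws a key index in [0, num_keys). Overshooting samples are clamped,
// which piles them onto the last key if the range is badly chosen.
uint64_t SampleKey(std::mt19937_64& rng, double a, double b,
                   uint64_t num_keys) {
  std::uniform_real_distribution<double> uniform(0.0, 1.0);
  double x = PowerCdfInverse(uniform(rng), a, b);
  uint64_t key = static_cast<uint64_t>(x);
  return key < num_keys ? key : num_keys - 1;
}
```

For example, a = 0.002 and b = 0.5 give a maximum of (1/0.002)^2 = 250000, a good match for a 250000-key space.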

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6795

Reviewed By: akankshamahajan15

Differential Revision: D21371095

Pulled By: zhichao-cao

fbshipit-source-id: 80744381e242392c8c7cf8ac3d68fe67fe876048

Summary:

This commit adds a `compression_parallel_threads` option to db_stress. It also fixes the naming of the parallel compression option in db_bench to keep it aligned with the others.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6722

Reviewed By: pdillinger

Differential Revision: D21091385

fbshipit-source-id: c9ba8c4e5cc327ff9e6094a6dc6a15fcff70f100

Summary:

The dynamic_cast in the filter benchmark causes the release-mode build to fail due to no-rtti. Replace it with static_cast_with_check.

Signed-off-by: Derrick Pallas <derrick@pallas.us>

Addition by peterd: Remove unnecessary 2nd template arg on all static_cast_with_check
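A minimal sketch of a checked static cast, modeled on the idea behind `static_cast_with_check` (not RocksDB's exact code; the `EXAMPLE_USE_RTTI` macro and the `FilterBase`/`BloomFilter` classes are hypothetical). The cast itself is a `static_cast`, so it builds under no-rtti; only when RTTI is available does a debug assertion compare the result against `dynamic_cast`. Because the source type is deduced from the argument, callers name only the destination type, which is why the second template argument is unnecessary.

```cpp
#include <cassert>

// Define EXAMPLE_USE_RTTI (hypothetical switch) only in builds with RTTI.
template <class DestClass, class SrcClass>
inline DestClass* static_cast_with_check(SrcClass* x) {
  DestClass* ret = static_cast<DestClass*>(x);
#ifdef EXAMPLE_USE_RTTI
  // Debug-only sanity check; dynamic_cast needs RTTI.
  assert(ret == dynamic_cast<DestClass*>(x));
#endif
  return ret;
}

// Hypothetical class hierarchy for the example.
struct FilterBase {
  virtual ~FilterBase() = default;
};
struct BloomFilter : FilterBase {
  int bits_per_key = 10;
};
```

Usage: `static_cast_with_check<BloomFilter>(base_ptr)`, with `SrcClass` deduced as `FilterBase`.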

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6732

Reviewed By: ltamasi

Differential Revision: D21304260

Pulled By: pdillinger

fbshipit-source-id: 6e8eb437c4ca5a16dbbfa4053d67c4ad55f1608c

Summary:

1. Add two arguments, --compression_level_from and --compression_level_to, to check the compression size with different compression levels in the given range. Users must specify a single compression type, otherwise it errors out. The from and to levels must also be specified together.

2. Display the time taken to compress each file with different compression types by default.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6634

Test Plan: make -j64 check

Reviewed By: anand1976

Differential Revision: D20810282

Pulled By: akankshamahajan15

fbshipit-source-id: ac9098d3c079a1fad098f6678dbedb4d888a791b

Summary:

In the crash test, the db directory may be set to /dev/shm or /tmp. In certain environments, such as internal testing infrastructure, neither of these directories supports direct IO, so direct IO is never enabled in the crash test.

This PR sets up SyncPoints in direct-IO-related code paths to clear the O_DIRECT flag in calls to `open`, so that the direct IO code paths are executed and all direct-IO-related assertions are checked, but no real direct IO requests are issued to the file system.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6727

Test Plan:

export CRASH_TEST_EXT_ARGS="--use_direct_reads=1 --mmap_read=0"

make -j24 crash_test

Reviewed By: zhichao-cao

Differential Revision: D21139250

Pulled By: cheng-chang

fbshipit-source-id: db9adfe78d91aa4759835b1af91c5db7b27b62ee

Summary:

The methods in convenience.h are used to compare/convert objects to/from strings. There is a mishmash of parameters in use here with more needed in the future. This PR replaces those parameters with a single structure.
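The refactoring pattern can be sketched as follows. `ExampleConfigOptions`, its fields, and `ParseOptionsString` are hypothetical and do not reproduce the actual structure introduced for convenience.h. Instead of threading several loose booleans through every compare/convert function, callers fill in one structure.

```cpp
#include <map>
#include <set>
#include <sstream>
#include <string>

// Hypothetical settings bundle replacing a list of loose parameters.
struct ExampleConfigOptions {
  bool ignore_unknown_options = false;  // skip names not in `known`
  char delimiter = ';';                 // separator between name=value pairs
};

// Parses "name=value<delim>name=value" into `out`, accepting only names
// listed in `known`. Returns false on malformed input or unknown names
// (unless config.ignore_unknown_options is set).
bool ParseOptionsString(const ExampleConfigOptions& config,
                        const std::string& input,
                        const std::set<std::string>& known,
                        std::map<std::string, std::string>* out) {
  std::stringstream ss(input);
  std::string item;
  while (std::getline(ss, item, config.delimiter)) {
    if (item.empty()) continue;
    std::string::size_type eq = item.find('=');
    if (eq == std::string::npos) return false;  // malformed pair
    std::string name = item.substr(0, eq);
    if (known.count(name) == 0) {
      if (config.ignore_unknown_options) continue;
      return false;  // unknown option name
    }
    (*out)[name] = item.substr(eq + 1);
  }
  return true;
}
```

Adding another knob later means adding one field with a default; no function signature has to change.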

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6389

Reviewed By: siying

Differential Revision: D21163707

Pulled By: zhichao-cao

fbshipit-source-id: f807b4cc7e2b0af3871536b69546b2604dfa81bd

Summary:

Based on https://github.com/facebook/rocksdb/issues/6648 (CLA Signed), but heavily modified / extended:

* Implicit capture of this via [=] deprecated in C++20, and [=,this] not standard before C++20 -> now using explicit capture lists

* Implicit copy operator deprecated in gcc 9 -> add explicit '= default' definition

* std::random_shuffle deprecated in C++17 and removed in C++20 -> migrated to a replacement in RocksDB random.h API

* Add the ability to build with different C++ standard versions through -DCMAKE_CXX_STANDARD=11/14/17/20 on the cmake command line

* Minimal rebuild flag of MSVC is deprecated and is forbidden with /std:c++latest (C++20)

* Added MSVC 2019 C++11 & MSVC 2019 C++20 in AppVeyor

* Added GCC 9 C++11 & GCC 9 C++20 in Travis
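The first three bullets can be illustrated together in a small sketch. The `Counter` class and `ShuffleKeys` helper are hypothetical, and `std::shuffle` is shown as the standard-library replacement for `std::random_shuffle`, not RocksDB's own random.h helper. The code compiles under C++11 through C++20.

```cpp
#include <algorithm>
#include <cstdint>
#include <functional>
#include <random>
#include <vector>

// Hypothetical class, used only to illustrate the idioms above.
class Counter {
 public:
  Counter() = default;
  // A user-provided copy constructor...
  Counter(const Counter& other) : value_(other.value_) {}
  // ...makes the implicitly declared copy assignment operator deprecated
  // (GCC 9 warns with -Wdeprecated-copy), so default it explicitly.
  Counter& operator=(const Counter& other) = default;

  // Capture `this` and `delta` explicitly instead of relying on [=],
  // whose implicit capture of `this` is deprecated in C++20.
  std::function<void()> MakeAdder(int delta) {
    return [this, delta]() { value_ += delta; };
  }

  int value() const { return value_; }

 private:
  int value_ = 0;
};

// std::random_shuffle was removed in C++20; std::shuffle with an explicit
// random engine is the standard-library replacement.
void ShuffleKeys(std::vector<int>* keys, uint32_t seed) {
  std::mt19937 rng(seed);
  std::shuffle(keys->begin(), keys->end(), rng);
}
```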

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6697

Test Plan: make check and CI

Reviewed By: cheng-chang

Differential Revision: D21020318

Pulled By: pdillinger

fbshipit-source-id: 12311be5dbd8675a0e2c817f7ec50fa11c18ab91

Summary:

Index type kBinarySearchWithFirstKey was recently improved so that its API guarantees are exactly the same as those of the other index types, and it is ready for wide production use. We should cover it in the crash test.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6721

Test Plan: Run crash_test

Reviewed By: anand1976

Differential Revision: D21099781

fbshipit-source-id: fda91eba831d9eacbb140c703e9768bb1701f935

Summary:

RocksDB behaves differently depending on whether max_open_files is small or large. Add coverage for small max_open_files.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6719

Test Plan: Run crash_test

Reviewed By: pdillinger

Differential Revision: D21081021

fbshipit-source-id: e3e211761a9bd25d93d19a61c1f7b62d48cf5e3c

Summary:

Options.avoid_flush_during_recovery is not covered in crash_test. Add coverage with a 1/8 chance, as it is a less frequently used option.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6712

Test Plan: Run crash_test and see the option can be used or not used by chance.

Reviewed By: ltamasi

Differential Revision: D21056566

fbshipit-source-id: c3b1521517cfc204786e6ef8c6acd7fffda64793

Summary:

Add an env_fault_injection argument to db_stress. When enabled, FaultInjectionTestEnv is used instead. Currently this option cannot be combined with other env settings.

This will allow us to later manually produce errors when running db_crashtest.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6687

Test Plan:

make db_stress -j32

./db_stress --env_fault_injection

./db_stress --env_fault_injection --hdfs // expect error message

Reviewed By: ajkr

Differential Revision: D21014683

Pulled By: yhchiang

fbshipit-source-id: 0724aeac37efd57adb72a37defe6dbd3bfa8106a

Summary:

Context: Index type `kBinarySearchWithFirstKey` added the ability for the sst file iterator to sometimes report a key from the index without reading the corresponding data block. This is useful when sst blocks are cut at some meaningful boundaries (e.g. one block per key prefix), and many seeks land between blocks (e.g. for each prefix, the ranges of keys in different sst files are nearly disjoint, so a typical seek needs to read a data block from only one file even if all files have the prefix). But this added a new error condition, which RocksDB code was really not equipped to deal with: `InternalIterator::value()` may fail with an IO error or Status::Incomplete, but it's just a method returning a Slice, with no way to report an error instead. Before this PR, this type of error wasn't handled at all (an empty slice was returned), and the kBinarySearchWithFirstKey implementation was considered a prototype.

Now that we (LogDevice) have experimented with kBinarySearchWithFirstKey for a while and confirmed that it's really useful, this PR is adding the missing error handling.

It's a pretty inconvenient situation implementation-wise. The error needs to be reported from InternalIterator when trying to access value. But there are ~700 call sites of `InternalIterator::value()`, most of which either can't hit the error condition (because the iterator is reading from memtable or from index or something) or wouldn't benefit from the deferred loading of the value (e.g. compaction iterator that reads all values anyway). Adding error handling to all these call sites would needlessly bloat the code. So instead I made the deferred value loading optional: only the call sites that may use deferred loading have to call the new method `PrepareValue()` before calling `value()`. The feature is enabled with a new bool argument `allow_unprepared_value` to a bunch of methods that create iterators (it wouldn't make sense to put it in ReadOptions because it's completely internal to iterators, with virtually no user-visible effect). Lmk if you have better ideas.

Note that the deferred value loading only happens for *internal* iterators. The user-visible iterator (DBIter) always prepares the value before returning from Seek/Next/etc. We could go further and add an API to defer that value loading too, but that's most likely not useful for LogDevice, so it doesn't seem worth the complexity for now.
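The calling convention described above can be sketched like this. `ExampleIterator` is a hypothetical, trimmed-down stand-in for `InternalIterator`; only the `PrepareValue()`/`value()` split is taken from the description, and `LazyValueIterator` with its simulated I/O failure is illustrative. A call site that opts into deferred loading must call `PrepareValue()` and check its result before touching `value()`.

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Hypothetical minimal iterator interface.
class ExampleIterator {
 public:
  virtual ~ExampleIterator() = default;
  virtual bool Valid() const = 0;
  virtual void Next() = 0;
  virtual const std::string& key() const = 0;
  // Loads a deferred value. Returns false on failure, in which case
  // status() describes the error. By default the value is always ready.
  virtual bool PrepareValue() { return true; }
  virtual const std::string& value() const = 0;
  virtual std::string status() const { return "OK"; }
};

// Keys come "from the index"; values are loaded on demand and may fail.
class LazyValueIterator : public ExampleIterator {
 public:
  LazyValueIterator(std::vector<std::pair<std::string, std::string>> kvs,
                    std::string failing_key)
      : kvs_(std::move(kvs)), failing_key_(std::move(failing_key)) {}

  bool Valid() const override { return pos_ < kvs_.size(); }
  void Next() override {
    ++pos_;
    loaded_ = false;  // next entry's value is not loaded yet
  }
  const std::string& key() const override { return kvs_[pos_].first; }

  bool PrepareValue() override {
    if (kvs_[pos_].first == failing_key_) {
      status_ = "IOError: simulated data block read failure";
      return false;
    }
    loaded_ = true;  // stands in for reading the data block
    return true;
  }
  const std::string& value() const override {
    // Without a successful PrepareValue() there is nothing to return.
    return loaded_ ? kvs_[pos_].second : empty_;
  }
  std::string status() const override { return status_; }

 private:
  std::vector<std::pair<std::string, std::string>> kvs_;
  std::string failing_key_;
  std::string status_ = "OK";
  std::string empty_;
  size_t pos_ = 0;
  bool loaded_ = false;
};

// A call site that opted into deferred value loading.
std::string ConcatValues(ExampleIterator* it, std::string* error) {
  std::string out;
  for (; it->Valid(); it->Next()) {
    if (!it->PrepareValue()) {
      *error = it->status();
      break;
    }
    out += it->value();
  }
  return out;
}
```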

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6621

Test Plan: make -j5 check . Will also deploy to some logdevice test clusters and look at stats.

Reviewed By: siying

Differential Revision: D20786930

Pulled By: al13n321

fbshipit-source-id: 6da77d918bad3780522e918f17f4d5513d3e99ee

Summary:

This was causing db_crashtest.py to wrongly assume an error by parsing the output. Hopefully this will stabilize the crash tests.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6705

Test Plan: make blackbox_crash_test

Reviewed By: ltamasi

Differential Revision: D21043335

Pulled By: anand1976

fbshipit-source-id: 5cddd112b124d4e2ebd11724a17d4ef0f50c1cf8

Summary:

Improve it in two ways:

1. tools/check_format_compatible.sh is not friendly to run outside the FB environment. Remove the hard-coded HTTP proxy setting and move it to the Legocastle configuration instead.

2. Always disable warnings-as-errors, so that older builds are more likely to pass.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6702

Test Plan: Run the test and make sure at least it doesn't break.

Reviewed By: riversand963

Differential Revision: D21033329

fbshipit-source-id: 88b4ec1ec49547b772790050a165466bdc4a62a0

Summary:

Add NewFileChecksumGenCrc32cFactory to the file checksum public interface so that applications can use the built-in crc32c checksum factory.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6688

Test Plan: pass make asan_check

Reviewed By: riversand963

Differential Revision: D21006859

Pulled By: zhichao-cao

fbshipit-source-id: ea8a45196a8b77c310728ab05f6cc0f49f3baef0

Summary:

This PR implements a fault injection mechanism for injecting errors in reads in db_stress. The FaultInjectionTestFS is used for this purpose. A thread-local structure is used to track the errors, so that each db_stress thread can independently enable/disable error injection and verify observed errors against expected errors. This is initially enabled only for Get and MultiGet, but can be extended to iterators as well once it's proven stable.
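The thread-local tracking idea can be sketched as follows. This is a hypothetical illustration, not the FaultInjectionTestFS API: `ThreadFaultState`, `ReadWithInjection` and the 1-in-N policy are assumptions. Because the state is `thread_local`, every thread gets its own copy, so a thread can enable injection and compare the errors it observed with the errors that were injected for it, without coordinating with other threads.

```cpp
#include <cstdint>
#include <random>
#include <string>

// Per-thread injection state (hypothetical).
struct ThreadFaultState {
  bool enabled = false;
  uint32_t one_in = 0;    // inject an error on roughly 1 in `one_in` reads
  uint64_t injected = 0;  // errors injected on this thread so far
  std::mt19937 rng{12345};
};

thread_local ThreadFaultState tls_fault_state;

void EnableErrorInjection(uint32_t one_in, uint32_t seed) {
  tls_fault_state.enabled = true;
  tls_fault_state.one_in = one_in;
  tls_fault_state.injected = 0;
  tls_fault_state.rng.seed(seed);
}

void DisableErrorInjection() { tls_fault_state.enabled = false; }

uint64_t InjectedErrorCount() { return tls_fault_state.injected; }

// A read path that may fail by injection; returns false on an injected error.
bool ReadWithInjection(const std::string& key, std::string* value) {
  ThreadFaultState& state = tls_fault_state;
  if (state.enabled && state.one_in > 0) {
    std::uniform_int_distribution<uint32_t> dist(0, state.one_in - 1);
    if (dist(state.rng) == 0) {
      ++state.injected;
      return false;
    }
  }
  *value = "value-for-" + key;
  return true;
}
```

A verifying thread counts failed reads and checks that the count equals `InjectedErrorCount()`; any mismatch means an error was either lost or produced by something other than the injector.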

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6538

Test Plan:

crash_test

make check

Reviewed By: riversand963

Differential Revision: D20714347

Pulled By: anand1976

fbshipit-source-id: d7598321d4a2d72bda0ced57411a337a91d87dc7

Summary:

When investigating https://github.com/facebook/rocksdb/issues/6666, we encountered an error when using sst_dump to dump an ingested SST file with a global seqno.

```

Corruption: An external sst file with version 2 have global seqno property with value ��/, while largest seqno in the file is 0)

```

As with https://github.com/facebook/rocksdb/pull/5097, this happens because SstFileReader doesn't know the largest seqno of a file, so it fails this check when it opens a file with a global seqno: ca89ac2ba9/table/block_based_table_reader.cc (L730)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6673

Test Plan: run it manually

Reviewed By: cheng-chang

Differential Revision: D20937546

Pulled By: ajkr

fbshipit-source-id: c3fd04d60916a738533ee1885f3ea844669a9479

Summary:

New memory technologies are being developed by various hardware vendors (Intel DCPMM is one such technology currently available). These new memory types require different libraries for allocation and management (such as PMDK and memkind). The high capacities available make it possible to provision large caches (up to several TBs in size), beyond what is achievable with DRAM.

The new allocator provided in this PR uses the memkind library to allocate memory on different media.

**Performance**

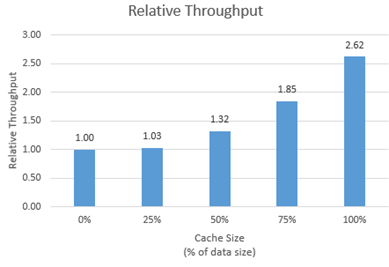

We tested the new allocator using db_bench.

- For each test, we vary the size of the block cache (relative to the size of the uncompressed data in the database).

- The database is filled sequentially. Throughput is then measured with a readrandom benchmark.

- We use a uniform distribution as a worst-case scenario.

The plot shows throughput (ops/s) relative to a configuration with no block cache and default allocator.

For all tests, p99 latency is below 500 us.

**Changes**

- Add MemkindKmemAllocator

- Add --use_cache_memkind_kmem_allocator db_bench option (to create an LRU block cache with the new allocator)

- Add detection of memkind library with KMEM DAX support

- Add test for MemkindKmemAllocator

**Minimum Requirements**

- kernel 5.3.12

- ndctl v67 - https://github.com/pmem/ndctl

- memkind v1.10.0 - https://github.com/memkind/memkind

**Memory Configuration**

The allocator uses the MEMKIND_DAX_KMEM memory kind. Follow the instructions on [memkind’s GitHub page](https://github.com/memkind/memkind) to set up NVDIMM memory accordingly.

Note on memory allocation with NVDIMM memory exposed as system memory:

- The MemkindKmemAllocator will only allocate from NVDIMM memory (using memkind_malloc with MEMKIND_DAX_KMEM kind).

- The default allocator is not restricted to RAM by default. Based on NUMA node latency, the kernel should allocate from local RAM preferentially, but it’s a kernel decision. numactl --preferred/--membind can be used to allocate preferentially/exclusively from the local RAM node.

**Usage**

When creating an LRU cache, pass a MemkindKmemAllocator object as argument.

For example (replace capacity with the desired value in bytes):

```
#include <memory>

#include "rocksdb/cache.h"
#include "memory/memkind_kmem_allocator.h"

// capacity: desired cache size in bytes.
std::shared_ptr<rocksdb::Cache> cache = rocksdb::NewLRUCache(
    capacity /*size_t*/,
    6 /*cache_numshardbits*/,
    false /*strict_capacity_limit*/,
    0.0 /*high_pri_pool_ratio*/,
    std::make_shared<rocksdb::MemkindKmemAllocator>());
```

Refer to [RocksDB’s block cache documentation](https://github.com/facebook/rocksdb/wiki/Block-Cache) to assign the LRU cache as block cache for a database.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6214

Reviewed By: cheng-chang

Differential Revision: D19292435

fbshipit-source-id: 7202f47b769e7722b539c86c2ffd669f64d7b4e1

Summary:

This commit fixes a bug, present since version 6.2, where the readrandom benchmark in db_bench returns many NotFound results.

Pull Request resolved: https://github.com/facebook/rocksdb/issues/6664

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6665

Reviewed By: cheng-chang

Differential Revision: D20911298

Pulled By: ajkr

fbshipit-source-id: c2658d4dbb35798ccbf67dff6e64923fb731ef81

Summary:

This PR adds support for pipelined & parallel compression optimization for `BlockBasedTableBuilder`. This optimization makes block building, block compression and block appending a pipeline, and uses multiple threads to accelerate block compression. Users can set `CompressionOptions::parallel_threads` greater than 1 to enable compression parallelism.
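The ordering constraint behind pipelined parallel compression can be sketched as follows: blocks are compressed concurrently, but appended to the output strictly in their original order, so the resulting file matches single-threaded compression. This sketch uses `std::async` and a toy run-length encoder as a stand-in compressor (both are assumptions for illustration); it is not BlockBasedTableBuilder's implementation. In application code the feature is enabled through `CompressionOptions::parallel_threads`, e.g. `options.compression_opts.parallel_threads = 4;`.

```cpp
#include <cstddef>
#include <future>
#include <string>
#include <vector>

// Toy stand-in compressor: encodes runs as (length, byte) pairs.
std::string ToyCompress(const std::string& block) {
  std::string out;
  size_t i = 0;
  while (i < block.size()) {
    size_t j = i;
    while (j < block.size() && block[j] == block[i] && j - i < 255) ++j;
    out.push_back(static_cast<char>(j - i));  // run length (1..255)
    out.push_back(block[i]);                   // repeated byte
    i = j;
  }
  return out;
}

// Compresses every block on its own task, then appends the results in
// block order: f.get() waits for each block before moving to the next.
std::string CompressBlocksInOrder(const std::vector<std::string>& blocks) {
  std::vector<std::future<std::string>> pending;
  pending.reserve(blocks.size());
  for (const std::string& block : blocks) {
    pending.push_back(std::async(std::launch::async,
                                 [&block] { return ToyCompress(block); }));
  }
  std::string output;
  for (std::future<std::string>& f : pending) {
    output += f.get();
  }
  return output;
}
```

A real builder bounds the number of in-flight blocks to the configured thread count instead of launching one task per block.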

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6262

Reviewed By: ajkr

Differential Revision: D20651306

fbshipit-source-id: 62125590a9c15b6d9071def9dc72589c1696a4cb

Summary:

Previously, the SST file checksum was calculated by a shared checksum function object, which makes some checksum functions, such as SHA1, hard to apply. With this change, each SST file has its own checksum generator object, created by FileChecksumGenFactory. Users need to implement their own FileChecksumGenerator and factory to plug in their checksum calculation method.
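The per-file generator design can be sketched as below. The class names are hypothetical and only loosely mirror the generator/factory split described above. The checksum is a plain bitwise CRC32C (Castagnoli polynomial, reflected form 0x82F63B78), used here because crc32c is the built-in default mentioned elsewhere in this log. Because the factory creates a fresh generator for each file, a stateful algorithm that accumulates state across `Update` calls never shares that state between files.

```cpp
#include <cstddef>
#include <cstdint>
#include <memory>

// Hypothetical per-file checksum generator interface.
class ChecksumGenerator {
 public:
  virtual ~ChecksumGenerator() = default;
  virtual void Update(const char* data, size_t n) = 0;  // feed file bytes
  virtual uint32_t Finalize() = 0;                      // checksum value
  virtual const char* Name() const = 0;                 // stored alongside
};

// Bitwise CRC32C: init 0xFFFFFFFF, reflected, final XOR 0xFFFFFFFF.
class Crc32cGenerator : public ChecksumGenerator {
 public:
  void Update(const char* data, size_t n) override {
    for (size_t i = 0; i < n; ++i) {
      crc_ ^= static_cast<uint8_t>(data[i]);
      for (int bit = 0; bit < 8; ++bit) {
        crc_ = (crc_ >> 1) ^ (0x82F63B78u & (0u - (crc_ & 1u)));
      }
    }
  }
  uint32_t Finalize() override { return crc_ ^ 0xFFFFFFFFu; }
  const char* Name() const override { return "Crc32cGenerator"; }

 private:
  uint32_t crc_ = 0xFFFFFFFFu;
};

// Hypothetical factory: called once per SST file.
class ChecksumGeneratorFactory {
 public:
  virtual ~ChecksumGeneratorFactory() = default;
  virtual std::unique_ptr<ChecksumGenerator> CreateGenerator() = 0;
};

class Crc32cGeneratorFactory : public ChecksumGeneratorFactory {
 public:
  std::unique_ptr<ChecksumGenerator> CreateGenerator() override {
    return std::unique_ptr<ChecksumGenerator>(new Crc32cGenerator());
  }
};
```

Feeding a file in several `Update` calls gives the same result as one call, which is what lets the generator consume an SST file block by block as it is written.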

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6600

Test Plan: tested with make asan_check

Reviewed By: riversand963

Differential Revision: D20717670

Pulled By: zhichao-cao

fbshipit-source-id: 2a74c1c280ac11a07a1980185b43b671acaa71c6

Summary:

Forward compatibility with new defaults only starts from 5.16

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6598

Test Plan: facebook automated test (so much easier than running myself)

Reviewed By: riversand963

Differential Revision: D20665553

Pulled By: pdillinger

fbshipit-source-id: b846bfaccf4d0946f92d323a3b4ee6e3e548df93

Summary:

And add releases that should have been added before (6.6 - 6.8)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6594

Test Plan: facebook automated test (so much easier than running myself)

Reviewed By: riversand963

Differential Revision: D20649106

Pulled By: pdillinger

fbshipit-source-id: 78832449d9295580282cebf117e3968362fbdc69

Summary:

The current Env/FileSystem API separation has a couple of issues -

1. It requires the user to specify 2 options - ```Options::env``` and ```Options::file_system``` - which means they have to make code changes to benefit from the new APIs. Furthermore, there is a risk of accessing the same APIs in two different ways, through Env in the old way and through FileSystem in the new way. The two may not always match, for example, if env is ```PosixEnv``` and FileSystem is a custom implementation. Any stray RocksDB calls to env will use the ```PosixEnv``` implementation rather than the file_system implementation.

2. There needs to be a simple way for the FileSystem developer to instantiate an Env for backward compatibility purposes.

This PR solves the above issues and simplifies the migration in the following ways -

1. Embed a shared_ptr to the ```FileSystem``` in the ```Env```, and remove ```Options::file_system``` as a configurable option. This way, no code changes will be required in application code to benefit from the new API. The default Env constructor uses a ```LegacyFileSystemWrapper``` as the embedded ```FileSystem```.

1a. This also makes it more robust by ensuring that even if RocksDB has some stray calls to Env APIs rather than FileSystem, they will go through the same object, so there is no risk of getting out of sync.

2. Provide a ```NewCompositeEnv()``` API that can be used to construct a PosixEnv with a custom FileSystem implementation. This eliminates an indirection when calling Env APIs, and relieves the FileSystem developer of the burden of implementing wrappers for the Env APIs.

3. Add a couple of missing FileSystem APIs: ```SanitizeEnvOptions()``` and ```NewLogger()```
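The ownership relationship in items 1 and 2 can be sketched as follows. All class names are hypothetical simplifications (RocksDB's real `Env` and `FileSystem` interfaces are far larger): the environment holds a `std::shared_ptr` to a file system and forwards file operations to it, so code that still calls through the environment reaches the same object as code that uses the file system directly. `MakeCompositeEnv` plays the role described for `NewCompositeEnv()`.

```cpp
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical, heavily simplified file system interface.
class FileSystemSketch {
 public:
  virtual ~FileSystemSketch() = default;
  virtual bool WriteFile(const std::string& path, const std::string& data) = 0;
  virtual bool ReadFile(const std::string& path, std::string* data) = 0;
};

// A custom file system implementation (in-memory, for illustration).
class InMemoryFileSystem : public FileSystemSketch {
 public:
  bool WriteFile(const std::string& path, const std::string& data) override {
    files_[path] = data;
    return true;
  }
  bool ReadFile(const std::string& path, std::string* data) override {
    auto it = files_.find(path);
    if (it == files_.end()) return false;
    *data = it->second;
    return true;
  }

 private:
  std::map<std::string, std::string> files_;
};

// The environment embeds the file system and forwards file calls to it;
// non-file services (threads, clock, ...) would stay on the environment.
class EnvSketch {
 public:
  explicit EnvSketch(std::shared_ptr<FileSystemSketch> fs)
      : fs_(std::move(fs)) {}
  bool WriteFile(const std::string& path, const std::string& data) {
    return fs_->WriteFile(path, data);
  }
  bool ReadFile(const std::string& path, std::string* data) {
    return fs_->ReadFile(path, data);
  }
  const std::shared_ptr<FileSystemSketch>& GetFileSystem() const {
    return fs_;
  }

 private:
  std::shared_ptr<FileSystemSketch> fs_;
};

// Wraps a custom file system in an environment.
std::unique_ptr<EnvSketch> MakeCompositeEnv(
    std::shared_ptr<FileSystemSketch> fs) {
  return std::unique_ptr<EnvSketch>(new EnvSketch(std::move(fs)));
}
```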

Tests:

1. New unit tests

2. make check and make asan_check

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6552

Reviewed By: riversand963

Differential Revision: D20592038

Pulled By: anand1976

fbshipit-source-id: c3801ad4153f96d21d5a3ae26c92ba454d1bf1f7

Summary:

Currently, `db_stress` tests a randomly picked one of `GetLiveFiles`, `GetSortedWalFiles`, and `GetCurrentWalFile` with a 1/N chance when the command line parameter `get_live_files_and_wal_files_one_in` is specified.

The problem is that `GetSortedWalFiles` and `GetCurrentWalFile` are unreliable in the sense that they can return errors if another thread removes a WAL file while they are executing (which is a perfectly plausible and legitimate scenario).

The patch splits this command line parameter into three (one for each API), and changes the crash test script so that only `GetLiveFiles` is tested during our continuous crash test runs.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6491

Test Plan:

```

make check

python tools/db_crashtest.py whitebox

```

Reviewed By: siying

Differential Revision: D20312200

Pulled By: ltamasi

fbshipit-source-id: e7c3481eddfe3bd3d5349476e34abc9eee5b7dc8

Summary:

ldb and sst_dump are the most important tools, and they don't depend on gflags. In CMake, there is no way to build only these two tools and exclude the other tools. This is inconvenient if the environment has a problem with gflags. Add such an option, WITH_CORE_TOOLS.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6506

Test Plan: cmake and build with WITH_TOOLS and without.

Differential Revision: D20473029

fbshipit-source-id: 3d730fd14bbae6eeeae7f9cc9aec50a4e488ad72

Summary:

I started to see the following failures:

tools/db_bench_tool.cc: In constructor ‘rocksdb::NormalDistribution::NormalDistribution(unsigned int, unsigned int)’:

tools/db_bench_tool.cc:1528:58: error: declaration of ‘max’ shadows a member of 'this' [-Werror=shadow]

NormalDistribution(unsigned int min, unsigned int max) :

^

tools/db_bench_tool.cc:1528:58: error: declaration of ‘min’ shadows a member of 'this' [-Werror=shadow]

tools/db_bench_tool.cc: In constructor ‘rocksdb::UniformDistribution::UniformDistribution(unsigned int, unsigned int)’:

tools/db_bench_tool.cc:1546:59: error: declaration of ‘max’ shadows a member of 'this' [-Werror=shadow]

UniformDistribution(unsigned int min, unsigned int max) :

^

tools/db_bench_tool.cc:1546:59: error: declaration of ‘min’ shadows a member of 'this' [-Werror=shadow]

when building with GCC 4.8. Rename those variables to fix the problem.
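One way this warning arises: if a class derives from a standard distribution such as `std::normal_distribution<double>`, which declares `min()` and `max()` member functions, then constructor parameters named `min` and `max` shadow those members. A minimal sketch of the rename follows; the class shape, the leading-underscore parameter names and the mean/stddev choice are illustrative assumptions, not the exact db_bench code.

```cpp
#include <cmath>
#include <random>

// Hypothetical sketch; requires _min < _max so the stddev is positive.
class NormalDistribution : public std::normal_distribution<double> {
 public:
  // Parameters renamed from min/max to _min/_max so they no longer shadow
  // the inherited min()/max() member functions under -Wshadow.
  NormalDistribution(unsigned int _min, unsigned int _max)
      : std::normal_distribution<double>(
            (static_cast<double>(_min) + _max) / 2.0,   // mean: midpoint
            (static_cast<double>(_max) - _min) / 4.0),  // stddev: range / 4
        min_(_min),
        max_(_max) {}

  // Draws a sample, rounds it, and clamps it into [min_, max_].
  unsigned int Generate(std::mt19937& gen) {
    double v = std::round((*this)(gen));
    if (v < min_) v = min_;
    if (v > max_) v = max_;
    return static_cast<unsigned int>(v);
  }

 private:
  unsigned int min_;
  unsigned int max_;
};
```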

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6537

Test Plan: make all with the compiler that used to show the failure.

Differential Revision: D20448741

fbshipit-source-id: 18bcf012dbe020f22f79038a9b08f447befa2574

Summary:

Fix a few build warnings that are treated as failures under stricter MSVC warning settings.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6517

Differential Revision: D20401325

Pulled By: pdillinger

fbshipit-source-id: b44979dfaafdc7b3b8cb44a565400a99b331dd30

Summary:

Add preliminary support for iterators with user-defined timestamps. The current implementation does not handle the merge operator or reverse iteration. Auto compaction is also disabled in unit tests.

Create an iterator with a timestamp:

```
...
read_opts.timestamp = &ts;
auto* iter = db->NewIterator(read_opts);
// target is key without timestamp.
for (iter->Seek(target); iter->Valid(); iter->Next()) {}
for (iter->SeekToFirst(); iter->Valid(); iter->Next()) {}
delete iter;

read_opts.timestamp = &ts1;
// lower_bound and upper_bound are without timestamp.
read_opts.iterate_lower_bound = &lower_bound;
read_opts.iterate_upper_bound = &upper_bound;
auto* iter1 = db->NewIterator(read_opts);
// Do Seek or SeekToFirst()
delete iter1;
```

Test plan (dev server)

```

$make check

```

Simple benchmarking (dev server)

1. Overhead introduced by this PR, even when timestamps are disabled.

key size: 16 bytes

value size: 100 bytes

Entries: 1000000

Data resides in main memory, to stress the iterator.

Repeated three times on master and this PR.

- Seek without next

```

./db_bench -db=/dev/shm/rocksdbtest-1000 -benchmarks=fillseq,seekrandom -enable_pipelined_write=false -disable_wal=true -format_version=3

```

master: 159047.0 ops/sec

this PR: 158922.3 ops/sec (~0.08% drop in throughput)

- Seek and next 10 times

```

./db_bench -db=/dev/shm/rocksdbtest-1000 -benchmarks=fillseq,seekrandom -enable_pipelined_write=false -disable_wal=true -format_version=3 -seek_nexts=10

```

master: 109539.3 ops/sec

this PR: 107519.7 ops/sec (2% drop in throughput)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6255

Differential Revision: D19438227

Pulled By: riversand963

fbshipit-source-id: b66b4979486f8474619f4aa6bdd88598870b0746

Summary:

Some combinations of --index_with_first_key and --index_shortening_mode can significantly improve performance for large values. Expose them in db_bench.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5859

Test Plan: Run them with the new options and observe the behavior.

Differential Revision: D20104434

fbshipit-source-id: 21d48a732a9caf20b82312c7d7557d747ea3c304

Summary:

When two binaries are dynamically linked together, different builds of RocksDB from two sources might cause errors. To give users a way to solve this problem, the RocksDB namespace is changed to a macro, ROCKSDB_NAMESPACE, which can be overridden at build time.
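The build-time namespace works through a macro with a default. A minimal sketch of the pattern (the `ExampleValue()` function is hypothetical): a default of `rocksdb` is supplied when nothing else is defined, a build can pass another value (for example `-DROCKSDB_NAMESPACE=myrocksdb`), and code written against `ROCKSDB_NAMESPACE::` compiles either way. Since mangled symbol names include the namespace, two copies built with different namespaces export distinct symbols and can be linked into one process.

```cpp
// Default namespace unless overridden on the compiler command line.
#ifndef ROCKSDB_NAMESPACE
#define ROCKSDB_NAMESPACE rocksdb
#endif

namespace ROCKSDB_NAMESPACE {
// Hypothetical library function; its symbol name carries the namespace.
int ExampleValue() { return 42; }
}  // namespace ROCKSDB_NAMESPACE

// Callers refer to the macro instead of a hard-coded namespace.
int CallExampleValue() { return ROCKSDB_NAMESPACE::ExampleValue(); }
```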

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6433

Test Plan: Build release, all and jtest. Try building with ROCKSDB_NAMESPACE set to another value.

Differential Revision: D19977691

fbshipit-source-id: aa7f2d0972e1c31d75339ac48478f34f6cfcfb3e

Summary:

Currently, RocksDB generates a checksum for each block and verifies it when the block is used. This PR enables SST file checksums. After an SST file is generated by flush or compaction, RocksDB generates the SST file checksum and stores the checksum value and the checksum method name in the vs_info and MANIFEST as part of the FileMetadata.

Added enable_sst_file_checksum to Options to enable or disable the file checksum. Added sst_file_checksum to Options so that users can plug in their own SST file checksum calculation method by overriding the SstFileChecksum class. The checksum information includes a uint32_t checksum value and a checksum name (string). A new tool is added to LDB so that users can dump a list of file checksum information from the MANIFEST. If the user enables the file checksum but does not provide an sst_file_checksum instance, RocksDB uses the default crc32c checksum implemented in table/sst_file_checksum_crc32c.h.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6216

Test Plan: Added the testing case in table_test and ldb_cmd_test to verify checksum is correct in different level. Pass make asan_check.

Differential Revision: D19171461

Pulled By: zhichao-cao

fbshipit-source-id: b2e53479eefc5bb0437189eaa1941670e5ba8b87

Summary:

Right now, when reading from option files, no readahead is used and an 8KB buffer is used. This can introduce high latency if the file system has high latency and doesn't do readahead. Instead, introduce readahead for the file. When called inside the DB, the value is inferred from options.log_readahead_size. Otherwise, a default 512KB readahead size is used.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6372

Test Plan: Add --log_readahead_size in db_bench. Run it with several options and observe read size from option files using strace.

Differential Revision: D19727739

fbshipit-source-id: e6d8053b0a64259abc087f1f388b9cd66fa8a583

Summary:

We see some odd errors complaining about math. However, it doesn't seem necessary to include it, so remove the include of math.h. Simply removing it from db_bench doesn't seem to break anything, and replacing sqrt with std::sqrt seems to work for histogram.cc.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6373

Test Plan: Watch the Travis and AppVeyor runs.

Differential Revision: D19730068

fbshipit-source-id: d3ad41defcdd9f51c2da1a3673fb258f5dfacf47

Summary:

This reverts commit 8e309b35bb.

The stress tests are failing. Revert it until we figure out the root cause.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6327

Differential Revision: D19537657

Pulled By: maysamyabandeh

fbshipit-source-id: bf34a5dd720825957729e136e9a5a729a240e61a

Summary:

kHashSearch is incompatible with index_block_restart_interval values larger than 1. Setting it to 1 in stress tests avoids confusion about the test parameters.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6324

Differential Revision: D19525669

Pulled By: maysamyabandeh

fbshipit-source-id: fbf3a797e0ebcebb4d32eba3728cf3583906fc8a

Summary:

The block-based table hash index has been disabled in the crash test due to bugs. We fixed a bug, so re-enable it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6310

Test Plan: Finished one round of the "crash_test_with_atomic_flush" test successfully while exclusively running the hash index. Another run also ran for several hours without failure.

Differential Revision: D19455856

fbshipit-source-id: 1192752d2c1e81ed7e5c5c7a9481c841582d5274

Summary:

A previous change was meant to make db_stress run in sync=1 mode 1/20 of the time in crash_test, but a bug caused it to always run in sync=1 mode. Fix it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6304

Test Plan: Start and kill "python -u tools/db_crashtest.py --simple whitebox" multiple times and observe that sync=0 is used most of the time, while sync=1 is used occasionally.

Differential Revision: D19433000

fbshipit-source-id: 7a0adba39b17a1b3acbbd791bb0cdb743b91fa95

Summary:

This commit is suspected of causing some crash test failures, such as

Verification failed for column family 0 key 78438077: Value not found: NotFound:

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6243

Test Plan: 'make check' and start 'make crash_test'

Differential Revision: D19220495

Pulled By: pdillinger

fbshipit-source-id: 6c4709cee80ab4344e06ce360f51e947d79fb3fa

Summary:

Currently, db_stress generates fixed-length keys of 8 bytes. This patch adds the ability to generate variable-length keys. Most of the db_stress code continues to work with a randomly generated numeric key, and the numeric key also acts as an index into the values_ array. The numeric key is mapped to a variable-length string key in a deterministic way. Furthermore, the ordering is preserved.
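
One way to get a deterministic, order-preserving mapping is sketched below. This is an assumption and not necessarily the scheme db_stress uses. A fixed 8-byte big-endian prefix decides every comparison between distinct keys, and a key-dependent padding suffix only varies the length. `NumericKeyToVarLenKey` is a hypothetical name.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Hypothetical mapping: 8 big-endian bytes of the number, then 0-4 padding
// bytes chosen deterministically from the key. All keys share the same
// fixed-width prefix, and the most significant byte comes first, so two
// distinct keys always differ inside the prefix. Their lexicographic order
// therefore matches their numeric order regardless of the padding.
std::string NumericKeyToVarLenKey(uint64_t key) {
  std::string out;
  for (int shift = 56; shift >= 0; shift -= 8) {
    out.push_back(static_cast<char>((key >> shift) & 0xff));
  }
  const size_t extra = key % 5;  // padding length derived from the key
  out.append(extra, 'x');
  return out;
}
```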

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6165

Test Plan: run make crash_test

Differential Revision: D19204646

Pulled By: anand1976

fbshipit-source-id: d2d46a96615b4832a8be2a981f5913905f0e1ca7

Summary:

Several improvements to crash_test/stress_test:

(1) Stress_test to support a bottommost compression parameter

(2) Rename those FLAGS_* variables that are not gflags to avoid confusion

(3) Crash_test to randomly generate a compression type for bottommost compression half of the time.

(4) Stress_test to sanitize unsupported compression types to snappy, so that crash_test can cover all possible compression types and people don't need to worry about their environment not supporting every compression type.

(5) In crash_test, when generating the db_stress command, sort arguments in alphabetical order, so that it is easier to find the value of a specific argument.
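
Items (4) and (5) can be sketched as follows. This is a hypothetical C++ illustration; the crash test driver itself is Python. `SanitizeCompression` and `BuildStressCommand` are invented names, and the `supported` set stands in for whatever compression libraries the build actually has.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>

// Item (4), hypothetical helper: fall back to snappy when the requested
// compression type is not available in this build.
std::string SanitizeCompression(const std::string& requested,
                                const std::set<std::string>& supported) {
  return supported.count(requested) > 0 ? requested : std::string("snappy");
}

// Item (5), hypothetical helper: std::map keeps its keys sorted, so iterating
// it emits the --flag=value arguments in alphabetical order.
std::string BuildStressCommand(const std::string& binary,
                               const std::map<std::string, std::string>& params) {
  std::string cmd = binary;
  for (const auto& [name, value] : params) {
    cmd += " --" + name + "=" + value;
  }
  return cmd;
}
```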

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6215

Test Plan: Run "make crash_test" for a while and see the bottommost option shown in LOG files.

Differential Revision: D19171255

fbshipit-source-id: d7001e246c4ff9ee5760776eea0be97738650735

Summary:

Add verification in operateDB of GetLiveFiles, GetSortedWalFiles and GetCurrentWalFile. The check runs once every N operations, where N is set by get_live_files_and_wal_files_one_i (default 1000000).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6224

Test Plan: passes the default db_stress run.

Differential Revision: D19183358

Pulled By: zhichao-cao

fbshipit-source-id: 20073cf72ede77a3e0d3cf5f28304f1f605d2b1a

Summary:

Currently, db_stress performs verification by calling `VerifyDb()` at the end of the test and optionally before the test starts. If corruption or an incorrect result occurs, detecting it only at the end is too late. This PR adds more verification in two ways.

1. For cf consistency test, each test thread takes a snapshot and verifies every N ops. N is configurable via `-verify_db_one_in`. This option is not supported in other stress tests.

2. For cf consistency test, we use another background thread in which a secondary instance periodically tails the primary (interval is configurable). We verify the secondary. Once an error is detected, we terminate the test and report. This does not affect other stress tests.

Test plan (devserver)

```

$./db_stress -test_cf_consistency -verify_db_one_in=0 -ops_per_thread=100000 -continuous_verification_interval=100

$./db_stress -test_cf_consistency -verify_db_one_in=1000 -ops_per_thread=10000 -continuous_verification_interval=0

$make crash_test

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6173

Differential Revision: D19047367

Pulled By: riversand963

fbshipit-source-id: aeed584ad71f9310c111445f34975e5ab47a0615

Summary:

The new Python syntax check could fail if external entities were cloned or symlinked to a subdir in a rocksdb git clone (e.g. the Facebook internal LITE build). Only look for Python files in specific subdirs.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6225

Test Plan: python tools/check_all_python.py (still 34 files checked)

Reviewed By: gfosco

Differential Revision: D19186110

Pulled By: pdillinger

fbshipit-source-id: 1fefa54e36b32cd5d96d3d1a43e8a2a694c22ea5

Summary:

This reverts commit 54f9092b0c.

It is making our daily stress tests fail. Revert it until the issues are fixed.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6220

Differential Revision: D19179881

Pulled By: maysamyabandeh

fbshipit-source-id: 99de0eaf776567fa81110b9ad2608234a16083ce

Summary:

We're seeing assertion violations like this in crash test:

db_stress: table/block_based/block_based_table_reader.cc:4129: virtual uint64_t rocksdb::BlockBasedTable::ApproximateSize(const rocksdb::Slice&, const rocksdb::Slice&, rocksdb::TableReaderCaller): Assertion `end_offset >= start_offset' failed.

ApproximateSize appears to be called only with the level_compaction_dynamic_level_bytes option.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6217

Test Plan:

temporarily put an assert(false) in ApproximateSize and briefly run 'make crash_test'

Differential Revision: D19179174

Pulled By: pdillinger

fbshipit-source-id: 506e6549aea0da19b363a1a6da04373c364d92e4

Summary:

Adds a python script to syntax check all python files in the repository and report any errors.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6209

Test Plan:

'make check' with and without seeded syntax errors. Also look for "No syntax errors in 34 .py files" on success, and in java_test CI output

Differential Revision: D19166756

Pulled By: pdillinger

fbshipit-source-id: 537df464b767260d66810b4cf4c9808a026c58a4

Summary:

Besides extending index_type to kHashSearch, it clarifies in the code base that this feature is incompatible with index_block_restart_interval > 1.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6210

Test Plan:

```
make -j32 crash_test
```

Differential Revision: D19166567

Pulled By: maysamyabandeh

fbshipit-source-id: 3aaf75a70a8b462d372d43aac69dbd10df303ec7

Summary:

The patch makes it possible to set the BlobDB configuration option `garbage_collection_cutoff` on the command line. In addition, it changes the `db_bench` code so that the default values of BlobDB related parameters are taken from the defaults of the actual BlobDB configuration options (note: this changes the default of `blob_db_bytes_per_sync`).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6211

Test Plan: Ran `db_bench` with various values of the new parameter.

Differential Revision: D19166895

Pulled By: ltamasi

fbshipit-source-id: 305ccdf0123b9db032b744715810babdc3e3b7d5

Summary:

Add an option to db_stress, verify_checksum_one_in, to call DB::VerifyChecksum() once every N ops.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6203

Differential Revision: D19145753

Pulled By: anand1976

fbshipit-source-id: d09edf21f309ad53aa40dd25b7a563d50665fd8b

Summary:

Fix two crash test issues:

1. sync mode should not run with disable_wal=true

2. disable "compaction_readahead_size" for now. With it on, some block checksum verification failures happen in compaction paths. The cause is not yet understood, so disable it for now to keep the test clean.
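
A sketch of fix 1 as a parameter sanitization step. It assumes the crash test resolves the conflict by turning sync off when the WAL is disabled; it could equally drop disable_wal. `CrashTestParams` and `SanitizeSyncAndWal` are hypothetical, and the real script is Python.

```cpp
#include <cassert>

// Hypothetical subset of crash-test parameters.
struct CrashTestParams {
  bool sync = false;
  bool disable_wal = false;
};

// Never combine sync=true with disable_wal=true: with the WAL disabled there
// is no log for a synced write to sync. This sketch keeps disable_wal and
// turns sync off.
CrashTestParams SanitizeSyncAndWal(CrashTestParams p) {
  if (p.disable_wal) {
    p.sync = false;
  }
  return p;
}
```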

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6200

Test Plan: Run "make crash_test" and "make crash_test_with_atomic_flush" and see that they run much longer than before the fix without failing.

Differential Revision: D19143493

fbshipit-source-id: 438fad52fbda60aafd142e1b65578addbe7d72b1

Summary:

Several options are trivially added to the crash test, with random values picked for them.

The simple test run now uses non-dynamic levels, and the normal test run uses dynamic levels.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6176

Test Plan: Run crash_test and watch the printed output

Differential Revision: D19053955

fbshipit-source-id: 958cb43c968541ebd87ed4d91e778bd1d40e7502

Summary:

The current implementation holds on to 10% of snapshots for 10x longer, and 1% of snapshots for 100x longer.
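
A minimal sketch of one way to get those proportions, assuming nested 1-in-10 draws. One in 10 snapshots is held 10x longer, and 1 in 10 of those is held another 10x, so about 1% are held 100x longer. `SnapshotHoldOps` and `FractionHeldAtLeast` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>
#include <random>

// Hypothetical sketch: extend the hold time of 1 in 10 snapshots by 10x and,
// among those, extend 1 in 10 again by another 10x.
uint64_t SnapshotHoldOps(uint64_t base_hold_ops, std::mt19937& rng) {
  std::uniform_int_distribution<int> one_in_ten(0, 9);
  uint64_t hold = base_hold_ops;
  if (one_in_ten(rng) == 0) {
    hold *= 10;
    if (one_in_ten(rng) == 0) {
      hold *= 10;
    }
  }
  return hold;
}

// Fraction of snapshots held at least `multiplier` times the base duration.
double FractionHeldAtLeast(uint64_t multiplier, int trials, unsigned seed) {
  std::mt19937 rng(seed);
  int count = 0;
  for (int i = 0; i < trials; ++i) {
    if (SnapshotHoldOps(1, rng) >= multiplier) {
      ++count;
    }
  }
  return static_cast<double>(count) / trials;
}
```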

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6171

Test Plan:

```
make -j32 crash_test
```

Differential Revision: D19038399

Pulled By: maysamyabandeh

fbshipit-source-id: 75da2dbb5c47a0b3f37d299b8719e392b73b42c0

Summary:

The current Env API encompasses both storage/file operations and OS-related operations. Most of the APIs return a Status, which does not carry enough metadata about an error (such as whether it is retryable, or the scope, i.e. fault domain, of the error) that may be required to properly handle a storage error. The file APIs also do not provide enough control over the IO SLA, such as timeout, prioritization, and hints about placement and redundancy.

This PR separates out the file/storage APIs from Env into a new FileSystem class. The APIs are updated to return an IOStatus with metadata about the error, as well as to take an IOOptions structure as input in order to allow more control over the IO.

The user can set both ```options.env``` and ```options.file_system``` to specify that RocksDB should use the former for OS related operations and the latter for storage operations. Internally, a ```CompositeEnvWrapper``` has been introduced that inherits from ```Env``` and redirects individual methods to either an ```Env``` implementation or the ```FileSystem``` as appropriate. When options are sanitized during ```DB::Open```, ```options.env``` is replaced with a newly allocated ```CompositeEnvWrapper``` instance if both env and file_system have been specified. This way, the rest of the RocksDB code can continue to function as before.

This PR also ports PosixEnv to the new API by splitting it into two classes: PosixEnv and PosixFileSystem. PosixEnv is defined as a subclass of CompositeEnvWrapper, and threading/time functions are overridden with Posix-specific implementations in order to avoid an extra level of indirection.

The ```CompositeEnvWrapper``` translates ```IOStatus``` return code to ```Status```, and sets the severity to ```kSoftError``` if the io_status is retryable. The error handling code in RocksDB can then recover the DB automatically.
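
To make the routing and status translation concrete, here is a minimal sketch under simplified assumptions. `OsOps`, `FileOps`, `CompositeOps`, `IOStatusLite`, `StatusLite` and the two-value `Severity` are hypothetical stand-ins, not the real RocksDB `Env`, `FileSystem`, `CompositeEnvWrapper`, `IOStatus` or `Status` classes, and only one method of each kind is shown. Non-retryable errors keep the default severity here, since the text only specifies the retryable case.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Hypothetical, simplified stand-ins; NOT the real RocksDB IOStatus/Status.
struct IOStatusLite {
  bool ok = true;
  bool retryable = false;
  std::string message;
};

enum class Severity { kNoError, kSoftError };

struct StatusLite {
  bool ok = true;
  Severity severity = Severity::kNoError;
  std::string message;
};

// Translate an IO status into a plain status. Retryable IO errors are marked
// as soft errors so that error handling can try to recover automatically.
StatusLite ToStatus(const IOStatusLite& io) {
  StatusLite s;
  s.ok = io.ok;
  s.message = io.message;
  if (!io.ok && io.retryable) {
    s.severity = Severity::kSoftError;
  }
  return s;
}

// Hypothetical OS-related interface (time, threads, ...).
class OsOps {
 public:
  virtual ~OsOps() = default;
  virtual uint64_t NowMicros() = 0;
};

// Hypothetical storage interface returning IO statuses.
class FileOps {
 public:
  virtual ~FileOps() = default;
  virtual IOStatusLite DeleteFile(const std::string& path) = 0;
};

// Forwards OS calls to OsOps and storage calls to FileOps, converting storage
// results to StatusLite for callers written against the old interface.
class CompositeOps {
 public:
  CompositeOps(OsOps* os, FileOps* fs) : os_(os), fs_(fs) {}
  uint64_t NowMicros() { return os_->NowMicros(); }
  StatusLite DeleteFile(const std::string& path) {
    return ToStatus(fs_->DeleteFile(path));
  }

 private:
  OsOps* os_;
  FileOps* fs_;
};

// Test doubles used to exercise the routing.
class FixedClock : public OsOps {
 public:
  uint64_t NowMicros() override { return 42; }
};

class FlakyFs : public FileOps {
 public:
  IOStatusLite DeleteFile(const std::string& path) override {
    return IOStatusLite{false, true, "transient error deleting " + path};
  }
};
```

In this design the callers see only `CompositeOps`, which mirrors the idea that the rest of the code "can continue to function as before" while storage calls go through the new interface.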

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5761

Differential Revision: D18868376

Pulled By: anand1976

fbshipit-source-id: 39efe18a162ea746fabac6360ff529baba48486f

Summary:

With WritePrepared transactions configured with two_write_queues, unordered_write will offer the same guarantees as vanilla rocksdb and thus can be enabled in stress tests.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6164

Test Plan:

```
make -j32 crash_test_with_txn
```

Differential Revision: D18991899

Pulled By: maysamyabandeh

fbshipit-source-id: eece5e96b4169b67d7931e5c0afca88540a113e1

Summary:

Currently the default txn write policy in crash tests is WRITE_PREPARED. The patch randomly picks the write policy at the start of the crash test.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6158

Test Plan:

```

make -j32 crash_test_with_txn

```

Differential Revision: D18946307

Pulled By: maysamyabandeh

fbshipit-source-id: f77d7a94f99a08791ef9626da153d284bf521950

Summary:

Add an option to explicitly disable building shared versions of the RocksDB libraries. The shared libraries cannot be built in cases where some dependencies are only available as static libraries. This still allows building RocksDB in these situations.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6122

Differential Revision: D18920740

fbshipit-source-id: d24f66d93c68a1e65635e6e0b663bae62c903bca

Summary:

Especially with non-integral bits/key now supported, db_crashtest should vary the bloom_bits configuration. The probabilities look like this:

1/2 chance of a uniform int from 0 to 19. This includes an overall 1/40 chance of 0, which disables the bloom filter.

1/2 chance of a float from a lognormal distribution with a median of 10. This always produces positive values, but with a decent chance of < 1 (overall ~1/40) or > 100 (overall ~1/40), the enforced/coerced implementation limits.
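
The distribution above can be sketched as follows. This is a hypothetical C++ illustration; the actual crash test logic lives in Python. The lognormal spread `kSigma = 1.4` is an assumption, chosen so that about 1/20 of lognormal draws fall below 1. Because ln(100) - ln(10) = ln(10) - ln(1), the same fraction lands above 100, which matches the ~1/40 overall figures. `PickBloomBits`, `BloomBitsStats` and `SampleBloomBits` are invented names.

```cpp
#include <cassert>
#include <cmath>
#include <random>

// Hypothetical sketch of the bloom_bits choice described above.
double PickBloomBits(std::mt19937& rng) {
  // Assumed spread: ln(10) / 1.4 is about 1.645 standard deviations, so
  // roughly 5% of lognormal draws fall below 1 and 5% above 100.
  constexpr double kSigma = 1.4;
  std::bernoulli_distribution coin(0.5);
  if (coin(rng)) {
    // Uniform integer in [0, 19]; 0 disables the Bloom filter.
    std::uniform_int_distribution<int> uniform_bits(0, 19);
    return uniform_bits(rng);
  }
  // A lognormal distribution's median is exp(m), so m = ln(10) gives median 10.
  std::lognormal_distribution<double> lognormal(std::log(10.0), kSigma);
  return lognormal(rng);
}

// Empirical frequencies of the notable cases over many draws.
struct BloomBitsStats {
  double zero = 0;             // Bloom filter disabled
  double between_0_and_1 = 0;  // 0 < bits < 1
  double above_100 = 0;        // bits > 100
};

BloomBitsStats SampleBloomBits(int trials, unsigned seed) {
  std::mt19937 rng(seed);
  BloomBitsStats stats;
  for (int i = 0; i < trials; ++i) {
    const double bits = PickBloomBits(rng);
    if (bits == 0.0) {
      stats.zero += 1;
    } else if (bits < 1.0) {
      stats.between_0_and_1 += 1;
    } else if (bits > 100.0) {
      stats.above_100 += 1;
    }
  }
  stats.zero /= trials;
  stats.between_0_and_1 /= trials;
  stats.above_100 /= trials;
  return stats;
}
```

The sketch does not clamp out-of-range values. Per the text, values below 1 or above 100 are coerced by the implementation, not by the generator.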

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6103

Test Plan:

start 'make blackbox_crash_test' several times and look at configuration output

Differential Revision: D18734877

Pulled By: pdillinger

fbshipit-source-id: 4a38cb057d3b3fc1327f93199f65b9a9ffbd7316

Summary:

db_stress_tool.cc is now a giant file. To make it easier to improve and maintain, break it down into multiple source files.

Most classes are moved into their own files. Separate .h and .cc files are created for gflag definitions, and another pair of .h and .cc files for some common functions. Some test execution logic that is only loosely related to class StressTest is moved to db_stress_driver.h and db_stress_driver.cc. All the files are located under db_stress_tool/. The directory is named this way because if its name ended with either stress or test, .gitignore would ignore every file under it, making it prone to issues during development.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6134

Test Plan: Build under GCC7 with and without LITE on using GNU Make. Build with GCC 4.8. Build with cmake with -DWITH_TOOL=1

Differential Revision: D18876064

fbshipit-source-id: b25d0a7451840f31ac0f5ebb0068785f783fdf7d

Summary:

PR https://github.com/facebook/rocksdb/issues/5937 changed the db_stress tool to also require db_stress_tool.cc, and updated the Makefile but not the CMakeLists.txt file. This updates the CMakeLists.txt file so that the CMake build succeeds again.

PR https://github.com/facebook/rocksdb/issues/5950 updated the Makefile build to package db_stress_tool.cc into its own librocksdb_stress.a library. I haven't done that here since there didn't really seem to be much benefit: the Makefile-based build does not install this library.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6117

Test Plan: Confirmed the CMake build succeeds on an Ubuntu 18.04 system.

Differential Revision: D18835053

Pulled By: riversand963

fbshipit-source-id: 6e2a66834716e73b1eb736d9b7159870defffec5

Summary:

```

In file included from /usr/include/c++/4.8.2/algorithm:62:0,

from ./db/merge_context.h:7,

from ./db/dbformat.h:16,

from ./tools/block_cache_analyzer/block_cache_trace_analyzer.h:12,

from tools/block_cache_analyzer/block_cache_trace_analyzer.cc:8:

/usr/include/c++/4.8.2/bits/stl_algo.h: In instantiation of ‘_RandomAccessIterator std::__unguarded_partition(_RandomAccessIterator, _RandomAccessIterator, const _Tp&, _Compare) [with _RandomAccessIterator = __gnu_cxx::__normal_iterator<std::pair<std::basic_string<char>, long unsigned int>*, std::vector<std::pair<std::basic_string<char>, long unsigned int> > >; _Tp = std::pair<std::basic_string<char>, long unsigned int>; _Compare = rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1]’:

/usr/include/c++/4.8.2/bits/stl_algo.h:2296:78: required from ‘_RandomAccessIterator std::__unguarded_partition_pivot(_RandomAccessIterator, _RandomAccessIterator, _Compare) [with _RandomAccessIterator = __gnu_cxx::__normal_iterator<std::pair<std::basic_string<char>, long unsigned int>*, std::vector<std::pair<std::basic_string<char>, long unsigned int> > >; _Compare = rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1]’

/usr/include/c++/4.8.2/bits/stl_algo.h:2337:62: required from ‘void std::__introsort_loop(_RandomAccessIterator, _RandomAccessIterator, _Size, _Compare) [with _RandomAccessIterator = __gnu_cxx::__normal_iterator<std::pair<std::basic_string<char>, long unsigned int>*, std::vector<std::pair<std::basic_string<char>, long unsigned int> > >; _Size = long int; _Compare = rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1]’

/usr/include/c++/4.8.2/bits/stl_algo.h:5499:44: required from ‘void std::sort(_RAIter, _RAIter, _Compare) [with _RAIter = __gnu_cxx::__normal_iterator<std::pair<std::basic_string<char>, long unsigned int>*, std::vector<std::pair<std::basic_string<char>, long unsigned int> > >; _Compare = rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1’

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:583:79: required from here

/usr/include/c++/4.8.2/bits/stl_algo.h:2263:35: error: no match for call to ‘(rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1) (std::pair<std::basic_string<char>, long unsigned int>&, const std::pair<std::basic_string<char>, long unsigned int>&)’

while (__comp(*__first, __pivot))

^

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:582:9: note: candidates are:

[=](std::pair<std::string, uint64_t>& a,

^

In file included from /usr/include/c++/4.8.2/algorithm:62:0,

from ./db/merge_context.h:7,

from ./db/dbformat.h:16,

from ./tools/block_cache_analyzer/block_cache_trace_analyzer.h:12,

from tools/block_cache_analyzer/block_cache_trace_analyzer.cc:8:

/usr/include/c++/4.8.2/bits/stl_algo.h:2263:35: note: bool (*)(std::pair<std::basic_string<char>, long unsigned int>&, std::pair<std::basic_string<char>, long unsigned int>&) <conversion>

while (__comp(*__first, __pivot))

^

/usr/include/c++/4.8.2/bits/stl_algo.h:2263:35: note: candidate expects 3 arguments, 3 provided

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:583:46: note: rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1

std::pair<std::string, uint64_t>& b) { return b.second < a.second; });

^

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:583:46: note: no known conversion for argument 2 from ‘const std::pair<std::basic_string<char>, long unsigned int>’ to ‘std::pair<std::basic_string<char>, long unsigned int>&’

In file included from /usr/include/c++/4.8.2/algorithm:62:0,

from ./db/merge_context.h:7,

from ./db/dbformat.h:16,

from ./tools/block_cache_analyzer/block_cache_trace_analyzer.h:12,

from tools/block_cache_analyzer/block_cache_trace_analyzer.cc:8:

/usr/include/c++/4.8.2/bits/stl_algo.h:2266:34: error: no match for call to ‘(rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1) (const std::pair<std::basic_string<char>, long unsigned int>&, std::pair<std::basic_string<char>, long unsigned int>&)’

while (__comp(__pivot, *__last))

^

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:582:9: note: candidates are:

[=](std::pair<std::string, uint64_t>& a,

^

In file included from /usr/include/c++/4.8.2/algorithm:62:0,

from ./db/merge_context.h:7,

from ./db/dbformat.h:16,

from ./tools/block_cache_analyzer/block_cache_trace_analyzer.h:12,

from tools/block_cache_analyzer/block_cache_trace_analyzer.cc:8:

/usr/include/c++/4.8.2/bits/stl_algo.h:2266:34: note: bool (*)(std::pair<std::basic_string<char>, long unsigned int>&, std::pair<std::basic_string<char>, long unsigned int>&) <conversion>

while (__comp(__pivot, *__last))

^

/usr/include/c++/4.8.2/bits/stl_algo.h:2266:34: note: candidate expects 3 arguments, 3 provided

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:583:46: note: rocksdb::BlockCacheTraceAnalyzer::WriteSkewness(const string&, const std::vector<long unsigned int>&, rocksdb::TraceType) const::__lambda1

std::pair<std::string, uint64_t>& b) { return b.second < a.second; });

^

tools/block_cache_analyzer/block_cache_trace_analyzer.cc:583:46: note: no known conversion for argument 1 from ‘const std::pair<std::basic_string<char>, long unsigned int>’ to ‘std::pair<std::basic_string<char>, long unsigned int>&’

```
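
The log shows the `std::sort` comparator at block_cache_trace_analyzer.cc:582 taking non-const `std::pair<std::string, uint64_t>&` parameters, while GCC 4.8's `std::sort` passes a `const` pivot to it ("no known conversion ... from 'const std::pair<...>' to 'std::pair<...>&'"). Below is a minimal sketch of the usual fix: take the parameters by const reference. It assumes the intent is to sort (name, count) pairs by count in descending order, and `SortByCountDescending` is a hypothetical name, not the actual patch.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <string>
#include <utility>
#include <vector>

// Sort (name, count) pairs by count, largest first. The comparator takes its
// arguments by const reference, because std::sort may call it with a const
// pivot element; non-const reference parameters then fail to bind.
void SortByCountDescending(std::vector<std::pair<std::string, uint64_t>>* pairs) {
  std::sort(pairs->begin(), pairs->end(),
            [](const std::pair<std::string, uint64_t>& a,
               const std::pair<std::string, uint64_t>& b) {
              return b.second < a.second;
            });
}
```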

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6106

Differential Revision: D18783943

Pulled By: riversand963

fbshipit-source-id: cc7fc10565f0210b9eebf46b95cb4950ec0b15fa

Summary:

format_version=5 enables the new Bloom filter. Use a 2/5 probability for "latest and greatest" rather than the naive 1/4.
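
A minimal sketch of the weighting trick, assuming the candidate format versions are 2 through 5. The text only implies that there are four candidates. Listing 5 twice makes a uniform pick return it with probability 2/5. The C++ names are hypothetical; the crash test script itself is Python.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <random>

// Assumed candidate list: versions 2..5, with 5 listed twice so that a
// uniform pick selects it with probability 2/5 instead of 1/4.
constexpr std::array<int, 5> kFormatVersionChoices = {2, 3, 4, 5, 5};

int PickFormatVersion(std::mt19937& rng) {
  std::uniform_int_distribution<std::size_t> index(
      0, kFormatVersionChoices.size() - 1);
  return kFormatVersionChoices[index(rng)];
}

// Empirical fraction of picks that return the latest version (5).
double FractionLatest(int trials, unsigned seed) {
  std::mt19937 rng(seed);
  int latest = 0;
  for (int i = 0; i < trials; ++i) {
    if (PickFormatVersion(rng) == 5) {
      ++latest;
    }
  }
  return static_cast<double>(latest) / trials;
}
```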

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6102

Test Plan: start 'make blackbox_crash_test'

Differential Revision: D18735685

Pulled By: pdillinger

fbshipit-source-id: e81529c8a3f53560d246086ee5f92ee7d79a2eab

Summary:

**NOTE**: this also needs to be back-ported to 6.4.6 and possibly older branches if further releases from them are envisaged.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6081

Differential Revision: D18710107

Pulled By: zhichao-cao

fbshipit-source-id: 03260f9316566e2bfc12c7d702d6338bb7941e01

Summary:

Recently, a bug was found related to a seek key that is close to an SST file boundary. However, it occurs only with a very small probability in db_stress, because the chance that a random key hits an SST file boundary is small. To boost the chance, with 1/16 probability we pick keys that are close to SST file boundaries.
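
A hypothetical sketch of the key-picking idea, not the db_stress implementation. With probability 1/16 it picks a key within `radius` of a randomly chosen boundary, clamped to the key space; otherwise it picks a uniform key. Representing boundaries as numeric keys, and the names `PickSeekKey`, `FractionNearBoundary` and `radius`, are all assumptions.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <random>
#include <vector>

// Hypothetical sketch. With probability 1/16, pick a key within `radius` of a
// randomly chosen SST file boundary; otherwise pick a uniform key in
// [0, max_key). Boundaries are assumed to lie in [0, max_key).
uint64_t PickSeekKey(std::mt19937_64& rng, uint64_t max_key,
                     const std::vector<uint64_t>& boundaries, uint64_t radius) {
  assert(max_key > 0);
  std::uniform_int_distribution<int> one_in_16(0, 15);
  if (!boundaries.empty() && one_in_16(rng) == 0) {
    std::uniform_int_distribution<std::size_t> pick(0, boundaries.size() - 1);
    const uint64_t boundary = boundaries[pick(rng)];
    // Clamp [boundary - radius, boundary + radius] to [0, max_key - 1]
    // without unsigned underflow or overflow.
    const uint64_t lo = boundary > radius ? boundary - radius : 0;
    const uint64_t hi =
        max_key - 1 - boundary > radius ? boundary + radius : max_key - 1;
    std::uniform_int_distribution<uint64_t> close_to_boundary(lo, hi);
    return close_to_boundary(rng);
  }
  std::uniform_int_distribution<uint64_t> uniform(0, max_key - 1);
  return uniform(rng);
}

// Fraction of picks landing within `radius` of a single boundary.
double FractionNearBoundary(uint64_t max_key, uint64_t boundary,
                            uint64_t radius, int trials, unsigned seed) {
  std::mt19937_64 rng(seed);
  const std::vector<uint64_t> boundaries = {boundary};
  int close_count = 0;
  for (int i = 0; i < trials; ++i) {
    const uint64_t key = PickSeekKey(rng, max_key, boundaries, radius);
    const uint64_t dist = key > boundary ? key - boundary : boundary - key;
    if (dist <= radius) {
      ++close_count;
    }
  }
  return static_cast<double>(close_count) / trials;
}
```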

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6037

Test Plan: Did some manual printing, and hacked the code to check that the key generation logic is correct.

Differential Revision: D18598476

fbshipit-source-id: 13b76687d106c5be4e3e02a0c77fa5578105a071

Summary:

Right now, in db_stress, as long as a prefix extractor is defined, TestIterator always uses prefix seek. There is value in covering total_order_seek = true when a prefix extractor is defined. Add a small chance that this flag is turned on.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6039

Test Plan: Run the test for a while.

Differential Revision: D18539689

fbshipit-source-id: 568790dd7789c9986b83764b870df0423a122d99

Summary:

Right now, crash_test always uses a 16KB max_manifest_file_size value. This is good for covering the manifest file switch logic. However, information stored in manifest files might be useful in debugging failures. Switch to using the small manifest file size only 1/15 of the time.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6034

Test Plan: Observe the command generated by db_crashtest.py multiple times and see the --max_manifest_file_size value distribution.

Differential Revision: D18513824

fbshipit-source-id: 7b3ae6dbe521a0918df41064e3fa5ecbf2466e04

Summary:

Right now, db_stress doesn't cover SeekForPrev(). Add the coverage, which mirrors what we do for Seek().

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6022

Test Plan: Run "make crash_test". Manually hacked the source code to simulate wrong iterator results and saw them caught.

Differential Revision: D18442193

fbshipit-source-id: 879b79000d5e33c625c7e970636de191ccd7776c

Summary:

In stress test, all iterator verification is turned off if a lower bound is enabled. This might be stricter than needed. This PR relaxes the condition and includes the case where the lower bound is lower than both the seek key and the upper bound. It seems to work mostly fine when I run crash test locally.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5869

Test Plan: Run crash_test

Differential Revision: D18363578

fbshipit-source-id: 23d57e11ea507949b8100f4190ddfbe8db052d5a

Summary:

Right now, in db_stress's CF consistency test's TestGet case, if a failure happens, we print plain strings rather than hex, so some content (such as non-printable bytes) does not show up, which makes debugging harder. Fix it by printing hex instead.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5989

Test Plan: Build db_stress and see it pass.

Differential Revision: D18363552

fbshipit-source-id: 09d1b8f6fbff37441cbe7e63a1aef27551226cec

Summary:

In the previous PR https://github.com/facebook/rocksdb/issues/4788, users can use the db_bench mixgraph benchmark to generate a workload modeled on the social graph. The key is generated based on key access hotness. In this PR, users can further model key-range hotness and fit it to a two-term exponential distribution. First, the user cuts the whole key space into small key ranges (e.g., key ranges of equal size, with the number of key ranges equal to the number of SST files). Then, the user calculates the average access count per key in each key range as the key-range hotness. Next, the user fits the key-range hotness to a two-term exponential distribution (f(x) = a*exp(b*x) + c*exp(d*x)) and obtains the values of a, b, c, and d. These are the db_bench parameters keyrange_dist_a, keyrange_dist_b, keyrange_dist_c, and keyrange_dist_d. Finally, the user can run db_bench by specifying these parameters.

For example:

`./db_bench --benchmarks="mixgraph" -use_direct_io_for_flush_and_compaction=true -use_direct_reads=true -cache_size=268435456 -key_dist_a=0.002312 -key_dist_b=0.3467 -keyrange_dist_a=14.18 -keyrange_dist_b=-2.917 -keyrange_dist_c=0.0164 -keyrange_dist_d=-0.08082 -keyrange_num=30 -value_k=0.2615 -value_sigma=25.45 -iter_k=2.517 -iter_sigma=14.236 -mix_get_ratio=0.85 -mix_put_ratio=0.14 -mix_seek_ratio=0.01 -sine_mix_rate_interval_milliseconds=5000 -sine_a=350 -sine_b=0.0105 -sine_d=50000 --perf_level=2 -reads=1000000 -num=5000000 -key_size=48`
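
A small sketch of evaluating the fitted model, using the keyrange_dist_* values from the example command above (a=14.18, b=-2.917, c=0.0164, d=-0.08082, keyrange_num=30). How db_bench turns f(x) into per-range access probabilities is not described here. The normalization below, treating x as a key-range index, and the names `TwoTermExp` and `KeyRangeProbabilities` are all assumptions.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Two-term exponential model of key-range hotness: f(x) = a*e^(b*x) + c*e^(d*x).
struct TwoTermExp {
  double a, b, c, d;
  double operator()(double x) const {
    return a * std::exp(b * x) + c * std::exp(d * x);
  }
};

// Hypothetical use: evaluate f at key-range indices 0..num_ranges-1 and
// normalize the results into per-range access probabilities.
std::vector<double> KeyRangeProbabilities(const TwoTermExp& f, int num_ranges) {
  std::vector<double> probs(static_cast<std::size_t>(num_ranges));
  double total = 0;
  for (int i = 0; i < num_ranges; ++i) {
    probs[i] = f(i);
    total += probs[i];
  }
  for (double& p : probs) {
    p /= total;
  }
  return probs;
}
```

With these parameters, f(0) = a + c = 14.1964, and because b and d are negative, hotness decreases monotonically with the range index.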

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5953

Test Plan: run db_bench with different parameters and checked the results.

Differential Revision: D18053527

Pulled By: zhichao-cao

fbshipit-source-id: 171f8b3142bd76462f1967c58345ad7e4f84bab7

Summary:

DBImpl extends the public GetSnapshot() with a GetSnapshotForWriteConflictBoundary() method that takes snapshots specifically for write-write conflict checking. Compaction treats such snapshots differently to avoid GCing a value written after them, so that the write conflict remains visible even after the compaction. The patch extends stress tests with such snapshots.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5897

Differential Revision: D17937476

Pulled By: maysamyabandeh

fbshipit-source-id: bd8b0c578827990302194f63ae0181e15752951d

Summary:

This reverts commit 351e25401b.

All branches have been fixed to be buildable in FB environments, so we can revert it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5999

Differential Revision: D18281947

fbshipit-source-id: 6deaaf1b5df2349eee5d6ed9b91208cd7e23ec8e

Summary:

A recent commit made the periodic compaction option valid in FIFO compaction, where it means TTL. But we failed to disable it in crash test, causing an assert failure. Fix it by disabling it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5993

Test Plan: Restart "make crash_test" many times and make sure --periodic_compaction_seconds=0 is always the case when --compaction_style=2
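
The constraint being tested can be sketched as a sanitization step. This is a hypothetical C++ illustration; the crash test script itself is Python. `CompactionParams` and `SanitizePeriodicCompaction` are invented for the example, and the sketch relies on compaction_style=2 meaning FIFO, as in the test plan above.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical crash-test parameter subset.
struct CompactionParams {
  int compaction_style = 0;
  uint64_t periodic_compaction_seconds = 0;
};

constexpr int kCompactionStyleFifo = 2;  // --compaction_style=2

// With FIFO compaction, periodic_compaction_seconds acts as a TTL, so keep it
// disabled (0) whenever FIFO is chosen.
CompactionParams SanitizePeriodicCompaction(CompactionParams p) {
  if (p.compaction_style == kCompactionStyleFifo) {
    p.periodic_compaction_seconds = 0;
  }
  return p;
}
```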

Differential Revision: D18263223

fbshipit-source-id: c91a802017d83ae89ac43827d1b0012861933814

Summary:

We have updated earlier release branches going back to 5.5 so they are built using gcc7 by default. Disabling ancient versions before that until we figure out a plan for them.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5990

Test Plan: Ran the script locally.

Differential Revision: D18252386

Pulled By: ltamasi

fbshipit-source-id: a7bbb30dc52ff2eaaf31a29ecc79f7cf4e2834dc

Summary:

Recently, pipelined write was enabled even when atomic flush is enabled, which causes sanitization failures in db_stress. Revert this change.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5986

Test Plan: Run "make crash_test_with_atomic_flush" and see it run for a while, so that the old sanitization error (which showed up quickly) no longer appears.

Differential Revision: D18228278

fbshipit-source-id: 27fdf2f8e3e77068c9725a838b9bef4ab25a2553

Summary:

More release branches have been created. We should include them in continuous format compatibility checks.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5985

Test Plan: Let's see whether it passes.

Differential Revision: D18226532

fbshipit-source-id: 75d8cad5b03ccea4ce16f00cea1f8b7893b0c0c8

Summary:

In pipeline writing mode, memtable switching needs to wait for memtable writing to finish to make sure that when memtables are made immutable, inserts are not going to them. This is currently done in DBImpl::SwitchMemtable(). This is done after flush_scheduler_.TakeNextColumnFamily() is called to fetch the list of column families to switch. The function flush_scheduler_.TakeNextColumnFamily() itself, however, is not thread-safe when being called together with flush_scheduler_.ScheduleFlush().

This change provides a fix, which moves the waiting logic before flush_scheduler_.TakeNextColumnFamily(). WaitForPendingWrites() is a natural place where the logic can happen.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5716

Test Plan: Run all tests with ASAN and TSAN.

Differential Revision: D18217658

fbshipit-source-id: b9c5e765c9989645bf10afda7c5c726c3f82f6c3

Summary:

Right now, in db_stress's iterator tests, we always use the same CF to validate iterator results. This commit changes it so that a randomized CF is used in the CF consistency test, where every CF should have exactly the same data. This would help catch more bugs.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5983

Test Plan: Run "make crash_test_with_atomic_flush".

Differential Revision: D18217643

fbshipit-source-id: 3ac998852a0378bb59790b20c5f236f6a5d681fe

Summary:

Right now in the CF consistency stress test's TestGet(), keys are just fetched without validation. With this change, half of the time, verify that all the CFs return the same value for the same key.
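
A minimal sketch of such a cross-CF comparison. It assumes each CF's read result is represented as `std::optional<std::string>`, with an empty optional meaning NotFound. `AllColumnFamiliesAgree` is a hypothetical helper, not db_stress code.

```cpp
#include <cassert>
#include <cstddef>
#include <optional>
#include <string>
#include <vector>

// Hypothetical check: given the result of reading the same key from every
// column family (std::nullopt meaning "not found"), report whether all
// column families agree, including agreeing that the key is absent.
bool AllColumnFamiliesAgree(const std::vector<std::optional<std::string>>& values) {
  for (std::size_t i = 1; i < values.size(); ++i) {
    if (values[i] != values[0]) {
      return false;
    }
  }
  return true;
}
```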

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5863

Test Plan: Run "make crash_test_with_atomic_flush" and see tests pass. Hack the code to generate some inconsistency and observe the test fails as expected.

Differential Revision: D17934206

fbshipit-source-id: 00ba1a130391f28785737b677f80f366fb83cced

Summary:

Since we already parse env_uri from the command line and create a custom Env accordingly, we should invoke the methods of such Envs instead of using Env::Default().

Test Plan (on devserver):

```

$make db_bench db_stress

$./db_bench -benchmarks=fillseq

$./db_stress

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5943

Differential Revision: D18018550

Pulled By: riversand963

fbshipit-source-id: 03b61329aaae0dfd914a0b902cc677f570f102e3

Summary:

Since SeekForPrev (used by Prev) is not supported by HashSkipList when prefix is used, we disable it when stress testing HashSkipList.

- Change the default memtablerep to skip list.

- Avoid Prev() when memtablerep is HashSkipList and prefix is used.

Test Plan (on devserver):

```

$make db_stress

$./db_stress -ops_per_thread=10000 -reopen=1 -destroy_db_initially=true -column_families=1 -threads=1 -column_families=1 -memtablerep=prefix_hash

$# or simply

$./db_stress

$./db_stress -memtablerep=prefix_hash

```

Results must print "Verification successful".

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5942

Differential Revision: D18017062

Pulled By: riversand963

fbshipit-source-id: af867e59aa9e6f533143c984d7d529febf232fd7

Summary:

Plain table SSTs could crash sst_dump because of a bug in PlainTableReader that can leave table_properties_ as null. Even if it was intended not to keep the table properties in some cases, they were leaked on the offending code path.

Steps to reproduce:

```
$ db_bench --benchmarks=fillrandom --num=2000000 --use_plain_table --prefix-size=12
$ sst_dump --file=0000xx.sst --show_properties
from [] to []
Process /dev/shm/dbbench/000014.sst
Sst file format: plain table
Raw user collected properties
------------------------------
Segmentation fault (core dumped)
```

Also added the missing unit tests for plain table full_scan_mode, and an assertion in NewIterator to catch regressions.
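To illustrate the invariant the fix relies on (a successfully opened reader always holds non-null table properties, owned so that no code path can leak them), here is a hedged C++ sketch. `PlainTableReaderSketch` and `TablePropertiesSketch` are hypothetical simplifications, not RocksDB's `PlainTableReader` or `TableProperties` APIs.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <string>
#include <utility>

// Hypothetical, heavily simplified stand-in for TableProperties.
struct TablePropertiesSketch {
  uint64_t num_entries = 0;
  std::string format = "plain table";
};

// Hypothetical reader: properties are owned by a shared_ptr (so they cannot
// leak) and are set on every successful Open(), including full scan mode.
class PlainTableReaderSketch {
 public:
  static std::unique_ptr<PlainTableReaderSketch> Open(uint64_t num_entries,
                                                      bool full_scan) {
    auto props = std::make_shared<TablePropertiesSketch>();
    props->num_entries = num_entries;
    // Keep the properties in every mode instead of dropping them on one path.
    return std::unique_ptr<PlainTableReaderSketch>(
        new PlainTableReaderSketch(std::move(props), full_scan));
  }

  // Never null for an opened reader, so a caller such as
  // sst_dump --show_properties can dereference the result safely.
  std::shared_ptr<const TablePropertiesSketch> GetTableProperties() const {
    return table_properties_;
  }

  bool full_scan_mode() const { return full_scan_mode_; }

  // Regression check in the spirit of the assertion added to NewIterator.
  bool NewIteratorPreconditionHolds() const {
    assert(table_properties_ != nullptr);
    return table_properties_ != nullptr;
  }

 private:
  PlainTableReaderSketch(std::shared_ptr<const TablePropertiesSketch> props,
                         bool full_scan)
      : table_properties_(std::move(props)), full_scan_mode_(full_scan) {}

  std::shared_ptr<const TablePropertiesSketch> table_properties_;
  bool full_scan_mode_;
};
```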

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5940

Test Plan: new unit test, manual testing, make check

Differential Revision: D18018145

Pulled By: pdillinger

fbshipit-source-id: 4310c755e824c4cd6f3f86a3abc20dfa417c5e07

Summary:

In the current trace replay, all queries are serialized and issued by a single thread, which may not closely simulate the original application's query patterns. This PR implements multi-threaded replay: users can set the number of threads used to replay the trace, and the queries generated from the trace records are scheduled in the thread pool's job queue.
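The scheduling idea can be sketched with a fixed-size pool of `std::thread` workers draining a shared job queue. This is a standalone sketch under that assumption, not RocksDB's replayer or thread pool: `ReplayPool`, `TraceRecordSketch`, and `ReplayTrace()` are hypothetical names, and each job body is a placeholder for issuing the recorded query.

```cpp
#include <atomic>
#include <cassert>
#include <condition_variable>
#include <functional>
#include <mutex>
#include <queue>
#include <string>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical trace record; a real trace carries much more.
struct TraceRecordSketch {
  std::string op;  // e.g. "Get", "Put"
  std::string key;
};

// Minimal fixed-size thread pool with a shared FIFO job queue.
class ReplayPool {
 public:
  explicit ReplayPool(int num_threads) {
    for (int i = 0; i < num_threads; ++i) {
      workers_.emplace_back([this] { WorkerLoop(); });
    }
  }
  ~ReplayPool() {  // drains remaining jobs, then joins the workers
    {
      std::lock_guard<std::mutex> lock(mu_);
      shutting_down_ = true;
    }
    cv_.notify_all();
    for (auto& t : workers_) t.join();
  }
  void Schedule(std::function<void()> job) {
    {
      std::lock_guard<std::mutex> lock(mu_);
      jobs_.push(std::move(job));
    }
    cv_.notify_one();
  }

 private:
  void WorkerLoop() {
    for (;;) {
      std::function<void()> job;
      {
        std::unique_lock<std::mutex> lock(mu_);
        cv_.wait(lock, [this] { return shutting_down_ || !jobs_.empty(); });
        if (jobs_.empty()) return;  // shutting down with nothing left to run
        job = std::move(jobs_.front());
        jobs_.pop();
      }
      job();
    }
  }
  std::mutex mu_;
  std::condition_variable cv_;
  std::queue<std::function<void()>> jobs_;
  bool shutting_down_ = false;
  std::vector<std::thread> workers_;  // declared last: started after the rest
};

// Replays all records on num_threads threads; returns how many were executed.
int ReplayTrace(const std::vector<TraceRecordSketch>& records, int num_threads) {
  std::atomic<int> executed{0};
  {
    ReplayPool pool(num_threads);
    for (const auto& rec : records) {
      pool.Schedule([&executed, rec] {
        (void)rec;  // a real replayer would issue rec.op on rec.key here
        executed.fetch_add(1);
      });
    }
  }  // pool destructor waits for every scheduled job
  return executed.load();
}
```

With more than one worker, queries from the trace no longer execute in strict trace order, which is the price of approximating the original application's concurrency.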

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5934

Test Plan: tested with make check and a real trace replay.

Differential Revision: D17998098

Pulled By: zhichao-cao

fbshipit-source-id: 87eecf6f7c17a9dc9d7ab29dd2af74f6f60212c8

Summary:

Expose the db stress test by providing db_stress_tool.h as a public header.

This PR does the following:

- adds a new header, db_stress_tool.h, in include/rocksdb/

- renames db_stress.cc to db_stress_tool.cc

- adds a db_stress.cc which simply invokes a test function.

- updates the Makefile accordingly.

Test Plan (dev server):

```
make db_stress
./db_stress
```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5937

Differential Revision: D17997647

Pulled By: riversand963

fbshipit-source-id: 1a8d9994f89ce198935566756947c518f0052410

Summary:

The patch adds a new command line parameter, --decode_blob_index, to sst_dump. If this switch is specified, sst_dump prints blob indexes in a human-readable format: the blob file number, offset, size, and expiration (if applicable) for blob references, and the blob value (and expiration) for inlined blobs.
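The sketch below shows what decoding a blob index for display might involve. It assumes a simplified layout invented for illustration (a one-byte type tag, then varint64 fields: an expiration for the TTL variants, and file number, offset, and size for blob references). It is not guaranteed to match RocksDB's actual BlobIndex encoding and omits details such as compression; all functions and type tags here are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <string>

// Type tags assumed for this sketch only.
constexpr uint8_t kInlinedTTL = 0;
constexpr uint8_t kBlob = 1;
constexpr uint8_t kBlobTTL = 2;

// Appends v as a varint64: 7 bits per byte, high bit set on all but the last.
void AppendVarint64(std::string* dst, uint64_t v) {
  while (v >= 0x80) {
    dst->push_back(static_cast<char>((v & 0x7f) | 0x80));
    v >>= 7;
  }
  dst->push_back(static_cast<char>(v));
}

// Reads one varint64 at *pos; returns false if truncated or too long.
bool ReadVarint64(const std::string& in, size_t* pos, uint64_t* out) {
  uint64_t result = 0;
  for (int shift = 0; shift <= 63 && *pos < in.size(); shift += 7) {
    const uint8_t byte = static_cast<uint8_t>(in[(*pos)++]);
    result |= static_cast<uint64_t>(byte & 0x7f) << shift;
    if ((byte & 0x80) == 0) {
      *out = result;
      return true;
    }
  }
  return false;
}

std::string EncodeBlobRef(std::optional<uint64_t> expiration, uint64_t file_number,
                          uint64_t offset, uint64_t size) {
  std::string out(1, static_cast<char>(expiration ? kBlobTTL : kBlob));
  if (expiration) AppendVarint64(&out, *expiration);
  AppendVarint64(&out, file_number);
  AppendVarint64(&out, offset);
  AppendVarint64(&out, size);
  return out;
}

std::string EncodeInlinedTTL(uint64_t expiration, const std::string& value) {
  std::string out(1, static_cast<char>(kInlinedTTL));
  AppendVarint64(&out, expiration);
  return out + value;
}

// Human-readable rendering in the spirit of --decode_blob_index.
std::optional<std::string> DecodeBlobIndex(const std::string& in) {
  if (in.empty()) return std::nullopt;
  const uint8_t type = static_cast<uint8_t>(in[0]);
  if (type != kInlinedTTL && type != kBlob && type != kBlobTTL) return std::nullopt;
  size_t pos = 1;
  uint64_t expiration = 0;
  if (type != kBlob && !ReadVarint64(in, &pos, &expiration)) return std::nullopt;
  const std::string exp =
      type == kBlob ? std::string() : " expiration: " + std::to_string(expiration);
  if (type == kInlinedTTL) return "[inlined blob] value: " + in.substr(pos) + exp;
  uint64_t file_number = 0, offset = 0, size = 0;
  if (!ReadVarint64(in, &pos, &file_number) || !ReadVarint64(in, &pos, &offset) ||
      !ReadVarint64(in, &pos, &size)) {
    return std::nullopt;
  }
  return "[blob reference] file: " + std::to_string(file_number) +
         " offset: " + std::to_string(offset) + " size: " + std::to_string(size) + exp;
}
```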

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5926

Test Plan:

Used db_bench's BlobDB mode to generate SST files containing blob references with and without expiration, as well as inlined blobs with and without expiration (note: the latter are stored as plain values), and confirmed that sst_dump correctly prints all four types of records.

Differential Revision: D17939077

Pulled By: ltamasi

fbshipit-source-id: edc5f58fee94ba35f6699c6a042d5758f5b3963d

Summary:

Currently, db_bench only supports PutWithTTL operations for BlobDB but not regular Puts. The patch adds support for regular (non-TTL) Puts and also changes the default for blob_db_max_ttl_range to zero, which corresponds to no TTL.
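The write-selection rule can be sketched as follows, assuming TTLs are drawn uniformly from [0, blob_db_max_ttl_range) (the test plan only says "random"). `WriteChoice`, `ChooseWrite()`, and `ChoicesRespectRange()` are hypothetical helpers, not db_bench code.

```cpp
#include <cassert>
#include <cstdint>
#include <random>

// Which write a BlobDB benchmark would issue for one key.
struct WriteChoice {
  bool with_ttl;  // false: plain Put; true: PutWithTTL
  uint64_t ttl;   // only meaningful when with_ttl is true
};

// A max TTL range of 0 (the new default) means a plain Put; otherwise
// PutWithTTL with a TTL drawn from [0, max_ttl_range).
WriteChoice ChooseWrite(uint64_t max_ttl_range, std::mt19937_64& rng) {
  if (max_ttl_range == 0) return {false, 0};
  std::uniform_int_distribution<uint64_t> dist(0, max_ttl_range - 1);
  return {true, dist(rng)};
}

// Draws `samples` choices with a fixed seed and checks each obeys the rule.
bool ChoicesRespectRange(uint64_t max_ttl_range, int samples) {
  std::mt19937_64 rng(42);
  for (int i = 0; i < samples; ++i) {
    const WriteChoice c = ChooseWrite(max_ttl_range, rng);
    const bool ok = max_ttl_range == 0 ? !c.with_ttl
                                       : (c.with_ttl && c.ttl < max_ttl_range);
    if (!ok) return false;
  }
  return true;
}
```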

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5921

Test Plan:

make check

./db_bench -benchmarks=fillrandom -statistics -stats_interval_seconds=1 -duration=90 -num=500000 -use_blob_db=1 -blob_db_file_size=1000000 -target_file_size_base=1000000 (issues Put operations with no TTL)

./db_bench -benchmarks=fillrandom -statistics -stats_interval_seconds=1 -duration=90 -num=500000 -use_blob_db=1 -blob_db_file_size=1000000 -target_file_size_base=1000000 -blob_db_max_ttl_range=86400 (issues PutWithTTL operations with random TTLs in the [0, blob_db_max_ttl_range) interval, as before)

Differential Revision: D17919798

Pulled By: ltamasi

fbshipit-source-id: b946c3522b836b92b4c157ffbad24f92ba2b0a16

Summary:

The loop in OperateDb() is getting quite complicated with the introduction of multiple key operations such as MultiGet and Reseeks. This results in a number of corner cases that hang db_stress due to synchronization problems during reopen (i.e., when the -reopen=<> option is specified). This PR makes the logic more robust by ensuring that all db_stress threads vote to reopen the DB exactly the same number of times.

Most of the changes in this diff are due to indentation.
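Why the vote count must match across threads shows up clearly in a round-based vote, sketched below under the assumption that the last thread to vote performs the reopen while the others wait for it. If one thread voted fewer times than the rest, the others would block forever, which is the kind of hang described above. `ReopenVote` and `RunStress()` are hypothetical names, not db_stress code.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <thread>
#include <vector>

class ReopenVote {
 public:
  explicit ReopenVote(int num_threads) : num_threads_(num_threads) {}

  // Blocks until every thread has voted in the current round.
  void Vote() {
    std::unique_lock<std::mutex> lock(mu_);
    const int my_round = round_;
    if (++votes_ == num_threads_) {
      ++reopens_;  // placeholder: a real test would close and reopen the DB
      votes_ = 0;
      ++round_;
      cv_.notify_all();
    } else {
      cv_.wait(lock, [&] { return round_ != my_round; });
    }
  }

  int reopens() const {
    std::lock_guard<std::mutex> lock(mu_);
    return reopens_;
  }

 private:
  mutable std::mutex mu_;
  std::condition_variable cv_;
  const int num_threads_;
  int votes_ = 0;
  int round_ = 0;
  int reopens_ = 0;
};

// Every thread votes at the same op counts, so each votes exactly
// ops_per_thread / reopen_interval times and no thread is left waiting.
int RunStress(int num_threads, int ops_per_thread, int reopen_interval) {
  ReopenVote vote(num_threads);
  std::vector<std::thread> threads;
  for (int t = 0; t < num_threads; ++t) {
    threads.emplace_back([&vote, ops_per_thread, reopen_interval] {
      for (int op = 1; op <= ops_per_thread; ++op) {
        // ... one stress operation would run here ...
        if (op % reopen_interval == 0) vote.Vote();
      }
    });
  }
  for (auto& th : threads) th.join();
  return vote.reopens();
}
```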

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5893

Test Plan: Run crash test

Differential Revision: D17823827

Pulled By: anand1976

fbshipit-source-id: ec893829f611ac7cac4057c0d3d99f9ffb6a6dd9

Summary:

This PR allows for the creation of a custom env when using sst_dump. If the user does not set options.env, or sets it to nullptr, sst_dump will automatically try to create a custom env based on the path to the SST file or DB directory. To use this feature, the user must call ObjectRegistry::Register() beforehand.
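Path-based env selection can be sketched as follows. The sketch does not use ObjectRegistry: a plain prefix-to-factory map stands in for the registration step, and `EnvSketch`, `RegisterEnv()`, `ResolveEnv()`, `DemoResolve()`, and the `remote://` scheme are all hypothetical.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical Env interface; RocksDB's real Env is far larger.
struct EnvSketch {
  virtual ~EnvSketch() = default;
  virtual std::string Name() const = 0;
};
struct DefaultEnvSketch : EnvSketch {
  std::string Name() const override { return "default"; }
};
struct RemoteEnvSketch : EnvSketch {
  std::string Name() const override { return "remote"; }
};

using EnvFactory = std::function<std::unique_ptr<EnvSketch>()>;

// Stand-in registry: maps a path prefix to a factory.
std::map<std::string, EnvFactory>& Registry() {
  static std::map<std::string, EnvFactory> registry;
  return registry;
}

void RegisterEnv(const std::string& prefix, EnvFactory factory) {
  Registry()[prefix] = std::move(factory);
}

// A user-supplied env wins; otherwise pick the env whose registered prefix
// matches the SST file or DB path, falling back to the default env.
std::unique_ptr<EnvSketch> ResolveEnv(std::unique_ptr<EnvSketch> user_env,
                                      const std::string& path) {
  if (user_env) return user_env;
  for (const auto& [prefix, factory] : Registry()) {
    if (path.compare(0, prefix.size(), prefix) == 0) return factory();
  }
  return std::make_unique<DefaultEnvSketch>();
}

// Usage: register first, then resolve by path.
std::string DemoResolve(const std::string& path) {
  RegisterEnv("remote://", [] { return std::make_unique<RemoteEnvSketch>(); });
  return ResolveEnv(nullptr, path)->Name();
}
```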

Test Plan (on devserver):

```
$ make all && make check
```

All tests must pass to ensure this change does not break anything.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5845

Differential Revision: D17678038

Pulled By: riversand963

fbshipit-source-id: 58ecb4b3f75246d52b07c4c924a63ee61c1ee626

Summary:

This is the 2nd attempt after the revert of https://github.com/facebook/rocksdb/pull/4020

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5895

Test Plan:

```
./tools/db_crashtest.py blackbox --simple --interval=10 --max_key=10000000
```

Differential Revision: D17822137

Pulled By: maysamyabandeh

fbshipit-source-id: 3d148c0d8cc129080410ff859c04b544223c8ea3

Summary:

When multiple operations are performed in a db_stress thread in one loop iteration, the reopen voting logic needs to take that into account. It was doing that for MultiGet, but a new option was introduced recently to do multiple iterator seeks per iteration, which broke it again. Fix the logic to be more robust and agnostic of the type of operation performed.
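One way to make vote accounting independent of how many operations an iteration performs is to count how many multiples of the reopen interval the operation counter crossed, instead of testing whether it landed exactly on one. This is a sketch of that idea, not necessarily the actual patch; `VotesOwed()` and `TotalVotes()` are hypothetical.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Votes a thread owes after one iteration moved its op count from
// ops_before to ops_after, however many ops (Get, MultiGet batch,
// several reseeks, ...) that iteration performed.
uint64_t VotesOwed(uint64_t ops_before, uint64_t ops_after,
                   uint64_t reopen_interval) {
  if (reopen_interval == 0) return 0;  // reopen disabled
  return ops_after / reopen_interval - ops_before / reopen_interval;
}

// Simulates a thread whose iterations perform batch_sizes[i] ops each and
// returns the total number of votes it casts.
uint64_t TotalVotes(const std::vector<uint64_t>& batch_sizes, uint64_t interval) {
  uint64_t ops = 0, votes = 0;
  for (uint64_t n : batch_sizes) {
    votes += VotesOwed(ops, ops + n, interval);
    ops += n;
  }
  return votes;
}
```

With a naive `ops_after % interval == 0` check, an iteration that jumps from 8 to 11 operations with an interval of 5 would skip a vote, leaving that thread out of step with the others; the crossing count still yields one vote per interval in total.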

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5876

Test Plan: Run db_stress

Differential Revision: D17733590

Pulled By: anand1976

fbshipit-source-id: 787f01abefa1e83bba43e0b4f4abb26699b2089e

Summary:

Two more bug fixes in db_stress:

1. Complete the fix for the regression, introduced with support for FLAGS_prefix_size = -1, that caused an overflow.

2. Fix regression bugs in the compare iterator itself:

(1) the control iterator was mistakenly created with the same read options as the normal iterator; (2) the comparison logic had some problems. Fix them.

(3) Disable validation of the lower bound for now, since it produced some wildly different results. It is disabled so that normal tests pass while this is investigated.

3. Clean up snapshots in verification failure cases; otherwise, memory is leaked.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5867

Test Plan: Run "make crash_test" for a while and see that at least one of the failures is fixed.

Differential Revision: D17671712

fbshipit-source-id: 011f98ea1a72aef23e19ff28656830c78699b402

Summary:

When prefix_size = -1, the stress test crashes with a runtime error because of an overflow. Fix it by using 7 instead of -1 in prefix scan mode.
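One way a -1 prefix size can overflow, and the fallback described above, are shown in this minimal sketch. Converting a negative `int32_t` to `size_t` wraps around to `SIZE_MAX`, so any length derived that way is enormous. `UnsafePrefixLen()`, `PrefixScanLen()`, and `PrefixOf()` are hypothetical helpers; only the fallback value 7 comes from the description.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>

// Shows the hazard: -1 converted to size_t becomes SIZE_MAX.
size_t UnsafePrefixLen(int32_t prefix_size) {
  return static_cast<size_t>(prefix_size);
}

// Fallback: -1 (no prefix extractor) uses a fixed length of 7 in prefix
// scan mode; 0 and positive sizes are used as-is.
size_t PrefixScanLen(int32_t prefix_size) {
  constexpr size_t kFallbackPrefixLen = 7;
  return prefix_size < 0 ? kFallbackPrefixLen : static_cast<size_t>(prefix_size);
}

std::string PrefixOf(const std::string& key, int32_t prefix_size) {
  return key.substr(0, PrefixScanLen(prefix_size));
}
```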

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5862

Test Plan:

Run

python -u tools/db_crashtest.py --simple whitebox --random_kill_odd 888887 --compression_type=zstd

and see that it doesn't crash.

Differential Revision: D17642313

fbshipit-source-id: f029e7651498c905af1b1bee6d310ae50cdcda41

Summary:

Currently, crash_test cannot report any failures in the logic related to iterator upper and lower bounds, iterators in general, or reseek. These features are prone to errors. Improve db_stress in several ways:

(1) For each iterator run, reseek up to 3 times.

(2) For every iterator, create a control iterator with the upper or lower bound and total order seek, and compare its results with those of the iterator.

(3) Make the simple crash test avoid setting a prefix size, for more coverage.

(4) Make prefix_size = 0 a valid size, and use -1 to indicate that the prefix extractor is disabled.
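The comparison in (2) can be pictured with an ordered map standing in for the DB. This sketch assumes RocksDB-style bound semantics (inclusive lower bound, exclusive upper bound); `ScanWithBounds()`, `ControlScan()`, and `IteratorMatchesControl()` are hypothetical, and in db_stress both sides would be real iterators rather than map scans.

```cpp
#include <cassert>
#include <map>
#include <optional>
#include <string>
#include <vector>

using KV = std::map<std::string, std::string>;
using Bound = std::optional<std::string>;

// Iterator under test: seeks to the lower bound and stops at the upper bound.
std::vector<std::string> ScanWithBounds(const KV& db, const Bound& lower,
                                        const Bound& upper) {
  std::vector<std::string> keys;
  auto it = lower ? db.lower_bound(*lower) : db.begin();
  for (; it != db.end(); ++it) {
    if (upper && it->first >= *upper) break;  // upper bound is exclusive
    keys.push_back(it->first);
  }
  return keys;
}

// Control iterator: total-order scan of every key, filtered by the bounds.
std::vector<std::string> ControlScan(const KV& db, const Bound& lower,
                                     const Bound& upper) {
  std::vector<std::string> keys;
  for (const auto& entry : db) {
    if (lower && entry.first < *lower) continue;  // lower bound is inclusive
    if (upper && entry.first >= *upper) continue;
    keys.push_back(entry.first);
  }
  return keys;
}

// Any divergence would indicate a bug in bound handling.
bool IteratorMatchesControl(const KV& db, const Bound& lower, const Bound& upper) {
  return ScanWithBounds(db, lower, upper) == ControlScan(db, lower, upper);
}
```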

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5846

Test Plan: Manually hack the code to create wrong results and see they are caught by the tool.

Differential Revision: D17631760

fbshipit-source-id: acd460a177bd2124a5ffd7fff490702dba63030b

Summary:

Apply the formatter to more recent commits as well.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5830

Test Plan: Run all existing tests.

Differential Revision: D17488031

fbshipit-source-id: 137458fd94d56dd271b8b40c522b03036943a2ab

Summary:

Some recent commits might not have passed through the formatter. I formatted the 45 most recent commits. The script hangs on more commits, so I stopped there.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5827

Test Plan: Run all existing tests.

Differential Revision: D17483727

fbshipit-source-id: af23113ee63015d8a43d89a3bc2c1056189afe8f

Summary:

PR https://github.com/facebook/rocksdb/issues/4020 enabled partitioned indexes/filters in stress tests; however, this causes assertion failures in BatchedOpsStressTest. This patch disables them until we can find the root cause of the failures.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5811

Test Plan: Ran the script and made sure it only uses the binary search index.

Differential Revision: D17399366

Pulled By: ltamasi

fbshipit-source-id: adb116e6297f9c6ccd7ac15b6a16c9aa91f21ac5

Summary:

file_reader_writer.h and .cc contain several classes and helper functions, which makes them hard to navigate. Separate them into multiple files under file/.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5803

Test Plan: Build whole project using make and cmake.

Differential Revision: D17374550

fbshipit-source-id: 10efca907721e7a78ed25bbf74dc5410dea05987

Summary:

PR https://github.com/facebook/rocksdb/issues/4020 implicitly enabled the hash index as well in stress/crash tests, resulting in assertion failures in Block. This patch disables the hash index until we can pinpoint the root cause of these issues.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5792

Test Plan:

Ran tools/db_crashtest.py and made sure it only uses index types 0 and 2 (binary search and partitioned index).

Differential Revision: D17346777

Pulled By: ltamasi

fbshipit-source-id: b4318f37f1fda3ee1bbff4ef2c2f556ca9e6b551

Summary:

This is required to compile on Windows with Visual Studio 2015.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5786

Differential Revision: D17335994

fbshipit-source-id: 8f9568310bc6f697e312b5e24ad465e9084f0011

Summary:

- In `db_stress`, support choosing the index type and whether to enable filter partitioning, and randomly set those options in the crash test

- When the crash test enables partitioned filters, force the partitioned index to be enabled as well, since it is a prerequisite